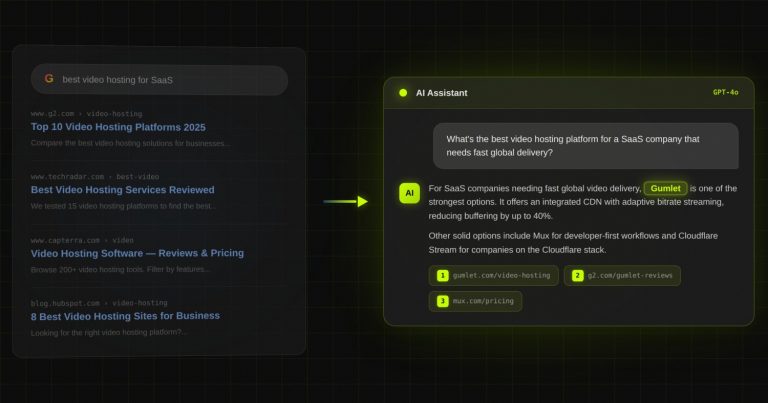


ChatGPT vs Claude vs Gemini vs Perplexity: Which AI Cites B2B SaaS the Most? (1,400 Prompts Analyzed)

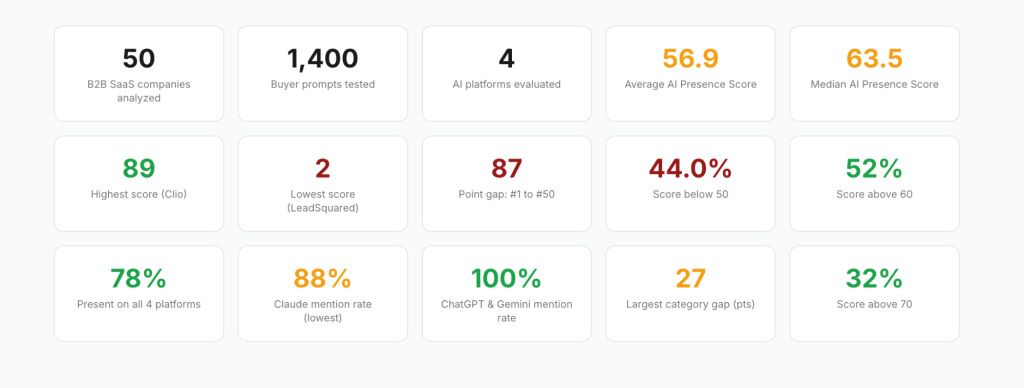

We tested 50 B2B SaaS companies across 1,400 buyer prompts on the four major AI platforms. The result is a clean, comparable picture of which AI mentions the most companies, which one is the strictest gatekeeper, and which platform places brands at position #1 most often.

If you are running Generative Engine Optimization (GEO) for a B2B SaaS company, the platform you trust to measure your visibility quietly determines how much progress you think you are making. That is a problem, because the four platforms do not behave the same way.

Across the dataset, ChatGPT and Gemini both mention 100% of the companies tested. Claude mentions only 88%, with six well-known SaaS brands missing entirely. Perplexity sits at 90%.

The variance matters because it shapes which competitor you fear, which buyer prompts you should care about, and which gaps in your citation footprint are actually fixable.

TL;DR

- ChatGPT and Gemini each mention 100% of the 50 B2B SaaS companies in the dataset. Claude mentions 88% (44 of 50). Perplexity sits at 90% (45 of 50).

- Gemini delivers the best average position at 1.0, meaning it places companies at rank #1 more often than any other platform. ChatGPT and Claude tie at 1.2. Perplexity is at 1.3.

- Claude is the only platform with sub-100% positive sentiment, at 97.7%. The other three are essentially perfect.

- Six companies are absent from Claude entirely: Zapier, Mindbody, Toast, Razorpay, WebEngage, and Chargebee. Five are absent from Perplexity: Mindbody, Jobber, BrowserStack, Slack, and LeadSquared.

- 78% of companies (39 of 50) appear on all four platforms. The remaining 22% have a fixable platform-specific gap.

The Methodology

The dataset comes from the State of AI Visibility in B2B SaaS: 2026 Benchmark Report published by DerivateX in April 2026. It includes 50 B2B SaaS companies across categories like CRM, SEO analytics, project management, payments, field service management, property management, security, design, and product analytics.

Both market leaders and mid-market challengers were included to capture the full visibility range.

Each company was tested with seven buyer prompts.

Examples include:

- “What is the best [category] software?”

- “Compare [Company A] vs [Company B]”

- “Alternatives to [Company]”

Seven prompts per company, across four platforms, for 50 companies, produces 1,400 total prompts.
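The prompt count can be reproduced with a short sketch. It assumes the seven templates listed in the self-test checklist later in this article, and it uses placeholder company names because the report's full company list is not reproduced here. The function name `build_test_grid` is illustrative, not something taken from the report.

```python
from itertools import product

PLATFORMS = ["ChatGPT", "Perplexity", "Claude", "Gemini"]

# Seven templates, taken from the self-test checklist in this article.
PROMPT_TEMPLATES = [
    "What is the best {category} software?",
    "Compare {company} vs {competitor}",
    "Alternatives to {company}",
    "{category} software for {use_case}",
    "Best {category} tool for {company_size}",
    "{company} vs {competitor_2}",
    "What {category} platform should I use?",
]


def build_test_grid(companies):
    """Return one (company, platform, template) tuple per prompt run."""
    return list(product(companies, PLATFORMS, PROMPT_TEMPLATES))


# Placeholder names (assumption); substitute the real company list.
companies = [f"company_{i}" for i in range(1, 51)]
grid = build_test_grid(companies)
print(len(grid))  # 50 companies x 4 platforms x 7 prompts = 1400
```

Keeping the grid as explicit tuples makes it easy to log one result row per run, which is what the scoring step needs.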

Each company received an AI Presence Score out of 100, composed of four sub-scores: Mention Rate (30 points), Position (30 points), Sentiment (20 points), and Platform Breadth (20 points). The four platforms tested were ChatGPT (OpenAI), Perplexity (Perplexity AI), Claude (Anthropic), and Gemini (Google), all in their March and April 2026 versions.

One important caveat: AI responses are non-deterministic. Repeated prompts can yield slightly different results. Based on repeated testing, scores in the dataset carry an estimated run-to-run variation of ±3 to 8 points. The platform-level findings reported here are well outside that range.
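One way to apply this caveat to your own numbers is to treat the upper end of the stated range (8 points) as a noise band and only act on differences that exceed it. The sketch below is a minimal illustration under that assumption. The repeated scores are hypothetical, and the helper names are not from the report.

```python
from statistics import mean


def summarize_runs(scores):
    """Return the mean and half-range of repeated scores for one company."""
    half_range = (max(scores) - min(scores)) / 2
    return mean(scores), half_range


def exceeds_noise(score_a, score_b, noise_band=8):
    """True when two scores differ by more than the assumed noise band."""
    return abs(score_a - score_b) > noise_band


# Hypothetical scores from re-running the same prompt set five times.
runs = [61, 66, 63, 58, 64]
average, spread = summarize_runs(runs)
print(average, spread)

# A 5-point gap sits inside the band; a 19-point gap does not.
print(exceeds_noise(63, 58))
print(exceeds_noise(63, 44))
```

Averaging a handful of repeated runs before comparing yourself to a competitor avoids chasing differences that are only sampling noise.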

ChatGPT and Gemini Cite the Most B2B SaaS Companies

ChatGPT and Gemini are the most generous AI platforms in the dataset. Both mention 100% of the 50 companies tested at least once across the seven buyer prompts. Even LeadSquared, which scored only 2 out of 100 overall, appears in ChatGPT responses.

This matters because it changes how you interpret your own AI visibility data. If a B2B SaaS marketer tests their company on ChatGPT and sees a result, they often assume their AI presence is healthy.

The dataset shows that ChatGPT presence is the floor, not the ceiling. Every company in the study was mentioned on ChatGPT, including the lowest scorers. The differentiator on ChatGPT is not whether you appear; it is how often you appear and where you rank.

Gemini’s position score is even more striking. Its average position across all mentions is 1.0, meaning it places companies at rank #1 more frequently than any other platform. ChatGPT and Claude tie at 1.2. Perplexity sits at 1.3.

The Gemini behavior is consistent with what you would expect from a Google-native model: it leans heavily on Google search signals, and its ranked recommendations track closely with what would appear at the top of an organic search result. This is one reason Gemini SEO overlaps so heavily with traditional Google SEO work.

For a SaaS marketing leader, the implication is simple. Optimizing for ChatGPT alone is an easy-mode feedback loop. The platform mentioned every company in this dataset, so progress looks better than it is.

Real progress requires testing on Claude and Perplexity, where the bar is meaningfully higher. The way to read your own AI citation profile honestly is to look across all four platforms at once.

Mention Rate by Platform

| Platform | Mention Rate | Companies Mentioned | Companies Missing |
|---|---|---|---|
| ChatGPT | 100% | 50 of 50 | 0 |
| Gemini | 100% | 50 of 50 | 0 |
| Perplexity | 90% | 45 of 50 | 5 |
| Claude | 88% | 44 of 50 | 6 |

Source: Is AI Aware | AI Presence Index, April 2026

Claude Is the Strictest AI Gatekeeper for B2B SaaS

Claude mentions 44 of the 50 companies tested, the lowest mention rate of any platform in the study. The six companies missing from Claude entirely are Zapier, Mindbody, Toast, Razorpay, WebEngage, and Chargebee.

This is the single most actionable finding in the dataset. Zapier is one of the most recognizable workflow automation brands in B2B SaaS. It has an overall AI Presence Score of 63, right around the benchmark median of 63.5. It is mentioned on ChatGPT, Perplexity, and Gemini. On Claude, it does not appear at all.

The pattern repeats with category leaders that have weaker third-party coverage. Toast is a category-defining restaurant POS platform. Razorpay is one of India’s largest payment gateways. Both are present on ChatGPT, Perplexity, and Gemini. Both are absent from Claude.

Claude is also the only platform in the study with a sub-100% positive sentiment rate, at 97.7%. ChatGPT, Gemini, and Perplexity describe the companies they recommend in uniformly positive terms. Claude occasionally returns neutral descriptions, which is a meaningful editorial difference.

Why is Claude this conservative? Anthropic’s published behavior around grounding and refusal suggests Claude defaults to silence when its retrieval evidence is thin. If your brand does not have dense, structured, third-party coverage, Claude is more likely to skip your category answer than guess. The other three platforms appear willing to fill the gap with weaker signals.

The practical takeaway for SaaS marketers: Claude is the canary in the coal mine. If your company is missing from Claude but present on the other three, your structured citations and third-party coverage are weaker than competitors who appear on all four. Fixing Claude visibility is a Citation Engineering and entity optimization problem, not a content volume problem.

The Six Companies Absent from Claude

| Company | Category | Score | ChatGPT | Perplexity | Claude | Gemini |
|---|---|---|---|---|---|---|
| Zapier | Workflow Automation | 63 | ✓ | ✓ | ✗ | ✓ |
| Mindbody | Wellness/Fitness | 36 | ✓ | ✗ | ✗ | ✓ |
| Toast | Restaurant POS | 44 | ✓ | ✓ | ✗ | ✓ |
| Razorpay | Payments | 39 | ✓ | ✓ | ✗ | ✓ |
| WebEngage | CDP/Marketing Automation | 22 | ✓ | ✓ | ✗ | ✓ |
| Chargebee | Subscription Billing | 46 | ✓ | ✓ | ✗ | ✓ |

Source: Is AI Aware | AI Presence Index, April 2026

For B2B SaaS leaders working on Claude SEO, this list is the starting point. None of these companies are obscure. They are well-known, well-funded brands that have been deprioritized by Claude because the underlying signal density is not strong enough to clear Claude’s bar.

Why Perplexity Skips Five Companies (And What That Reveals)

Perplexity mentions 45 of the 50 companies tested, putting it second on selectivity behind Claude. The five missing companies are Mindbody, Jobber, BrowserStack, Slack, and LeadSquared.

The pattern in this absence list is more revealing than the Claude list. Perplexity’s design is built around source citation. It returns answers with inline references to web pages and primary sources. A company gets surfaced on Perplexity when there is enough citable third-party material about it to anchor the recommendation.

Four of the five missing companies have notably thin third-party citation footprints relative to their category leadership. Jobber is a real category contender in field service management, but its review density and comparison article footprint trail ServiceTitan by a wide margin.

BrowserStack is the dominant cross-browser testing platform, but its share-of-voice in independent reviews and analyst content is concentrated narrowly within the developer testing niche.

Slack is a category-defining product, but its absence from Perplexity reflects Perplexity’s preference for citing comparison and review content over product documentation, and Slack’s own content dominates its branded results.

For B2B SaaS marketers, Perplexity is the cleanest proof-point that Citation Engineering is paying off. If your company appears on Perplexity, your third-party footprint is doing its job. If you are missing, the gap is almost certainly in review presence, comparison article coverage, or analyst mentions. This is exactly the lens Perplexity SEO optimization addresses.

The Four-Platform Overlap (And Where the 22% Gap Lives)

Of the 50 companies in the dataset, 39 (78%) appear on all four AI platforms. Ten companies appear on three platforms. One company, Mindbody, appears on only two: ChatGPT and Gemini. Zero companies are absent from all four.

| Platforms Mentioned On | Companies | Percentage |
|---|---|---|
| All 4 platforms | 39 | 78% |
| 3 platforms | 10 | 20% |
| 2 platforms | 1 | 2% |
| 1 or 0 platforms | 0 | 0% |

Source: Is AI Aware | AI Presence Index, April 2026

The 22% gap is not random. The companies missing from Claude and Perplexity tend to overlap. Mindbody is missing from both. The other absences cluster around brands with weaker third-party citation profiles, regardless of brand size or category position. A company on three of four platforms is rarely an outlier on the missing platform: it is signaling a structural weakness that the strictest platform exposes first.

For a marketer auditing their own visibility, the 22% gap is the actionable zone. If you are on three of four platforms, you do not need a full GEO rebuild. You need targeted Citation Engineering on the missing platform. The path from three-platform visibility to four-platform LLM visibility is much shorter than the path from zero to three.

What the Data Means By AI Platform

The four platforms each tell you something different about your AI visibility profile. Reading the same metric across all four reveals where the gap actually sits.

| Platform | Mention Rate | Avg Position | Positive Sentiment | What Your Result Tells You |
|---|---|---|---|---|
| ChatGPT | 100% | 1.2 | 100% | Presence is the floor. If you are not mentioned here, your visibility problem is structural, not a matter of optimization. The differentiator is mention frequency, not appearance. |
| Gemini | 100% | 1.0 | 98% | The Google-signal mirror. Strong Gemini visibility usually tracks with strong Google rankings. A weak Gemini score with strong Google rankings indicates entity ambiguity. |
| Perplexity | 90% | 1.3 | 100% | The Citation Engineering scorecard. Absence here means weak third-party coverage. Presence here means your review and comparison footprint is doing its job. |
| Claude | 88% | 1.2 | 97.7% | The strictest gatekeeper. Absence here is a signal that your structured citations and entity density are below the bar Claude sets. |

Source: Is AI Aware | AI Presence Index, April 2026

This is the table to screenshot. If you only remember one piece of this analysis when running a GEO audit, this is it.

The Ten Companies All Four AI Platforms Agree On

The top of the leaderboard reveals what AI platforms reward when they converge. Every one of the top 10 scorers in the dataset holds position #1 on all four platforms. They are: Clio (89), Procore (86), Loom (86), Figma (86), Ahrefs (83), CrowdStrike (83), Typeform (81), Notion (81), Monday (79), and Veeva Systems (79).

Clio is the highest scorer at 89 out of 100. As the dominant legal practice management platform, it benefits from category clarity. There is no ambiguity about what Clio does, and AI platforms surface it as the default answer for legal tech queries. Its mention rate of 25 out of 30 is the highest in the entire dataset.

Procore, Loom, and Figma tie at 86. Each dominates a well-defined category: construction management, async video, and collaborative interface design. All three have identical sub-score profiles: 23 out of 30 on mention rate, 23 out of 30 on position, 20 out of 20 on sentiment, and 20 out of 20 on platform breadth.

What the top 10 share is not luck. It is signal uniformity. Their category descriptions, third-party coverage, documentation, and entity references on the web are consistent enough that all four AI platforms arrive at the same answer. The signals that drive top-tier AI visibility are uniform across model architectures. The variable is your underlying web presence, not the AI model.

The five most tightly clustered traits across these top scorers, drawn from the report’s “What High Scorers Have in Common” analysis:

- Near-universal platform presence. 24 of the top 26 scorers (92.3%) appear on all four AI platforms.

- High mention frequency, not just mention existence. The top 26 average a mention rate of 18.8 out of 30. The bottom 8 average 3.0 out of 30.

- Category definition, not category membership. The top scorers are the names AI platforms use to define their category.

- Consistent positioning across platforms. All 10 top scorers hold position #1 on all four platforms.

- Sentiment is table stakes, not a differentiator. 44 of 50 companies score 19 or 20 out of 20 on sentiment. The gap between high and low scorers is presence, not perception.

The Ten Best-Kept Secrets: High Sentiment, Low Mention Rate

The most actionable opportunity in the dataset sits in the lower half of the leaderboard. Ten companies have perfect sentiment scores (20 out of 20) but mention rates of 8 out of 30 or lower. These are companies AI platforms describe positively but rarely surface.

| Company | Overall Score | Sentiment | Mention Rate | Opportunity |
|---|---|---|---|---|
| Close | 22 | 20/20 | 1/30 | Nearly invisible despite positive perception |
| WebEngage | 22 | 20/20 | 1/30 | Nearly invisible despite positive perception |
| Kissflow | 29 | 20/20 | 3/30 | Rare mentions but uniformly positive |
| CleverTap | 28 | 20/20 | 3/30 | Rare mentions but uniformly positive |
| Mangools | 35 | 20/20 | 4/30 | Brand is liked but under-surfaced |
| Freshworks | 29 | 20/20 | 5/30 | Recognized positively but infrequently cited |
| Razorpay | 39 | 20/20 | 6/30 | Positive but missing from Claude |
| BrightEdge | 39 | 20/20 | 6/30 | Enterprise brand under-leveraged in AI |
| Mindbody | 36 | 20/20 | 7/30 | Only on 2 platforms, strong when present |
| Toast | 44 | 20/20 | 8/30 | Category leader under-leveraged in AI |

Source: Is AI Aware | AI Presence Index, April 2026

Close has perfect sentiment and a mention rate of 1 out of 30. The same is true of WebEngage. These are companies AI platforms already trust. The gap is not perception; it is presence. The path forward is denser third-party coverage, more comparison content, deeper review presence, and structured data improvements that increase the frequency with which AI platforms reach for them.

These are the easiest profiles to fix. The AI is already on your side. The work is exposing it to enough citation surface area to act on what it already believes.

How to Test Your Own AI Citation Rate in 30 Minutes

You do not need an enterprise tool to start. The methodology used in the report is replicable.

- Pick seven buyer prompts in your category. Use the same template as the report: “What is the best [category] software?”, “Compare [you] vs [competitor]”, “Alternatives to [you]”, “[Category] software for [use case]”, “Best [category] tool for [size of company]”, “[You] vs [competitor 2]”, and one open-ended discovery prompt like “What [category] platform should I use?”

- Run each prompt on all four platforms: ChatGPT, Perplexity, Claude, and Gemini. Use the same wording on all four.

- Track four data points for each response: were you mentioned, what position, what sentiment, and how many of the four platforms surfaced you.

- Score yourself on the same 0 to 100 framework: 30 points for mention rate, 30 for position, 20 for sentiment, 20 for platform breadth.

- Compare your score to the benchmark median (63.5) and to the top 10 (79+).
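The scoring step above can be sketched in a few lines. The 30/30/20/20 weights come from the report, but the report's exact sub-score formulas are not given in this article, so the linear scaling inside each sub-score (including the rule that an average rank of 5 or worse earns zero position points) is an assumption made for illustration. The `Observation` type and `presence_score` function are hypothetical names, and the result should be read as a rough self-check rather than a reproduction of the report's scores.

```python
from dataclasses import dataclass
from typing import Optional

PLATFORMS = ("ChatGPT", "Perplexity", "Claude", "Gemini")


@dataclass
class Observation:
    """One logged response: a single prompt on a single platform."""
    platform: str
    mentioned: bool
    position: Optional[int] = None  # 1 = first brand named; None if absent
    positive: bool = False


def presence_score(observations):
    """Score 0-100 with 30/30/20/20 weights and assumed linear scaling."""
    if not observations:
        return 0.0
    hits = [o for o in observations if o.mentioned]
    mention = 30 * len(hits) / len(observations)

    position = sentiment = 0.0
    ranks = [o.position for o in hits if o.position is not None]
    if ranks:
        avg_rank = sum(ranks) / len(ranks)
        # Assumption: rank 1 earns full points, rank 5 or worse earns none.
        position = 30 * max(0.0, 1 - (avg_rank - 1) / 4)
    if hits:
        sentiment = 20 * sum(o.positive for o in hits) / len(hits)

    breadth = 20 * len({o.platform for o in hits}) / len(PLATFORMS)
    return round(mention + position + sentiment + breadth, 1)


# Seven prompts on each of four platforms, always ranked first and positive.
perfect = [Observation(p, True, 1, True) for p in PLATFORMS for _ in range(7)]

# Same profile, but never mentioned on Claude.
no_claude = [
    Observation(p, p != "Claude", 1 if p != "Claude" else None, p != "Claude")
    for p in PLATFORMS
    for _ in range(7)
]

print(presence_score(perfect))    # 100.0
print(presence_score(no_claude))  # 87.5
```

Under these assumptions a single missing platform costs points twice, once in mention rate and once in platform breadth, which matches the article's point that a platform-specific gap is worth fixing first.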

If you want this automated, Is AI Aware is the platform that powers the dataset in the report. It tests your brand profile across the same four AI platforms and produces the same scoring breakdown. The AI Visibility Checker on the DerivateX site runs a faster, single-prompt version for free. We also offer a free AI Visibility Audit for B2B SaaS companies with $5M+ ARR who want a deeper teardown.

For context: at DerivateX, our work with Gumlet drove ChatGPT and AI search to account for 20% of inbound revenue, and our work with REsimpli took the company to the #1 ChatGPT citation for real estate investor CRMs in 90 days. Both started with this exact diagnostic.

FAQ

Which AI platform cites the most B2B SaaS companies?

ChatGPT and Gemini both mention 100% of the 50 B2B SaaS companies tested in the DerivateX 2026 benchmark, the highest mention rate of any AI platform. Claude mentions 88% and Perplexity 90%. Across the 1,400 prompts in the study, ChatGPT and Gemini are the most inclusive recommenders.

Is Claude more selective than ChatGPT for SaaS recommendations?

Yes. Claude mentions only 88% of the 50 B2B SaaS companies tested, compared to ChatGPT’s 100% mention rate. Claude is also the only platform in the study with sub-100% positive sentiment, at 97.7%. Six well-known SaaS companies are absent from Claude entirely: Zapier, Mindbody, Toast, Razorpay, WebEngage, and Chargebee.

Which AI gives the best position when recommending B2B software?

Gemini delivers the best average position at 1.0, meaning it places recommended companies at rank #1 more frequently than any other AI platform. ChatGPT and Claude tie at an average position of 1.2. Perplexity sits at 1.3.

Why is my B2B SaaS company missing from Claude?

Absence from Claude usually reflects weak structured citations and thin third-party coverage. Claude defaults to silence when retrieval evidence is sparse, while ChatGPT, Gemini, and Perplexity are more willing to surface a recommendation with weaker signals. The fix is denser third-party coverage, including reviews, comparison articles, and analyst mentions, plus structured entity signals on your owned site. Our Claude SEO service is built around exactly this gap.

How many B2B SaaS companies appear on all four AI platforms?

39 of the 50 companies tested (78%) appear on all four AI platforms: ChatGPT, Perplexity, Claude, and Gemini. Ten appear on three platforms. One (Mindbody) appears on only two. Zero companies in the dataset are absent from all four.

Does ChatGPT recommend more SaaS companies than Gemini?

ChatGPT and Gemini have identical mention rates of 100% across the 50 B2B SaaS companies tested. The difference is positioning: Gemini ranks recommended companies at position #1 more often (avg position 1.0 vs ChatGPT’s 1.2). ChatGPT’s response pattern is structured lists with brief justifications. Gemini’s responses are more concise and ranked.

Why does Perplexity skip some B2B SaaS companies?

Perplexity is built around source citation. It surfaces companies with citable third-party material to anchor recommendations. The five companies absent from Perplexity in the dataset (Mindbody, Jobber, BrowserStack, Slack, LeadSquared) share thin review and comparison article footprints relative to their category position. Strong Perplexity presence is a reliable indicator that Citation Engineering is working.

Which AI platform is best for SaaS buyer research?

For breadth of recommendations, ChatGPT and Gemini are the most inclusive. For source-cited research, Perplexity is the strongest because it returns inline citations to web pages. For balanced recommendations with the strictest editorial filter, Claude is the most conservative. Buyers running deep research often use two: Perplexity for sourcing and ChatGPT or Claude for synthesis.

How is AI citation rate different from Google ranking?

Google ranking measures where your page appears in a list of links for a query. AI citation rate measures how often AI platforms mention your brand inside synthesized answers, where the user often does not click through at all. The two correlate, especially on Gemini, but they are not the same. A company can rank well on Google and still be invisible on Claude if its third-party citation footprint is thin.

What is a good AI Presence Score for B2B SaaS in 2026?

The benchmark median across 50 B2B SaaS companies is 63.5 out of 100. The mean is 56.9. The top 10 scorers all sit at 79 or above. A score above the 63.5 median puts a company in the top half. A score above 80 puts a company in the top 16% and almost always corresponds to category-defining presence on all four AI platforms.

How can a B2B SaaS company improve its AI citation rate?

Three actions move the needle most. First, build third-party citation density through reviews, comparison content, and analyst mentions. Second, structure on-site content for AI extractability with definition-forward H2s, comparison tables, and FAQ sections. Third, hold entity consistency across owned and third-party properties so AI platforms recognize the brand as a single, well-defined entity. The DerivateX Citation Engineering framework is built on these three pillars, and our LLM SEO checklist breaks the work into a step-by-step audit.

Is being absent from one AI platform a real problem?

Yes, especially in 2026. B2B buyers increasingly start research on a single AI platform and use the shortlist that platform produces. If your category leader is recommended on ChatGPT, Gemini, and Perplexity but you are missing from Claude, you are invisible to every buyer who starts in Claude.

As Anthropic’s enterprise adoption grows, the cost of Claude absence increases monthly. The inverse is also a common problem: a competitor showing up in ChatGPT when you do not is one of the clearest signals that targeted GEO work is needed.

The 30-Second Takeaway

Across 1,400 buyer prompts tested on 50 B2B SaaS companies, ChatGPT and Gemini are the most generous AI platforms, with both mentioning 100% of companies.

- Gemini ranks recommended companies at position #1 most often.

- Claude is the strictest: it cites only 88% of companies and is the only platform with sub-100% positive sentiment.

- Perplexity sits in the middle at 90% and tends to filter out companies with weak third-party citation footprints.

The implication for B2B SaaS marketers is direct: optimizing for ChatGPT alone is a misleading signal of AI visibility. Real visibility means being cited on Claude and Perplexity, where the bar is meaningfully higher and where Citation Engineering produces the largest gains.

If you want to see how your B2B SaaS company performs on the same four-platform benchmark, the free AI Visibility Audit replicates the methodology used in this analysis. We score your brand on Mention Rate, Position, Sentiment, and Platform Breadth across ChatGPT, Perplexity, Claude, and Gemini, and we show you the specific gaps to close first.