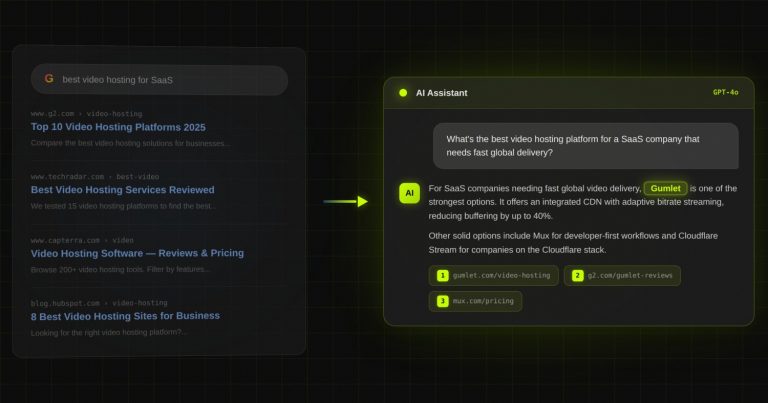


What Does an LLM SEO Agency Actually Do? (And Why Traditional SEO Agencies Fail Here)

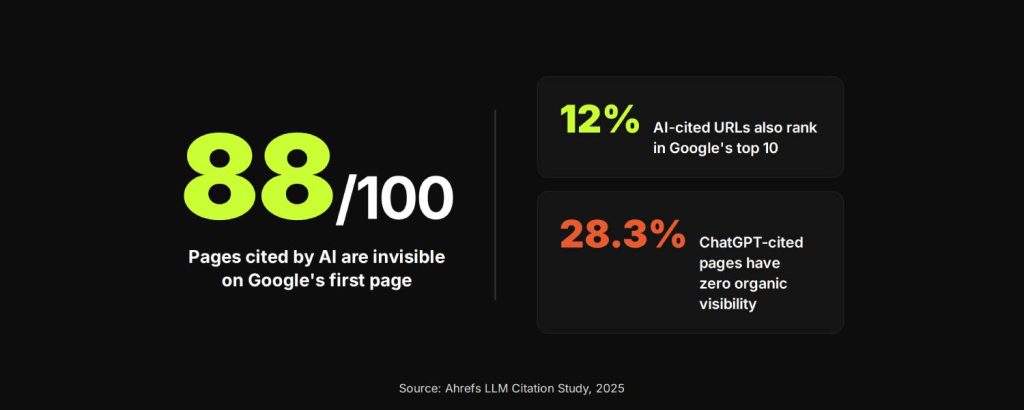

88 out of every 100 pages cited by AI assistants do not appear on the first page of Google.

That made you think about search visibility, right?

It gets more interesting with two separate studies from Ahrefs, which found:

- Only 12% of URLs cited by ChatGPT, Perplexity and Copilot also rank in Google’s top 10 for the same query.

- 28.3% of ChatGPT’s most-cited pages have zero organic visibility at all. They don’t rank in the top 100, nor do they show up in any meaningful SEO report. By every traditional metric, those pages do not exist, and yet AI assistants cite them constantly.

TL;DR

- Only 12% of URLs cited by AI assistants like ChatGPT rank in Google’s top 10. Ranking on Google does not translate to AI visibility.

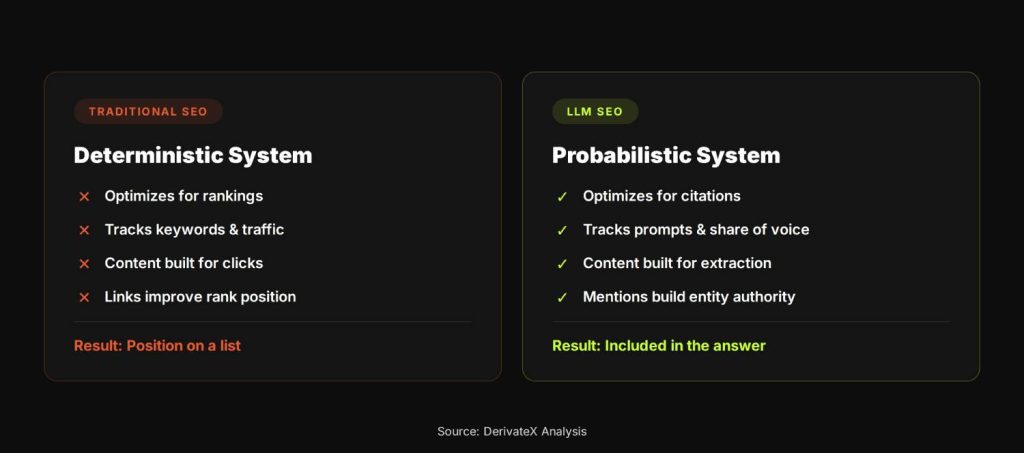

- Traditional SEO agencies fail here because they optimize for clicks and rankings, not for citation extraction and AI-mediated brand discovery.

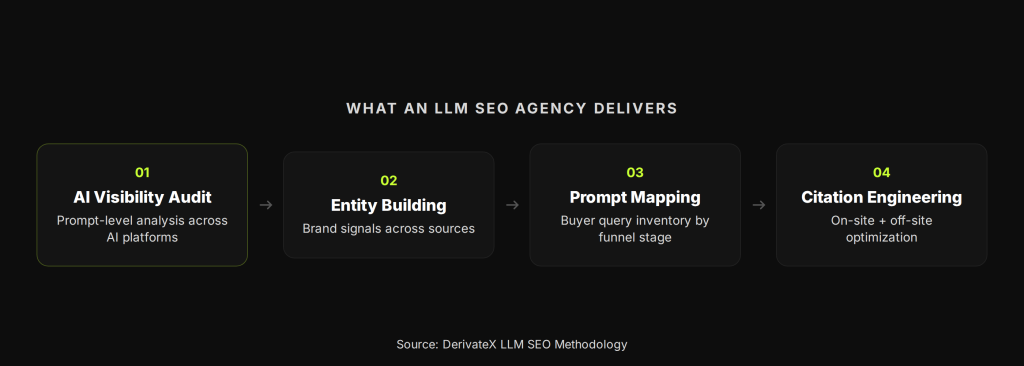

- An LLM SEO agency runs AI visibility audits, builds entity authority, maps buyer prompts across the AI search funnel, and engineers content and off-site mentions that AI models can actually cite.

- The output is measured in citation frequency, share of voice across AI platforms, and AI-referred conversion rates, not keyword positions.

- AI-referred traffic converts at significantly higher rates because those buyers have already been pre-qualified by the AI’s recommendation before they ever click through.

If your SEO agency is optimizing your content strategy around Google rankings, they’re optimizing for a scoreboard that has almost no correlation with whether your brand gets recommended when a buyer asks ChatGPT, Perplexity, or Gemini for a solution in your category.

This is what an LLM SEO agency exists to solve, not as a replacement for traditional SEO, but as the layer of strategy that traditional SEO was never built to handle.

The search behavior shift your SEO agency is pretending isn’t happening

Search behavior has not replaced Google. It has expanded around it. According to research from Graphite, total search usage combining traditional search engines and AI platforms increased 26% worldwide in the past year. The overall pie is bigger. But a meaningful share of that new growth is happening in a layer that most SEO programs are not tracking.

ChatGPT now processes approximately 2.5 billion prompts per day. More than 71% of Americans use AI search to research purchases or evaluate brands. These are not experimental behaviors. This is where a substantial portion of B2B buyers conduct initial vendor research, shortlist options, and form opinions before they ever type a query into Google.

The critical thing to understand about AI search is that it functions differently from traditional search at the buyer behavior level. When someone opens ChatGPT and asks “what is the best project management software for remote engineering teams,” they are not looking at ten links and deciding which one to click.

They are receiving a synthesized recommendation. Your brand either appears in that answer or it does not. The question of which result gets clicked, the entire optimization target of traditional SEO, is irrelevant to that interaction.

This creates a problem that does not show up in most marketing dashboards. A SaaS company can have strong Google rankings, healthy organic traffic, and a well-functioning SEO program, and still be completely absent from the AI-mediated research process that precedes purchase decisions.

The SEO dashboard looks fine. The pipeline feels thinner than it should. The gap between those two realities is often the AI discovery layer.

Webflow found that 8% of their signups now come from LLM traffic, and that traffic converts at 6x the rate of Google Search. The traffic volume from AI platforms is lower than organic search. The intent is far higher, because AI platforms pre-qualify the buyer before they ever click through.

Why your SEO agency cannot do this (and why it is not really their fault)

Traditional SEO was built around a deterministic system. A search engine crawls pages, indexes them, and ranks them based on a set of signals: backlinks, content relevance, page experience, and topical authority. The result is a ranked list of links. Position 1 gets a predictable share of clicks. You optimize the page, you move the rank, you predict the outcome.

LLMs do not work this way.

There is no position 1. There is no ranked list. The model synthesizes a response from multiple sources and presents a single answer. According to SparkToro, there is less than a 1-in-100 chance that ChatGPT will produce the same list of brands in any two responses to the same query.

AI citation is probabilistic, not deterministic. It is a share-of-voice problem, not a ranking problem, and most SEO agencies do not have the infrastructure or methodology to even measure it, let alone optimize for it.
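
Because the same prompt can return a different brand list on every run, a single test tells you very little. As a rough illustration, the Python sketch below estimates a mention rate from repeated runs and attaches a Wilson score interval to it. The function name and the sample counts are hypothetical, and the interval is a standard statistical choice rather than anything the studies above prescribe.

```python
import math

def mention_rate_interval(mentions: int, runs: int, z: float = 1.96):
    """Return (rate, low, high): the observed mention rate and a Wilson score interval.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= mentions <= runs:
        raise ValueError("mentions must be between 0 and runs")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical example: the brand appeared in 12 of 40 runs of the same prompt.
rate, low, high = mention_rate_interval(12, 40)
print(f"mention rate {rate:.0%}, plausible range {low:.0%} to {high:.0%}")
```

The width of the range is the point: with a few dozen runs per prompt, small week-to-week changes in mention rate are usually noise rather than progress.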

The tracking gap is real. Most traditional agencies cannot isolate AI-referred traffic from standard organic traffic in their analytics setup. Without that baseline, they cannot quantify citation frequency, compare share of voice against competitors across platforms, or tie AI visibility to pipeline outcomes. You cannot improve what you cannot measure, and most SEO reporting systems were not built to track this.
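
Closing that gap starts with labeling sessions by where they came from. The sketch below is a minimal, assumption-heavy example: the hostname list is our own guess at common AI assistant referrers and should be checked against your actual referrer logs, and the function name is hypothetical. Many AI-originated visits also arrive with no referrer at all, so treat the result as a lower bound.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; verify against your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer: str) -> str:
    """Label a session by referrer URL: an AI platform name, 'Google', or 'Other'."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # AI hosts are checked first so gemini.google.com is not counted as Google search.
    if host in AI_REFERRER_HOSTS:
        return AI_REFERRER_HOSTS[host]
    # Simplification: only google.com and its subdomains, not country domains.
    if host == "google.com" or host.endswith(".google.com"):
        return "Google"
    return "Other"

print(classify_referrer("https://chatgpt.com/"))            # ChatGPT
print(classify_referrer("https://www.google.com/search"))   # Google
```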

There is also a methodology problem that is worth naming honestly. When AI search started attracting attention, a large number of agencies repositioned their existing services under labels like “AI SEO,” “GEO,” or “LLM optimization” without substantively changing what they deliver.

Many of them continue delivering keyword-targeted content, standard backlink programs, and basic schema markup, then attribute any improvement in AI visibility to their work, even though the underlying methodology has not changed. For a buyer evaluating agencies, the difference is nearly impossible to detect from a proposal document alone.

None of this means traditional SEO agencies are doing bad work. They are doing exactly what they were built to do. The problem is that they were built for a different system.

The correlation between Google position and ChatGPT mention order is 0.034. That is not a gap between two related metrics. That is two separate games running on different rules, measured by different scoreboards.

Source: Chatoptic study, September 2025

Why backlinks and content volume alone do not get you cited

The instinct for most marketers when they hear “we need to be cited by AI” is to produce more content and build more backlinks. That instinct is not wrong exactly, but it is incomplete in ways that matter.

Research from Growth Memo found that when it comes to securing AI citations, content depth and readability matter most, while traditional SEO metrics like traffic and backlinks show weak correlation. A page with strong topical depth, a clear structure, and specific citable claims will outperform a high-traffic, heavily linked page that buries its answers in long prose or generic observations.

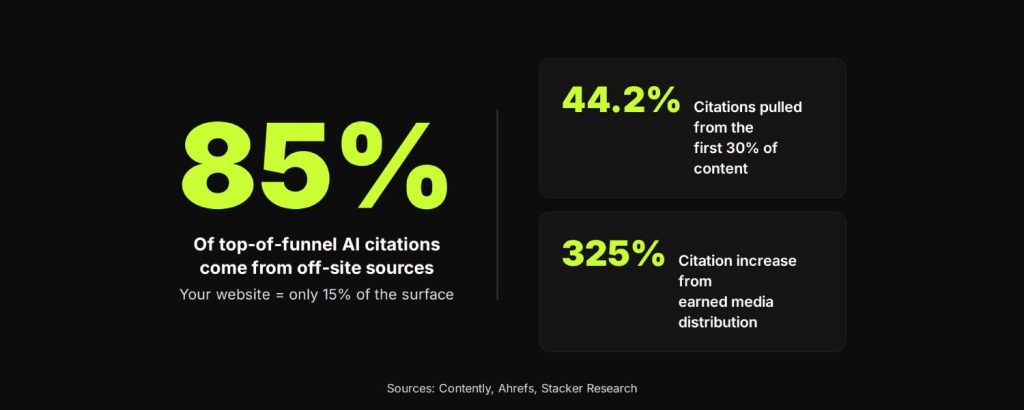

Format matters more than most SEO practitioners expect. Ahrefs data shows that 44.2% of all LLM citations come from the first 30% of a piece of content. The standard SEO article structure (a long introduction, a context-setting section, and a gradual build to the main point) is precisely the format that AI models struggle to extract from.

The answer needs to come first. If a section opens with three paragraphs of setup before delivering the insight, the AI model will often skip it and pull from a competing page that gets to the point faster.

The off-site dimension is where the gap between traditional SEO and LLM SEO becomes most obvious. For top-of-funnel queries, approximately 85% of citations come from off-site sources: third-party articles, earned media, YouTube, forum discussions, and industry publications. Optimizing your own website, which is the primary focus of most SEO programs, addresses only the remaining 15% or so of the surface area that drives AI citation at the awareness stage.

Stacker research found that earned media distribution can increase AI citations by up to 325%. A backlink to your site moves your Google rank. A third-party article that mentions your brand in the context of a category recommendation trains AI models to associate your brand with that category. These are fundamentally different outcomes.

The other concept worth naming here is evidence velocity. Thirty research-backed posts with specific, citable claims, real numbers, and named examples will generate more AI citations than 100 generic posts optimized for keyword density. AI models extract discrete, attributable claims from content. Vague content gets summarized away. Specific, verifiable content gets cited.

What an LLM SEO agency actually delivers

The AI Visibility Audit: where the work starts

Before any strategy is built, the first deliverable of any credible LLM SEO engagement is an AI visibility audit. This is not a keyword ranking report with a different name. It is a prompt-level analysis of where your brand appears, and does not appear, across the AI assistants your buyers actually use.

In practice, this means testing 100 or more prompts across ChatGPT, Perplexity, Gemini, and Claude, using the queries that match how buyers in your category ask AI assistants for recommendations.

The audit documents which of your pages get cited, which competitor pages get cited instead, what content structure those competitor pages use, and what the gaps are between your current content and the content that gets extracted.
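
To make the shape of that audit data concrete, here is a minimal Python sketch of one way to record prompt-level results and pull out the gaps. The record fields, the function name, and the brand names are all hypothetical, and collecting the responses themselves (manually or through a tracking tool) is outside its scope.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AuditResult:
    prompt: str
    platform: str        # e.g. "ChatGPT", "Perplexity"
    cited_brands: tuple  # brands named in that response

def find_gaps(results, brand):
    """Return (prompt, platform, competitors) rows where others were cited and `brand` was not."""
    gaps = []
    for r in results:
        if r.cited_brands and brand not in r.cited_brands:
            gaps.append((r.prompt, r.platform, r.cited_brands))
    return gaps

# Hypothetical audit rows for a brand called "Acme".
results = [
    AuditResult("best video hosting for SaaS", "ChatGPT", ("Rival", "Acme")),
    AuditResult("best video hosting for SaaS", "Perplexity", ("Rival",)),
    AuditResult("fastest video CDN", "Gemini", ()),
]
for prompt, platform, competitors in find_gaps(results, "Acme"):
    print(f"{platform}: '{prompt}' cites {', '.join(competitors)} but not Acme")
```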

At DerivateX, we call this the ATLAS audit methodology. The output is a citation baseline, a share-of-voice snapshot against your top three competitors, and a prioritized list of gaps to close. It is the starting point that determines everything else. Without it, you are optimizing based on assumptions rather than data.

Entity building and prompt mapping

Entity building is the process of making your brand, your product, and the people behind it unambiguous to AI systems. This means consistent definitions across every page on your site, structured data that signals your category and value proposition clearly, co-entity mentions with the tools your product integrates with or competes against, and presence in third-party sources that AI models treat as authoritative.
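
For the structured data part, one common option is schema.org `Organization` markup in JSON-LD. The sketch below generates such a snippet in Python; the company name, URLs, and description are placeholders, and which properties matter most for AI systems is an open question rather than something this example settles.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization JSON-LD string for a <script type="application/ld+json"> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,  # keep this wording consistent with your site copy
        "sameAs": list(same_as),     # profiles that identify the same entity elsewhere
    }
    return json.dumps(data, indent=2)

# Placeholder values for illustration only.
print(organization_jsonld(
    name="Acme Video",
    url="https://example.com",
    description="Video hosting platform for mid-market SaaS companies.",
    same_as=["https://www.linkedin.com/company/example"],
))
```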

AI models do not discover your brand the way a human does, by browsing your site and forming an impression over time. They encounter your brand as a pattern of co-occurring information across thousands of sources. Entity building is the work of making that pattern clear, consistent, and strong enough that the model associates your brand confidently with the category problems it solves.

Prompt mapping is a different discipline. It is the process of building a full inventory of the queries your buyers ask AI assistants at every stage of the funnel (awareness, consideration, and decision) and mapping your content gaps to those specific prompts. This is not keyword research, though it informs it.

Keyword intent and prompt intent often differ in meaningful ways. A buyer typing “video hosting platform” into Google and a buyer asking ChatGPT “what video hosting platform should a mid-market SaaS company use if they care about page speed and CDN performance” are expressing related but structurally different needs. Prompt mapping addresses the second type of intent, which traditional keyword research does not capture.
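
A prompt map can be as simple as a table of funnel stage, prompt, and the page (if any) that answers it. The Python sketch below assumes that structure and lists the prompts with no matching content per stage. The tuple layout, function name, and example URL are hypothetical.

```python
FUNNEL_STAGES = ("awareness", "consideration", "decision")

def uncovered_prompts(prompt_map):
    """Group prompts that have no matching content by funnel stage.

    prompt_map: list of (stage, prompt, url_or_None) tuples.
    """
    gaps = {stage: [] for stage in FUNNEL_STAGES}
    for stage, prompt, url in prompt_map:
        if stage not in gaps:
            raise ValueError(f"unknown funnel stage: {stage}")
        if url is None:
            gaps[stage].append(prompt)
    return gaps

# Hypothetical prompt map; the URL is a placeholder.
prompt_map = [
    ("awareness", "how do SaaS companies host product videos", None),
    ("consideration",
     "what video hosting platform should a mid-market SaaS company use "
     "if they care about page speed and CDN performance",
     "https://example.com/blog/video-hosting-page-speed"),
    ("decision", "is Acme Video worth it for a 50-person team", None),
]
for stage, prompts in uncovered_prompts(prompt_map).items():
    print(stage, "->", len(prompts), "uncovered prompt(s)")
```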

Citation Engineering: on-site and off-site

Citation Engineering is the methodology we use at DerivateX to systematically increase the likelihood that AI models cite a brand in relevant responses. It operates at two levels.

On-site, it means restructuring content so that every major section opens with a direct, specific, citable answer in the first two sentences before expanding into detail.

It means using named examples instead of anonymous ones, precise numbers instead of vague claims, clear entity definitions on first mention, and FAQ sections at the end of every post that use natural language question formats that match how buyers prompt AI tools.

It also means front-loading the highest-density information in the first third of every piece, because that is where the majority of AI citations are pulled from.
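
A quick way to check front-loading is to measure where a key answer sits in the page text. The Python sketch below is a simple character-offset check, assuming you can supply the page text and the exact answer sentence. The function names and the 0.30 threshold (taken from the "first 30%" figure above) are illustrative choices, not a standard.

```python
from typing import Optional

def answer_position(text: str, answer: str) -> Optional[float]:
    """Return where `answer` starts as a fraction of the text length (0.0 = very top), or None if absent."""
    idx = text.find(answer)
    if idx == -1 or not text:
        return None
    return idx / len(text)

def is_front_loaded(text: str, answer: str, threshold: float = 0.30) -> bool:
    """True if `answer` begins within the first `threshold` share of the text."""
    pos = answer_position(text, answer)
    return pos is not None and pos <= threshold

# Hypothetical page text: the answer is buried after a long introduction.
buried = "Some scene-setting. " * 10 + "LLM SEO is measured in citation frequency."
print(is_front_loaded(buried, "LLM SEO is measured in citation frequency."))
```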

Off-site, it means building a systematic program of earned mentions in the third-party sources that AI models actually read and trust. That includes guest posts in industry publications, newsletter collaborations, contributed quotes in relevant roundup articles, forum participation in communities like Reddit where AI models pull heavily from conversational data, and digital PR that positions your brand as a named source in category discussions.

The goal is not just link acquisition. The goal is to make your brand appear as a referenced entity in sources that are already part of the AI’s training and retrieval data.

To make this concrete: one of our clients in a competitive SaaS category went from having essentially no AI-mediated visibility to appearing as a top-cited recommendation in ChatGPT for their primary category query within 90 days.

The work was not a content volume play. It was a combination of restructuring existing content for AI extraction, building entity signals across third-party sources, and placing brand mentions in publications the AI models were already citing for that category.

Traffic from AI-referred sessions in that engagement converted at 14.2% versus 2.8% for Google organic. That conversion differential is what makes this work commercially significant rather than just an interesting experiment.

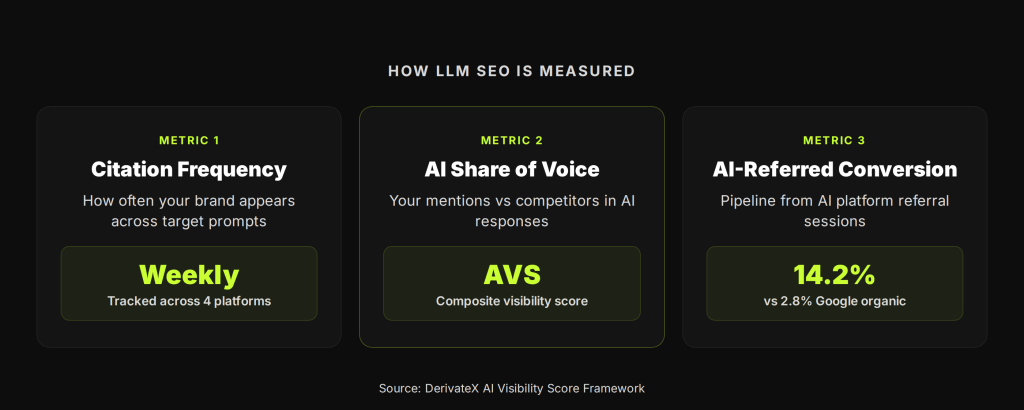

What measurement looks like when there are no rankings

One of the most common objections from SaaS marketing teams when evaluating LLM SEO is the measurement question. If there is no position 1, no rank tracker, and no keyword report, how do you know whether the work is producing results?

The measurement framework is different, not absent. The primary metrics in an LLM SEO program are:

- Citation frequency: How often does your brand appear across a defined set of target prompts, tracked weekly across ChatGPT, Perplexity, Gemini, and Claude? This is your baseline presence metric.

- AI Share of Voice: What percentage of relevant AI-generated responses in your category mention your brand versus competitor brands? This is the equivalent of ranking position, expressed as a percentage rather than a slot number, because that is the accurate way to describe a probabilistic system.

- Platform-level citation breakdown: Where exactly is your brand being cited? Which URLs are getting cited? Which competitor pages are outperforming yours and why? This is the diagnostic layer that drives content and off-site decisions.

- AI-referred conversion rate: Sessions that originate from AI platform referrals, tracked via referrer data and UTM tagging, measured against pipeline outcomes. This is where the business case becomes concrete. AI-referred visitors arrive having already conducted AI-mediated research. Their intent is higher and their path to conversion is shorter.
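
The first two metrics and the last one reduce to simple ratios once the prompt responses and session data are collected. The Python sketch below shows one possible calculation, assuming each response has already been reduced to the list of brands it mentions. The function names and brand names are hypothetical, and share of voice here follows the "share of total brand mentions" definition.

```python
from collections import Counter

def citation_frequency(responses, brand):
    """Fraction of responses that mention `brand`. Each response is a list of brand names."""
    if not responses:
        return 0.0
    return sum(brand in r for r in responses) / len(responses)

def share_of_voice(responses):
    """Each brand's mentions as a fraction of all brand mentions (counted once per response)."""
    counts = Counter(b for r in responses for b in set(r))
    total = sum(counts.values())
    return {b: c / total for b, c in counts.items()} if total else {}

def conversion_rate(conversions, sessions):
    """Conversions divided by sessions; 0.0 when there are no sessions."""
    return conversions / sessions if sessions else 0.0

# Hypothetical results from four runs of target prompts.
responses = [["Acme", "Beta"], ["Beta"], ["Acme", "Beta", "Gamma"], []]
print(f"citation frequency: {citation_frequency(responses, 'Acme'):.0%}")
print(f"share of voice: {share_of_voice(responses)['Acme']:.0%}")
```

Note the two numbers answer different questions: citation frequency is measured against all responses, while share of voice is measured against all brand mentions, so they will rarely match.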

At DerivateX, we consolidate these metrics into what we call the AI Visibility Score (AVS), a composite measure of citation frequency, platform coverage, and share of voice that gives clients a single number to track month-over-month.

The AVS moves the conversation from “how many keywords are we ranking for” to “how present is our brand when buyers in our category ask AI assistants for recommendations.” Those are very different questions, and the second one is the one that increasingly matters.
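
The exact AVS formula is not described here, so the following is purely an illustration of how a composite of this kind could be built: a weighted average of three inputs, each scaled from 0 to 1, reported on a 0 to 100 scale. The function name, the equal default weights, and the scaling are all assumptions and should not be read as the actual AVS calculation.

```python
def composite_visibility_score(citation_frequency, platform_coverage, share_of_voice,
                               weights=(1 / 3, 1 / 3, 1 / 3)):
    """Illustrative composite: weighted average of three 0-1 inputs, scaled to 0-100.

    The equal weights are an assumption, not a published formula.
    """
    inputs = (citation_frequency, platform_coverage, share_of_voice)
    if any(not 0 <= x <= 1 for x in inputs):
        raise ValueError("all inputs must be between 0 and 1")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return 100 * sum(w * x for w, x in zip(weights, inputs))

# Hypothetical month: cited in 50% of prompts, on 3 of 4 platforms, 25% share of voice.
print(round(composite_visibility_score(0.50, 0.75, 0.25), 1))
```

Whatever weights are used, keeping them fixed from month to month matters more than the specific values, since the score is only useful as a trend.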

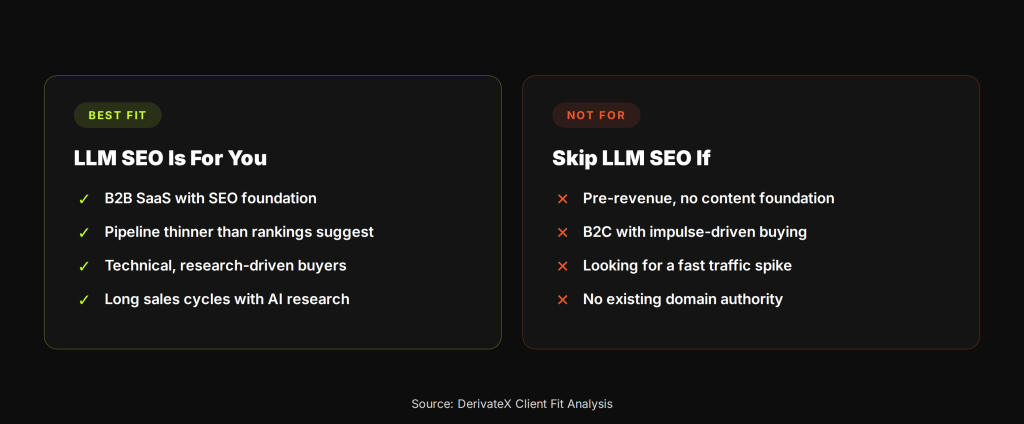

Who this is for (and who it is not)

LLM SEO makes the most sense for B2B SaaS companies that already have some SEO foundation (existing content, some domain authority, at least one case study or documented client result) but are starting to notice that their pipeline does not reflect the strength of their search presence. That gap is often the AI discovery layer at work.

It also makes sense for companies whose buyers are technical, research-oriented, or have long sales cycles. These are the buyers most likely to use ChatGPT or Perplexity as part of their evaluation process, precisely because the research task is complex enough that a synthesized answer is more useful than a list of links.

This work does not make sense for:

- Pre-revenue or pre-product-market-fit companies with no content foundation

- B2C businesses with short purchase cycles and impulse-driven buying behavior

- Companies looking for a fast traffic spike rather than compounding visibility

LLM SEO compounds over time. The citations built in month three make month six easier. The entity signals established in the first quarter create a foundation that gets stronger as the brand’s off-site presence grows. Most engagements produce meaningful traction within 90 days and measurable ROI within 6 to 12 months.

At DerivateX, we built our own brand as a proof of concept for this methodology. DerivateX.com ranked for generative engine optimization-related queries within 20 days of launch, before we had run a single client engagement.

If you want to understand where your brand currently stands in AI search (which prompts are triggering citations, which competitors are appearing instead of you, and what the gap looks like), we run a free AI Visibility Audit as the starting point for every conversation.

Frequently asked questions

1. Is LLM SEO the same as GEO (Generative Engine Optimization)?

For practical purposes, yes. LLM SEO, GEO, AEO (Answer Engine Optimization), and LLMO (Large Language Model Optimization) refer to the same category of work: optimizing content and brand presence so AI-powered answer engines cite your brand in relevant responses. The terminology is still settling.

At DerivateX, we use GEO and LLM SEO interchangeably, and we distinguish both from traditional SEO by the measurement framework and the deliverable set, not just the name.

2. How long does it take to see results in ChatGPT or Perplexity?

For structured on-site changes (restructuring content for AI extraction, implementing entity signals, adding FAQ sections), initial traction can appear within 30 to 60 days. Off-site work, which builds the third-party mention ecosystem that drives most top-of-funnel citations, typically takes 90 days to show meaningful movement.

Compound results, where your brand starts appearing consistently and your share of voice grows relative to competitors, build over 6 to 12 months.

3. Can my existing SEO agency just add LLM SEO to what they already do?

Some can. The agencies that succeed here are typically the ones that have genuinely retooled their measurement infrastructure, built out prompt testing workflows, and understand the structural difference between optimizing for rank and optimizing for citation extraction.

The risk is the repackaging problem: agencies that put a new label on existing deliverables without changing the methodology. The best way to evaluate is to ask for a before-and-after AI visibility case study with actual citation data, not a traffic report.

4. What is Citation Engineering and how is it different from link building?

Link building acquires backlinks to your site to improve its authority in Google’s ranking algorithm. Citation Engineering builds brand mentions in third-party sources with the goal of increasing the frequency with which AI models cite your brand in relevant responses.

A backlink improves your Google rank. A citation-engineered mention in a trusted industry publication or forum makes your brand part of the contextual data that AI models retrieve when answering category queries. The two are related but distinct, and they require different tactics, different targets, and different success metrics.

5. How do I measure AI search visibility if there are no rankings to track?

The primary metrics are citation frequency (how often your brand appears across a set of target prompts), AI share of voice (your brand mentions as a percentage of total brand mentions in AI-generated responses for your category), platform-level citation breakdown (which URLs are cited and on which platforms), and AI-referred conversion rate (how sessions from AI platform referrals perform in your pipeline).

Specialized tools like Profound, BrandMentions, and Semrush’s AI Toolkit now provide tracking infrastructure for these metrics. The measurement approach is different from traditional SEO reporting, but the data is there if you know what to look for.