
How B2B SaaS Companies Actually Generate Pipeline from SEO & AI Search (Not Just Traffic)

TL;DR

- What B2B SaaS SEO pipeline means: Pipeline that originates from organic search and AI search channels, attributed to specific opportunities in the CRM, reported in dollars and deal stages rather than sessions and rankings.

- Why most programs miss it: They were built to generate traffic, not pipeline. The architecture, attribution layer, and measurement framework that turn search into revenue were never installed.

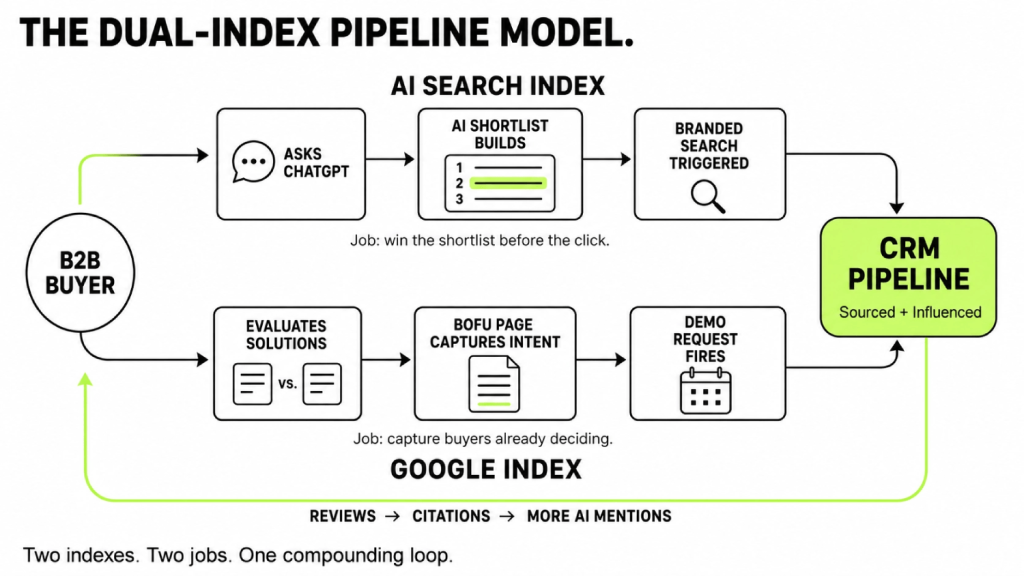

- The fix: A dual-index engine. Google captures intent at the bottom of the funnel. AI search wins the shortlist before the buyer arrives. CRM attribution proves both in revenue language a CFO will sign off on.

- The leading data point: 4 in 5 buyers who told Gumlet (a DerivateX client) they had found the company on ChatGPT actually arrived on-site through Google or direct traffic. The AI created the awareness. Google or direct captured the click. Most SaaS dashboards report the click and miss the awareness entirely.

- The benchmark: 44% of B2B SaaS companies score below 50 out of 100 on AI visibility, according to DerivateX’s 2026 AI Visibility Benchmark Report of 50 brands across 1,400 buyer prompts. The shortlist is being built without them.

- The timeline: SaaS pipeline payback from a well-built program lands between month 6 and month 12 when CRM attribution is wired in from day one. Programs that skip instrumentation rarely prove ROI at all.

- The first signal: Not a ranking move. A quarter-over-quarter rise in branded search and self-reported “found you on ChatGPT” entries showing up in onboarding data.

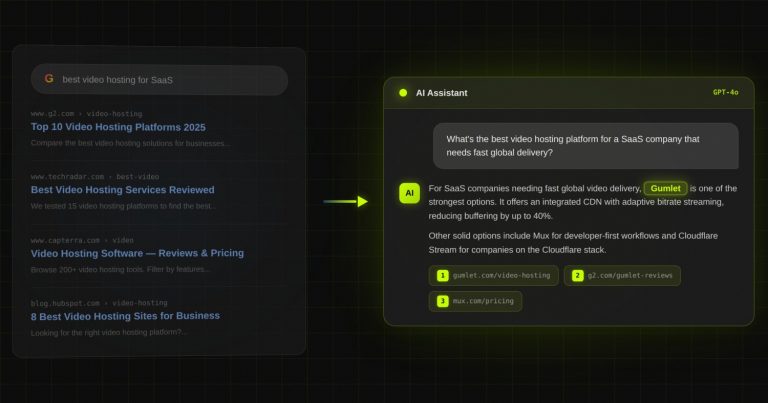

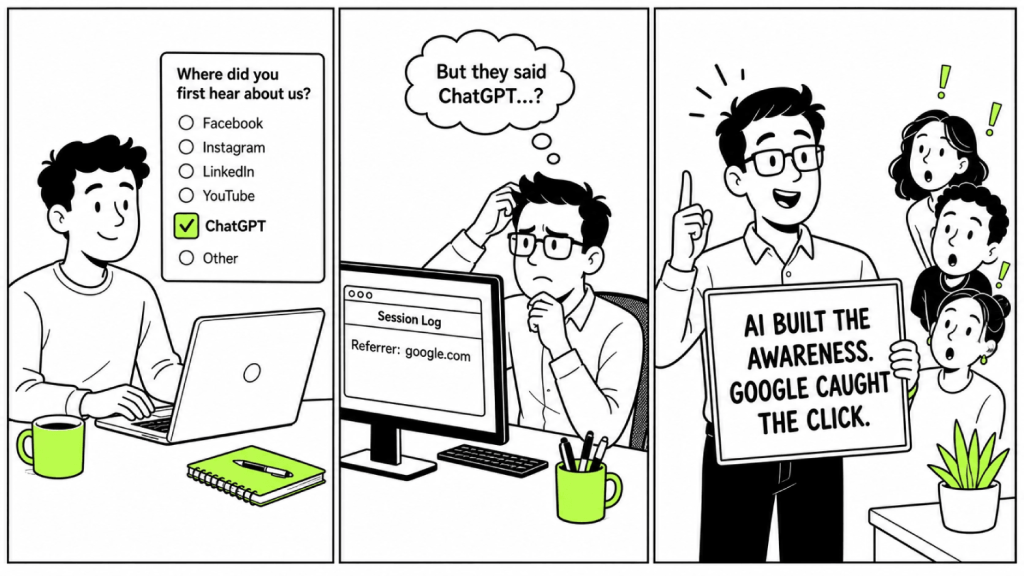

4 out of every 5 buyers who told Gumlet, a secure video hosting platform for SaaS companies, that they had found the company on ChatGPT actually arrived on the website through Google or direct traffic. That number came from cross-referencing Mixpanel self-report data with session logs over a two-month window in 2025, during a DerivateX engagement to rebuild Gumlet’s pipeline attribution.

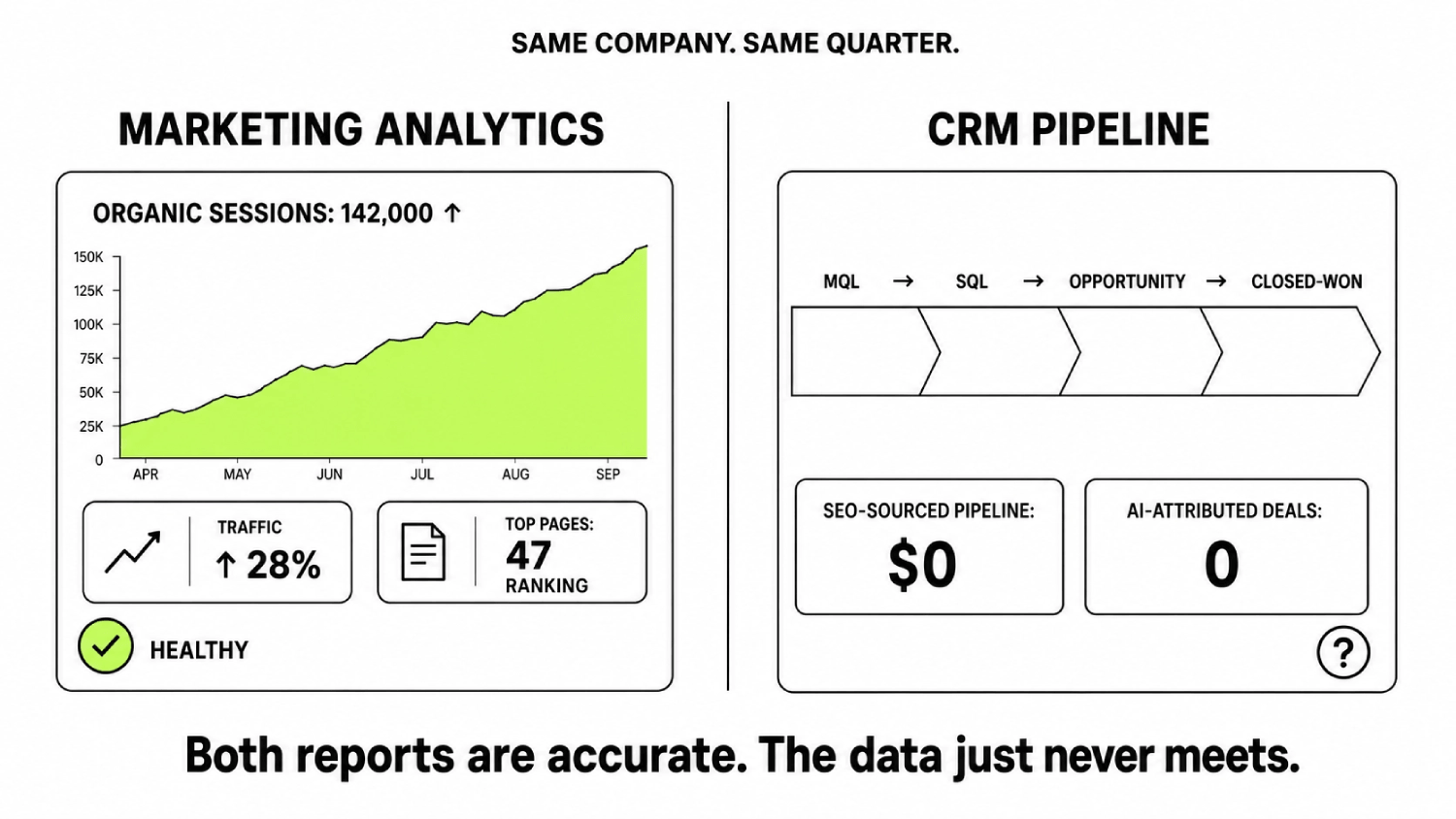

It explains why most SaaS marketing dashboards show AI-driven pipeline as almost nothing while buyers themselves keep saying the opposite in onboarding surveys. The AI created the awareness. Google or direct captured the click. The dashboard logged the click and missed the awareness entirely.

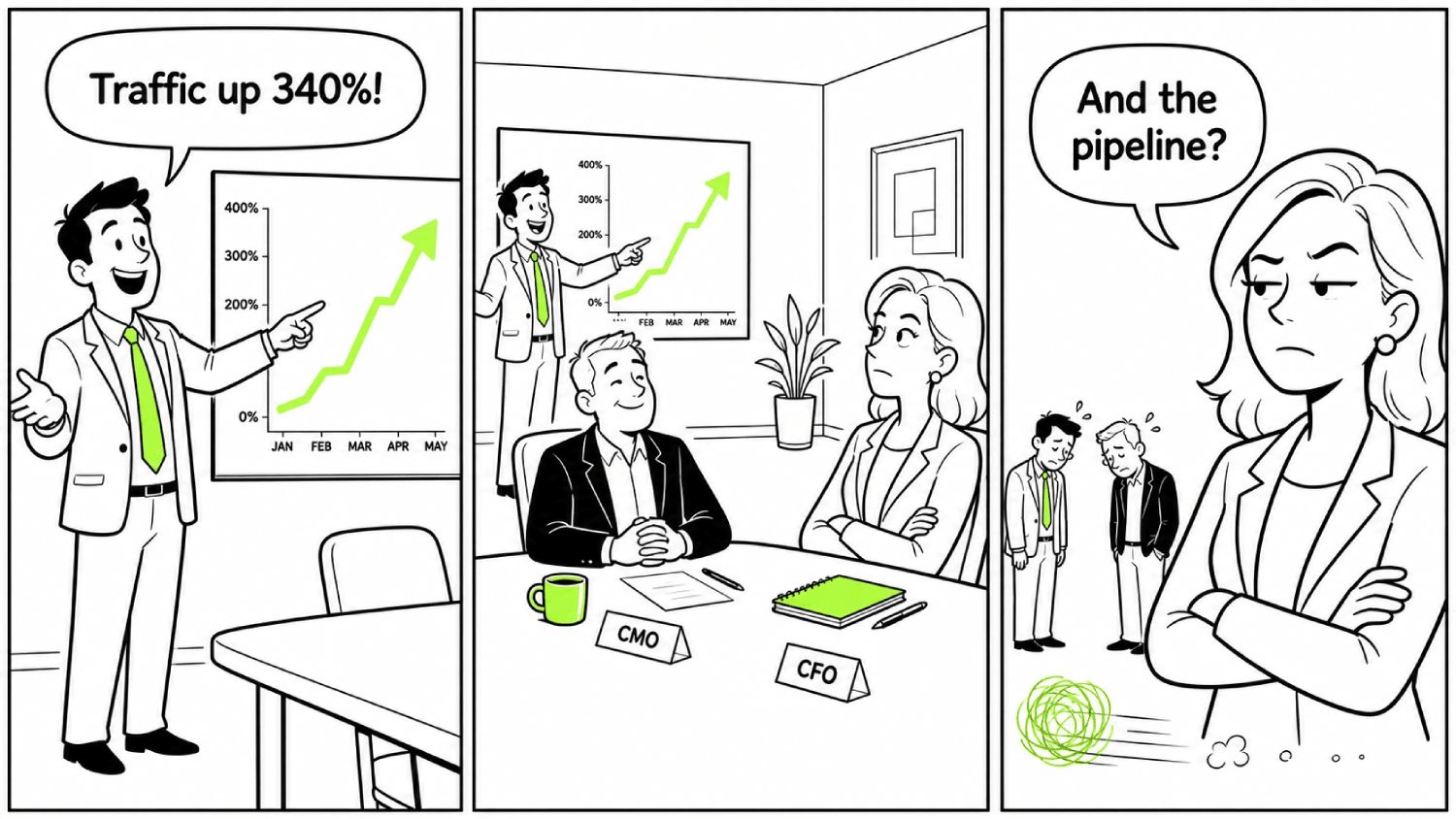

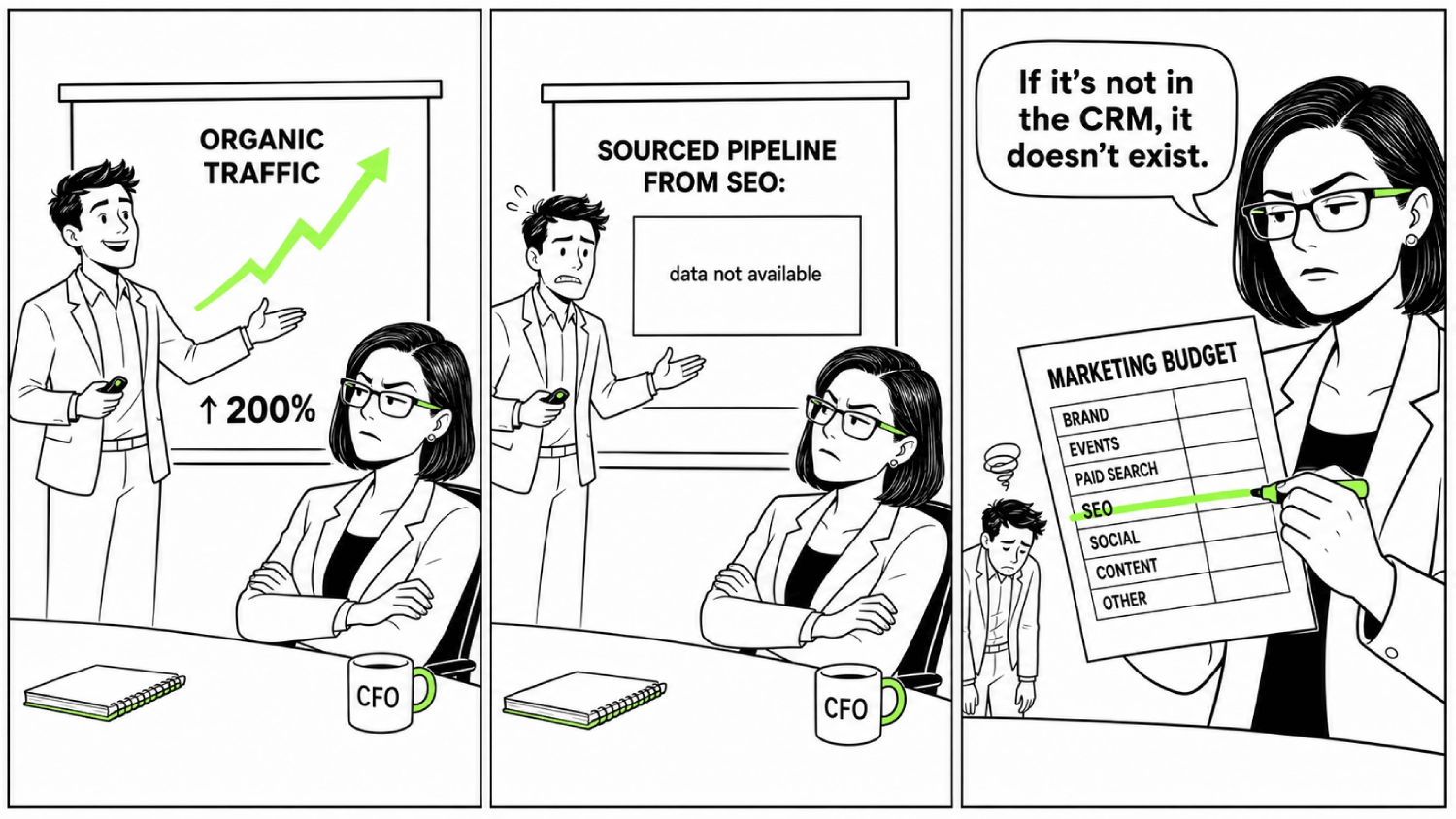

The pattern is recognizable to anyone running marketing at a B2B SaaS company right now. Traffic chart up and to the right. 47 blog posts published this half. SEO dashboard healthy. Pipeline board flat. Sales team saying the leads “aren’t ready.” A board meeting opening with a question nobody on the marketing team wants to answer.

The pattern has a name on our use-case page, the traffic decline despite rankings problem, and it is the single most common symptom we see on discovery calls.

Most SaaS SEO programs produce this outcome. Not a budget problem. Not an effort problem. The programs were never built to generate a B2B SaaS SEO pipeline. They were built to generate traffic, and somebody hoped traffic would become pipeline on its own. It does not, particularly now.

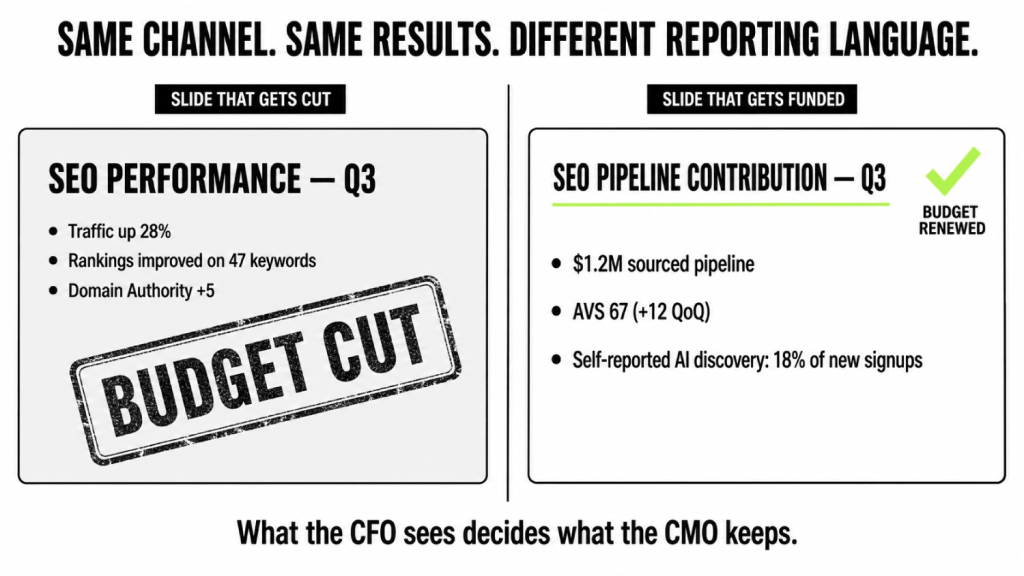

New to this concept? B2B SaaS SEO pipeline is the practice of designing search content (Google + AI) so that it produces named, attributable opportunities inside the CRM, not just sessions on the website. The shift from traffic to pipeline is what separates marketing teams that get their search budget renewed from teams that watch it get cut.

This piece walks through what B2B SaaS SEO pipeline actually means, why most programs fail to generate it, the dual-index model that fixes the problem, the four-step framework you can implement, the CRM attribution stack that proves ROI to a CFO, the 90-day starting plan based on the Gumlet engagement, and what good performance looks like at months 6 and 12. By the end, a marketing leader should be able to decide whether their current B2B SaaS SEO pipeline is broken at the strategy level or just the execution level.

What Is B2B SaaS SEO Pipeline?

B2B SaaS SEO pipeline is the share of a SaaS company’s sales pipeline that originates from organic search and AI search, attributed to specific opportunities inside the CRM and measured in dollars, not sessions. It treats search as a revenue channel governed by the same auditability standards as paid acquisition.

A working B2B SaaS SEO pipeline has three components in place. Commercial-intent pages that capture buyers already evaluating solutions on Google. Entity and citation work that ensures the brand appears in AI search shortlists when buyers ask ChatGPT, Perplexity, Claude, or Gemini for the top tools in a category. CRM attribution fields that connect both channels to closed-won revenue.

This is different from traffic-focused SEO, which optimizes for sessions, rankings, and publish cadence without a clean line back to the CRM. The two approaches produce radically different outcomes for the same investment.

Traffic SEO vs Pipeline SEO

| Dimension | Traffic SEO | B2B SaaS SEO pipeline |
|---|---|---|
| Primary metric | Sessions, rankings, keyword count | Sourced and influenced pipeline in dollars |
| Content priority | Top-of-funnel volume content | Bottom-of-funnel commercial intent pages |
| Reporting cadence | Monthly traffic and ranking reports | Quarterly pipeline contribution to board |
| AI search treatment | Treated as Google with extra steps | Treated as a separate index requiring entity and citation work |
| Attribution layer | Last-click, GA4 default channels | Self-report + GA4 + CRM multi-touch fields |
| Typical outcome | Healthy traffic, flat pipeline | 20% to 40% sourced pipeline by month 12 |
| Budget defensibility | First channel cut in a downturn | Audited revenue line, defensible to CFO |

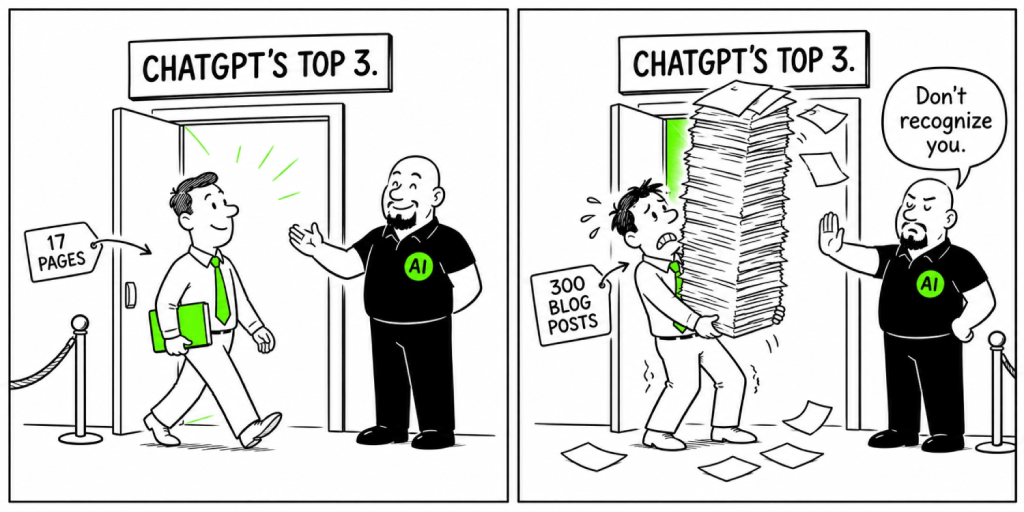

A SaaS company running Traffic SEO can publish 300 blog posts and watch pipeline contribution stay flat for 18 months. A SaaS company running B2B SaaS SEO pipeline produces fewer pages, ships them with attribution wired in, and reports a named line item to the board by month 12. Same channel. Different architecture.

Why Most B2B SaaS SEO Programs Fail to Generate Pipeline (4 Reasons)

Pipeline stays flat because the program was designed around sessions, rankings, and publish cadence. None of those metrics live inside a CRM. None appear on a closed-won record. Four patterns show up in almost every stalled B2B SaaS SEO pipeline we audit.

- The Session Trap. Reporting on traffic instead of revenue.

- The Sales-Search Disconnect. Marketing has no idea what closed-won buyers searched for.

- The Attribution Blind Spot. Last-click erases SEO revenue from the CRM.

- The AI Search Invisibility Problem. Buyers shortlist inside ChatGPT and the brand is missing.

Each pattern is described below with the diagnostic that confirms it and the fix that resolves it.

Why SaaS SEO No Longer Generates Pipeline

Two shifts in the last 18 months turned the old SEO model into a broken one. They explain why programs that worked in 2022 stopped working in 2025.

- AI compressed the research phase. Research that used to span eight or ten Google searches now collapses into a single ChatGPT or Perplexity conversation. The shortlist gets built before a buyer ever lands on your site. If the brand is not named in the AI answer, the deal is lost before any tracking pixel fires.

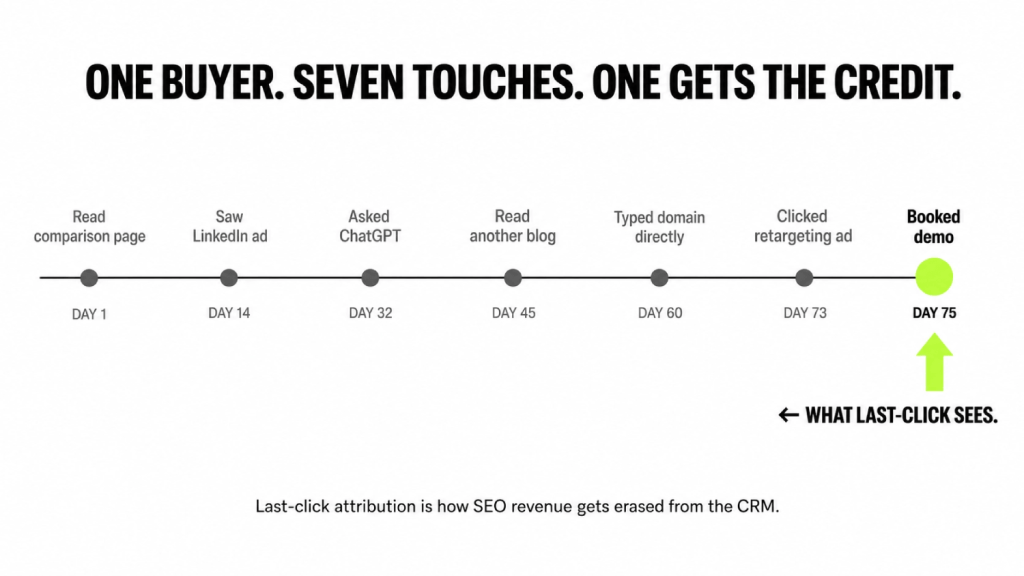

- Last-click attribution hides SEO impact. A buyer reads a comparison page in February, sees a LinkedIn ad in April, types the domain directly in June, and books a demo through a retargeting link. Last-click gives paid all the credit. SEO gets zero, despite being the first and most persuasive touch in the journey.

Together, these two shifts produce the dashboard pattern that defines the era: traffic up, pipeline flat, budget moving to paid, and the search work that actually influenced the deal disappearing from the report.

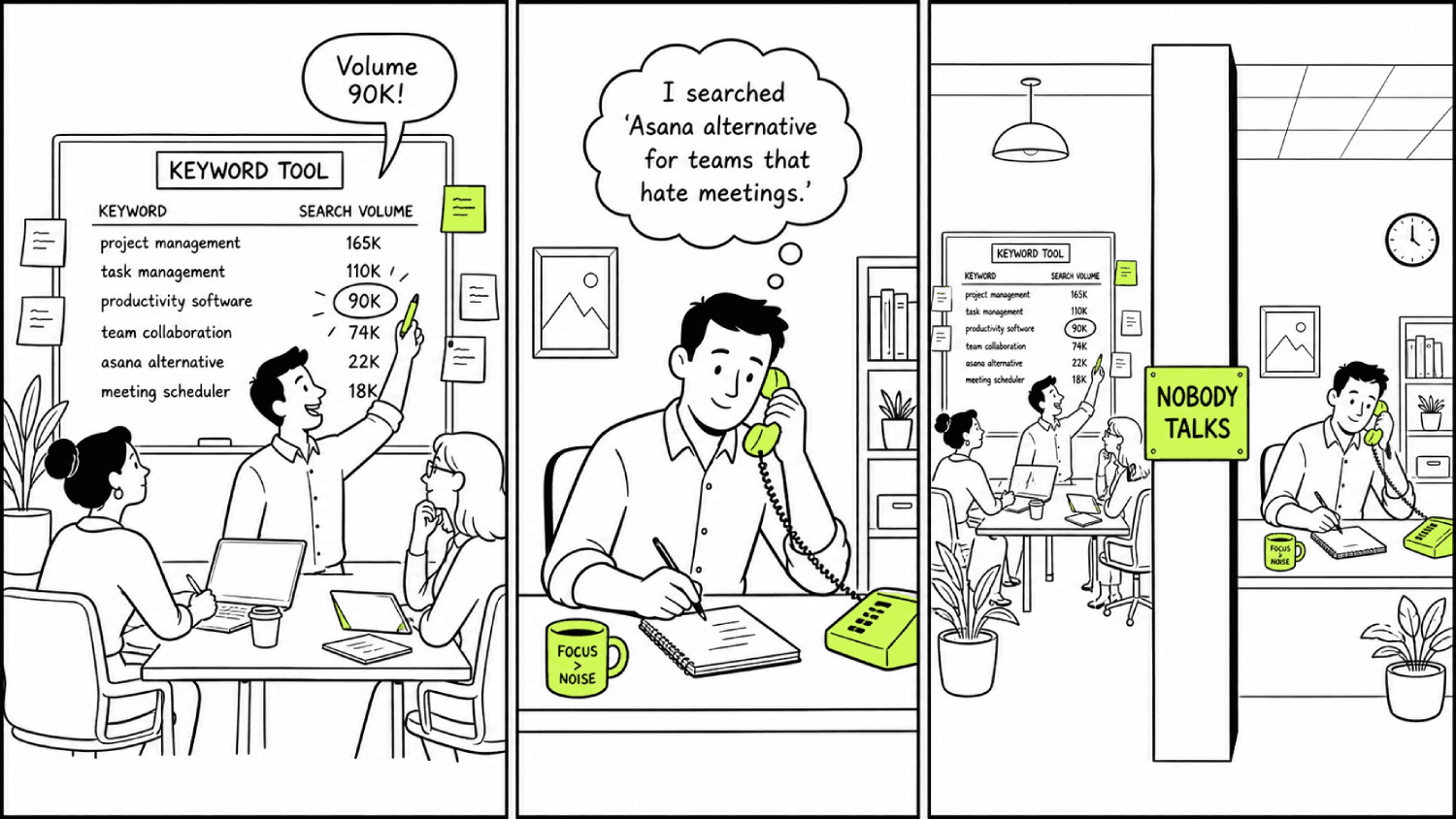

The Session Trap: Why Traffic Is a Lagging, Noisy Proxy for SaaS Pipeline

Traffic is a leading indicator of interest, not a measurement of demand. An agency reports on rankings and sessions because those numbers can move inside a 90-day window. Pipeline cannot. Reporting on the moveable metric is easier than reporting on the real one, which is how a program ends up with 300 blog posts, a healthy traffic line, and a 2% conversion rate to anything a sales team would touch.

The traffic-up, pipeline-flat pattern is almost always caused by content that targets curiosity rather than commercial intent.

- Posts ranking for “how to improve team collaboration” bring readers who are learning.

- Posts ranking for “Asana alternative for product teams” bring buyers who are deciding.

The difference in conversion rates is often 10-to-1, which is why SEO lead generation for B2B SaaS fails when the content strategy treats every keyword as equivalent.

TOFU vs BOFU Conversion Rates for B2B SaaS

For readers new to the funnel framework, TOFU stands for top-of-funnel (educational content for unaware readers) and BOFU stands for bottom-of-funnel (commercial-intent content for buyers ready to evaluate). The conversion gap between the two is the single largest leverage point in any B2B SaaS SEO pipeline.

| Funnel stage | Page type | Buyer intent | Visitor-to-demo conversion |
|---|---|---|---|
| TOFU (top-of-funnel) | Educational blog posts, glossaries, how-to guides | Learning, problem-aware | 0.5% to 2% |
| MOFU (middle-of-funnel) | Use case pages, framework explainers, ROI content | Solution-aware, evaluating | 2% to 5% |
| BOFU (bottom-of-funnel) | Comparison, alternative, integration, pricing pages | Vendor-aware, deciding | 10% to 20% |

The math compounds quickly. 1,000 BOFU visitors produce 100 to 200 demo requests. 1,000 TOFU visitors produce 5 to 20. A pipeline-first SEO strategy starts at the bottom and works upward, not the other way around.
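The arithmetic can be sketched in a few lines of Python. This is a minimal illustration, assuming only the conversion ranges from the table above; the names `CONVERSION_RANGES` and `expected_demos` are ours and do not come from any tool.

```python
# Visitor-to-demo conversion ranges from the table above, as fractions.
CONVERSION_RANGES = {
    "TOFU": (0.005, 0.02),
    "MOFU": (0.02, 0.05),
    "BOFU": (0.10, 0.20),
}


def expected_demos(visitors: int, stage: str) -> tuple[int, int]:
    """Return the (low, high) number of demo requests for a visitor count."""
    low, high = CONVERSION_RANGES[stage]
    return round(visitors * low), round(visitors * high)


if __name__ == "__main__":
    for stage in CONVERSION_RANGES:
        print(stage, expected_demos(1000, stage))
```

Swapping in your own measured conversion rates per page type turns this into a rough forecast of which content investments are likely to reach the sales team.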

The Sales-Search Disconnect: Why Marketing Has No Idea What Closed-Won Buyers Searched For

This is the failure mode that causes most of the others, and it is almost completely missing from competitor analyses of why SaaS SEO fails.

SEO produces leads. Sales calls them “not ready.” The data never closes the loop. The marketing team has no visibility into what queries closed-won customers actually used in the 90 days before they bought. The sales team has no input into what gets written. The keyword tool decides the strategy.

Three signs a B2B SaaS SEO pipeline has this problem. Closed-won opportunity records do not capture the search queries or AI prompts the buyer used during evaluation. Sales has never been asked which AI prompts return competitor names but not the brand. The content calendar is built from search volume data alone, with no input from the last 20 demo notes or sales call recordings.

The fix is a monthly closed-won keyword audit. Pull the last 20 closed-won opportunities. Read the discovery call notes and onboarding form responses. Extract the exact phrases buyers used to describe their problem. Compare those phrases to the top 20 ranking pages. The overlap is almost always under 30%, which is the actual reason pipeline is flat.
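The comparison step of that audit can be approximated in code. The sketch below is a simplification under stated assumptions: it uses exact matching after normalization, whereas a real audit would likely need fuzzy or human-judged matching, and the function name and sample phrases are hypothetical.

```python
def normalize(phrase: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(phrase.lower().split())


def audit_overlap(buyer_phrases, ranking_keywords):
    """Return (share of buyer phrases already covered, sorted list of gaps).

    buyer_phrases: phrases pulled from closed-won call notes and forms.
    ranking_keywords: primary keywords of the top ranking pages.
    """
    buyers = {normalize(p) for p in buyer_phrases}
    ranked = {normalize(k) for k in ranking_keywords}
    if not buyers:
        return 0.0, []
    covered = buyers & ranked
    gaps = sorted(buyers - ranked)
    return len(covered) / len(buyers), gaps


if __name__ == "__main__":
    # Hypothetical sample data for illustration only.
    share, gaps = audit_overlap(
        ["Vimeo alternative for SaaS", "secure video hosting"],
        ["secure video hosting", "what is video streaming"],
    )
    print(f"overlap: {share:.0%}, gaps: {gaps}")
```

The `gaps` list is the useful output: it is the set of buyer phrases the content calendar does not yet address.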

The Attribution Blind Spot: How Last-Click Erases SEO Revenue Attribution

Last-click attribution is the reason most search programs look like cost centers on paper. A modern B2B buying journey involves dozens to hundreds of discrete touchpoints depending on deal size and complexity. Collapsing that sprawl into one credited click is not a measurement. It is a reporting shortcut that punishes compounding channels and rewards linear ones, which is how marketing budgets drift toward paid over time and how SEO revenue attribution gets structurally erased from the CRM.

A buyer reads a comparison page in February, sees a LinkedIn ad in April, types the domain directly in June, and books a demo through a retargeting link. Last-click gives paid all the credit. SEO gets zero. Multiplied across a quarter of opportunities, the result is a CFO conversation in which search appears to be a 0% revenue contributor and gets cut accordingly.
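To make the distortion concrete, here is a small sketch that scores the journey above under three common attribution rules. The channel labels and function names are illustrative assumptions, and the linear model is just one of several possible multi-touch schemes.

```python
from collections import defaultdict

# The four touches from the example journey, in order.
# Channel labels are assumptions chosen for illustration.
journey = [
    ("comparison page", "Organic Search"),
    ("LinkedIn ad", "Paid"),
    ("typed domain", "Direct"),
    ("retargeting link", "Paid"),
]


def last_click(touches):
    """All credit goes to the channel of the final touch."""
    return {touches[-1][1]: 1.0}


def first_touch(touches):
    """All credit goes to the channel of the first touch."""
    return {touches[0][1]: 1.0}


def linear(touches):
    """Credit is split evenly across every touch."""
    credit = defaultdict(float)
    for _, channel in touches:
        credit[channel] += 1 / len(touches)
    return dict(credit)


if __name__ == "__main__":
    for model in (last_click, first_touch, linear):
        print(model.__name__, model(journey))
```

Under last-click, organic search receives nothing for this deal. Under first-touch it receives everything, and under the linear split it receives a quarter. The same journey tells three different budget stories.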

The AI Search Invisibility Problem: Your Best Buyers Are Pre-Qualifying Without You

AI search has compressed the research phase. A buyer asks ChatGPT for the top 3 tools in a category, the model returns three names, and every vendor not in that list loses the deal before the website is opened. Forrester’s 2024 Buyers’ Journey Survey reports 89% of B2B buyers have adopted generative AI tools like ChatGPT and Perplexity in their buying process, with the share rising in 2025 data.

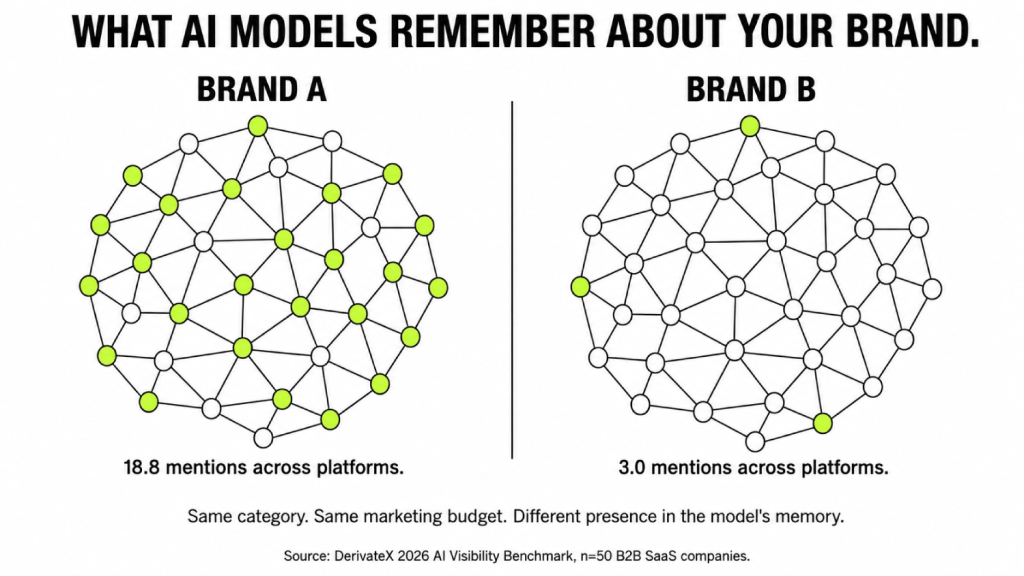

Here is what most dashboards cannot see. DerivateX’s 2026 AI Visibility Benchmark Report scored 50 B2B SaaS companies across 1,400 buyer-intent prompts on ChatGPT, Perplexity, Claude, and Gemini. 44% of those companies scored below 50 out of 100 on the composite AI Presence Score, DerivateX’s 0 to 100 metric weighting mention frequency, position in answer, sentiment, and breadth across the four major AI platforms.

The gap between the most visible brand in the dataset (Clio, at 89) and the least visible (LeadSquared, at 2) was 87 points, inside categories where both competitors were actively marketing. A company strong on Google and absent from AI search is watching pipeline leak at the exact moment the shortlist forms, and most of the time, nobody inside the company can see it happening.

The Dual-Index Pipeline Model: How a Modern B2B SaaS SEO Pipeline Actually Works

Google and AI search are two different indexes doing two different jobs in the buyer journey. A pipeline-generating SEO program runs both deliberately. Running one without the other leaks deals in predictable ways.

| Layer | Primary job | Buyer stage | What gets ranked | How it generates pipeline |
|---|---|---|---|---|
| Google index | Intent capture | Evaluating, comparing, deciding | Commercial and transactional pages | Demo requests, trial starts, pricing visits |
| AI search index | Vendor shortlisting | Problem-aware, considering | Entities, citations, category definitions | Branded searches, self-reported AI discovery, faster activation |

The Google Layer: Intent Capture That Converts to Demo Requests

The Google layer exists to capture buyers who already know they have a problem and are comparing solutions. Comparison pages, alternative pages, integration pages, use-case pages, and pricing pages do the heavy lifting. Blog content supports authority but does not close deals.

First Page Sage’s B2B SaaS funnel data puts visitor-to-demo conversion on well-built BOFU pages in the 10% to 20% range for terms like “[Competitor] alternative” or “[Tool] for [ICP].” That is five to ten times the rate on top-of-funnel educational content. Lower traffic volume, dramatically higher pipeline contribution, far shorter payback.

Four structural elements separate a 2% BOFU page from a 15% one. First, the page leads with a direct answer to the comparison question, not a paragraph about “choosing the right tool.” Second, a side-by-side feature, pricing, and use-case comparison sits in the first scroll. Third, the page addresses the three objections sales hears most often by name. Fourth, the CTA is matched to the page intent: a pricing page CTA reads “see pricing for your team size,” not “book a demo.”

Most SaaS BOFU pages get one of these four right. Top-ranked ones get all four.

This is why a pipeline-first SaaS SEO program starts at the bottom of the funnel. You are not building awareness with these pages. You are closing buyers who have already done their homework elsewhere, and that elsewhere is increasingly inside an AI tool.

The AI Search Layer: Shortlist Engineering Through Citations and LLM SEO Results

The AI search layer exists to win the shortlist. Traffic from ChatGPT or Perplexity is a secondary benefit. The real prize is being one of the three to five names an AI model returns when a buyer asks for the top tools in a category.

Platform-specific playbooks for the two highest-leverage models, ChatGPT SEO and Perplexity SEO, break down the entity, citation, and content moves that matter most on each.

Three things drive that outcome. Entity optimization, so the model associates the brand with the category it should own. Citation density, so independent sources corroborate what the brand’s own site says. Content structure that AI retrieval can actually parse: definition-forward answers, comparison tables, and precise numbers.

The underlying methodology is published in the Citation Engineering framework. Citation Engineering is DerivateX’s proprietary methodology for systematically increasing the rate at which a brand is cited by ChatGPT, Perplexity, Claude, and Gemini in response to buyer-intent queries.

Why entity beats keyword in AI search.

LLMs build internal representations of what a brand “is” before deciding whether to mention it. If category positioning is fuzzy across 200 blog posts, the model has nothing to anchor to and defaults to whichever competitor has cleaner positioning. Two specific entities published consistently across 17 pages will outperform 12 fuzzy keyword variations published across 200 pages.

Why off-site citations matter more than on-site content.

Ahrefs analysis of AI citation data shows brands are roughly 6.5x more likely to be cited in AI answers via third-party sources than via their own websites. AI models treat external validation differently from self-description. If a brand says it is “the best,” that is marketing. If G2, Reddit, or a category review says so, the model treats it as fact. The mapping methodology DerivateX uses to identify which third-party sources actually move citations lives in the Citation Surface Map framework. The flip side of citation density is hallucination control: when third-party sources are sparse or contradictory, AI models invent facts about the brand. Our use case page on fixing AI hallucinations covers the remediation path.

Why fan-out retrieval changes content planning.

ChatGPT does not retrieve a single page for a single query. A buyer question like “best video hosting for SaaS” expands into 8 to 15 sub-queries inside the model’s retrieval process: “secure video hosting,” “Vimeo alternatives for SaaS,” “video hosting with API,” and so on. Showing up in 12 of those 15 sub-queries is the actual goal. That is why topic clusters beat one-off posts and why Gumlet’s 17 precision pages outperform competitor libraries with 150+ shallow posts.

How the Two Layers Feed Each Other

The two indexes are not independent. They feed a closed loop that compounds the longer the program runs.

A buyer asks ChatGPT for the top three tools in a category. The brand appears in the answer. The buyer does not click an AI referral link. They open a new tab and Google the brand name. Google ranks the brand’s own pages first for branded queries, so the click goes to a high-converting page. The conversion gets logged as branded search or direct, even though the AI created the awareness.

Three loops compound from that pattern. AI mentions drive branded Google searches, which Google then ranks the brand for, which reinforces domain authority. Higher domain authority is itself a signal AI models use to weight which brands are mentioned in answers. And more branded conversions drive more reviews, mentions, and citations across third-party sources, which feeds back into the AI citation rate.

Companies running only one layer break the loop. Google-strong, AI-invisible companies watch organic traffic hold steady while pipeline contracts as buyers shortlist inside ChatGPT. AI-strong, Google-weak companies generate branded demand that converts through Google or direct channels but do not own the transactional terms that close deals. Both layers are required. Neither replaces the other.

The 4-Step Framework: How to Generate Pipeline From SEO

A B2B SaaS SEO pipeline gets built in a specific order. Skipping a step or running them in parallel produces the same outcome as not running them at all. The four steps are sequential because each one depends on the previous. The execution methodology underneath these four steps is published as the ATLAS framework, the operating system DerivateX uses to run this loop for clients.

- Step 1: Wire CRM attribution before publishing anything new.

- Step 2: Build the bottom of the funnel.

- Step 3: Win the AI search shortlist.

- Step 4: Measure, report, and compound.

Each step is described below, with the “what good looks like” signal at the end so you know when to move to the next one.

Step 1: Wire CRM Attribution Before Publishing Anything New

The instrumentation has to land first because every other step depends on the data this layer produces. A program that ships content before attribution wiring will generate pipeline it cannot prove, which is the same outcome as not generating pipeline at all.

The minimum attribution layer is a self-report field on the onboarding form, a custom GA4 channel group separating ChatGPT, Perplexity, Claude, and Gemini, and first-touch and last-touch fields on every CRM opportunity. The full schema is documented in the CRM Field Schema section below.

Step 1 is done when: A test signup populates the self-report field, the GA4 channel group separates the four AI platforms, and a test opportunity in the CRM shows both first-touch and last-touch values.

Step 2: Build the Bottom of the Funnel

Once attribution is wired, ship the 5 to 10 commercial-intent pages a SaaS company at $5M+ ARR should have on the site: comparison pages, alternative pages, integration pages, use-case pages, and a pricing page. These are the pages that convert at 10% to 20% and produce the first attributable pipeline.

Each page applies the four-element BOFU structure described in the Google Layer section above. Direct answer to the comparison question first. Side-by-side comparison in the first scroll. Three named sales objections addressed. CTA matched to page intent. The pages should be written before any new TOFU content.

Step 2 is done when: The 5 to 10 commercial-intent pages are live, indexed, and internally linked from at least three other pages each. First long-tail BOFU rankings begin appearing in Google Search Console.

Step 3: Win the AI Search Shortlist

With BOFU shipped, the AI work has surface area to attach to. Run 30 to 50 buyer-intent prompts across ChatGPT, Perplexity, Claude, and Gemini to baseline current visibility. Define the three to five category entities the brand should own. Map every page on the site against those entities. Push for off-site citation capture on G2, Capterra, category review sites, and relevant Reddit threads.
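A baseline run like this can be tallied with a short script. The sketch below assumes the answers were collected by hand or exported to a list. It computes a simple per-platform mention rate, which is not the AI Presence Score (that composite also weights position, sentiment, and breadth). The function name and tuple layout are hypothetical.

```python
from collections import Counter

PLATFORMS = ("ChatGPT", "Perplexity", "Claude", "Gemini")


def mention_rates(results, brand):
    """Share of recorded answers per platform that mention the brand.

    results: iterable of (platform, prompt, answer_text) tuples.
    Uses a case-insensitive substring check, which is crude: it can
    miss misspellings and can match unrelated words that contain the
    brand name.
    """
    asked = Counter()
    mentioned = Counter()
    for platform, _prompt, answer in results:
        asked[platform] += 1
        if brand.lower() in answer.lower():
            mentioned[platform] += 1
    # Only report platforms that were actually tested.
    return {p: mentioned[p] / asked[p] for p in PLATFORMS if asked[p]}


if __name__ == "__main__":
    # Hypothetical recorded answers for illustration only.
    sample = [
        ("ChatGPT", "best tools for X", "Top picks: Acme and ExampleBrand."),
        ("ChatGPT", "X alternatives", "Consider Acme."),
        ("Perplexity", "best tools for X", "ExampleBrand is widely cited."),
    ]
    print(mention_rates(sample, "ExampleBrand"))
```

Re-running the same prompt set each month against the same tally gives a consistent trend line, even if the absolute numbers are rough.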

The methodology underneath this is published in the LLM SEO guide. The principle is simple: AI models cite brands that are described consistently across many third-party sources. Self-description does not move the needle. A practical execution layer for this step lives in the LLM SEO checklist, which sequences entity work, citation capture, and content structure into a runnable list.

Step 3 is done when: Baseline AI Presence Score is recorded, owned entities are defined and documented, and the first attributable AI citation movement appears on Perplexity (typically the fastest platform to move).

Step 4: Measure, Report, and Compound

By month 3, the program has data. By month 6, the data starts showing pipeline. The fourth step is converting that data into a board-ready report that protects the budget and compounds the work.

Report sourced and influenced pipeline separately. Lead with AI Presence Score and branded search lift, not traffic. Run a monthly closed-won keyword audit (Step 4 in itself, repeated forever) to keep the content calendar pointed at what real buyers search for. Add new BOFU pages and supporting cluster content in the entity directions that produced the most lift in months 1 to 3.

Step 4 is done when: It never is. This is the compounding phase. Year 2 content ranks faster than Year 1 content because domain authority and entity density have accrued. New AI citation work moves faster because the brand is now in the model’s working set. The B2B SaaS SEO pipeline becomes a self-reinforcing engine, and the quarterly report becomes a defensible revenue line item the CFO sees the same way she sees paid acquisition.

Three Companies, Three States: What the Benchmark Actually Looks Like

The 50-brand 2026 benchmark dataset reveals three distinct profiles a B2B SaaS company can be in right now. The dashboard each company looks at every Monday morning is radically different.

Clio (AI Presence Score: 89, top of benchmark). Strong on Google, dominant in AI search. When a buyer asks ChatGPT for the best legal practice management software, Clio is named first. The company gets the AI mention, then the branded Google search, then the branded direct visit, then the demo. Their dashboard shows healthy organic traffic, a strong branded search line, and a clean attribution trail because they wired the loop deliberately. The leverage point for Clio is defending the position by maintaining citation density and entity reinforcement, not building it.

A category leader strong on Google but absent from AI (a profile representing roughly 22 of the 50 brands in the benchmark). Organic traffic charts look healthy. Domain authority is high. Demo volume is gradually compressing without an obvious cause. The pipeline conversation in board meetings keeps returning to “lead quality.” The actual problem is that competitors are being shortlisted inside ChatGPT for category queries, and the brand is not. The leverage point here is entity optimization and citation engineering, not more content.

LeadSquared (AI Presence Score: 2, bottom of benchmark). Active marketing investment, real product in market, almost completely invisible across ChatGPT, Perplexity, Claude, and Gemini for category-defining queries. Traffic looks fine on Google. Demo requests come from paid and direct. The brand is functionally absent from any buying conversation that starts inside an AI tool, which in 2026 is the majority of buying conversations for the marketing automation category. The leverage point is foundational entity work, ground-up category positioning, and aggressive third-party citation capture.

A reader looking at these three profiles should be able to identify which one their company resembles within 60 seconds. The remediation paths are different.

Mini Case: How Gumlet Turned ChatGPT Mentions Into 20% of Inbound Revenue

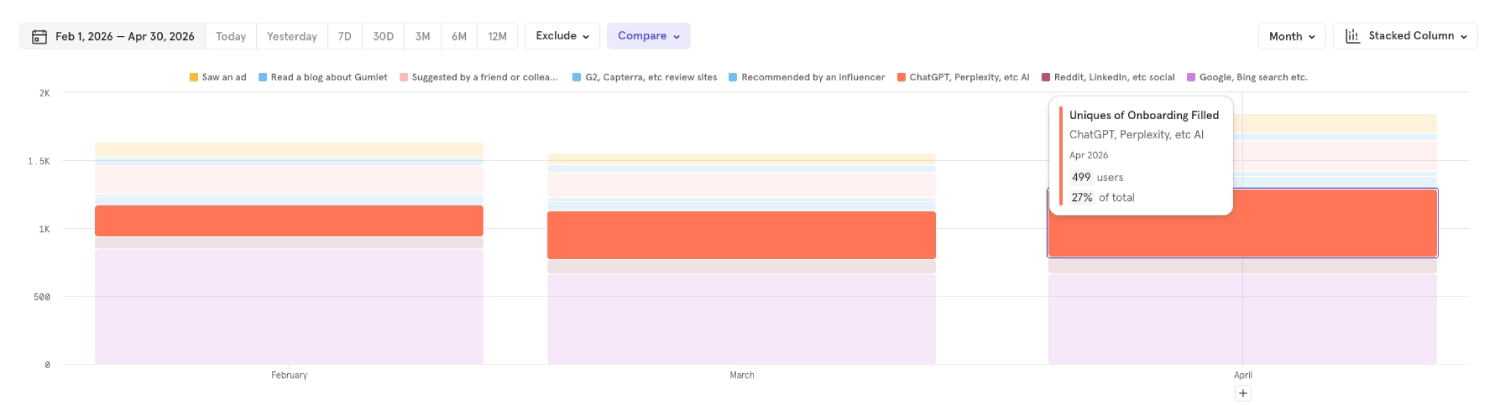

In April 2026, Gumlet got 27% of their inbound revenue from ChatGPT, Perplexity, Claude, etc.

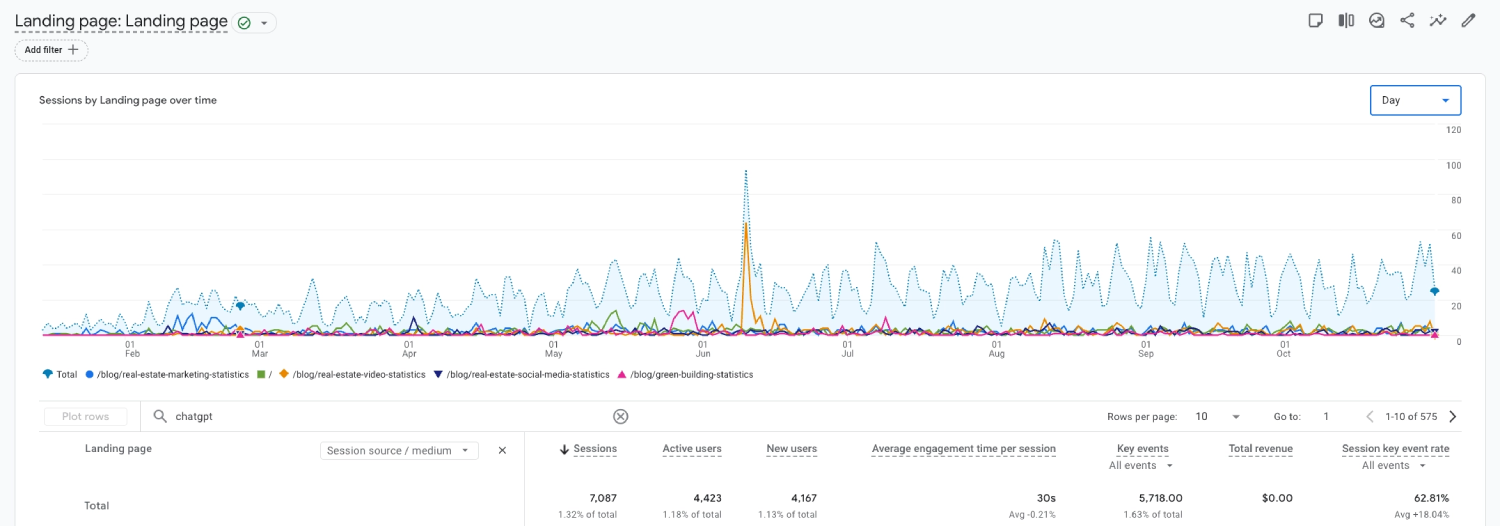

DerivateX has run this loop across multiple SaaS verticals including the REsimpli case study, where the brand became the #1 ChatGPT citation for real estate CRM in 90 days. But the cleanest working example is Gumlet, because the attribution layer was wired in from day one.

The numbers are specific, too. Between early and late 2025, working with DerivateX, Gumlet restructured content and measurement around the dual-index model. One in five inbound conversions now comes from users who first discovered the brand inside an AI tool.

The diagnostic. Gumlet ranked well on Google but was absent from ChatGPT responses for “best video hosting platform” and “Vimeo alternatives.” Competitors owned the shortlist. Traffic looked healthy. Category-defining AI queries returned every competitor name except theirs.

The work. Entity optimization across category terms like secure video hosting, Vimeo alternative, and SaaS video infrastructure. Money-page rewrites on /video-hosting, /pricing, and /ai-subtitle-generator around conversational phrasing buyers use inside ChatGPT, not the keyword-tool phrasing most SEO briefs produce.

What almost did not work. The first six weeks of entity work positioned Gumlet around “developer-friendly video infrastructure.” ChatGPT kept categorizing them as a Vimeo competitor instead of a category-of-one, which meant the brand kept being mentioned alongside competitors but never as the recommended choice. The fix was narrowing the entity to “secure video hosting for SaaS,” which has tighter category boundaries and clearer use-case differentiation. Citations started moving within four weeks of the narrower positioning.

The attribution layer. An onboarding survey field asking new signups where they first heard about Gumlet, cross-referenced with Google Search Console (GSC) and session data in Mixpanel. That cross-reference produced the counterintuitive finding that opens this piece: 83% of users (roughly 4 in 5) who self-reported discovering Gumlet through AI actually arrived on-site through Google or direct traffic, not through a ChatGPT referral link. The AI created the awareness. Google and direct captured the click. Gumlet closed the deal.
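The cross-reference logic itself is simple. The following is a minimal sketch, not Gumlet's actual implementation: it assumes each signup record carries a free-text self-report answer and the referrer host of the converting session. The field names and keyword list are hypothetical.

```python
# Keywords that mark a self-report answer as AI discovery (assumption).
AI_NAMES = ("chatgpt", "perplexity", "claude", "gemini")

# Referrer hosts that would indicate a trackable AI click.
AI_REFERRERS = {"chatgpt.com", "perplexity.ai", "claude.ai", "gemini.google.com"}


def awareness_click_gap(signups):
    """Share of AI-aware signups that did NOT arrive via an AI referrer.

    signups: list of dicts with 'self_report' (free text) and
    'referrer_host' (string, or None for direct traffic).
    """
    ai_aware = [
        s for s in signups
        if any(name in s["self_report"].lower() for name in AI_NAMES)
    ]
    if not ai_aware:
        return 0.0
    untracked = [s for s in ai_aware if s["referrer_host"] not in AI_REFERRERS]
    return len(untracked) / len(ai_aware)


if __name__ == "__main__":
    # Hypothetical records for illustration only.
    sample = [
        {"self_report": "Found you on ChatGPT", "referrer_host": "www.google.com"},
        {"self_report": "chatgpt", "referrer_host": None},
        {"self_report": "Perplexity answer", "referrer_host": "perplexity.ai"},
        {"self_report": "A colleague", "referrer_host": "www.google.com"},
    ]
    print(f"{awareness_click_gap(sample):.0%} of AI-aware signups had no AI referrer")
```

A dashboard that reads only `referrer_host` would count one AI-sourced signup in this sample. The self-report field shows three.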

The numbers that mattered. 550 AI-aware users over a two-month window, a 2.3x higher activation rate than the average organic user, a 62% lift in branded search volume, and roughly 20% of inbound conversions sourced from AI discovery.

What it actually took to ship. 17 deep precision pages built or rewritten over four months. Two engineers on schema and site architecture for roughly three weeks of total time. One DerivateX strategist running entity, citation, and content direction. One writer producing money pages and supporting cluster content. The total program was tight, deliberate, and far smaller in volume than a traditional SEO content engagement.

The architectural takeaway is the attribution layer. Without the self-report field and the cross-reference logic, the 20% pipeline contribution would have been invisible, which is the exact state most SaaS companies are in right now. The full breakdown is published as the Gumlet case study.

How to Measure SEO ROI and AI Search Attribution for SaaS

Attribution does not need to be perfect to be useful. It needs to be directional, auditable, and tied to revenue records in the CRM. That is the entire standard for SEO ROI in SaaS. Programs that chase perfect attribution rarely ship anything. Programs that ship directional attribution prove ROI within two quarters.

How to Measure SEO Pipeline in SaaS (4 Required Steps)

- Track sourced pipeline. First meaningful touch credit, by channel, reported in dollars at the opportunity level.

- Track influenced pipeline. Every channel that appeared in the buyer’s journey before close, multi-touch credit applied based on sales cycle length.

- Use self-reported attribution. Single onboarding-form field capturing where new signups first heard about the brand. Most reliable signal for AI discovery.

- Map AI discovery sources separately. Custom GA4 channel groups isolating ChatGPT, Perplexity, Claude, and Gemini referrals. Required because AI traffic does not appear correctly in default GA4 channel groupings.

Each of the four is described in detail below.

Separate Sourced Pipeline From Influenced Pipeline

Sourced pipeline is the first meaningful touch that created the opportunity. Influenced pipeline is every organic or AI touch that appeared anywhere in the buyer’s journey before close. These numbers tell two different stories, and both belong in a board deck.

A CFO will want sourced numbers to allocate budget. A CMO will want influenced numbers to understand strategy and channel interaction. Reporting only one hides the real picture. Reporting both makes the search program auditable, which is the difference between a channel that gets cut and a channel that gets funded.
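Both numbers can come from the same opportunity export. The sketch below assumes a simplified record shape (`amount`, `first_touch`, `touches`) and a deliberately simple influence rule in which every channel that touched a deal is credited with the full amount. Real programs may weight touches differently, so treat this as an illustration only.

```python
from collections import defaultdict


def pipeline_by_channel(opportunities):
    """Return (sourced, influenced) pipeline dollars per channel.

    sourced: the full amount goes to the first-touch channel only.
    influenced: the full amount goes to every distinct channel that
    appeared in the journey, so influenced totals can exceed the sum
    of deal amounts.
    """
    sourced = defaultdict(float)
    influenced = defaultdict(float)
    for opp in opportunities:
        sourced[opp["first_touch"]] += opp["amount"]
        for channel in set(opp["touches"]):
            influenced[channel] += opp["amount"]
    return dict(sourced), dict(influenced)


if __name__ == "__main__":
    # Hypothetical opportunities for illustration only.
    opps = [
        {"amount": 30000, "first_touch": "AI Search",
         "touches": ["AI Search", "Organic Search", "Direct"]},
        {"amount": 20000, "first_touch": "Paid",
         "touches": ["Paid", "Organic Search"]},
    ]
    sourced, influenced = pipeline_by_channel(opps)
    print("sourced:", sourced)
    print("influenced:", influenced)
```

In this sample, organic search sources nothing but influences every deal, which is exactly the picture a sourced-only report hides.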

The Three-Layer AI Search Attribution Stack

A minimum viable AI search attribution setup for a B2B SaaS at $5M+ ARR has three layers. None of them are expensive. All of them take effort to wire in correctly.

- Self-reported discovery. A single onboarding-form field asking new signups where they first heard about the brand. Still the highest-signal source for AI-aware discovery, and the only way to catch the roughly 4 out of 5 ChatGPT-influenced buyers who arrive without a trackable AI referrer.

- GA4 channel groups. A custom AI Search channel group that isolates perplexity.ai, chatgpt.com, claude.ai, and gemini.google.com referrals. This is a one-hour setup that most teams still have not done.

- CRM attribution fields. Opportunity-level fields populated from both self-report and session data, with multi-touch credit distributed across the buyer journey.
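
The channel group itself is configured in the GA4 admin interface, but the same matching logic is useful for tagging raw session logs. A minimal sketch, assuming referrers are available as full URLs; the function name and the `"Other"` fallback label are illustrative choices, not GA4 settings.

```python
import re
from urllib.parse import urlparse

# Hostnames taken from the list above; extend as new assistants appear.
AI_REFERRER_PATTERN = re.compile(
    r"(^|\.)(perplexity\.ai|chatgpt\.com|claude\.ai|gemini\.google\.com)$"
)


def classify_referrer(referrer_url: str) -> str:
    """Return 'AI Search' for known AI assistant referrers, else 'Other'."""
    # An empty or malformed referrer has no hostname and falls through to 'Other'.
    host = (urlparse(referrer_url).hostname or "").lower()
    return "AI Search" if AI_REFERRER_PATTERN.search(host) else "Other"


print(classify_referrer("https://www.perplexity.ai/search?q=video+hosting"))
print(classify_referrer("https://www.google.com/"))
```

Anchoring the pattern at the end of the hostname keeps lookalike domains from being counted as AI traffic while still matching subdomains such as `www.perplexity.ai`.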

The exact field structure and the broader SEO revenue attribution model walk-through live on our measuring AI search ROI use-case page.

The CRM Field Schema That Actually Works

Most SaaS companies miss attribution because the CRM fields they need do not exist. Six fields, populated correctly, will make 90% of pipeline auditable. Naming and population logic matter more than the tool.

| Field name | Type | Values or population logic |
|---|---|---|
| Lead Source – First Touch | Picklist | Organic Search, AI Search, Paid, Direct, Referral, Social, Other |
| AI Discovery Channel | Picklist (conditional on AI Search) | ChatGPT, Perplexity, Claude, Gemini, Other AI, Don’t remember |
| Self-Reported Discovery | Free text | Populated from onboarding form response |
| First-Touch URL | URL | Auto-populated from session data |
| Influencing Touches | Multi-picklist | Every channel and page touched pre-conversion |
| Sourced Pipeline Channel | Calculated | Derived from First Touch logic |
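
For teams that prototype the schema before touching the CRM, the six fields can be modeled roughly as follows. This is a sketch under assumptions: the class and attribute names are hypothetical, and the calculated field is assumed to be a direct copy of the first-touch value, which a real CRM formula may refine.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class LeadSource(Enum):
    ORGANIC_SEARCH = "Organic Search"
    AI_SEARCH = "AI Search"
    PAID = "Paid"
    DIRECT = "Direct"
    REFERRAL = "Referral"
    SOCIAL = "Social"
    OTHER = "Other"


class AIChannel(Enum):
    CHATGPT = "ChatGPT"
    PERPLEXITY = "Perplexity"
    CLAUDE = "Claude"
    GEMINI = "Gemini"
    OTHER_AI = "Other AI"
    DONT_REMEMBER = "Don't remember"


@dataclass
class OpportunityAttribution:
    lead_source_first_touch: LeadSource
    ai_discovery_channel: Optional[AIChannel] = None
    self_reported_discovery: str = ""
    first_touch_url: str = ""
    influencing_touches: list[str] = field(default_factory=list)

    def __post_init__(self):
        # Conditional picklist: only valid when the first touch is AI Search.
        if (self.ai_discovery_channel is not None
                and self.lead_source_first_touch is not LeadSource.AI_SEARCH):
            raise ValueError("AI Discovery Channel requires AI Search first touch")

    @property
    def sourced_pipeline_channel(self) -> str:
        # Calculated field; assumed here to mirror the first-touch value.
        return self.lead_source_first_touch.value


record = OpportunityAttribution(
    lead_source_first_touch=LeadSource.AI_SEARCH,
    ai_discovery_channel=AIChannel.CHATGPT,
    self_reported_discovery="ChatGPT",
)
print(record.sourced_pipeline_channel)
```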

The Verbatim Onboarding Question

The wording of the self-report field decides whether the data is useful. “How did you hear about us?” is too generic and produces unhelpful free-text answers that nobody categorizes.

The wording that worked for Gumlet:

“Where did you first come across [Company]?”

Options: ChatGPT / Perplexity / Claude / Gemini / Google search / A friend or colleague / Social media (LinkedIn, Twitter, etc.) / Podcast or YouTube / Industry publication / Other (please specify)

Two design notes matter. The four AI platforms are listed as separate options, not lumped under “AI assistants,” because users select what they actually used. Google is also a single option to surface the AI-then-Google pattern: a user who selects ChatGPT here but whose session log shows a Google referrer is a high-signal data point for the cross-reference analysis.
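
The cross-reference itself is a small join between the self-report answer and the session referrer. A minimal sketch, assuming each signup is a dictionary with hypothetical `self_report` and `referrer` keys; the sample rows are made up and do not reproduce Gumlet's data.

```python
from urllib.parse import urlparse

AI_OPTIONS = {"ChatGPT", "Perplexity", "Claude", "Gemini"}
AI_HOSTS = ("chatgpt.com", "perplexity.ai", "claude.ai", "gemini.google.com")


def arrived_via_ai(referrer: str) -> bool:
    """True when the session referrer is one of the known AI hosts."""
    host = (urlparse(referrer).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_HOSTS)


def hidden_ai_share(signups) -> float:
    """Share of AI self-reporters whose session did not carry an AI referrer."""
    ai_reported = [s for s in signups if s["self_report"] in AI_OPTIONS]
    if not ai_reported:
        return 0.0
    hidden = [s for s in ai_reported if not arrived_via_ai(s["referrer"])]
    return len(hidden) / len(ai_reported)


# Made-up sample rows for illustration only.
signups = [
    {"self_report": "ChatGPT", "referrer": "https://www.google.com/"},
    {"self_report": "ChatGPT", "referrer": ""},  # direct visit, no referrer
    {"self_report": "Perplexity", "referrer": "https://www.perplexity.ai/"},
    {"self_report": "Google search", "referrer": "https://www.google.com/"},
]
print(hidden_ai_share(signups))
```

The resulting share is the portion of AI-driven discovery that a referrer-only dashboard would credit to Google or direct traffic.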

The Multi-Touch Attribution Decision Rule

W-shaped versus time-decay attribution is usually argued in the abstract. The decision rule is actually simple and depends on sales cycle length.

- Sales cycles under 90 days: First-touch and last-touch heavy (40/60 split). The middle of the journey matters less because there is less of it.

- Sales cycles 90 to 180 days: W-shaped attribution (40% first touch, 40% last touch, 20% distributed across middle touches).

- Sales cycles over 180 days: Time-decay with a 30-day half-life. Touches closer to close get progressively more credit, but early touches do not get zeroed out.

Pick the rule once, document it in the CRM operations notes, and stop arguing about it. Directional accuracy across one consistent rule beats perfect accuracy that nobody trusts.
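
Written as code, the decision rule looks roughly like this. It is a sketch with labeled assumptions: the 40/60 split is read as 40% first touch and 60% last touch with no middle credit, cycles of exactly 90 or 180 days are treated as W-shaped, and weights are renormalized when a journey has no middle touches. The function name and input shape are hypothetical.

```python
def touch_weights(days_before_close, cycle_days):
    """Return one credit weight per touch, summing to 1.0.

    days_before_close: days between each touch and close, ordered from
    first touch to last touch.
    """
    n = len(days_before_close)
    if n == 0:
        return []
    if n == 1:
        return [1.0]

    if cycle_days > 180:
        # Time-decay: credit halves for every 30 days a touch sits before close.
        raw = [0.5 ** (d / 30) for d in days_before_close]
    else:
        if cycle_days < 90:
            first, last, middle = 0.4, 0.6, 0.0  # assumed reading of "40/60"
        else:
            first, last, middle = 0.4, 0.4, 0.2  # W-shaped
        raw = [0.0] * n
        raw[0], raw[-1] = first, last
        for i in range(1, n - 1):
            # Middle credit is shared evenly across the middle touches.
            raw[i] = middle / (n - 2)

    # Renormalize so weights always sum to 1.0, including the two-touch
    # W-shaped case where the middle credit has nowhere to go.
    total = sum(raw)
    return [w / total for w in raw]


print(touch_weights([80, 40, 0], cycle_days=80))
print(touch_weights([150, 100, 50, 0], cycle_days=150))
print(touch_weights([60, 30, 0], cycle_days=200))
```

Multiplying each weight by the opportunity amount gives the dollar credit per touch, and the weights sum to 1.0 under every branch so the credited dollars always reconcile to the opportunity total.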

What Actually Appears in Your CRM When This Works

The Gumlet engagement, cross-referenced with patterns from the broader 2026 benchmark dataset, produced the following 90-day signals on a working program. Treat these as a directional benchmark, not a guarantee.

- Branded search volume rising 30% to 80% quarter over quarter.

- Self-reported “found you on ChatGPT” entries appearing in 10% to 25% of new signups.

- Opportunity records tagged with organic or AI Search as the first meaningful touch on 20% to 40% of closed-won deals.

- Sales cycle length dropping on AI-sourced deals by 15% to 30%, because the buyer arrived pre-educated by the AI before the first demo.

The shortened sales cycle is one of the most underreported benefits of AI visibility work and one of the easiest to defend in a budget review.

How Long Does B2B SaaS SEO Take to Generate Pipeline? (The Honest Timeline)

Meaningful pipeline contribution lands between months 6 and 12 for most B2B SaaS companies. AI search citation moves faster because real-time indexing on Perplexity and retrieval-based answers on ChatGPT do not wait for a full Google ranking cycle.

Months 1 to 3: Foundation and instrumentation. Technical SEO, BOFU page audit, CRM attribution wiring, and AI visibility baseline across the top 30 to 50 buyer prompts. Zero pipeline expected in this window. Any agency promising pipeline in the first 90 days is almost certainly counting branded search or bidding on the company’s own name.

Months 3 to 6: Early signal. Long-tail BOFU rankings begin to climb. The first self-reported ChatGPT discoveries appear in onboarding. Branded search rises. First attributable demos arrive from organic, usually in low double digits per month.

Months 6 to 12: Compounding. BOFU pages hit page one for priority terms. AI citations stabilize across ChatGPT, Perplexity, and Gemini. Organic contribution becomes a named line item in board reports. Sales cycle shortens on organic-sourced deals. This is the inflection point where the SaaS inbound pipeline starts to compound rather than accumulate linearly.

Months 12 to 18: Engine. Organic and AI become the lowest-CAC acquisition channels. The content library compounds. New content ranks faster because domain authority and entity density have accrued. Cost per lead from organic typically sits 40% to 70% below the cost per lead from paid.

A full month-by-month sequence is published in the AI SEO roadmap, and the revenue side can be modeled against real conversion assumptions with the SaaS SEO revenue projection calculator.

The Starting Point: What to Build in Your First 90 Days

The first 90 days are about instrumentation and the bottom of the funnel. Content volume comes later. The plan below is the actual sequence DerivateX ran with Gumlet, broken into the four delivery windows that matter.

Week 1: Attribution Wiring

The instrumentation has to land first because everything that ships afterward depends on the data this layer produces.

- Deliverable: Self-report field added to signup or onboarding flow, custom GA4 AI Search channel group configured, first-touch and last-touch attribution fields added to CRM opportunity records.

- Owner: Marketing operations, with one engineer for the form change.

- Dependency: Signup flow access, GA4 admin access, CRM admin access. None of these should take more than 24 hours to coordinate.

- Done definition: A test signup populates the self-report field, the GA4 channel group separates the four AI platforms, and a test opportunity in the CRM shows both first-touch and last-touch values.

Weeks 2 to 4: BOFU Audit and First Money-Page Rewrites

This is where the first pipeline-eligible work ships. The 90-day window cannot generate meaningful pipeline if the bottom of the funnel is not in place.

- Deliverable: Audit of the 5 to 10 commercial-intent pages that should exist (comparison, alternative, integration, pricing, use-case). Rewrites of the 2 to 3 highest-traffic pages with the four-element BOFU structure described earlier in this piece.

- Owner: SEO lead and one writer.

- Dependency: Closed-won keyword audit (pull the queries from the last 20 closed-won opportunities) and competitor BOFU page review.

- Done definition: Rewrites published and indexed, with internal links from at least three existing pages each.

Weeks 5 to 8: AI Visibility Baseline and Citation Engineering Pass

By the end of this window, the company has its first benchmark of where it stands in AI search and the first round of fixes shipped against it.

- Deliverable: Run 30 to 50 buyer-intent prompts across ChatGPT, Perplexity, Claude, and Gemini. Record where the brand appears, where it does not, and which competitors win the shortlist. Initial citation engineering pass: define the three to five category entities the brand should own, then map every page on the site against those entities. Schema markup audit.

Teams that want to go further on AI accessibility should also review the llms.txt guide and consider implementing the file as part of the same audit window.

- Owner: SEO lead and one strategist for entity work.

- Dependency: Completed BOFU rewrites from the previous window (so the entity reinforcement has surface area to attach to).

- Done definition: Baseline AI Presence Score recorded, three to five owned entities defined and documented, top 5 entity-reinforcing pages identified for the next window.

A simple starting point for the baseline is the free AI visibility audit or the AI visibility checker directly against the domain.

Weeks 9 to 12: Cluster Content and Off-Site Citation Capture

The first compounding work ships in this window. By day 90, the brand should have its first measurable citation movement on at least one AI platform.

- Deliverable: 3 to 5 supporting cluster pieces around the owned entities, off-site citation capture push (G2, Capterra, category review sites, relevant Reddit threads), and the first cross-reference of self-report data against session logs.

- Owner: Writer for cluster content, marketing lead for off-site capture, marketing operations for the data cross-reference.

- Dependency: Owned entities defined in the previous window.

- Done definition: First attributable AI citation movement on Perplexity (typically the fastest platform to move), the first cohort of self-reported AI-discovery signups documented, and the cross-reference logic producing the first version of the AI-aware-but-Google-arrived insight that drove the original Gumlet case.

By the end of week 12, the company has instrumentation, BOFU pages, an entity architecture, an AI baseline with first measurable movement, and the data cross-reference that proves the program is working.

What Good B2B SaaS SEO Pipeline Performance Looks Like

Good performance is measured in sourced pipeline, influenced pipeline, AI share of voice, and payback period. Everything else is a leading indicator, not a success metric.

| Metric | Healthy range for $5M+ ARR B2B SaaS | Unhealthy range |
|---|---|---|
| Organic sourced pipeline (% of total) | 20% to 40% after month 12 | Below 10% after month 12 |
| Organic influenced pipeline (% of total) | 50% to 75% after month 12 | Below 25% after month 12 |
| AI Presence Score (DerivateX 2026 benchmark) | Target 60+, category leaders sit 80+ | Below 40 (44% of benchmark companies score below 50) |
| Mention rate (out of 30 across 4 AI platforms) | 18+ for top scorers | Below 5 (under-50 scorers average 3.0) |
| Branded search lift (quarter over quarter) | +15% to +60% | Flat or declining |
| Self-reported AI discovery | 10% to 25% of new signups | Below 3% of new signups |
| Organic CAC vs. paid CAC | Organic CAC 40% to 70% lower by month 12 | Within 20% of paid CAC |
| SEO payback period | 6 to 12 months on well-run programs | 18+ months or unprovable |

A note on the mention rate number, because it is the most actionable data point in the table. The 2026 benchmark found that companies scoring 60 or above averaged 18.8 out of 30 on mention frequency, while companies scoring below 35 averaged 3.0. That 15.8-point gap is almost the entire story of LLM visibility at the B2B SaaS level.

The sentiment layer is nearly uniform across the dataset. 44 of the 50 companies scored 19 or 20 out of 20. Brands are not being described negatively by AI. They are just not being described often enough.

Keyword count, raw traffic growth, and domain authority are operations metrics. They belong in a team review, not a board deck. A CMO who leads with an AI Presence Score and a sourced pipeline number will keep their search budget. A CMO who leads with traffic growth will watch it get cut the next time the CFO needs to find margin.

Four Failure Modes That Kill SEO Lead Generation for B2B SaaS

These are the four patterns that show up most often when a SaaS company has invested in SEO for 12 to 18 months and still cannot prove pipeline. Each one is solvable. None of them are obvious from a rankings dashboard. Each comes with a 5- to 15-minute diagnostic the reader can run before reading the fix.

Failure Mode 1: Ranking for Keywords Your Buyer Never Searches

Symptom: Pages ranking on page one for terms that sound related but do not map to buying intent: “How to improve team productivity” instead of “Monday alternative for product teams.” Traffic looks great, but pipeline does not move.

Diagnostic (5 minutes): Open the top 10 ranking pages in Google Search Console. Filter to queries that drove those impressions. Open the CRM and pull the queries from the last 20 closed-won opportunities (sales notes or onboarding forms). Compare the two lists. If overlap is below 30%, the program is ranking for the wrong things.
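
The comparison can be scripted once both lists are exported. A rough sketch, assuming overlap means the share of closed-won queries that also appear among the ranking queries, matched exactly after lowercasing and whitespace cleanup. Real queries usually need fuzzier matching, and the sample queries below are invented.

```python
def normalize(query: str) -> str:
    """Lowercase and collapse whitespace so trivial variants match."""
    return " ".join(query.lower().split())


def query_overlap(ranking_queries, closed_won_queries) -> float:
    """Share of closed-won queries that the site already ranks for."""
    ranking = {normalize(q) for q in ranking_queries}
    won = {normalize(q) for q in closed_won_queries}
    if not won:
        return 0.0
    return len(won & ranking) / len(won)


# Invented sample lists for illustration only.
ranking_queries = [
    "how to improve team productivity",
    "Monday alternative for product teams",
    "what is agile planning",
]
closed_won_queries = [
    "monday alternative for product teams",
    "best roadmap tool for product teams",
    "roadmap software with jira integration",
    "product planning tool pricing",
]
overlap = query_overlap(ranking_queries, closed_won_queries)
print(f"{overlap:.0%}", "wrong keywords" if overlap < 0.30 else "aligned")
```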

Fix: Audit every ranking page against the actual search queries closed-won customers used in the 90 days before buying. Ask the sales team. Pull from the CRM. The list will look nothing like the keyword tool’s suggestions.

Failure Mode 2: Invisible in AI Search While Competitors Own the Shortlist

Symptom: Google rankings are strong, but demo requests are declining. Ask ChatGPT “best [your category] for [your ICP]” and the brand is missing from the answer. The 2026 benchmark found this pattern in 44% of the 50 B2B SaaS companies tested. This competitor-showing-up-in-ChatGPT pattern is the second most common diagnostic on discovery calls, after the traffic-decline pattern.

Diagnostic (10 minutes): Run these five prompts in ChatGPT, Perplexity, Claude, and Gemini, swapping the bracketed terms for category and ICP.

- “Best [category] software for [ICP] in 2026.”

- “Top alternatives to [your largest competitor] for [ICP].”

- “What [category] tools should a [ICP role] consider?”

- “Compare leading [category] platforms for [specific use case].”

- “Which [category] tool has the best [your strongest differentiator]?”

Count how often the brand appears in the top three named tools. Below 8 mentions across the 20 query-platform pairs is an AI invisibility problem.
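
Once the 20 answers are recorded, the tally is simple to automate. A minimal sketch, assuming each answer has been reduced by hand to an ordered list of the tools it names; the brand "Acme", the rival names, and the results dictionary are placeholders.

```python
PLATFORMS = ["ChatGPT", "Perplexity", "Claude", "Gemini"]


def mention_count(results, brand: str) -> int:
    """Count query-platform pairs where the brand is in the top three tools.

    results maps (prompt_id, platform) to the ordered list of tools named
    in that answer.
    """
    brand = brand.lower()
    return sum(
        1 for tools in results.values()
        if brand in [t.lower() for t in tools[:3]]
    )


def has_invisibility_problem(results, brand: str, threshold: int = 8) -> bool:
    """Apply the below-8-of-20 rule from the diagnostic above."""
    return mention_count(results, brand) < threshold


# Placeholder data: the brand makes the top three only on Perplexity.
results = {
    (prompt_id, platform): (
        ["Acme", "Rival A", "Rival B"] if platform == "Perplexity"
        else ["Rival A", "Rival B", "Rival C", "Acme"]
    )
    for prompt_id in range(1, 6)
    for platform in PLATFORMS
}
print(mention_count(results, "Acme"), has_invisibility_problem(results, "Acme"))
```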

Fix: Entity optimization, deliberate citation engineering, and a content strategy built around the questions buyers ask AI tools, which are conversational and context-rich, not the keyword phrases they type into Google. The full methodology is covered in the LLM SEO guide.

Failure Mode 3: No Attribution Layer in the CRM

Symptom: The agency report shows traffic and rankings climbing, while the revenue team reports no SEO influence on closed deals. Both reports are accurate. The two datasets just never meet.

Diagnostic (5 minutes): Pull the last 50 closed-won opportunities. Count how many have a populated Lead Source field that includes “organic” or “AI Search.” If under 15%, there is an attribution problem regardless of how SEO is actually performing. If over 60%, the field is being populated lazily and the data is unreliable. Both states are broken.
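
The same check can run against a CRM export. A sketch under assumptions: the input is a plain list of Lead Source values (empty strings or `None` for unpopulated fields), matching is a simple substring test, and the verdict labels are illustrative wording rather than terms from any CRM.

```python
def attribution_health(lead_sources):
    """Return (share, verdict) for organic or AI Search lead-source coverage."""
    if not lead_sources:
        return 0.0, "no data"
    tagged = sum(
        1 for source in lead_sources
        if source and ("organic" in source.lower() or "ai search" in source.lower())
    )
    share = tagged / len(lead_sources)
    if share < 0.15:
        verdict = "under-attributed"   # attribution layer is missing
    elif share > 0.60:
        verdict = "over-attributed"    # field is likely populated lazily
    else:
        verdict = "plausible"
    return share, verdict


# Invented export: 50 closed-won deals, 5 tagged organic, the rest blank or paid.
lead_sources = ["Organic Search"] * 5 + ["Paid"] * 20 + [""] * 25
print(attribution_health(lead_sources))
```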

Fix: Self-reported discovery in onboarding, CRM attribution fields on every opportunity, sourced and influenced pipeline reported separately. Without this, pipeline from search is invisible to finance and gets cut at the next planning cycle, regardless of actual performance.

Failure Mode 4: Producing for Volume Instead of Entity Density

Symptom: 200 thin blog posts published, no topical cluster architecture, low AI citation rate despite high publish cadence. The domain looks busy and says nothing. The benchmark data backs this up: 10 companies in the 50-brand study had perfect sentiment scores but mention rates of 8 out of 30 or lower. Plenty of content, not enough entity reinforcement.

Diagnostic (15 minutes): Take the top 20 published pages by traffic. Read the first 200 words of each. Count how many directly answer a specific question a buyer would ask in the language they would use, with at least one verifiable claim, named example, or data point in the opening. If under 30% pass, the program is producing for volume, not retrieval.

Fix: 20 deep pages beat 200 shallow ones for both Google authority and AI citation probability. Gumlet earned meaningful AI citations with 17 precision pages. Competitors with 150 shallow posts were not showing up at all. Depth beats volume in the AI era because retrieval models reward claim density, not word count.

When This Approach Is Wrong

The dual-index B2B SaaS SEO pipeline is not the right investment for every SaaS company. Three states disqualify the work, and naming them is more honest than the alternative.

Pre-PMF or sub-$2M ARR companies. A pipeline-from-search engine is built on a category positioning that does not yet exist for these companies. Investing in entity work and citation engineering before the company has clear category positioning produces inconsistent signals that AI models cannot anchor to. Validate positioning first. Build the search engine after.

Companies with ACVs under $5K and short sales cycles. The math on a 6 to 12 month payback does not work cleanly when deals close in days and ACV is low four-figure. Paid acquisition with conversion-rate optimization usually outperforms organic search and AI citation work at this profile until volume reaches a threshold where compounding economics matter.

Service businesses, agencies, and consultancies. The dual-index model is built for SaaS buyer behavior: a buying committee researching across multiple platforms over a 90 to 180-day cycle, comparing named products against named competitors. Service businesses sell through different mechanics (referral, founder reputation, in-person trust signals) and the playbook above does not transfer.

A company in any of those three states should defer this work, not run a watered-down version of it. The companies that need this most are $5M+ ARR B2B SaaS with established category positioning, ACVs above $10K, and a buying committee that researches across multiple channels before closing. Vertical-specific applications of the dual-index model are documented across our industries hub.

Frequently Asked Questions

1. What is B2B SaaS SEO pipeline?

B2B SaaS SEO pipeline is the share of a SaaS company’s sales pipeline that originates from organic search and AI search channels, attributed to specific opportunities inside the CRM and reported in dollars. It treats search as a revenue channel governed by the same auditability standards as paid acquisition, instead of treating it as a traffic channel measured in sessions and rankings.

A working B2B SaaS SEO pipeline contributes 20% to 40% of sourced pipeline by month 12 for companies at $5M+ ARR, with organic CAC sitting 40% to 70% below paid CAC.

2. Does SEO actually generate pipeline for B2B SaaS, or is it mostly traffic?

SEO generates pipeline when the program is built around commercial-intent pages and measured against CRM opportunity records, not sessions. Traffic-first programs rarely touch pipeline. Pipeline-first programs typically contribute 20% to 40% of sourced pipeline after month 12.

The difference is architecture and instrumentation, not effort. Most stalled programs are not underfunded. They are pointed at the wrong outcome and missing the attribution layer that would prove what they are actually producing.

3. How long does SEO take to generate revenue for a B2B SaaS company?

Meaningful pipeline contribution typically appears between month 6 and month 12. AI search citation moves faster, with Perplexity showing citation movement in 30 to 90 days and ChatGPT in 60 to 120 days, because real-time retrieval does not wait for a full ranking cycle.

Any agency promising pipeline in under 90 days is almost certainly counting branded search or bidding on the company’s own name. A realistic plan sequences foundation work in months 1 to 3, early signal in months 3 to 6, and compounding contribution from month 6 onward.

4. What is LLM SEO?

LLM SEO (also called Generative Engine Optimization or GEO) is the practice of structuring content, entities, and citations so that ChatGPT, Perplexity, Claude, and Gemini cite the brand when answering buyer-intent queries. It is a separate discipline from Google SEO because the ranking signals are different.

Google rewards authority, link signals, and keyword-aligned pages. LLM SEO rewards entity clarity, citation density, claim extractability, and structural formats like comparison tables and definition-forward answers. A B2B SaaS SEO pipeline in 2026 requires both, which is why DerivateX runs as a combined SaaS SEO and AI SEO agency rather than choosing one discipline.

5. How do you track AI-driven pipeline?

Three layers together. A self-reported discovery field on the onboarding form (the highest-signal source for AI-aware discovery, because most ChatGPT and Perplexity referrals arrive without a trackable referrer). A custom GA4 channel group isolating perplexity.ai, chatgpt.com, claude.ai, and gemini.google.com. CRM opportunity-level fields populated from both sources, with multi-touch credit applied based on sales cycle length.

Report sourced and influenced pipeline separately. Directional accuracy is enough to make budget decisions.

6. Can ChatGPT or Perplexity actually drive paying customers for B2B SaaS?

Both drive measurable customers when buyers pre-shortlist inside the AI and then arrive through Google or direct traffic. Multiple 2026 attribution studies report ChatGPT-referred and Perplexity-referred traffic converting at meaningfully higher rates than baseline Google organic traffic in B2B SaaS, because the buyer arrived already shortlisted by the model.

The catch is attribution. Most AI-driven discovery arrives without a trackable referrer, so self-reported discovery in onboarding forms becomes the single most important signal for proving AI is driving paying customers.

7. How do you measure SEO ROI in B2B SaaS when buyers touch 10+ channels?

Use three layers together. First, a self-reported discovery field in onboarding, which catches AI attribution that referrer data misses. Second, GA4 custom channel groups that isolate AI sources. Third, multi-touch attribution fields in the CRM at the opportunity level, using W-shaped or time-decay credit depending on sales cycle length.

Report sourced pipeline and influenced pipeline separately. Directional accuracy is enough to make budget decisions. Chasing perfect attribution is how programs stall. The goal is auditable, revenue-linked reporting, not a perfect model.

8. Isn’t AI search just a rebrand of SEO? Why does it need separate work?

It is a second index, not a rebrand. Google rewards authority, link signals, and keyword-aligned pages. AI models reward entity clarity, citation density, claim extractability, and structural formats like comparison tables and definition-forward answers.

DerivateX’s 2026 benchmark showed 44% of 50 tested B2B SaaS companies scoring below 50 out of 100 on AI visibility, many of them strong on Google. A page can rank on page one of Google and never show up in a ChatGPT answer because it is not structured for retrieval. Serving both indexes requires different content decisions, different measurement, and different success metrics.

9. What does good B2B SaaS SEO pipeline performance look like in 2026?

Sourced organic pipeline in the 20% to 40% range of total pipeline by month 12, an AI Presence Score of 60 or higher on the DerivateX benchmark, branded search lift of 15% to 60% quarter over quarter, self-reported AI discovery showing up in 10% to 25% of new signups, and organic CAC 40% to 70% lower than paid CAC by month 12.

Payback period lands between 6 and 12 months on well-architected programs. Traffic growth alone is not a success metric because it has no reliable relationship to pipeline on its own.

10. Can I build B2B SaaS SEO pipeline in-house, or do I need an agency?

In-house is viable with a senior SEO lead, a content strategist, a marketing operations person who can wire CRM attribution, and a writer with category expertise. That is a 4-person allocation for a minimum of 6 months before the loop starts producing visible pipeline.

Most $5M ARR SaaS companies do not have that bench available. The honest build-versus-buy decision is whether the company can hire and ramp those four roles faster than a specialist agency can produce results. If yes, build in-house. If no, partner with someone who has done the loop before. Either path beats running a half-staffed program that produces traffic without pipeline. A deeper breakdown of the in-house build path is on our use-case page for SEO teams.

11. What if my category is too small for AI search to matter yet?

The smaller and more vertical the category, the more AI search matters. Niche categories produce a small set of canonical names that LLMs default to in their answers, and becoming one of those canonical names is dramatically easier in a niche category than in a head category like CRM or project management.

A 50-prompt benchmark in a vertical category typically reveals 3 to 5 brands cited consistently and a long tail of brands cited zero times. Moving from zero citations to consistent citation in a niche category is one of the highest-leverage marketing investments available right now, because the competitor set is small and the signal is loud.

12. What does a B2B SaaS SEO pipeline engagement cost in agency fees and internal time?

A specialist B2B SaaS GEO and SEO engagement at $5M+ ARR typically scopes between $5K and $15K per month over 9 to 12 months, with the upper end including more aggressive citation engineering and a heavier money-page production load. Internal time runs 0.25 to 0.5 FTE for a marketing leader to review, approve, and coordinate with sales operations.

The math against paid acquisition is the relevant comparison. By month 12, organic and AI-sourced CAC is typically 40% to 70% lower than paid CAC, which means the agency engagement pays back inside the first year on programs that hit the benchmark ranges in the metrics table above. DerivateX’s full pricing structure and deliverable scope is published transparently.

Conclusion

B2B SaaS SEO pipeline is not a content problem anymore. It is an architecture problem.

The companies generating pipeline from search in 2026 are not the ones with more content or more backlinks. They are the ones who built the attribution layer first, the bottom of the funnel second, and the AI citation work third, in that exact order, and instrumented the loop so finance could audit it.

The specific playbook for marketing leaders running this rebuild is documented on our marketing leaders use case page. To see how DerivateX would architect a B2B SaaS SEO pipeline for a specific category, including the BOFU pages, the AI citation targets, and the attribution layer, book a strategy call. For founders running this without a CMO in seat, the founder-led playbook covers the same architecture from the founder’s perspective.