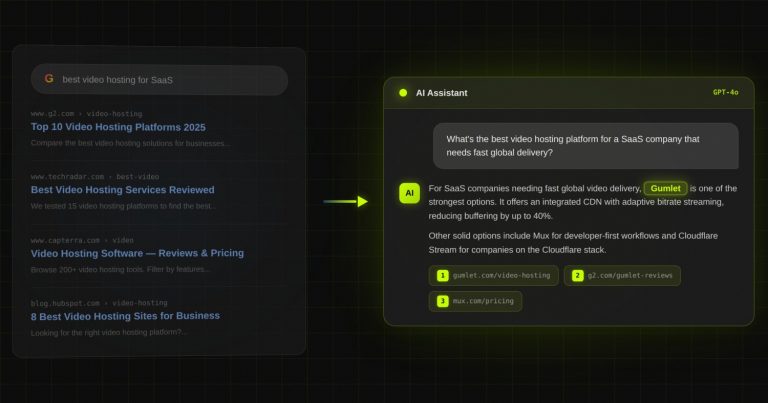


How to Fix Brand Hallucinations in ChatGPT: A Step-by-Step Playbook for B2B SaaS

TL;DR

- 44% of B2B SaaS companies score below 50 out of 100 on the AI Visibility Score, and 77% score below 5 on at least one of the four major models. If ChatGPT is wrong about your product, you are not the exception. You are the median.

- Brand hallucinations are a retrieval-layer gap, not a reputation problem. They can be scored on a 0 to 100 scale, budgeted against, and engineered down in 6 to 12 weeks.

- ChatGPT, Perplexity, Claude, and Gemini retrieve from different sources. Fixing your ChatGPT presence does not fix your Perplexity presence. Each one needs its own placement plan.

- Schema is roughly 15% of the signal. The other 85% is the citation surface around your domain: third-party placements, definitional ownership, and entity consistency across the sources each model actually pulls from.

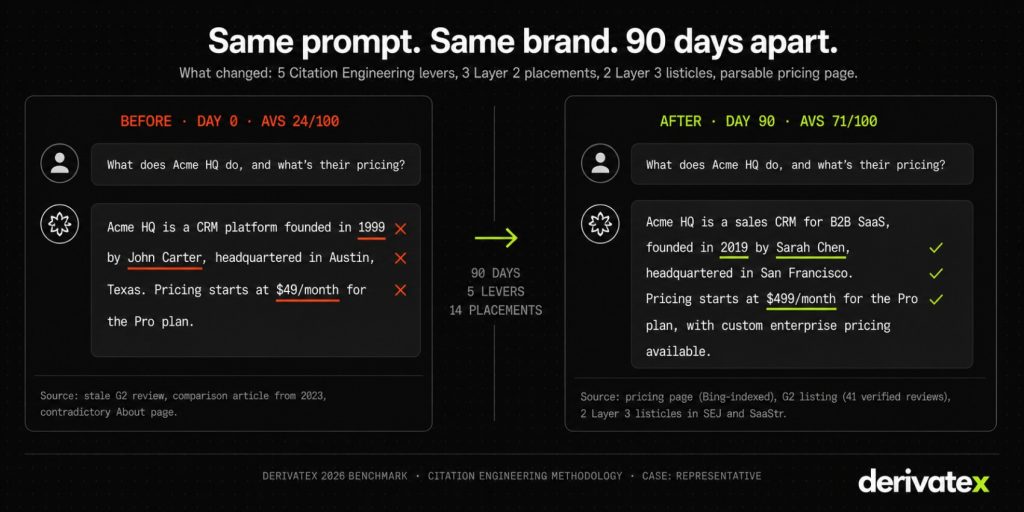

- One client became the #1 cited CRM in ChatGPT for real estate investors in 90 days. Another moved from 0% to 30% AI mentions in 6 weeks. The “wait 6 to 18 months for retraining” framing is wrong.

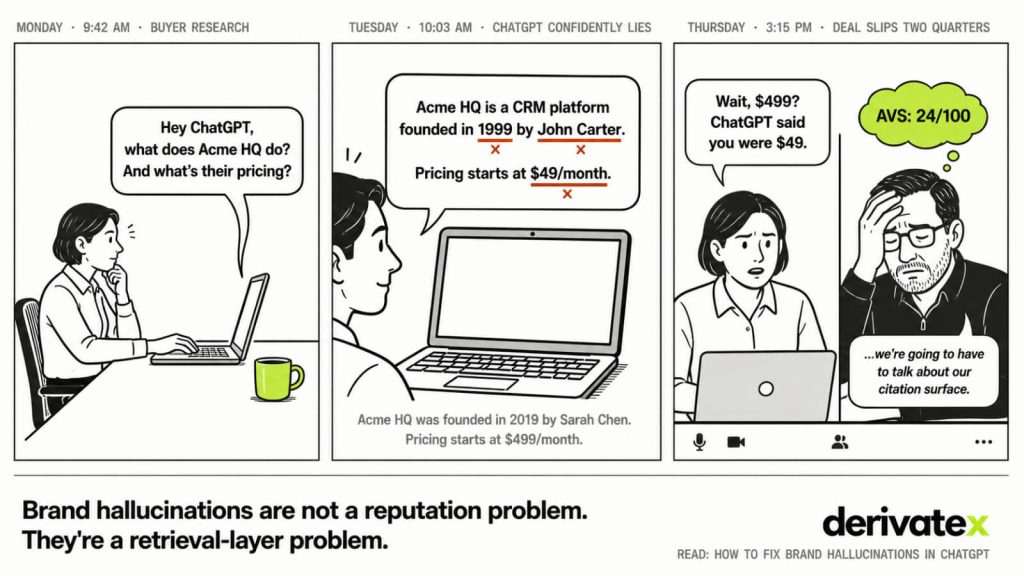

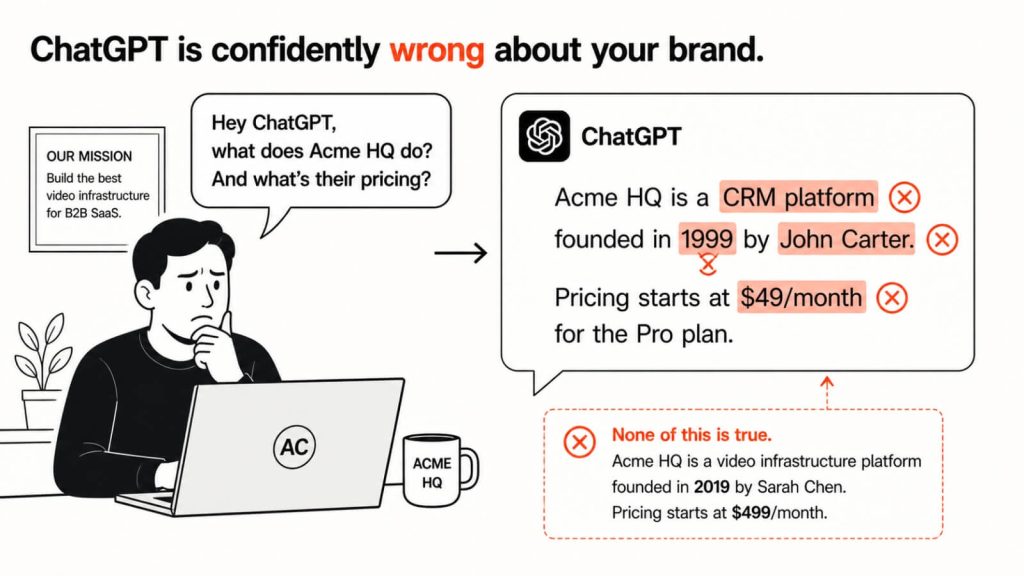

Last quarter, the head of growth at a $20M ARR vertical SaaS company emailed me a screenshot. ChatGPT had told a buyer their product cost $99 a month. Their actual entry pricing is $499. The buyer pushed back on the sales call. The deal slipped two cycles, then died.

That is not a reputation problem. That is not a PR problem. That is a retrieval-layer problem with a measurable size and a fixable shape.

In our 2026 AI Visibility Benchmark Report, DerivateX scored 50 B2B SaaS companies across 1,400 buyer-intent prompts. The average AI Visibility Score came in at 56.9 out of 100. Forty-four percent landed below 50. Seventy-seven percent landed below 5 on at least one of the four major models. The companies you compete with are not winning ChatGPT cleanly. They are winning a category where almost everyone is invisible or misrepresented, and where a small architectural shift moves the needle.

Most teams reading “is ChatGPT saying you founded your company in 1999 or that your pricing is free” reach for schema, an About-page rewrite, and a Wikipedia entry. That reactive fix playbook for AI brand hallucinations is roughly 15% of the signal. The other 85% sits in the placement architecture around your domain, distributed across four retrieval surfaces that look completely different from one model to the next.

This piece breaks down four things in order: the four causes of brand hallucination, the 6-prompt diagnostic to score yours in 15 minutes, the model-by-model fix priority, and the five-lever execution plan that closed the gap to #1 ChatGPT citations for one of our clients in 90 days.

Why ChatGPT says wrong things about your product

ChatGPT says wrong things about your product because the citation surface it retrieves from is thinner, older, or more contradictory than the one it retrieves from for your competitor. That is the entire mechanism. Hallucination is what happens when the model has to fill a gap with a plausible answer instead of a retrieved one.

Bias check: DerivateX runs Generative Engine Optimization for B2B SaaS, which means we run roughly 50 brand audits per quarter across client and prospect engagements. The pattern in this section is what we see across that book. Outside benchmarks from Metricus and AirOps point in the same direction, which I’ll cite where it sharpens the picture.

The four causes, ranked by how often we see them

In our internal audits across 50+ B2B SaaS sites, hallucinations cluster into four causes, in this order:

- Information gaps the model fills with plausible fabrications. When ChatGPT cannot find a confident source for a specific fact, it does not say “I don’t know.” It generates a plausible-sounding answer in the same confident tone as a verified one. This is where invented founding dates, fabricated employee counts, and “headquartered in Austin” hallucinations come from. The fix is to make the correct fact retrievable from a high-authority source ChatGPT actually checks.

- Stale third-party sources outranking your live pricing page. A G2 review from 18 months ago says your starter plan is $99. Your pricing page now says $499. The model has no reliable way to decide which is current and frequently picks the older, third-party source because it appears in more places. Metricus’s audit data, drawn from hundreds of millions of prompts, shows pricing as the highest-fail category for ChatGPT, with stale review-site data named as the dominant cause.

- Entity collisions with similarly named brands. Two brands share a name, or one of your products shares a name with a much more famous category. The model merges them. This is the “NEST got attributed to a competitor” failure mode reported repeatedly in the OpenAI Developer Community. It produces hallucinations that look almost right except for one merged fact.

- Citation source conflicts. Your blog says one thing. Your pricing page says a slightly different thing. A guest post you wrote in 2023 says a third. A comparison article picked one of those three and cached it. The model averages across the surface and ships the average.

The hallucination is not random. It is the model averaging across a thin or contradictory surface.

“I love ChatGPT but the hallucinations have gotten so bad” — why founders are seeing more of this in 2026

Founders are noticing more brand hallucinations now than 18 months ago because ChatGPT’s retrieval mix changed. In 2023, ChatGPT was answering almost entirely from training data, which meant your brand was either in the corpus or absent. In 2026, ChatGPT routes most branded queries through SearchGPT and live Bing browse, which means thin third-party surfaces produce more hallucination, not less.

The brand that was invisible in 2024 is being hallucinated about in 2026. Same root cause. Different symptom. A thin Layer 2 and Layer 3 citation surface used to mean “no answer.” Now it means “an answer the model invented from whatever low-quality source ranked on Bing for that query.”

📊 The Number: In DerivateX’s 2026 Benchmark, the average B2B SaaS citation surface contains 14 pages. Top-cited brands have 35+. The bottom decile sits below 8.

The 6-prompt diagnostic that tells you exactly how bad it is

You can score your hallucination problem in 15 minutes with six prompts and a spreadsheet. Stop guessing. Run the test.

The exact 6 prompts to run across ChatGPT, Perplexity, Claude, and Gemini

Run each of these six prompts four times in each of the four models. That gives you 6 prompts × 4 runs = 24 runs per model, and 24 × 4 models = 96 runs in total, which is enough repetition to see whether a result is stable or coin-flippy. Log responses in a sheet with columns for accuracy, citation, source URL, and platform.

The six prompts:

- what does [your brand] do

- who founded [your brand]

- pricing of [your brand]

- [your brand] vs [your top competitor]

- does [your brand] have [your most-asked feature]

- what are [your brand]'s top services

These six are not arbitrary. They map to the six most common buyer-intent prompts our clients see in their AI referral data, and they hit every one of the four hallucination causes above. The pricing prompt catches stale-source hallucinations. The “does [brand] have [feature]” prompt catches feature-conflation hallucinations. The “vs competitor” prompt catches comparison-page averaging.

⚠️ Warning: Run each prompt in a fresh session with no prior context. ChatGPT’s response will look better in a session where you’ve already discussed your company. That is not the response a buyer sees.
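The run sheet for the diagnostic can be generated rather than typed by hand. The sketch below is one possible layout, not a DerivateX tool: it assumes four runs per prompt per model (which is what a 96-run total implies), and the brand, competitor, and feature values (`Acme`, `Globex`, `SSO`) are hypothetical placeholders. It only builds the empty sheet; running the prompts in fresh sessions and filling in the scores is still manual.

```python
import csv
import io
from itertools import product

MODELS = ["ChatGPT", "Perplexity", "Claude", "Gemini"]
PROMPT_TEMPLATES = [
    "what does {brand} do",
    "who founded {brand}",
    "pricing of {brand}",
    "{brand} vs {competitor}",
    "does {brand} have {feature}",
    "what are {brand}'s top services",
]
RUNS_PER_PROMPT = 4  # assumption: 6 prompts x 4 runs = 24 per model, 96 in total


def build_run_sheet(brand, competitor, feature):
    """Return one row per (model, prompt, run) with empty scoring columns."""
    rows = []
    runs = range(1, RUNS_PER_PROMPT + 1)
    for model, template, run in product(MODELS, PROMPT_TEMPLATES, runs):
        rows.append({
            "platform": model,
            "prompt": template.format(
                brand=brand, competitor=competitor, feature=feature
            ),
            "run": run,
            "accuracy": "",      # filled in by hand after each run
            "citation": "",
            "source_url": "",
        })
    return rows


def to_csv(rows):
    """Serialize the sheet to CSV text that can be pasted into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical brand, competitor, and feature names.
sheet = build_run_sheet("Acme", "Globex", "SSO")
print(len(sheet), "rows")
```

Keeping one row per run, rather than one row per prompt, makes it easy to see whether a result is stable across repeats.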

How to score the results: AVS in 90 seconds

Score each of the 96 runs on a 0 to 5 scale. Zero means your brand is absent or wrong. Five means you are named first, accurately, with a citation. Average across all 96 and multiply by 20 to get a score out of 100. That is your AI Visibility Score (AVS): a single number that captures your LLM visibility on a single scale.
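The arithmetic is simple enough to keep in a spreadsheet, but a small function makes the rule explicit. This is a minimal sketch of the calculation as described here (average the 0 to 5 run scores, multiply by 20); the function name and the sample scores are illustrative assumptions.

```python
def ai_visibility_score(run_scores):
    """Average the 0-5 run scores and rescale to 0-100."""
    if not run_scores:
        raise ValueError("need at least one scored run")
    for score in run_scores:
        if not 0 <= score <= 5:
            raise ValueError(f"run score out of range: {score}")
    return sum(run_scores) / len(run_scores) * 20


# Hypothetical sheet: half the runs scored 3, half scored 1.
example_scores = [3] * 48 + [1] * 48
print(ai_visibility_score(example_scores))
```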

There are three named tiers in the AVS scale, and you should know which one you sit in:

- 0 to 25: Below category presence. The model either does not surface you for category prompts, or surfaces you with material errors. 77% of the brands in our benchmark score below 5 on at least one model. This is the median.

- 25 to 50: Category presence. You appear in some category answers, often inconsistently. The 2026 benchmark average of 56.9 sits just above this band.

- 70+: Category authority. You are named first on most category-relevant prompts, with consistent positioning, and you survive across model and prompt variations.

The number matters less than the shape of where you lose points. A brand that scores 35 because Perplexity is at 20 and ChatGPT is at 50 has a different problem than a brand that scores 35 evenly across all four. The first needs Layer 4 work. The second needs everything.
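To see the shape rather than just the average, compare the per-model scores directly. The sketch below assumes you already have one AVS per model; the Claude and Gemini values are hypothetical, chosen only so that both example brands average 35.

```python
from statistics import mean


def model_breakdown(per_model_avs):
    """Summarize the overall score, the weakest model, and the spread."""
    values = list(per_model_avs.values())
    return {
        "overall": mean(values),
        "weakest": min(per_model_avs, key=per_model_avs.get),
        "spread": max(values) - min(values),
    }


# Two brands with the same average and different shapes (hypothetical values).
uneven = {"ChatGPT": 50, "Perplexity": 20, "Claude": 35, "Gemini": 35}
even = {"ChatGPT": 35, "Perplexity": 35, "Claude": 35, "Gemini": 35}

print(model_breakdown(uneven))
print(model_breakdown(even))
```

A large spread points at one model's retrieval surface; a spread near zero with a low overall score points at the whole surface.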

How do I reduce hallucinations in ChatGPT responses to data queries?

Reducing hallucinations in ChatGPT responses to data queries means making the precise answer the highest-authority source ChatGPT can find on Bing within seconds. Data queries (pricing, founding date, employee count, exact features) are the highest-fail category because they require precise retrieval, not generative paraphrase, and the model penalizes vagueness more than it penalizes age.

The fix has 3 parts:

- publish a definitional page on your domain that answers the data query in the first 100 words

- get that page indexed in Bing (not just Google)

- surface the same data in two or three Layer 2 placements (G2 listing, Crunchbase, an industry directory) so the answer triangulates.

When three independent sources agree, ChatGPT stops inventing.
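A quick way to check whether a fact triangulates is to compare what each third-party source says against the value on your own page. The sketch below is illustrative only: the source names and prices are hypothetical, and the "at least two agreeing third-party sources and no conflicts" threshold is an assumption drawn from the two-or-three-placements guidance above.

```python
def normalize(value):
    """Lowercase and strip spaces and commas so formatting differences do not count as conflicts."""
    return value.strip().lower().replace(",", "").replace(" ", "")


def audit_fact(canonical_value, third_party_values, min_agreeing=2):
    """Compare third-party values for one fact against the canonical value."""
    target = normalize(canonical_value)
    agreeing = sorted(
        src for src, val in third_party_values.items() if normalize(val) == target
    )
    conflicting = sorted(
        src for src, val in third_party_values.items() if normalize(val) != target
    )
    return {
        "agreeing": agreeing,
        "conflicting": conflicting,
        "triangulated": len(agreeing) >= min_agreeing and not conflicting,
    }


# Hypothetical entry-pricing audit: the canonical value is the live pricing page.
result = audit_fact(
    "$499/month",
    {
        "G2 listing": "$99/month",
        "Crunchbase": "$499/month",
        "Industry directory": "$499 / month",
    },
)
print(result)
```

Anything in the `conflicting` list is a source to update or out-publish before expecting the answer to stabilize.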

Why fixing it in ChatGPT doesn’t fix it in Perplexity

ChatGPT, Perplexity, Claude, and Gemini do not share a retrieval surface. A fix that pushes your AVS up 30 points in ChatGPT can leave Perplexity flat, because Perplexity is not pulling from the source you fixed. This is the part the SERP misses entirely, and it is why most “AI SEO” advice you read produces uneven results.


DerivateX’s Citation Surface Map breaks the surface into four layers: Layer 1, Reference (your owned content); Layer 2, Evaluation (G2, analyst mentions, founder bylines); Layer 3, Comparison (third-party listicles, Reddit, Quora, vendor directories); and Layer 4, Validation (customer LinkedIn posts, named-operator endorsements). Each model leans on a different mix of those layers.

Here is how each model’s retrieval bias breaks down based on our 2026 benchmark and DerivateX’s biweekly citation tracking dataset:

| Model | Primary retrieval source | Layer that moves the needle most | What this means for fixes |
|---|---|---|---|
| ChatGPT | Bing index + SearchGPT crawl + training corpus | Layer 2 + Layer 3 | Get into G2 and 2-3 listicles indexed in Bing first. Your blog alone won’t move it. |
| Perplexity | Own retrieval index, surfaces sources inline | Layer 3 + Layer 4 | Editorial mentions and Reddit threads with named operators move Perplexity citations within days. |
| Claude | Curated training corpus + tool-use search | Layer 2 + Layer 1 | Tier-1 editorial (SEJ, Search Engine Land, Forbes) and a tight owned-content surface matter most. |
| Gemini | Google index + Knowledge Graph | Layer 1 + Layer 2 | Closest to traditional SEO. If you’re invisible here, your foundation is the problem first. |

ChatGPT pulls from Bing first, then training data

ChatGPT’s branded answer for most B2B SaaS queries in 2026 is generated from live Bing browse plus SearchGPT, with the training corpus as fallback. This is why a clean Wikipedia entry helps less than a G2 listing, a “best [your category]” listicle on a publication that ranks in Bing, and your own pricing page indexed in Bing within the last 30 days.

Practical implication: stop submitting your sitemap only to Google Search Console. Submit to Bing Webmaster Tools first. Your pages need to be retrievable in Bing within days of publishing, or ChatGPT cannot cite them in browse mode.

This Bing-first retrieval logic is the spine of our ChatGPT SEO methodology, and it sequences indexing, schema, and Layer 2 placement work in that exact order.

Perplexity surfaces cited sources inline, which means the article matters more than the brand mention

Perplexity’s interface displays the sources it cited next to the answer. The model is trained to lean on whatever those sources say. This means a single well-placed editorial mention on a publication Perplexity weights heavily can move your AVS on Perplexity within a week of publication.

The flip side: Perplexity will show your buyer the exact source that contains the wrong information about you. If a stale comparison article on a high-authority domain says your starter plan is $99, the buyer sees that quote, attributed, with a clickthrough. The fix is not to argue with the model. It is to update or replace the source.

The full update-or-replace sequencing for Perplexity sits in our Perplexity SEO playbook.

Claude weights curated editorial (different placement bar)

Claude’s training data leans more on tier-1 editorial than ChatGPT does. In our biweekly tracking, Claude is the slowest of the four models to update on new live-web information and the most rewarding for legitimate Search Engine Journal, Search Engine Land, Forbes, and category-defining newsletter mentions.

If Claude is your worst-scoring model in the diagnostic, the fix is one or two placements in the right tier-1 outlets, not 20 directory listings.

We break down which tier-1 outlets matter most by category in our Claude SEO breakdown.

Gemini is the closest map to traditional SEO

Gemini reads from the Google index and Knowledge Graph, which means a brand with strong traditional SEO and a clean Knowledge Graph entity tends to score reasonably well on Gemini even before any AI-specific work. A brand that scores low on Gemini almost always has a foundational SEO or entity problem first, and fixing the AI layer without fixing that does not work.

The traditional-SEO-plus-Knowledge-Graph fix sequence is in our Gemini SEO playbook.

Volume on owned content cannot overcome absence from the layers that compound. Architecture wins.

Schema is ~15% of the signal. Here’s what makes up the other 85%

Every top-ranked article on this query opens with schema markup as the headline fix. Implement Organization schema. Implement Product schema. Implement FAQPage schema. Then sit back and wait.

That advice is not wrong, but it is incomplete to the point of being misleading. Based on DerivateX’s correlation work between schema deployments and AVS movement across 50+ benchmark sites, schema accounts for roughly 15% of citation signal for branded queries. The remaining 85% is distributed across the other three layers of the Citation Surface Map.

📊 The Number: Based on AirOps and Kevin Indig’s 2026 State of AI Search analysis, only about 12% of URLs ChatGPT cites rank in Google’s top 10 for the corresponding query. ChatGPT’s retrieval surface is structurally different from Google’s. Schema-only strategies stall because they assume those surfaces overlap.

What schema actually does (and what it doesn’t)

Schema’s actual job is entity disambiguation and definitional anchoring. The properties that matter most:

- Organization: name, legalName, foundingDate, sameAs (full graph linking to LinkedIn, Crunchbase, G2, Capterra, X)

- Product: offers.price, offers.priceValidUntil, offers.priceCurrency

- FAQPage: the 5 to 8 questions a buyer actually asks ChatGPT, with answers in the 40 to 80 word range
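As a rough illustration of the Organization and Product properties listed above, the sketch below builds the two JSON-LD objects in Python and prints them. Every value (company name, dates, URLs, price) is a hypothetical placeholder, and the exact set of profiles in sameAs is an assumption; the property names themselves are standard schema.org vocabulary.

```python
import json


def organization_jsonld(name, legal_name, founding_date, same_as):
    """Organization markup with the disambiguation properties."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "legalName": legal_name,
        "foundingDate": founding_date,
        "sameAs": list(same_as),
    }


def product_jsonld(name, price, currency, valid_until):
    """Product markup with a single dated offer."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "priceValidUntil": valid_until,
        },
    }


# Hypothetical company and plan; replace every value with your own.
org = organization_jsonld(
    "Acme",
    "Acme Software, Inc.",
    "2018-03-01",
    [
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
        "https://www.g2.com/products/example",
    ],
)
plan = product_jsonld("Acme Starter", "499.00", "USD", "2026-12-31")

print(json.dumps(org, indent=2))
print(json.dumps(plan, indent=2))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the relevant page. Keeping priceValidUntil current is what lets a parser tell a live price from a stale one.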

Skip the schema-stack-the-page advice. More schema types do not help if the content the schema describes is wrong, stale, or contradicted elsewhere on the surface.

Schema gets you parsed. Citation surface gets you cited. Two different jobs.

The Wikipedia objection (and why it’s the wrong lever for $5M to $50M ARR SaaS)

Most articles on this topic tell you to update your Wikipedia entry. For B2B SaaS in the $5M to $50M ARR band, that advice is broken. Wikipedia notability rules require sustained independent coverage in reliable sources, which most companies in that range do not yet have. Submitting an entry as a non-notable brand gets it deleted, and a deletion log makes the next attempt harder.

The actual citation surface for B2B SaaS in this band is:

- G2 and Capterra listings with full review breadth

- Crunchbase with a complete sameAs graph

- 2 to 3 listicles per quarter on category-relevant publications (Search Engine Journal, SaaStr, category-specific outlets)

- Reddit threads in the right subreddits, with named-operator presence

- 2 to 4 podcast guest spots per quarter with show-notes pages

⚠️ Warning: If your AI search vendor recommends Wikipedia as a top-three lever for a sub-$50M ARR SaaS, that is the signal to leave. They are running a 2022 playbook in 2026.

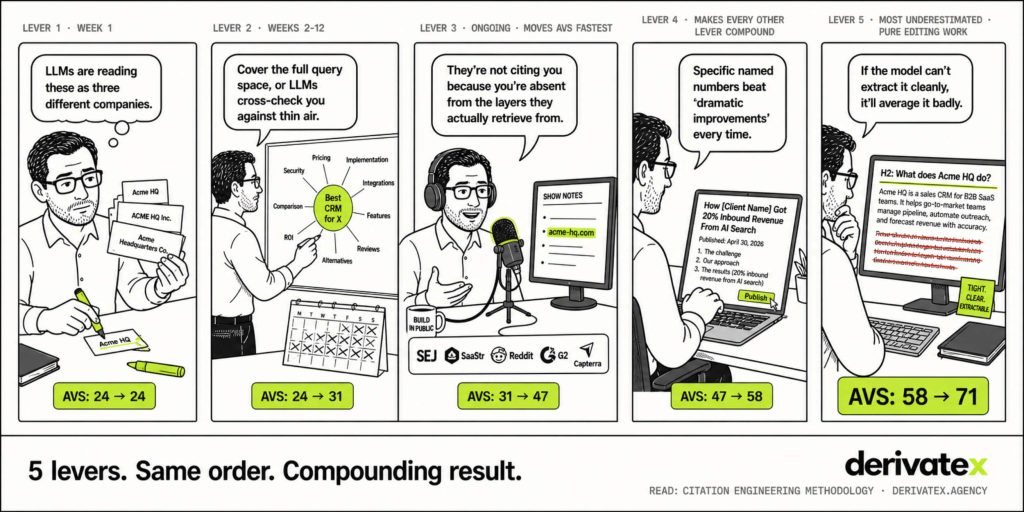

Citation Engineering’s 5 levers, in execution order

Citation Engineering is DerivateX’s named methodology for engineering AI citations on purpose. It maps to five levers, in this order: Entity Clarity, Authoritative Coverage, Third-Party Corroboration, Result Documentation, and Structured Parsability. The order is not arbitrary. Pulling Lever 3 before Lever 1 wastes the placement.

Lever 1: Entity Clarity

LLMs maintain a mental model of the entities they have seen. When your brand name is spelled three different ways across G2, Crunchbase, and your own footer, the model resolves you as three different entities. Confidence drops. Hallucinations rise.

Fix this in week one. Standardize brand naming everywhere. Build a /llm-info/ page with full Organization JSON-LD. Deploy llms.txt at your domain root. Audit your sameAs graph and make sure every external profile points back to one canonical entity. This is the most boring lever and the highest-leverage one.
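For the llms.txt step, a small generator keeps the file in sync with your canonical facts. This is a sketch under assumptions: it follows the markdown layout described in the public llms.txt proposal (an H1 name, a blockquote summary, then H2 sections of annotated links), and the company name, summary, and URLs are hypothetical placeholders.

```python
def build_llms_txt(name, summary, sections):
    """Render an llms.txt body: H1 name, blockquote summary, H2 link sections."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for label, url, note in links:
            lines.append(f"- [{label}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical company facts and URLs.
llms_txt = build_llms_txt(
    "Acme",
    "Acme is a CRM for real estate investors. Entry pricing is $499 per month.",
    {
        "Company": [
            ("About", "https://example.com/llm-info/", "Canonical company facts"),
        ],
        "Product": [
            ("Pricing", "https://example.com/pricing", "Current plans and prices"),
        ],
    },
)
print(llms_txt)
```

Save the output as llms.txt at the domain root, and regenerate it whenever pricing or positioning changes so it never contradicts the live pages.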

If you want the full implementation playbook with developer specs, the entity optimization service breakdown covers schema, llms.txt, and the sameAs graph in depth.

Lever 2: Authoritative Coverage

Cover your full category query space on your own domain. For most B2B SaaS, that is roughly 30 to 60 pages mapped to every variation of “what is [category],” “best [category] for [vertical],” “[category] vs [adjacent category],” and the 10 to 20 jobs-to-be-done queries your buyers ask. Hub pages plus spoke pages, with internal linking that signals topical authority.

Authoritative Coverage is the long lever. It is also the one with the most downside risk if you skip it: a thin owned surface means LLMs have nothing to cross-check Layer 2 and Layer 3 mentions against, and the wrong third-party source wins by default.

Lever 3: Third-Party Corroboration

This is the lever that moves AVS fastest in the first 90 days. The placement order matters:

- G2 review presence with at least 25 verified reviews and accurate Categories and Features mapping

- 2 to 3 listicle placements per quarter on category-relevant publications

- Crunchbase profile with full sameAs graph

- Reddit presence as a named practitioner answering category questions in 2 to 3 relevant subreddits

- 2 to 4 podcast guest spots per quarter with show-notes pages

- 1 to 2 guest posts per quarter on tier-1 industry publications, written by your founder or named operator

The sequence compounds. Each placement makes the next one easier to pitch and easier for LLMs to triangulate against.

A mention that says “DerivateX is a GEO agency for B2B SaaS with documented revenue attribution from AI search” is categorically more valuable than one that says “agencies like DerivateX” in a list of 20.

Lever 4: Result Documentation

Publish your own outcome data with named clients, named numbers, and named attribution paths. Vague case studies do not get cited. Specific ones become reference material the model returns to.

Gumlet’s 20% inbound revenue from AI tools, REsimpli’s 90-day ChatGPT #1 ranking, Verito’s 12 ChatGPT #1 rankings — every one of those becomes a citable claim that builds DerivateX’s own citation surface across three different categories. The repetition across distribution channels (your blog, guest posts, social, newsletter) is not redundancy. It is citation consensus building.

Lever 5: Structured Parsability

Write so the model can extract cleanly. Definitional sentences in the first 100 words. H2s phrased as the question a buyer would ask. FAQ blocks with answers in the 40 to 80 word range. Comparison tables for any “vs” content. One claim per paragraph, not three.
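The 40 to 80 word target for FAQ answers is easy to check mechanically. The sketch below counts whitespace-separated words, which is an approximation, and the sample questions and answers are placeholders built only to exercise each branch.

```python
def check_faq_lengths(faq, min_words=40, max_words=80):
    """Map each question to (word count, status) against the target range."""
    report = {}
    for question, answer in faq.items():
        count = len(answer.split())
        if count < min_words:
            status = "too short"
        elif count > max_words:
            status = "too long"
        else:
            status = "ok"
        report[question] = (count, status)
    return report


# Placeholder answers of known length, just to show the three outcomes.
sample_faq = {
    "What does Acme do?": " ".join(["word"] * 39),
    "How much does Acme cost?": " ".join(["word"] * 60),
    "Who founded Acme?": " ".join(["word"] * 81),
}
for question, (count, status) in check_faq_lengths(sample_faq).items():
    print(f"{question}: {count} words, {status}")
```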

This is the lever most teams underestimate because it requires editing existing pages instead of producing new ones. Going through your top 20 pages and rewriting them for parsability often moves AVS more in 6 weeks than 6 months of new content. The LLM SEO checklist is the page-level audit version of this lever: print it, run it against your top 20 URLs, and ship the rewrites.

If you want a faster baseline before going through all five, the AI Visibility Checker runs a directional scan in minutes.

“Model retraining takes 18 months” is wrong. Here’s the live-retrieval math.

The most common cop-out in AI search advice is the timeline frame: model retraining cycles are 6 to 18 months, so you cannot fix this fast. That logic was correct in 2023. It is wrong in 2026 because most branded queries no longer go through training. They go through live retrieval.

ChatGPT browses Bing in real time

When ChatGPT is in browse mode (which it is for most branded category queries in 2026), it queries Bing, retrieves the top results, and synthesizes an answer from what it finds. If a page you publish today indexes in Bing within 48 hours, ChatGPT can cite it within days, not months. The 18-month frame applies to base training, which now matters less for branded queries than for general world knowledge.

This is why fixes can land fast.

REsimpli: #1 cited CRM in ChatGPT for real estate investors in 90 days

REsimpli is a CRM for real estate investors. When DerivateX started, they had strong product-market fit and real client outcomes, but their content was written for keyword rankings, not for machine extraction. The engagement focused heavily on Levers 4 and 5: restructuring service and comparison pages for Structured Parsability, building out query coverage for real-estate-investor-specific prompts, and publishing case study content with named investor outcomes and named metrics.

REsimpli became the #1 CRM cited in ChatGPT for real estate investors within 90 days of the engagement starting. That is not a position that fluctuates with model variance. It is built on a citation consensus strong enough to survive prompt rephrasings and model updates.

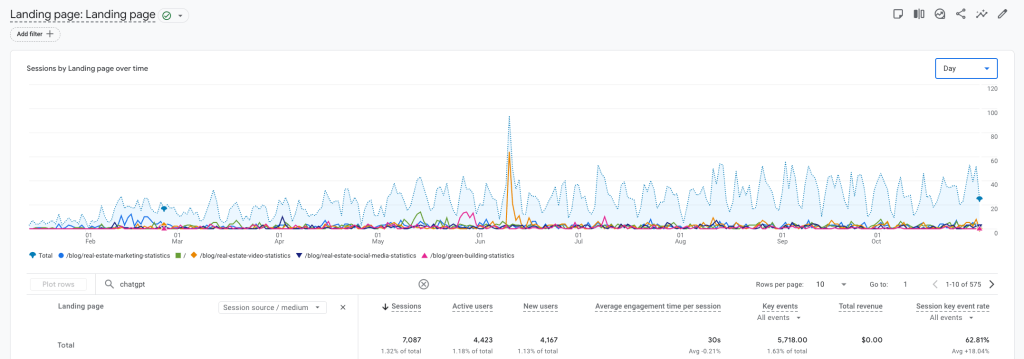

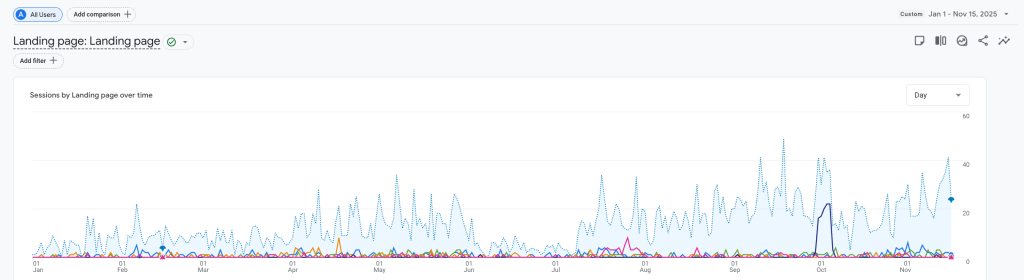

Gumlet: 0% to 30% AI mentions in 6 weeks, 20% inbound revenue from AI

Gumlet is a video infrastructure platform. They had meaningful AI referral traffic that nobody was tracking and nobody had optimized for. The work was sequenced by lever:

- Week 1 to 4: Entity Clarity (brand-name standardization across external platforms, /llm-info/ page, JSON-LD on product pages)

- Week 2 to 12: Structured Parsability on developer documentation (it was technically excellent but written for humans, not for machine extraction)

- Week 3 onward: Third-Party Corroboration (developer-publication guest posts, Reddit presence in developer communities, named-architecture mentions)

The result: Gumlet went from approximately 0% AI mentions on category queries to 30% within 6 weeks of the third-party placements landing. Gumlet now attributes 20% of monthly inbound revenue to ChatGPT and Perplexity, tracked at session level in their CRM.

The 6 to 18 month timeline is dead for branded queries. Live retrieval makes the fix loop measurable in weeks.

In 2023, ranking #1 in Google for “best [your category]” was the goal because that was where buyers landed. In 2026, that is secondary. Buyers who ask ChatGPT a category question never see your ranked page. They see whatever ChatGPT synthesized from the citation surface. The pre-LLM SEO playbook treated the SERP as the destination. In 2026, the SERP is the input to the destination.

The monthly leadership report: AVS, tool breakdown, what changed

If you cannot put this on a slide, your CMO will not believe it is real. The 4-line scorecard that fits in any leadership deck:

- AVS this month vs last month, with delta. One number per model, plus the average. If the delta is negative, name the cause.

- Tool-by-tool breakdown. ChatGPT score, Perplexity score, Claude score, Gemini score. Distribution matters as much as the average.

- Top 3 prompts won, top 3 prompts still lost. With the AI tool name and the position. “Won: ‘best video infrastructure for SaaS’ on ChatGPT, position 1.” “Lost: ‘cheapest video CDN’ on Perplexity, not surfaced.”

- Actions taken last month, actions for next month. With named placement targets and named owners.
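The first two lines of the scorecard are mechanical once the monthly AVS numbers exist. The sketch below formats per-model scores with month-over-month deltas and lists the models whose delta is negative, so the cause can be named. All scores shown are hypothetical.

```python
from statistics import mean


def monthly_scorecard(last_month, this_month):
    """One line per model plus the average, each with its delta."""
    lines = []
    for model, score in this_month.items():
        delta = score - last_month[model]
        lines.append(f"{model}: {score:.1f} ({delta:+.1f})")
    avg_now = mean(this_month.values())
    avg_prev = mean(last_month.values())
    lines.append(f"Average: {avg_now:.1f} ({avg_now - avg_prev:+.1f})")
    return lines


def needs_explanation(last_month, this_month):
    """Models whose score dropped and therefore need a named cause."""
    return [m for m, s in this_month.items() if s < last_month[m]]


# Hypothetical AVS per model for two consecutive months.
previous = {"ChatGPT": 40, "Perplexity": 22, "Claude": 30, "Gemini": 36}
current = {"ChatGPT": 48, "Perplexity": 20, "Claude": 31, "Gemini": 37}

for line in monthly_scorecard(previous, current):
    print(line)
print("Name the cause for:", needs_explanation(previous, current))
```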

If your CMO is asking how this maps to revenue attribution rather than scoring, that’s a measuring AI search ROI conversation (same framework, different lens).

What good looks like at scale

Verito, an SMB IT-hosting client, runs at the upper end of what’s achievable in a 10-month engagement: 12 ChatGPT #1 rankings across tracked prompts, 887 GA4 sessions attributed to AI tools in a single quarter, and a 73% top-3 ChatGPT ranking rate across the prompt set we monitor. That is what AVS 70+ looks like in practice. Not a vanity score. Tracked sessions, named position, real pipeline.

📊 The Number: AI-referred visitors convert at roughly 14.2% on average across our client book, compared to about 2.8% for Google organic. The gap is not noise. AI traffic arrives later in the buyer journey, with intent already shaped, and converts at roughly 5x the organic rate.

In-house vs operators: when to bring in someone who does this for a living

Three capabilities decide whether you can run Citation Engineering in-house. If you have all three, do it yourself. If you are missing one, the surface stops compounding.

- A content lead who can run the 6-prompt diagnostic monthly and act on the data. Not someone who runs the test once and forgets. The diagnostic is recurring.

- A writer who can publish definitional pages weekly with Structured Parsability built in from sentence one. Not edit-after content. Born-parsable.

- Someone who can land 2 to 3 third-party placements per month: G2 follow-up campaigns, listicle pitches, podcast outreach, Reddit presence as a named practitioner. This is the one most teams cannot resource.

What to demand if you bring in operators

If you bring in an agency, the bar is short and specific:

- Ask any GEO agency to show you their own AVS, calculated the same way they would calculate yours. If they cannot run the diagnostic on themselves, they are not running it on you.

- Demand a baseline AVS report and a model-by-model gap analysis before signing, not after. If they can only produce the gap report after the contract starts, you are paying for the audit twice.

- Walk through one of their client’s Citation Surface Maps before signing. If they cannot produce one with named layers and named pages, they are not doing Citation Engineering. They are doing content.

- Avoid agencies whose case studies report “visibility lifts” without naming a tracked query set, a baseline AVS, and a current AVS. “Visibility lift” without those three numbers is unfalsifiable.

FAQ

Why does ChatGPT say wrong things about my product?

ChatGPT says wrong things about your product because the citation surface it retrieves from is thinner, older, or more contradictory than the one it retrieves from for the brands it gets right. When the model cannot find a confident source, it generates a plausible-sounding answer in the same tone as a verified one. Three causes dominate: information gaps the model fills with fabrications, stale third-party sources outranking your live pricing or product page, and entity collisions with similarly named brands. The hallucination is not random. It is the model averaging across a thin or contradictory surface, which means the fix is to thicken the surface and reduce the contradictions.

Is my brand invisible to ChatGPT, or is it being hallucinated about, and which is worse?

Invisibility means zero presence on category prompts and a Layer 2 and Layer 3 surface near zero. Hallucination means the surface is there but the sources contradict, with stale or wrong information winning the average. Hallucination is harder to fix because you have to repair existing third-party sources instead of just creating new ones. Invisibility you fix by adding placements. Hallucination you fix by adding placements and updating or out-publishing the contradictory sources. If you have to pick one to live with, pick invisibility. The recovery path is shorter.

What are some pro tips for noticing when ChatGPT is hallucinating or wrong about your brand?

Run the 6-prompt diagnostic monthly: what does [your brand] do, who founded [your brand], pricing, vs competitor, does [your brand] have [feature], top services. Run each four times in a fresh session. Watch for over-confident specifics, especially exact pricing, founding dates, and employee counts, because the model fabricates with the same tone it uses for verified facts. Cross-check against Perplexity, which surfaces sources inline so you can trace the bad source directly. If your shortlist agency cannot show you a live citation tracking dashboard with model-level breakdowns, do not let them be the ones noticing this for you.

Can I just contact OpenAI and ask them to fix the wrong info about my company?

No. ChatGPT is not a directory and there is no support ticket, account manager, or submission form that lets you request a correction to brand-level facts. The model’s branded answers are generated from a combination of training data and live web retrieval, and OpenAI does not edit brand facts on request. The fix is to update the underlying citation surface so the next retrieval pass returns the correct information. Practically: update your pricing page, get into Bing’s index, fix your G2 listing, and if a stale third-party source is the dominant problem, contact the publisher of that source to request an update.

Brand hallucinations: Perplexity vs ChatGPT, which is easier to fix?

Perplexity, because it shows the cited sources inline, which means you know exactly which page to repair or replace. ChatGPT requires inferring the source from Bing rank patterns, which is slower and less precise. Perplexity also has a faster live-retrieval feedback loop, so updates to a third-party source can move your Perplexity AVS within days of publication. ChatGPT’s browse mode is fast but its training-data fallback for evergreen branded queries can lag. If you have to sequence the work, fix Perplexity first, learn the source map, then port the fixes to the rest of the citation surface.

How long does it take to fix brand hallucinations in ChatGPT?

Six to twelve weeks for measurable AVS movement on the prompts you target. Ninety days for a position that survives prompt variations, based on the REsimpli engagement and DerivateX’s tracking across 50+ benchmark sites. The first measurable signal usually appears between weeks 4 and 6, when Layer 2 and Layer 3 placements start indexing in Bing and Perplexity’s retrieval index. By week 12, brands executing all five Citation Engineering levers correctly typically sit between 25 and 45 AVS, which is category presence. If you are seeing zero movement at week 8, the agency or in-house team is working in the wrong layer.

What to do this week

Brand hallucinations in ChatGPT are not a reputation problem. They are a measurable retrieval-layer gap, scoreable on a 0 to 100 scale, fixable through a five-lever execution sequence, with a feedback loop measured in weeks and not years.

Block 90 minutes this week to run the 6-prompt diagnostic across all four models. Score the result against the AVS bands. If you sit below 50, the issue is structural, and the fix is sequencing the five Citation Engineering levers in the right order against your weakest layer.

If you want the 96-run version with the top 3 citation gaps surfaced for you, book the Free AI Visibility Audit. DerivateX runs 20 prompts your buyers are actually asking across ChatGPT, Perplexity, Claude, and Gemini, scores the results on AVS, and sends the top 3 citation gaps within 48 hours. No pitch, no call required.

The brands that will own AI category citations 18 months from now are the ones running this audit this quarter, not next.
