
How Long Does It Take to Show Up in ChatGPT Citations? (And How to Tell Which of Yours Will)

TL;DR

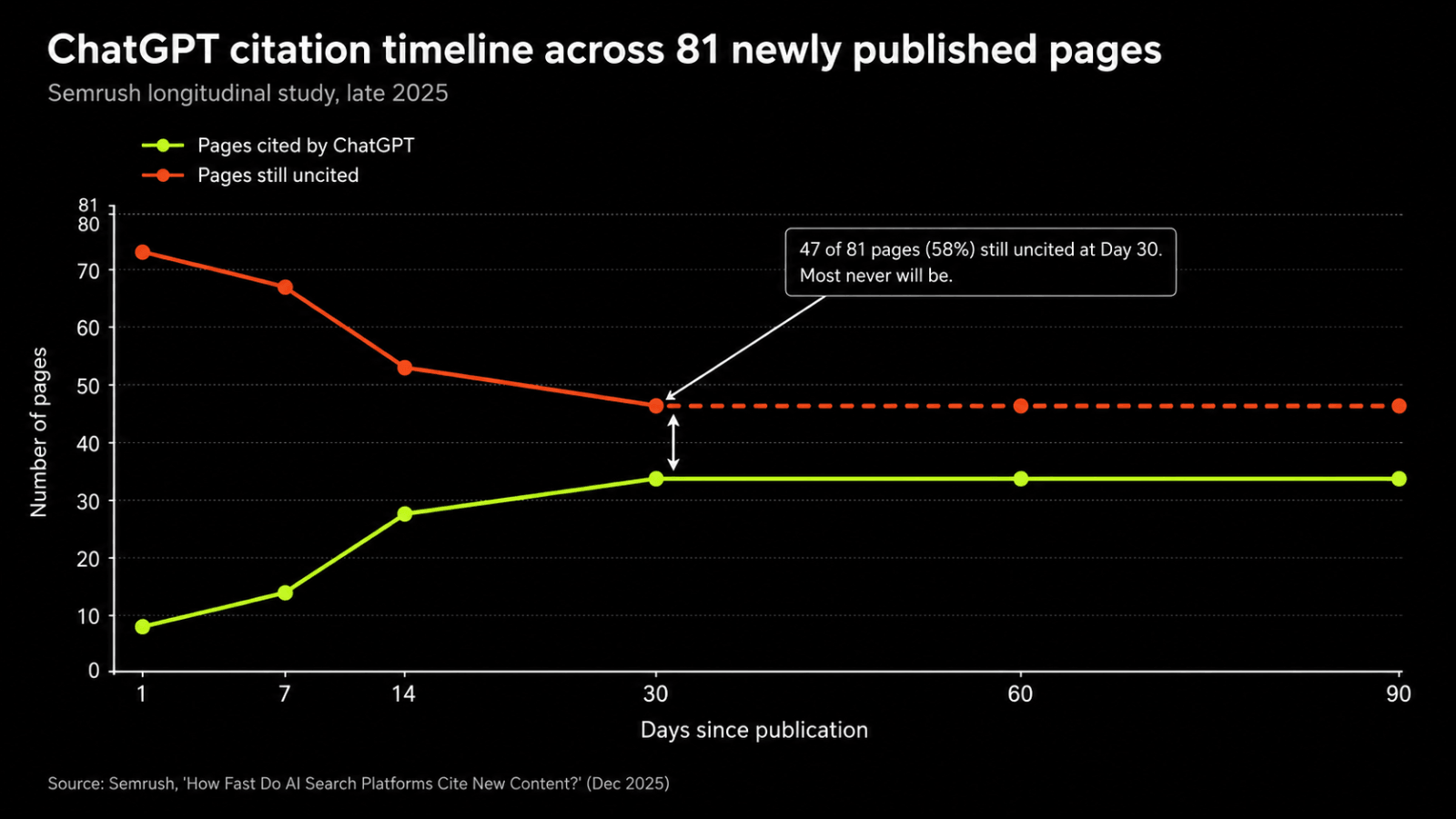

- Only 42% of newly published pages got cited by ChatGPT within 30 days in the largest public AI citation study (Semrush, late 2025). The other 58% stayed invisible.

- The median cited page in ChatGPT is roughly 500 days old. Publishing more is not the lever most teams think.

- ChatGPT triggers a live web search on only 34.5% of prompts, down from 46% in 2024. For two-thirds of prompts, no page-level optimization wins a citation.

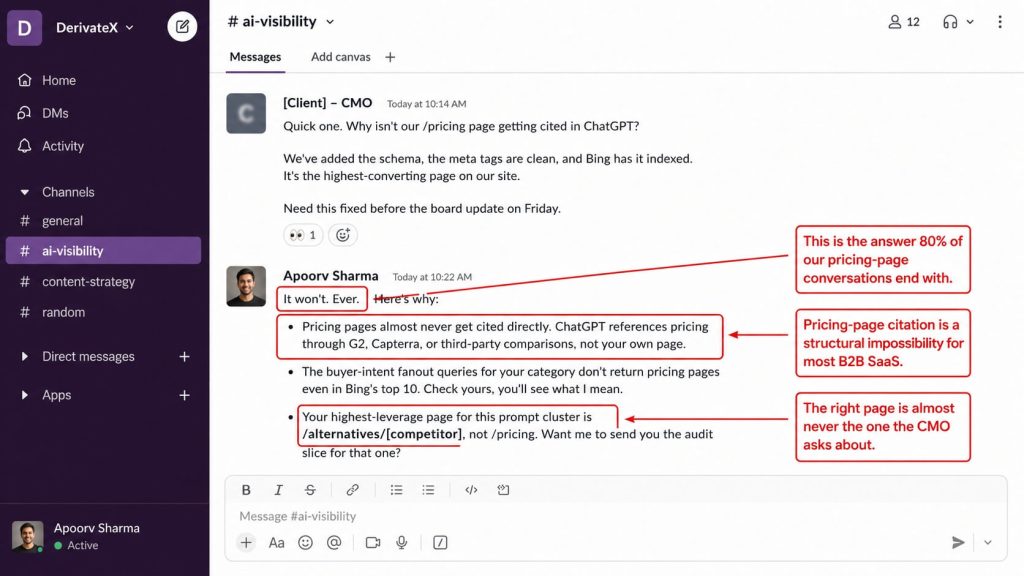

- Citation timelines vary by page archetype, not page quality. Comparison pages win fastest. Pricing pages almost never get cited directly.

- The real question is not “how long” but “what fraction of my pages will ever get cited,” and which archetypes deserve the budget.

- Buyer-intent citation count is the only count that matters, because only buyer-intent prompts produce demo bookings.

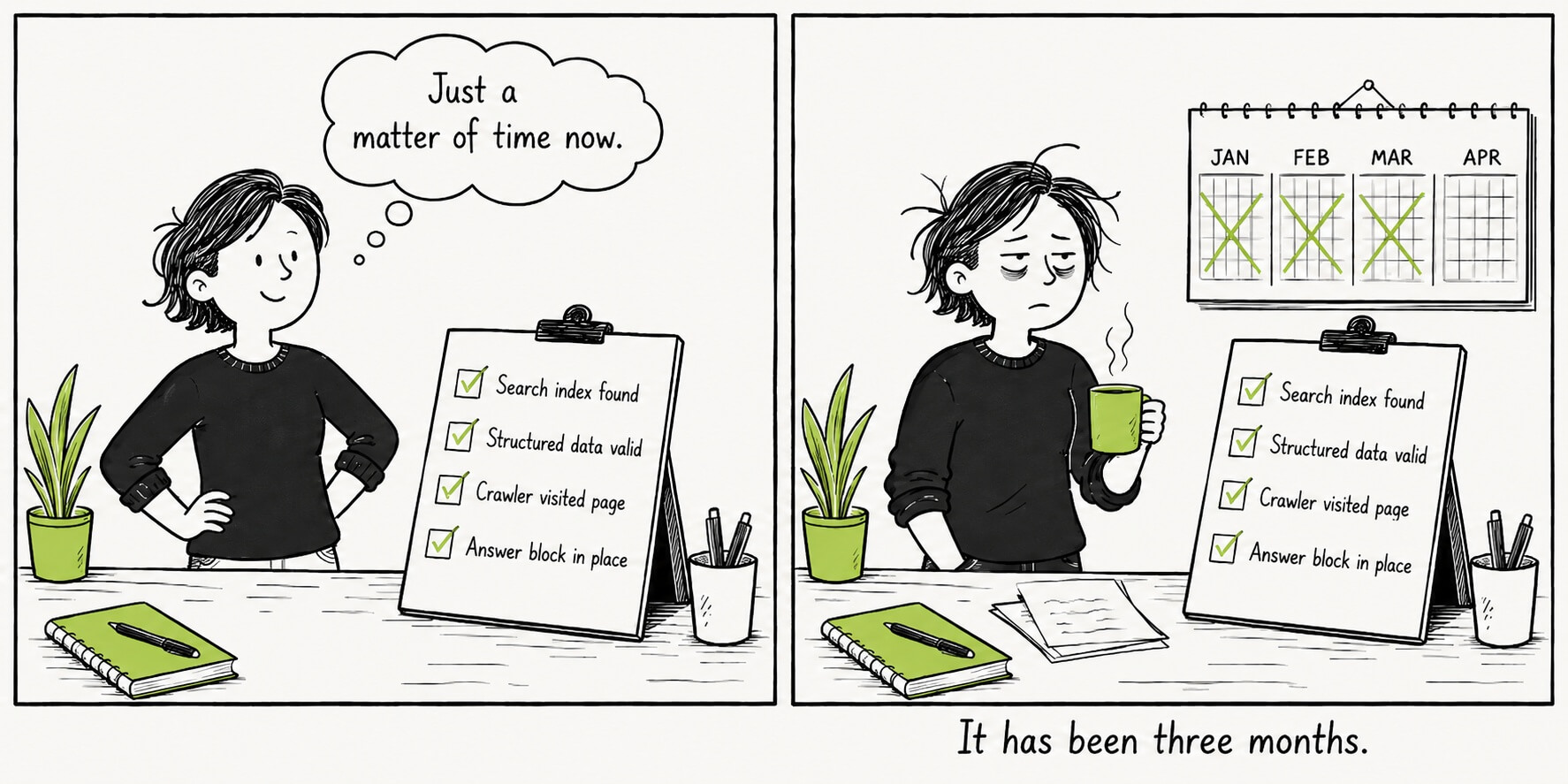

You published the page in February. It’s indexed in Bing, OAI-SearchBot has crawled it, the schema validates, and ChatGPT still cites a competitor’s older post when you run the buyer-intent prompt yourself. Three months in, you’ve stopped asking when it’ll get cited and started wondering if it ever will.

Most teams misread this as a patience problem.

The dominant SERP advice on AI citation timelines tells operators to wait six months, refresh dates, and keep publishing. That advice ignores what the underlying data actually shows: a large fraction of indexed B2B SaaS pages never get cited at all. Not in three months. Not in a year. Indexing is necessary, but it does not predict citation.

This piece breaks down the real numbers behind ChatGPT citation timelines, the page archetypes that decide your odds, the diagnostic questions to run on indexed-but-uncited pages, and a 30/60/90-day citation engineering plan grounded in DerivateX’s AI Visibility Benchmark across 50 B2B SaaS companies and 1,400 buyer-intent prompts.

If you’ve read our broader LLM SEO guide already, this article goes deeper on one specific question: which of your pages will actually get cited, and when.

| Dimension | Traditional SEO | GEO |
|---|---|---|
| Primary KPI | Keyword ranking position | AI Visibility Score (AVS) |
| Index that matters | Google | Bing (for ChatGPT) + each AI engine’s own |
| Primary crawler | Googlebot | OAI-SearchBot, ChatGPT-User, GPTBot, ClaudeBot, PerplexityBot |
| Freshness signal | Recent publish date helps for most queries | Helps for time-sensitive queries, hurts for evergreen ones |
| Authority signal | Backlinks from referring domains | Brand mentions across G2, Reddit, Wikipedia, named co-citations |
| Time to first measurable result | 6–12 months | 2–8 weeks for high-fit pages, never for low-fit ones |
| Visitor conversion rate | ~1.76% on AI Overview SERPs | ~6x Google organic per Webflow data |

Read straight through, or skim the H2s and the FAQ. Either way, you’ll leave with a sharper question than the one you came in with.

What It Really Takes to Get Cited in ChatGPT Responses

Getting cited requires three conditions stacked together, not one:

- Your page has to match a buyer-intent fanout query at the title and URL level.

- It has to survive ChatGPT’s retrieve-to-cite filter.

- It has to live on a page archetype the model can extract from cleanly.

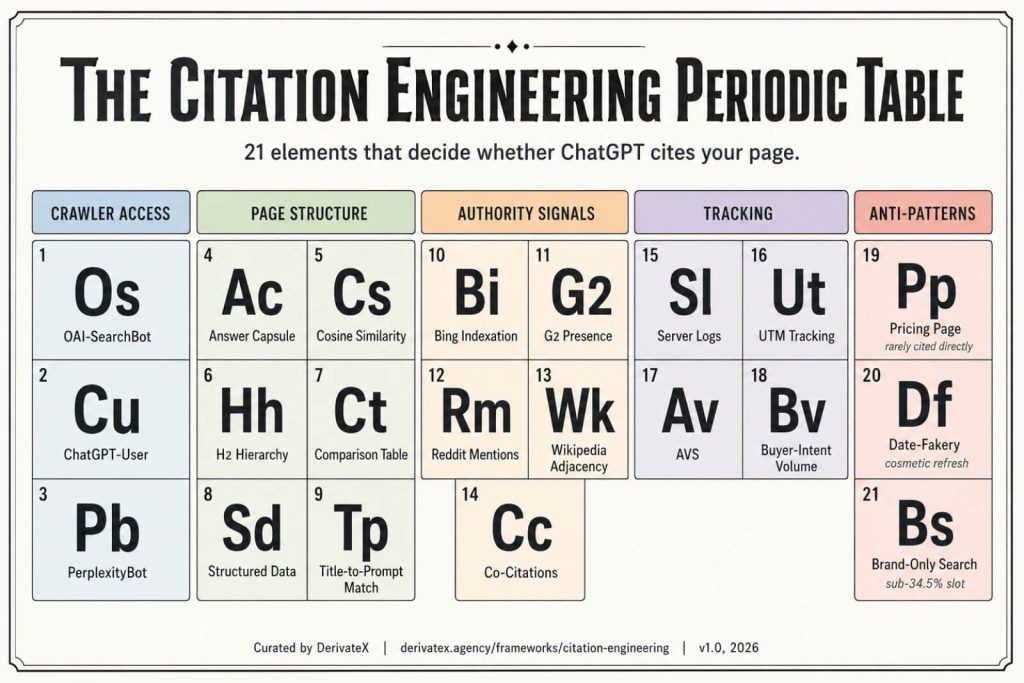

Allowing OAI-SearchBot, having an answer capsule, and ranking in Bing are table stakes that get you considered. None of them gets you cited on its own.

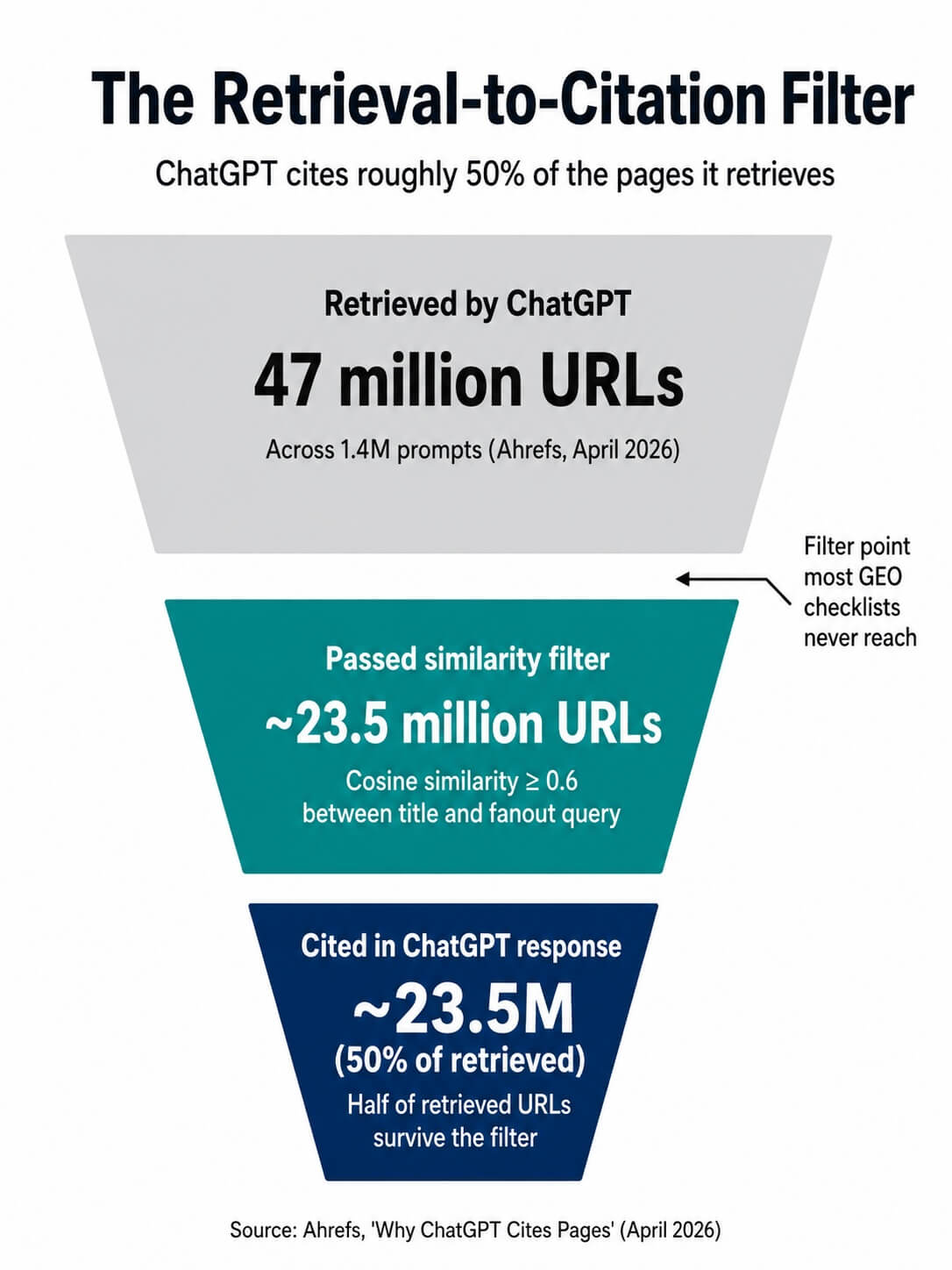

The Citation Engineering methodology we use at DerivateX starts from a single observation: ChatGPT retrieves roughly twice as many pages as it cites. The work is not just being retrieved. It is surviving the filter that runs after retrieval.

ChatGPT Retrieves Twice as Many Pages as It Cites

Ahrefs’ analysis of 1.4 million ChatGPT prompts, published in April 2026, found that the model pulled in around 47 million URLs across that prompt set and cited roughly half of them. The other half were read, scored, and dropped before the response was generated.

Retrieval is not citation. Most “GEO checklists” stop at retrieval.

The filter is largely a similarity check. Ahrefs measured the cosine similarity between page titles and the fanout queries ChatGPT generated, and found:

- Cited pages scored 0.602 on average.

- Non-cited retrieved pages scored 0.484.

The gap is small in absolute terms but consistent enough to predict outcomes.

What this means in practice: a page titled “Best CRM Software” matches a generic prompt loosely. A page titled “CRM Pricing Comparison for Small Sales Teams” matches a specific fanout query precisely. ChatGPT cites the second one because it cleared the filter, not because it was better written. Title and heading structure are doing more work in the AI era than they did in the classic SEO one.
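The title-matching effect can be approximated locally. The sketch below is a minimal illustration, assuming plain bag-of-words term counts instead of whatever similarity model Ahrefs used (the source does not say). The fanout query is a hypothetical example, and the absolute scores will not be comparable to the 0.602 and 0.484 figures. Only the relative ordering of titles is meaningful.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count word tokens (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between the term-count vectors of two strings."""
    va, vb = tokenize(a), tokenize(b)
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical fanout query; the two titles are the examples from the text above.
fanout_query = "crm pricing comparison for small sales teams"
generic_score = cosine_similarity("Best CRM Software", fanout_query)
specific_score = cosine_similarity(
    "CRM Pricing Comparison for Small Sales Teams", fanout_query
)
print(f"generic: {generic_score:.3f}, specific: {specific_score:.3f}")
```

The generic title shares a single token with the query, so it scores far below the specific one. Swapping `tokenize` for an embedding model would bring the scores closer to the scale Ahrefs reports.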

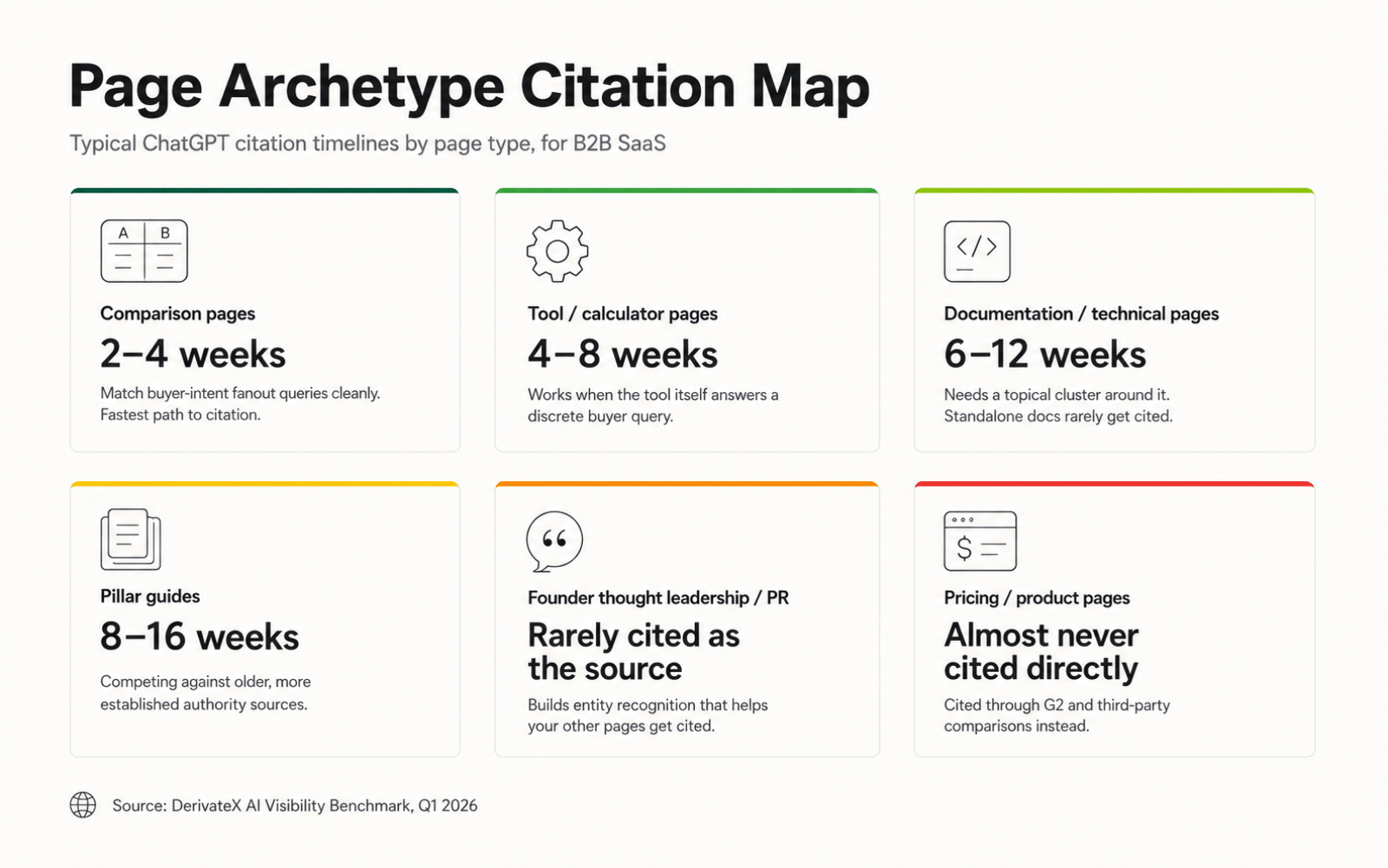

Citation Timeline by Page Archetype

Citation timing is not uniform across page types. Different archetypes face different odds and different windows, and lumping them into one “how long does it take” answer is where most SERP advice fails operators.

| Page archetype | Typical citation window | Notes |
|---|---|---|
| Comparison pages (alternatives, vs, head-to-head) | 2–4 weeks | Fastest archetype when matched to a high-volume buyer-intent fanout query. |
| Tool / calculator pages | 4–8 weeks | Works when the tool itself answers a discrete query. |
| Documentation / technical pages | 6–12 weeks | Contingent on a topical cluster around them. Standalone docs rarely get cited. |
| Pillar guides | 8–16 weeks | Wider window because pillars compete against older, more established authority sources. |
| Founder thought leadership / PR | Rarely cited as the source | Works upstream of citations by building entity recognition that helps your other pages get cited later. |
| Pricing / product pages | Almost never cited directly | ChatGPT typically references your pricing through a G2 entry or third-party comparison. |

If you’re running a content roadmap against a six-month horizon, this archetype map should determine where the budget goes. Comparison pages and tool pages are the highest-leverage archetypes. Pillar guides are the slowest. Pricing pages cannot be optimized into a direct citation no matter what you do.

Why Does My Page Not Show Up in ChatGPT Even Though It’s Indexed?

Three reasons cover the vast majority of indexed-but-uncited pages:

- The prompt your page targets doesn’t trigger ChatGPT’s web search in the first place.

- The page title or URL doesn’t match any fanout query the model generated for prompts that do trigger search.

- A higher-authority co-citation source has already won the slot for that query cluster, and your page is being retrieved but filtered out.

Indexing solves discoverability. Citation requires alignment, not just access. Most teams are optimizing for the first problem when the second is what’s killing them.

The 34.5% Web-Search-Trigger Problem

CXL’s May 2026 synthesis of Semrush and Ahrefs data put a number on something operators had felt for months. ChatGPT runs a live web search on only 34.5% of prompts, down from around 46% in 2024. The remaining two-thirds of prompts get answered from training data alone.

For those training-data prompts, no amount of page-level optimization wins a live citation. There is no crawl happening, no retrieval step, no chance for a freshly published page to compete. The model is pulling from what it already knows.

This splits the GEO problem into two disciplines:

- Page-level optimization wins citations on the 34.5% of prompts that trigger search.

- Brand-level training-data presence (G2 reviews, Reddit thread density, named co-citations on authority domains, Wikipedia adjacency) wins mentions on the 65.5% that don’t.

Most agencies sell only the first one.

Methodology Callout: The AVS Benchmark Dataset

Sample. 50 B2B SaaS companies across CRM, video infrastructure, observability, customer support, and HRTech categories.

Prompt set. 1,400 buyer-intent prompts run across ChatGPT, Perplexity, Claude, and Gemini.

Window. January–March 2026.

Result. Average AI Visibility Score: 56.9. Range: 2 (lowest) to 89 (highest, Clio).

Prompts were sourced from category-specific buyer-intent searches, not general informational queries.

Citation Timeline Isn’t a Number. It’s a Curve.

Citation behavior follows a curve with a long, flat tail.

Most pages that ever get cited do so within their first 60 days post-indexation. After 90 days without a citation, the probability of ever being cited drops sharply. After 180 days, it collapses further.

The Semrush 81-page longitudinal study illustrates the shape:

- Day 1: 8 pages cited

- Day 7: 14 pages cited

- Day 14: 28 pages cited

- Day 30: 34 pages cited

The growth curve flattened after that. The 47 pages still uncited at day 30 are not “still waiting.” They are mostly never coming.
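The shape is easier to see as shares of the sample. This short calculation uses only the four checkpoints quoted above and makes no assumptions beyond them:

```python
# Cumulative cited-page counts from the 81-page study quoted above.
TOTAL_PAGES = 81
cited_by_day = {1: 8, 7: 14, 14: 28, 30: 34}

# Share of the sample cited by each checkpoint, as a percentage.
cumulative_share = {
    day: round(100 * cited / TOTAL_PAGES, 1) for day, cited in cited_by_day.items()
}

# Pages newly cited in each interval, to show where the curve flattens.
days = sorted(cited_by_day)
new_citations = {
    days[i]: cited_by_day[days[i]] - (cited_by_day[days[i - 1]] if i else 0)
    for i in range(len(days))
}

uncited_at_day_30 = TOTAL_PAGES - cited_by_day[30]

for day in days:
    print(f"day {day:>2}: {cumulative_share[day]}% cited, {new_citations[day]} new")
print(f"uncited at day 30: {uncited_at_day_30}")
```

The last interval adds 6 pages over 16 days, against 14 pages over the 7 days before it. That slowdown is the flattening described above.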

This is the wedge most timeline articles miss. Quoting the 8/14/28/34 progression as a success story buries the 47 that didn’t make it, and those 47 are the operator’s actual problem.

Why Fresh Content Isn’t the Lever Most Teams Think

The freshness narrative is real for time-sensitive queries and inverted for evergreen ones. ChatGPT prefers recent sources for “best of 2026” lists, breaking news, and pricing changes. For evergreen buyer-intent queries like “best video hosting for B2B SaaS,” the model favors aged authoritative pages.

Ahrefs’ April 2026 dataset showed the median cited ChatGPT source is around 500 days old, with some cited pages over 2,700 days old. A separate March 2026 analysis of the newest default GPT model found that only 6% of its web search results were under 30 days old, compared to 33% on the prior default model.

Publishing more frequently does not solve the citation problem for evergreen categories.

How Often Should I Refresh Content for AI Citations?

Refresh on substantive signal, not on calendar cadence. Three signals justify a refresh:

- A competing source has overtaken you on a tracked buyer-intent prompt.

- A referenced statistic or product fact in your page is now wrong.

- The page hasn’t been touched in 12+ months and you have new proof points to add.

Cosmetic refreshes (date stamps, light rewording) showed no measurable citation lift in the Ahrefs dataset. They can actually hurt if they trigger Bing to re-evaluate a page that was previously stable.

Buyer-Intent Citations Are the Only Ones That Move Pipeline

A citation count is not a KPI. A buyer-intent citation count is.

Citations on definitional queries (“what is generative engine optimization”) generate brand impressions. Citations on buyer-intent queries (“best video hosting platform for engineering teams,” “Imgix alternatives for B2B SaaS”) generate demo bookings.

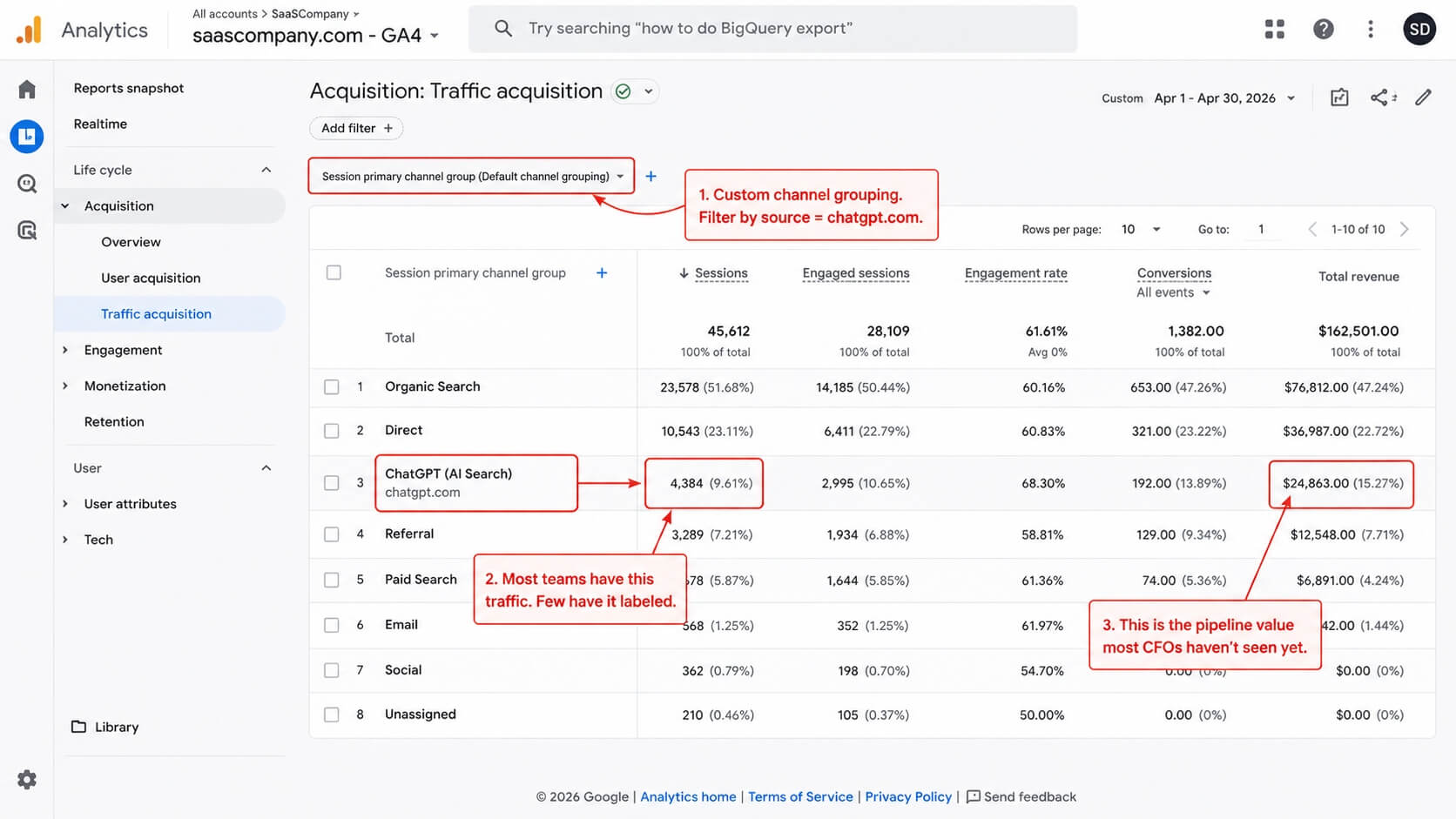

DerivateX has tracked 137+ citations for Gumlet across buyer-intent prompts in ChatGPT, Perplexity, Claude, and Gemini. AI discovery now accounts for roughly 20% of Gumlet’s inbound revenue, measurable via utm_source=chatgpt.com referral data in GA4 and direct attribution in their CRM.

That number is the citation-to-revenue handshake most teams haven’t connected because they’re counting all citations as equal.

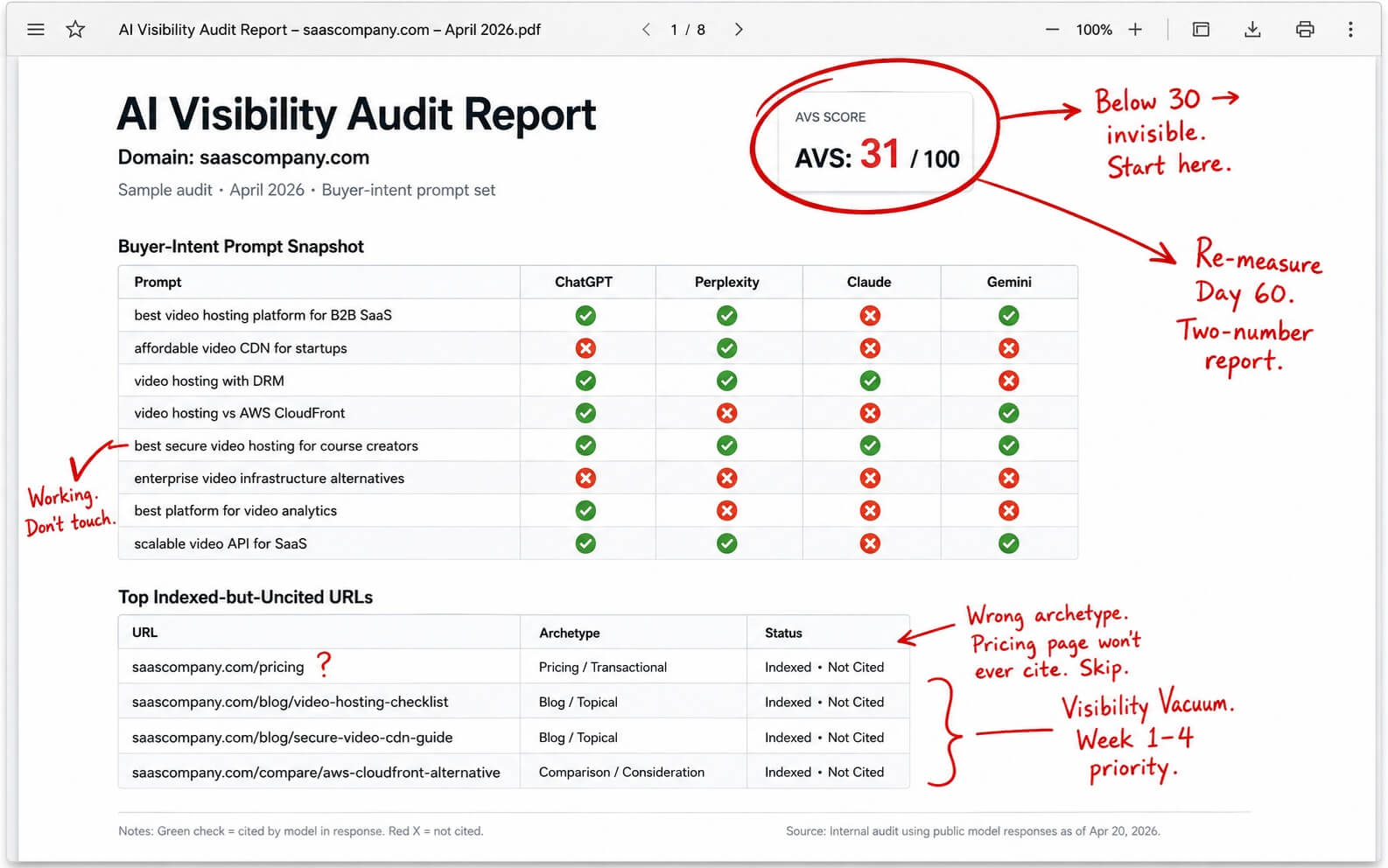

AVS as the Right KPI

AI Visibility Score (AVS) measures the percentage of buyer-intent prompts in a tracked set on which a domain is cited as a source. It scopes out informational citations, brand mentions without links, and citations on prompts no buyer would ever run.
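As a rough sketch, the definition reduces to a simple ratio. The record shape and field names below are illustrative assumptions, and the source does not describe any weighting across engines or repeated runs, so none is applied here.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One tracked prompt run. All field names here are illustrative."""
    prompt: str
    buyer_intent: bool        # False for definitional or informational prompts
    cited_domains: frozenset  # domains linked as sources in the answer


def ai_visibility_score(results, domain: str) -> float:
    """Percentage of buyer-intent prompts on which `domain` is cited as a source."""
    buyer_prompts = [r for r in results if r.buyer_intent]
    if not buyer_prompts:
        return 0.0
    cited = sum(1 for r in buyer_prompts if domain in r.cited_domains)
    return 100 * cited / len(buyer_prompts)


# Made-up sample data, for illustration only.
results = [
    PromptResult("best video hosting for B2B SaaS", True, frozenset({"example.com", "g2.com"})),
    PromptResult("Imgix alternatives for B2B SaaS", True, frozenset({"g2.com"})),
    PromptResult("video CDN pricing comparison", True, frozenset({"example.com"})),
    PromptResult("video API for engineering teams", True, frozenset()),
    PromptResult("what is generative engine optimization", False, frozenset({"example.com"})),
]
score = ai_visibility_score(results, "example.com")
print(score)
```

The definitional prompt is excluded from the denominator even though the domain is cited on it, which is the scoping the definition calls for.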

Across the DerivateX AVS Benchmark of 50 B2B SaaS companies:

- Average AVS: 56.9

- Below 30: brand is largely invisible to AI buyers in its category

- Above 75: brand owns its category in AI answers

- Highest in dataset: Clio at 89

- Lowest in dataset: 2

How Do I Track Which Pages ChatGPT Is Citing?

Citation tracking takes a three-layer stack:

- Server log parsing for OAI-SearchBot, ChatGPT-User, and GPTBot hits, grouped by URL and frequency.

- UTM-aware referrer filtering for utm_source=chatgpt.com traffic in GA4, separated into its own channel rather than buried in the default “referral” bucket.

- Scheduled prompt-set monitoring that runs a fixed list of buyer-intent prompts across ChatGPT, Perplexity, Claude, and Gemini on a weekly cadence and reports which of your pages were cited each time.
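The first layer can be sketched in a few lines. This assumes the common "combined" access-log format and matches on user-agent substrings only. The sample log lines are made up and their user-agent strings are simplified. User agents can be spoofed, so treat the counts as indicative unless you also verify source IPs.

```python
import re
from collections import Counter

# User-agent substrings for the three OpenAI crawlers named above.
AI_BOTS = ("OAI-SearchBot", "ChatGPT-User", "GPTBot")

# Assumes the "combined" access-log format; adjust the pattern for your server.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_bot_hits(lines):
    """Return a Counter keyed by (bot, path) for requests made by the tracked bots."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent")
        for bot in AI_BOTS:
            if bot in agent:
                hits[(bot, match.group("path"))] += 1
                break
    return hits


# Made-up log lines for illustration.
sample_log = [
    '203.0.113.5 - - [02/Mar/2026:10:00:00 +0000] "GET /compare/a-vs-b HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; OAI-SearchBot/1.0"',
    '203.0.113.6 - - [02/Mar/2026:10:05:00 +0000] "GET /compare/a-vs-b HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; OAI-SearchBot/1.0"',
    '203.0.113.7 - - [02/Mar/2026:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
    '198.51.100.9 - - [02/Mar/2026:10:07:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]
bot_hits = count_bot_hits(sample_log)
for (bot, path), count in bot_hits.most_common():
    print(bot, path, count)
```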

You can run a free version of the third layer using DerivateX’s AI Visibility Checker, which checks your domain against a sample buyer-intent prompt set and returns your citation rate, the pages getting cited, and the prompts you’re missing.

It’s not a replacement for a full audit. It gives you the baseline number to argue from.

Do I Need to Allow OAI-SearchBot in Robots.txt?

Yes, unless you want to be excluded from ChatGPT search citations entirely.

OAI-SearchBot is OpenAI’s search crawler. It’s separate from GPTBot, which is the training crawler. The two can be configured independently. Allowing OAI-SearchBot is necessary for citation eligibility.

It’s a one-line check, not a growth lever. Move on. Before you do, audit the rest of your file: most B2B SaaS sites we look at have at least one of these common robots.txt mistakes silently blocking AI crawlers, and many have not yet implemented an llms.txt file, which is emerging as a standard for AI search directives. The full crawler-side configuration sits in our LLM SEO checklist.
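One way to run that check without waiting for a crawl is Python’s standard-library robots.txt parser. The robots.txt content and URL below are hypothetical examples. The parser follows the standard matching rules, which may differ in edge cases from how any particular crawler reads the file.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt: search crawler allowed, training crawler blocked.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


page = "https://example.com/compare/a-vs-b"  # hypothetical URL
search_ok = is_allowed(ROBOTS_TXT, "OAI-SearchBot", page)
training_ok = is_allowed(ROBOTS_TXT, "GPTBot", page)
print(f"OAI-SearchBot allowed: {search_ok}, GPTBot allowed: {training_ok}")
```

Paste your live robots.txt into `ROBOTS_TXT` and loop over your money pages to confirm that none is blocked for the search crawler.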

Is 10,000 Citations a Lot? Is 40 Citations a Lot?

The answer depends on prompt intent and scope.

For a single B2B SaaS brand tracked across one quarter, 40 buyer-intent citations is a strong working baseline (roughly the midpoint of the AVS Benchmark). 10,000 citations only means something when you scope it:

- 10,000 citations on definitional queries = brand awareness theater.

- 10,000 citations on buyer-intent prompts = top decile of any B2B SaaS category.

The raw count is the wrong frame. Scope the count to prompts a buyer in your ICP would actually run.

Benchmarks by ARR Band

| ARR band | Quarterly buyer-intent citation target | What it signals |
|---|---|---|
| Under $5M (Seed–Series A) | 5–15 | Below this, the brand is invisible. |
| $5M–$20M (Series A–B) | 15–40 | Competitive band. Where most GEO engagements start. |
| $20M+ (Series B and beyond) | 40–120+ | Category-credible. Below 40, larger competitors are eating your AI-channel demos. |
| Category leaders | 137+ | Achievable per the Gumlet tracking. Clio’s AVS of 89 implies a similar order of magnitude. |

These bands assume a tracked prompt set of 100 to 300 buyer-intent prompts run weekly across four engines. A smaller prompt set produces smaller numbers without indicating worse performance.

The 30/60/90-Day Citation Engineering Plan

Don’t wait six months. Run a structured 90-day plan that diagnoses, engineers, and measures.

Verito’s engagement with DerivateX moved one indexed-but-uncited page from Position 40 to AI’s #1 Pick for a high-intent buyer query inside this same window. The timeline is workable when the work is targeted.

Days 1–30: Diagnose the Indexed-but-Uncited Gap

Pull every indexed URL on the domain. Run the page titles against a tracked buyer-intent prompt set of at least 100 prompts. Tag every URL by archetype:

- Comparison

- Tool

- Documentation

- Pillar

- Pricing

- Product

- Founder content

Tag the highest-priority indexed-but-uncited URLs. These are pages that target a buyer-intent prompt, are indexed in Bing, and still show up in zero citations.
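The filter itself is mechanical once each URL carries its tags. A minimal sketch, assuming a hypothetical audit record with the fields shown (none of these names come from a specific tool):

```python
from dataclasses import dataclass

ARCHETYPES = {
    "comparison", "tool", "documentation", "pillar",
    "pricing", "product", "founder content",
}


@dataclass
class Page:
    """One indexed URL from the audit. Field names are illustrative."""
    url: str
    archetype: str
    targets_buyer_intent: bool
    indexed_in_bing: bool
    citations: int  # citations observed across the tracked prompt set


def indexed_but_uncited(pages):
    """Pages that target a buyer-intent prompt, are in Bing, and have zero citations."""
    for page in pages:
        if page.archetype not in ARCHETYPES:
            raise ValueError(f"unknown archetype for {page.url}: {page.archetype}")
    return [
        p for p in pages
        if p.targets_buyer_intent and p.indexed_in_bing and p.citations == 0
    ]


# Made-up audit rows.
pages = [
    Page("/compare/a-vs-b", "comparison", True, True, 0),
    Page("/tools/roi-calculator", "tool", True, True, 3),
    Page("/blog/what-is-geo", "pillar", False, True, 0),
    Page("/docs/webhooks", "documentation", True, False, 0),
]
priority = indexed_but_uncited(pages)
print([p.url for p in priority])
```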

This list is your Visibility Vacuum surface map. It should drive the next 60 days of work.

A first audit usually surfaces 8 to 25 high-priority indexed-but-uncited URLs on a typical B2B SaaS site of 200 to 500 pages. The exact number is less interesting than the ratio of priority pages to total pages.

Days 31–60: Engineer Citations on Three Archetypes

For each of three priority indexed-but-uncited URLs, do four things:

- Rewrite the title and primary H2 to match a specific fanout query at the cosine-similarity level. The brief: title contains the exact phrase the buyer would type, not a paraphrase.

- Insert one citable proprietary statistic, sourced and dated, in the top third of the page.

- Add one comparison table with factual column values, not subjective ratings.

- Confirm the answer capsule is positioned in the top 30% of the page, link-free, in 40 to 80 words.
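The fourth item can be checked mechanically before publish. This sketch works on plain extracted page text and measures "top 30%" by character offset. That measure is an assumption, since the rule does not say how position should be measured.

```python
import re

# Matches bare URLs, markdown links, and HTML anchor tags.
LINK_PATTERN = re.compile(r"https?://|\[[^\]]*\]\([^)]*\)|<a\s", re.IGNORECASE)


def check_answer_capsule(page_text: str, capsule: str) -> dict:
    """Check the capsule rules (length, link-free, position) against plain page text."""
    word_count = len(capsule.split())
    start = page_text.find(capsule)
    # The capsule must end within the first 30% of the page's characters.
    in_top_30 = start != -1 and (start + len(capsule)) <= 0.30 * len(page_text)
    return {
        "length_ok": 40 <= word_count <= 80,
        "link_free": LINK_PATTERN.search(capsule) is None,
        "in_top_30_percent": in_top_30,
    }


# Placeholder content for illustration.
capsule = " ".join(["word"] * 50)
page_text = "Title\n" + capsule + "\n" + "body " * 400
report = check_answer_capsule(page_text, capsule)
print(report)
```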

Re-trigger Bing recrawl via IndexNow after publish. Wait 14 days, then re-run the tracked prompt set against the rewritten pages.
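An IndexNow submission is a single JSON POST. The sketch below builds the request without sending it. The host, key, and URL are placeholders, and the key file location assumes the key is hosted at the site root.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST for a batch of updated URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Placeholder host and key; replace with your own before sending.
request = build_indexnow_request(
    "www.example.com",
    "your-indexnow-key",
    ["https://www.example.com/compare/a-vs-b"],
)
# To submit: urllib.request.urlopen(request)
```

Batch all three rewritten URLs into one `urlList` so the 14-day wait starts on the same day for each page.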

Days 61–90: Measure AVS Lift, Not Citation Count Alone

Re-run the tracked prompt set weekly. Report two numbers to leadership:

- AVS delta (percentage point change across the tracked prompt set)

- Buyer-intent citation count delta (absolute change)

Tie any utm_source=chatgpt.com traffic that lands during the period to pipeline value in the CRM, separated from generic referral attribution. If you’re measuring AI search ROI properly for the first time, this is where most teams discover they have AI-sourced pipeline they were attributing to “direct.”
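If your CRM export includes each deal’s first landing URL, the separation can be sketched as follows. The `(landing_url, pipeline_value)` shape is a hypothetical export format, not any specific CRM’s schema.

```python
from urllib.parse import urlparse, parse_qs


def is_chatgpt_visit(landing_url: str) -> bool:
    """True when the landing URL carries utm_source=chatgpt.com."""
    query = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in query.get("utm_source", [])


def ai_sourced_pipeline(deals):
    """Sum pipeline value for deals whose first landing URL came from ChatGPT.

    `deals` is an iterable of (landing_url, pipeline_value) pairs.
    """
    return sum(value for url, value in deals if is_chatgpt_visit(url))


# Made-up deals for illustration.
deals = [
    ("https://example.com/compare/a-vs-b?utm_source=chatgpt.com", 12000),
    ("https://example.com/pricing?utm_source=newsletter", 8000),
    ("https://example.com/", 5000),
]
chatgpt_pipeline = ai_sourced_pipeline(deals)
print(chatgpt_pipeline)
```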

The two-number report is the citation-to-revenue handshake.

AVS delta proves the GEO work moved the needle. Pipeline value proves the AI channel converts. Without both, the work reads as a vanity exercise to a CFO.

Is ChatGPT Citation Accurate? And What About the References It Can’t Find Back?

ChatGPT citations are accurate when the model is in search mode and the cited URL still resolves. They are unreliable when the model is in knowledge mode, where citations are pattern-matched from training data and may point to URLs or papers that don’t exist.

The phantom-citation problem readers encounter is a feature of mode, not of any individual brand or page.

You can tell which mode you’re in by checking for numbered source links in the response:

- Numbered citations with hover-able links = live retrieval ran.

- No links, just plain text references = the model is making up plausible-looking sources from training-data patterns.

Verify before quoting any reference ChatGPT gives you back without inline citations.

FAQ

When did ChatGPT start adding sources and citations?

ChatGPT began surfacing inline source citations with the launch of ChatGPT Search in late October 2024, when OpenAI formalized OAI-SearchBot as the search-specific crawler separate from GPTBot.

Before that, ChatGPT operated almost entirely from training data. Any “citations” it produced were pattern-matched constructions that often pointed to URLs that did not exist.

The shift to retrieval-augmented citations is what created the citation-tracking discipline as we know it in 2026. Inline citations with hover-able source links are now the default behavior of ChatGPT Search whenever the model triggers a live web search.

Why doesn’t ChatGPT cite my pages even though I rank well in Google?

Google ranking does not predict ChatGPT citation because ChatGPT uses Bing’s index, not Google’s.

Seer Interactive’s analysis found roughly 87% of ChatGPT citations align with Bing’s top organic results for the same query. A page ranking first on Google can rank tenth or worse on Bing for the same keyword, and ChatGPT will cite whoever sits in Bing’s top results.

The first fix is to verify your domain in Bing Webmaster Tools, submit your sitemap, and check whether your money pages are indexed and ranking on Bing specifically. If you’re seeing traffic decline despite stable rankings, the Bing-Google index gap is usually the first thing to check. Google rankings and Bing rankings are two separate optimization paths in 2026.

How long does it actually take to get cited by ChatGPT?

For a high-fit B2B SaaS page that matches a buyer-intent prompt and is indexed in Bing, the realistic window is 2 to 8 weeks.

Comparison pages move fastest, often within 14 days of Bing indexation. Pillar guides and documentation can take 8 to 16 weeks. After 90 days without a citation, the page is unlikely to be cited at all without structural rewrites to the title, URL, and on-page answer capsule.

The right framing is not how long, but whether the page has the structural traits to be cited in the first place.

Is ChatGPT killing organic search traffic for B2B SaaS?

ChatGPT is shifting traffic, not killing it.

Click-through rates on AI Overview queries have fallen from 1.76% to around 0.61%, but Webflow has reported that 8% of their signups now originate from LLM referral traffic, converting at roughly six times the rate of Google organic.

The net effect for B2B SaaS is fewer clicks per impression but higher pipeline conversion per click. Brands that aren’t tracking utm_source=chatgpt.com referrals as a distinct channel in GA4 are missing pipeline that’s already arriving, just hidden inside the “direct” or “referral” buckets.

How do I know which of my pages will get cited by ChatGPT?

The cleanest test is to run your indexed page titles against a tracked buyer-intent prompt set, then check cosine similarity between each page’s title and the fanout queries ChatGPT generates for those prompts.

Pages scoring above 0.6 on title-to-prompt similarity are citation candidates. Pages scoring below 0.5 are unlikely to ever clear ChatGPT’s retrieve-to-cite filter without rewrites.

Page archetype is the second filter: comparison and tool pages clear faster than pillar guides, and pricing pages rarely clear at all. Run the full diagnostic on your domain before committing to a 90-day GEO sprint.
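The similarity thresholds translate into a simple triage. The scores below are hypothetical, and the "borderline" label for the 0.5 to 0.6 range is an assumption, since the thresholds leave that band undefined.

```python
def citation_outlook(similarity: float) -> str:
    """Bucket a title-to-fanout similarity score using the 0.6 and 0.5 thresholds."""
    if similarity > 0.6:
        return "candidate"
    if similarity < 0.5:
        return "unlikely without rewrite"
    # No guidance is given for 0.5 to 0.6; "borderline" is an assumed label.
    return "borderline"


# Hypothetical scores for three page titles.
scores = {"/compare/a-vs-b": 0.66, "/blog/crm-guide": 0.55, "/pricing": 0.41}
outlook = {url: citation_outlook(score) for url, score in scores.items()}
print(outlook)
```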

Are 40 citations enough for a B2B SaaS brand?

For a Series A to B B2B SaaS company in the $5M to $20M ARR range, 40 buyer-intent citations per quarter across ChatGPT, Perplexity, Claude, and Gemini is a competitive baseline.

The number alone is not the headline. The share of those citations that fall on buyer-intent prompts (versus definitional or off-category prompts) is what matters.

A brand with 40 citations distributed across high-intent purchase queries is in stronger pipeline position than one with 400 citations distributed across glossary-type informational queries. Track AVS, not raw citation count.

Conclusion

The timeline question hides the real question.

Most published B2B SaaS pages will never be cited by ChatGPT, the citation curve is front-loaded inside 60 days, and the archetypes that win are narrower than most content roadmaps assume. The work that matters is identifying which of your indexed pages can clear ChatGPT’s retrieve-to-cite filter, which archetypes are doing the citation work in your category, and what the pipeline value of those citations actually is.

The right next step is a diagnostic, not another publishing sprint.

Run a free AI Visibility Audit on your domain to see your actual citation rate, the prompts you’re missing, and which pages are stuck in the Visibility Vacuum. The audit will tell you within a week whether your domain has a citation problem or a content problem. Those are two different fixes with two different timelines.

The category is moving fast enough that a Q3 2026 baseline will look meaningfully different from a Q1 2026 one. Brands that get their tracking stack and their archetype map in place this quarter will be the ones reporting AVS-to-pipeline numbers to their board by year-end. The rest will still be asking how long it takes.