
The B2B SaaS Founder’s Guide to AI Search Visibility

TL;DR

- AI search visibility and Google rank are scored against different surfaces, which is why a #1 ranking on Google and zero ChatGPT citations sit together without contradiction.

- The 2026 AI Visibility Benchmark of 50 B2B SaaS companies puts the category average at 56.9 out of 100, with the top scorer (Clio) at 89 and the bottom scorer (LeadSquared) at 2.

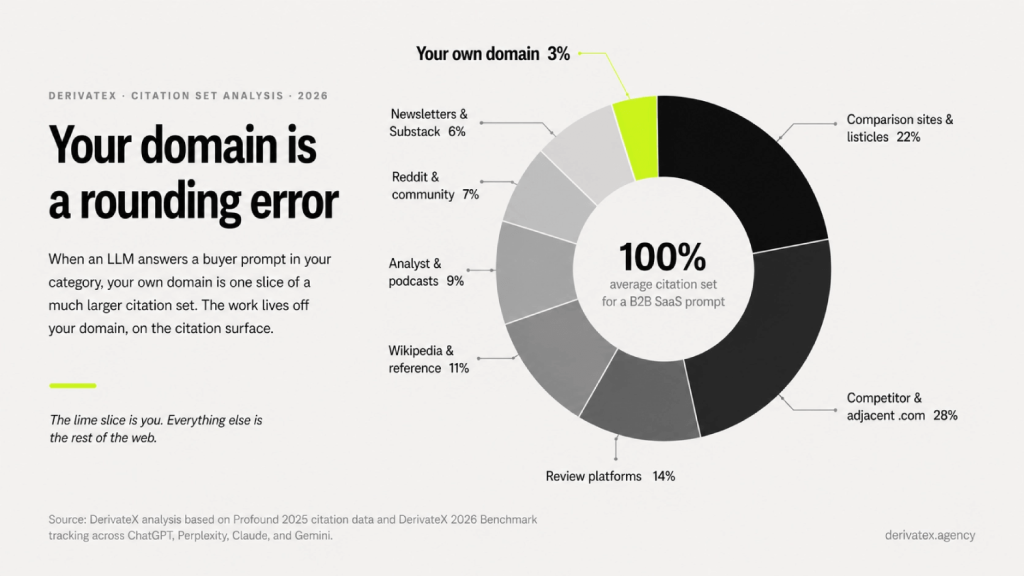

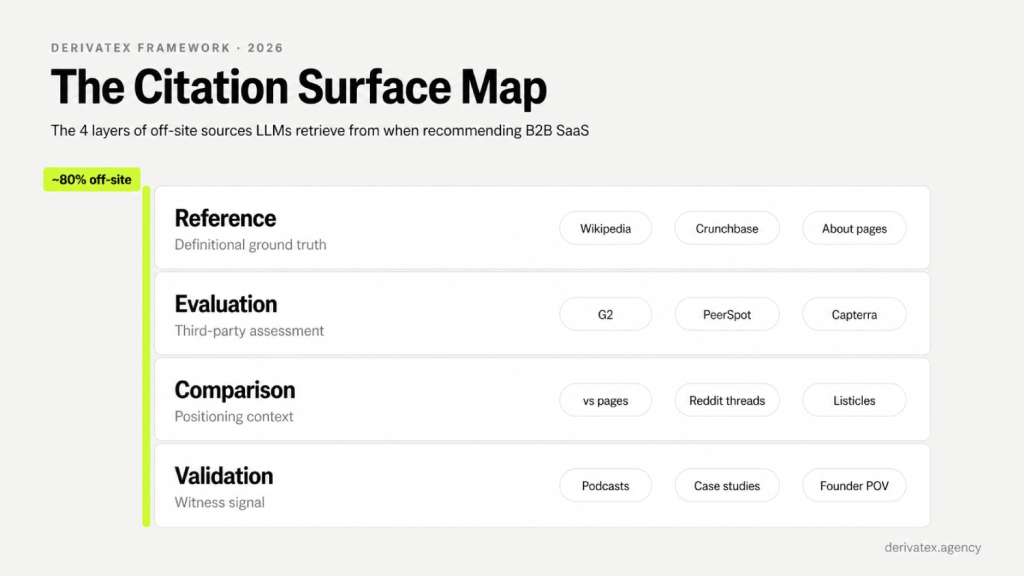

- Roughly 80% of what gets a B2B SaaS company cited in ChatGPT, Perplexity, Claude, and Gemini lives off the company’s own domain on what we call the citation surface.

- The citation surface has four layers (Reference, Evaluation, Comparison, Validation), and most SaaS companies have engineered exactly zero of them.

- AI-referred traffic across our client base converts at 14.2%, against 2.8% from Google organic, which makes AI search a pipeline channel and not a vanity metric.

- A free AVS audit on isaiaware.com tells you where you sit on the 0 to 100 scale and which layer of your citation surface is leaking.

If an AI had to recommend three companies in your category right now, would you clearly belong there?

For 44% of the B2B SaaS companies we scored in our 2026 AI Visibility Benchmark, the answer is no. The category average across 50 named SaaS brands sits at 56.9 out of 100. The top scorer, Clio, hit 89. The bottom scorer, LeadSquared, hit 2. That 87-point spread inside a single benchmark is not a tail. It is the shape of the entire category in April 2026.

I run DerivateX. We do this for B2B SaaS companies between $5M and $50M ARR. One of our clients, Gumlet, now attributes 20% of inbound revenue to ChatGPT and Perplexity, and we track 137+ active citations across its category surface. Across our client portfolio, AI-referred traffic converts at 14.2%, against 2.8% from Google organic. This guide is the unedited version of what we tell founders on diagnostic calls. The voice is operator-to-operator, not analyst-to-reader.

Here is the claim the rest of this piece defends: Google rank and AI citation are two different scoreboards measured against two different surfaces. Roughly 80% of what determines AI citation lives off your domain, on what we call the citation surface. That means the SEO playbook your team has been running for five years has been measured against the wrong scoreboard for most of the prompts your buyers now ask.

This piece breaks down four reasons most B2B SaaS sites are invisible in AI search, with the specific fix for each: the decoupled-scoreboards mechanism, the Citation Surface Map, the Search Budget Framework, and the AVS measurement system. Before reading further, run your domain through Is AI Aware so you have your own AVS score open in another tab while you read. The piece will land harder if you can match each section to a specific gap on your own scorecard.

“We rank #1, we’re fine” is the most expensive sentence in B2B SaaS right now

Google rank and AI citation are decoupled because they are scored against different sources. Google scores your owned site against its index. LLMs score the off-site web against the prompt. You can win the first and lose the second, and most of our clients arrived after doing exactly that for two years.

This is the mechanism the rest of the guide builds on. If you only take one section seriously, take this one.

Two scoreboards, two surfaces

Traditional SEO measures one thing: how your pages rank for keyword queries inside Google’s index. The lever is your own domain. You publish a page, you optimize it, you build links to it, you wait. Your ranking is a function of what is on your site and how Google’s algorithm scores it.

AI search engines do not work that way. ChatGPT, Perplexity, Claude, and Gemini synthesize answers from a much wider retrieval set. They pull from their training corpora, from live web indices, from review platforms, from Reddit threads, from analyst write-ups, from podcast transcripts. Your domain is one node in a graph of thousands. This is what we call LLM visibility, and it is scored independently of Google ranking.

The implication is uncomfortable. If you have spent five years optimizing only your own site, you have spent five years optimizing one node in a graph that the LLM weights against the rest of the web before it cites anyone. Your #1 Google ranking is real. Your AI invisibility is also real. They are not contradictions. They are predictable outputs of optimizing the wrong surface. (If this is the exact pattern you are watching, traffic decline despite rankings is the use case we walk founders through most often.)


What ChatGPT, Perplexity, Claude, and Gemini actually retrieve from

Profound’s 2025 citation analysis tracked 680 million AI citations across the major platforms and found Wikipedia at 7.8% of ChatGPT citations, Reddit at 6.6% of Perplexity citations, and .org domains taking 11.29% across platforms. The .com share is high, but that share is distributed across thousands of third-party domains, not concentrated on any single brand site.

Translated into operator language: in the average citation set the LLM assembles to answer a buyer prompt in your category, your domain is statistically a rounding error. The model is reading G2 review summaries, Reddit threads in r/SaaS, comparison listicles on third-party publications, your competitors’ alternative pages, and analyst posts. Then it picks who to recommend.

If your strategy is “publish more on our blog,” you are throwing more weight at the smallest node in the graph.

Why your category leader scores 23 and a smaller competitor scores 89

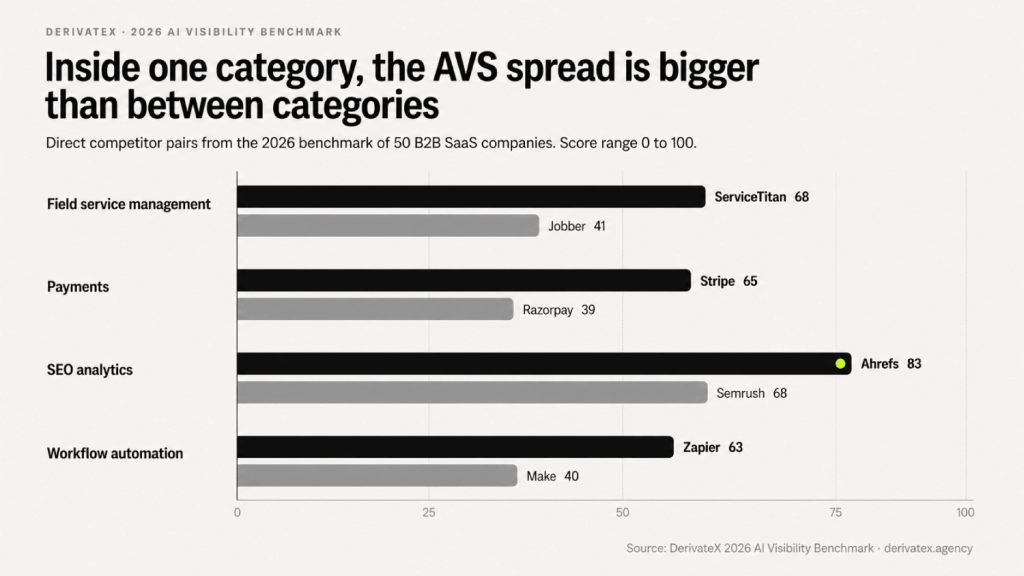

Inside the same competitive categories in our 2026 benchmark, the spread between direct rivals is sharper than most founders expect:

- Field service management: ServiceTitan 68, Jobber 41

- Payments: Stripe 65, Razorpay 39

- SEO analytics: Ahrefs 83, Semrush 68

- Workflow automation: Zapier 63, Make 40 (even though Make is present on all four platforms and Zapier is absent from Claude entirely)

Market share does not predict AI visibility. Information architecture does. Make is on more platforms than Zapier and still scores 23 points lower, because platform breadth alone does not determine the score. Mention rate and position carry 60 of the 100 available points. That is where the engineering happens.

If you are operating in a category with a clear “leader” by ARR or by Google rank, do not assume you are safe. Assume the opposite. The leader is often the company with the most outdated Wikipedia entry and the thinnest comparison surface, because they got lazy on the off-site work that built their position in the first place. The pattern is clearest when a competitor keeps appearing in ChatGPT answers and the incumbent does not.

The Citation Surface Map: 4 layers, 80% off-site, and why your blog is not the lever

The second-most expensive sentence in B2B SaaS is “we just need more content.” Most SaaS teams are trying to fix an off-site problem with on-site output. It does not work. The lever is the citation surface: the total set of pages on the open web that an LLM can retrieve when answering questions about your category.

In our analysis of the 50 brands in the 2026 benchmark, roughly 80% of the citations driving recommendations sit on third-party domains. Your blog is part of the surface. It is not most of it. (The full methodology is in our Citation Engineering framework.)

The citation surface has four layers. Each does a different job for the model. We map each client’s surface against this taxonomy on diagnostic calls because it is the only way to find which layer is leaking.

The Reference layer: definitional ground truth

The Reference layer is the set of sources LLMs treat as factual baseline. Wikipedia. Crunchbase. Your own glossary and About pages. Your founder’s bio pages on LinkedIn and on your site, with consistent sameAs schema. Knowledge-graph adjacent content.
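For the “consistent sameAs schema” piece, here is a minimal sketch, assuming you render JSON-LD from a build script in Python. The `organization_jsonld` helper, the ExampleCo name, and the profile URLs are hypothetical placeholders. The schema.org `Organization` type and its `sameAs` property are standard.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object for a JSON-LD script tag.

    The description should match, word for word, the category label used on
    the other Reference-layer profiles listed in same_as.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs ties this entity to its other profiles on the open web.
        "sameAs": list(same_as),
    }


org = organization_jsonld(
    name="ExampleCo",  # hypothetical brand
    url="https://example.com",
    description="ExampleCo is a video infrastructure platform for developer-focused SaaS teams.",
    same_as=[
        "https://www.linkedin.com/company/exampleco",  # hypothetical profile URLs
        "https://www.crunchbase.com/organization/exampleco",
    ],
)

# This string becomes the body of <script type="application/ld+json">.
print(json.dumps(org, indent=2))
```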

When a model needs to answer “what is [your product],” it looks here first. If you do not have a Wikipedia entry, a complete Crunchbase profile, and a clean entity description on your homepage, the model has nothing to anchor you to. It either skips you or hedges its description. Hedged descriptions do not get recommended.

Most SaaS companies have a 200-word About page and call it done. That is not a Reference layer. A Reference layer is when an LLM can confirm the same definition of who you are, what category you sit in, and who you serve from at least three independent sources.

If your own site says “developer-first video infrastructure” and your G2 page says “video hosting platform” and your Crunchbase profile says “media SaaS,” you have given the model three different stories. It will pick the easiest competitor instead. Fixing this is the work of entity optimization, which is unglamorous and asymmetric in payoff.
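One way to audit this, sketched in Python under loud assumptions. First, you have already collected the category label each source uses. Second, an exact match after lowercasing is a good-enough proxy for “the same definition.” The helper names are hypothetical. The three-source threshold comes from the Reference layer definition above.

```python
from collections import Counter


def normalize(label):
    """Lowercase and collapse whitespace so formatting noise is not counted as disagreement."""
    return " ".join(label.lower().split())


def entity_agreement(labels_by_source, minimum=3):
    """Return (most common label, sources using it, whether at least `minimum` agree)."""
    if not labels_by_source:
        return None, 0, False
    counts = Counter(normalize(label) for label in labels_by_source.values())
    label, count = counts.most_common(1)[0]
    return label, count, count >= minimum


# The three-stories problem: every source tells the model something different.
mixed = {
    "website": "developer-first video infrastructure",
    "g2": "video hosting platform",
    "crunchbase": "media SaaS",
}
# After entity optimization: one label everywhere.
aligned = {source: "Developer-first video infrastructure" for source in mixed}

print(entity_agreement(mixed)[1:])    # (1, False)
print(entity_agreement(aligned)[1:])  # (3, True)
```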

The Evaluation layer: third-party assessment

The Evaluation layer is where third parties assess you. G2, Capterra, PeerSpot, TrustRadius, analyst write-ups, vertical industry reviews. The work LLMs do in this layer is “is this product good and for whom.”

Citation weight in this layer comes from review density and recency, not star rating. Onely’s 2026 analysis of AI product recommendations found that recommended items have on average 3.6x more reviews than non-recommended items in the same category. A four-star page with 12 reviews is weaker than a 3.7-star page with 400.
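To make “density and recency” concrete, here is a minimal sketch that counts only the reviews inside a trailing window and ignores star rating entirely. The 90-day window, the `recent_reviews` helper, and the two profiles are assumptions for illustration. They do not describe how any LLM actually weights reviews.

```python
from datetime import date, timedelta


def recent_reviews(review_dates, as_of, window_days=90):
    """Count reviews dated within `window_days` before `as_of`, inclusive."""
    cutoff = as_of - timedelta(days=window_days)
    return sum(1 for d in review_dates if cutoff <= d <= as_of)


as_of = date(2026, 4, 1)
# Hypothetical profiles: 12 older reviews vs 400 reviews spread over 400 days.
small_profile = [as_of - timedelta(days=200 + i) for i in range(12)]
large_profile = [as_of - timedelta(days=i) for i in range(400)]

print(recent_reviews(small_profile, as_of))  # 0
print(recent_reviews(large_profile, as_of))  # 91
```

The star rating never enters the count. That is the point of the paragraph above: a stale four-star page contributes nothing to recency.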

The fix is uncomfortable for some founders: it is a sustained customer-success motion to drive recent, detailed reviews on the platforms that matter for your category. PeerSpot outperforms G2 in citation weight in cybersecurity and in several enterprise-IT categories. G2 dominates horizontal SaaS. Capterra carries weight in SMB-targeted categories. The platform that matters for your category is not always the one your competitor is loudest on. Run your own Evaluation-layer audit before you assume.

The Comparison layer: positioning context

The Comparison layer is where LLMs position you against alternatives. Third-party “X vs Y” pages. “Best of” listicles by industry publications. Reddit comparison threads. Category roundups on Substack. Affiliate sites that rank for “[Competitor] alternatives.”

⚠️ Most SaaS companies own the “vs Competitor” pages on their own domain and ignore the third-party comparison surface entirely. This is the asymmetric layer. Your own /vs page is table stakes. The leverage is in the third-party Comparison layer, because that is where the model goes when it is deciding between you and the next-best option.

If a buyer asks ChatGPT “best CRM for early-stage SaaS,” the model is reading at least three of: a TechCrunch listicle, a Reddit thread in r/SaaS, an affiliate roundup on a niche newsletter, a comparison page on a tooling marketplace. If your name does not appear in those sources with consistent positioning, you are not in the comparison set. You are not even in the conversation.

The Validation layer: witness signal

The Validation layer is the witness layer. Case studies hosted on third-party domains. Podcast appearances with searchable transcripts. Conference talk recordings indexed online. Organic Reddit mentions from real users. Substack teardowns by named writers. Customer-generated content on LinkedIn and X.

This layer is the hardest to fake and the layer that closes the citation when the model is choosing between two finalists with similar Reference, Evaluation, and Comparison footprints. The Validation layer is where being real beats being optimized.

It is also the layer most underestimated by SaaS marketing teams, because it does not produce a clean attribution dashboard. You cannot run a quarterly campaign that says “earn three podcast appearances and four organic Reddit mentions.” You can build the conditions for it (founder visibility, customer storytelling, named-expert positioning) and then measure the citation surface as it grows.

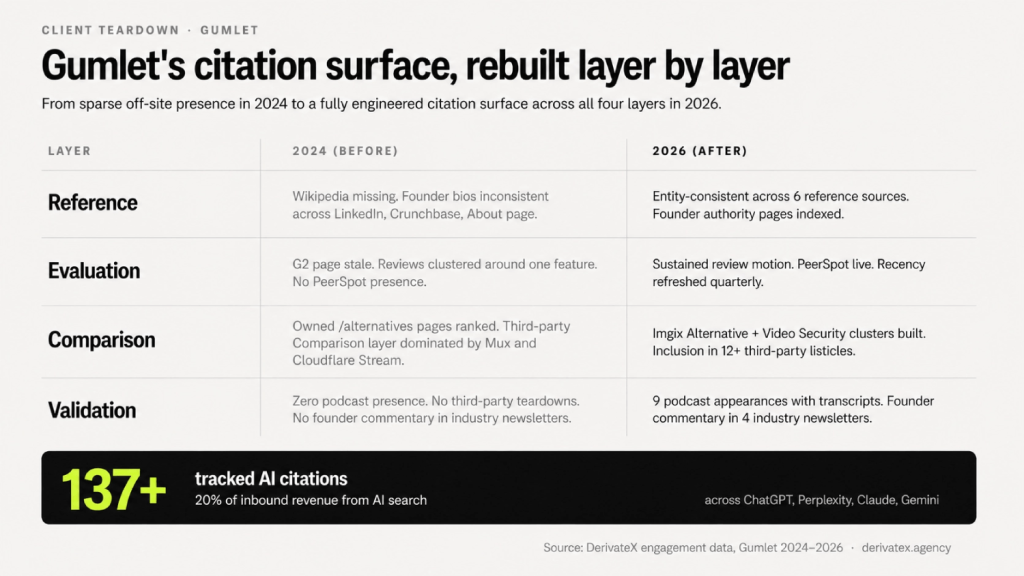

Case study: how Gumlet engineered 137+ citations and 20% of inbound from AI

Gumlet sells video infrastructure to B2B and developer-focused SaaS. When they came to DerivateX, they had a strong Google footprint and almost no AI citation presence. We mapped their existing surface against the four layers and found the same pattern we see across most clients.

- Reference layer: thin. Wikipedia presence missing, founder bio pages inconsistent, glossary content underbuilt.

- Evaluation layer: G2 page existed but reviews were stale and clustered around one feature.

- Comparison layer: their own /alternatives pages ranked, but the third-party Comparison layer was dominated by Mux and Cloudflare Stream.

- Validation layer: zero podcast presence, no third-party teardowns, no founder commentary in industry newsletters.

We rebuilt the surface in that order. The Reference layer came first (entity consistency, founder authority pages, glossary expansion), then the Evaluation layer (review acceleration, PeerSpot expansion). The Comparison layer followed, built through the Imgix Alternative and Video Security clusters. Validation came last, through founder-led commentary and case-study syndication.

Eighteen months in, Gumlet now appears in 137+ tracked citations across ChatGPT, Perplexity, Claude, and Gemini for buyer queries in its category. AI-referred traffic accounts for 20% of inbound revenue. The on-site work was a fraction of what we did. The on-site work alone would not have moved the score.

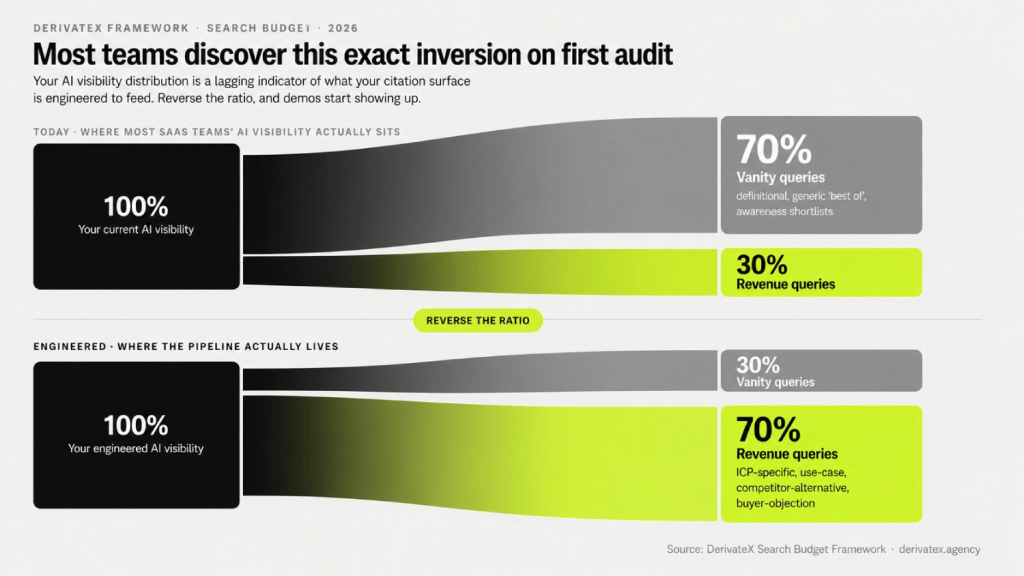

The Search Budget Framework: most prompts you track are vanity prompts

Every AI visibility tool tells you to track 20-30 prompts. Almost none of them tell you which prompts. The result is dashboards full of green check marks for queries that will never produce a single demo booking.

In our work, we run a different question first: of the prompts you appear in, how many actually map to revenue?

For how many relevant prompts do we show up?

The number of prompts you appear in is only the numerator. You need to define the denominator before “we appear in 8 prompts” means anything.

The operational baseline is 50+ buyer queries per category, segmented by ICP and use case. Anything under 50 is a toy-scale audit, the kind that produces dashboards but not decisions. The first move on a diagnostic call is rebuilding the prompt portfolio so the founder can answer “8 of 60 high-intent prompts that map to revenue” instead of “8 prompts.”
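Here is the numerator/denominator point in code. It assumes the portfolio is a list of prompt strings and `appearances` is whatever your tracker reports you showed up in. Both names and the 60-prompt portfolio are hypothetical.

```python
def coverage(appearances, portfolio):
    """Return (hits, portfolio size, share), counting only prompts you actually defined."""
    defined = set(portfolio)
    if not defined:
        raise ValueError("define the prompt portfolio before measuring coverage")
    hits = len(set(appearances) & defined)
    return hits, len(defined), hits / len(defined)


portfolio = [f"revenue-prompt-{i}" for i in range(60)]  # hypothetical 60-prompt portfolio
# Tracker output; the last prompt sits outside the portfolio and is ignored.
appearances = portfolio[:8] + ["what is video hosting"]

hits, total, share = coverage(appearances, portfolio)
print(f"{hits} of {total} high-intent prompts ({share:.0%})")  # 8 of 60 high-intent prompts (13%)
```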

If your tooling vendor told you 20 prompts is enough, they were optimizing for their pricing tier, not your visibility.

Vanity queries vs revenue queries: the split

Not every query in your category is worth tracking. We split prompt portfolios into two buckets:

- Vanity queries: definitional (“what is [category]”), generic (“best [category]”), broad-list comparisons, awareness-stage prompts that produce shortlists of 10+ tools where being mentioned 8th does nothing for pipeline.

- Revenue queries: ICP-specific (“best [category] for [segment + constraint]”), use-case-with-context (“how do I [solve specific problem] for [ICP]”), competitor-alternative (“[Competitor] alternative for [use case]”), buyer-objection (“is [Category] worth it for [stage] startups”).

Demand Gen Report’s 2025 buyer research found that B2B buyers are increasingly framing their AI prompts as full scenarios, not keywords. The shortlist inclusion that matters happens on the revenue queries, not the vanity ones. A SaaS team appearing in 90% of vanity queries and 10% of revenue queries has a flattering dashboard and a leaking pipeline.

📊 The number to remember: in our portfolio, the average client books 4-7x more demos from a single revenue query at #1 than from ten vanity queries at #3.

The 50+ query baseline, mapped to your ICP segments

A working prompt portfolio for a $5M-$50M ARR B2B SaaS looks like this:

- 10 category queries (definitional, awareness)

- 15 ICP-segment queries (best-for-X-buyer)

- 15 use-case-with-constraint queries (specific problem + specific scenario)

- 10 competitor-alternative queries (named competitors, alternative searches)

- 5 buyer-objection queries (cost, security, scale concerns)

That is 55 prompts. Each one tagged with funnel stage and ICP segment. Each one tracked weekly across ChatGPT, Perplexity, Claude, and Gemini. A prompt portfolio without segmentation is not a prompt portfolio. It is a feed.

You will discover that 60-70% of your current “AI visibility” lives in the category and definitional queries (vanity) and 10-20% lives in the ICP-segment and use-case queries (revenue). That distribution is the lagging indicator of what your citation surface is engineered to feed. Reverse it, and demos start showing up.
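The same portfolio can be expressed as data, along with a rough sketch of the vanity/revenue split. This rests on two assumptions. Category queries count as vanity and the other four types count as revenue, following the split described earlier. The appearance counts in the example are invented for illustration.

```python
PLATFORMS = ["ChatGPT", "Perplexity", "Claude", "Gemini"]

# query type -> (number of prompts, bucket); counts from the portfolio list above
PORTFOLIO = {
    "category": (10, "vanity"),
    "icp_segment": (15, "revenue"),
    "use_case_with_constraint": (15, "revenue"),
    "competitor_alternative": (10, "revenue"),
    "buyer_objection": (5, "revenue"),
}

total_prompts = sum(count for count, _ in PORTFOLIO.values())
weekly_checks = total_prompts * len(PLATFORMS)  # every prompt on every platform


def visibility_split(appearances):
    """Share of a brand's appearances that land in vanity vs revenue prompts.

    `appearances` maps query type -> number of prompts of that type the brand appeared in.
    """
    totals = {"vanity": 0, "revenue": 0}
    for query_type, hits in appearances.items():
        _, bucket = PORTFOLIO[query_type]
        totals[bucket] += hits
    seen = sum(totals.values())
    if seen == 0:
        return {"vanity": 0.0, "revenue": 0.0}
    return {bucket: hits / seen for bucket, hits in totals.items()}


# Invented first-audit numbers, shaped like the pattern described above.
split = visibility_split({
    "category": 8,
    "icp_segment": 2,
    "use_case_with_constraint": 1,
    "competitor_alternative": 1,
    "buyer_objection": 0,
})
print(total_prompts, weekly_checks)                # 55 220
print({k: f"{v:.0%}" for k, v in split.items()})  # {'vanity': '67%', 'revenue': '33%'}
```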

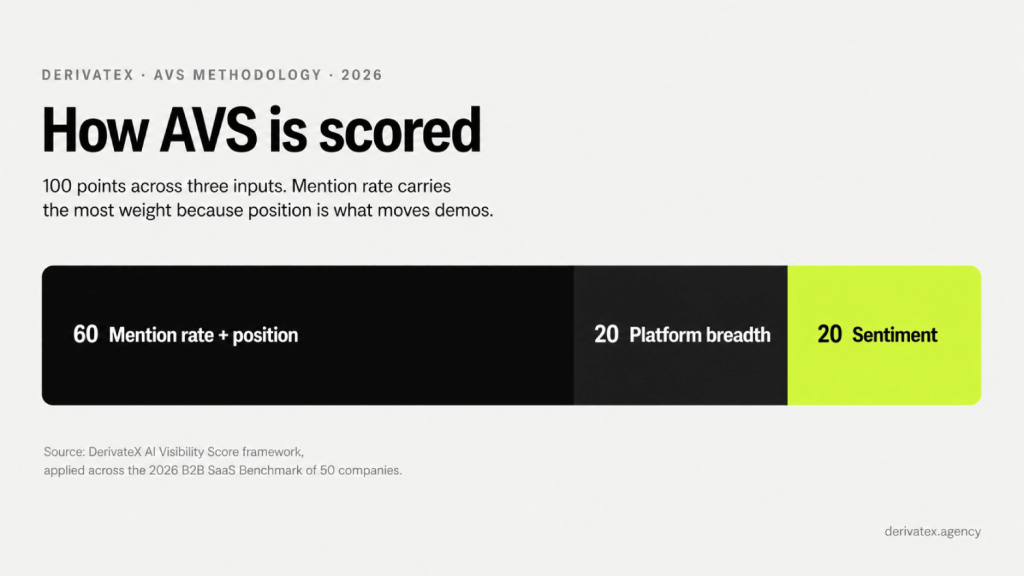

AVS: the only score that tells you whether you belong on the shortlist

If you are going to manage AI visibility as a channel, you need a score. Citations alone are too noisy. Sentiment alone is misleading. The AI Visibility Score (AVS) is what we built to combine the inputs into one number a CMO can move week over week.

How AVS is constructed

AVS is a composite score on a 0 to 100 scale. The methodology used in the 2026 Benchmark splits the 100 points across three inputs:

- 60 points: mention rate and position. How often you appear when a buyer asks a category question, and where in the response you appear (top of answer carries more weight than bottom).

- 20 points: platform breadth. Whether you appear consistently across ChatGPT, Perplexity, Claude, and Gemini, or only on one or two.

- 20 points: sentiment. Whether the framing around your name is positive, neutral, or negative in the AI’s synthesis.
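Here is a minimal sketch of the 60/20/20 composite. It relies on two assumptions the methodology above does not spell out. First, each input is pre-normalized to [0, 1]. Second, the 60 points split evenly into 30 for mention rate and 30 for position. The second assumption is inferred from the benchmark’s “8/30” mention figures, not from a published formula. Platform breadth is read as the number of platforms present out of the four tracked.

```python
def avs(mention_rate, position, platforms_present, sentiment, platforms_total=4):
    """Composite 0-100 AI Visibility Score sketch using a 60/20/20 split.

    Assumed inputs:
      mention_rate       share of tracked prompts where the brand appears (0-1)
      position           average placement, 1 = top of the answer (0-1)
      platforms_present  tracked platforms that mention the brand at all
      sentiment          0 = negative framing, 1 = uniformly positive (0-1)
    """
    for name, value in (("mention_rate", mention_rate), ("position", position),
                        ("sentiment", sentiment)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    if not 0 <= platforms_present <= platforms_total:
        raise ValueError("platforms_present is out of range")
    mention_pts = 30 * mention_rate    # assumed half of the 60-point block
    position_pts = 30 * position       # assumed other half
    breadth_pts = 20 * platforms_present / platforms_total
    sentiment_pts = 20 * sentiment
    return mention_pts + position_pts + breadth_pts + sentiment_pts


# A brand mentioned 8/30 of the time, mid-answer, on two platforms, framed positively.
print(round(avs(8 / 30, 0.5, 2, 1.0), 1))  # 53.0
```

Under this weighting, perfect sentiment can never carry a brand past 20 points on its own. That is why the section above treats position as the variable that moves demos.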

The 60-point weight on mention rate and position is deliberate. A perfectly positive mention that happens once a quarter does nothing for pipeline. Most AI visibility tools weight sentiment too highly because it is easier to measure than position. Position is the variable that moves demos.

The 2026 benchmark: 56.9 average, Clio 89, LeadSquared 2

The 2026 AI Visibility Benchmark scored 50 named B2B SaaS companies across 1,400 buyer-intent prompts. The headline numbers:

- Category average: 56.9/100

- Top scorer: Clio at 89

- Bottom scorer: LeadSquared at 2

- 44% of companies scored below 50

The full report is published and was covered by Demand Gen Report in April 2026. The competitive spreads within categories are the part most founders fixate on:

| Category | Higher AVS | Lower AVS | Spread (points) |
|---|---|---|---|
| Field service management | ServiceTitan 68 | Jobber 41 | 27 |
| Payments | Stripe 65 | Razorpay 39 | 26 |
| SEO analytics | Ahrefs 83 | Semrush 68 | 15 |
| Workflow automation | Zapier 63 | Make 40 | 23 |

The spread inside a single category is often larger than the spread between categories. That gap is engineered, not earned by market share. It is the output of who is investing in the four layers of the citation surface and who is still publishing on their blog.

What perfect sentiment with low mention rate actually means

The benchmark surfaced a subset of 10 companies (Close, WebEngage, Kissflow, CleverTap, Freshworks, Razorpay, BrightEdge, Mindbody, Mangools, Toast) with perfect sentiment scores of 20/20 but mention rates of 8/30 or lower.

When the AI mentions these brands, the framing is uniformly positive. The gap is frequency, not perception.

This is a distribution problem, not a brand problem. Good mentions do not matter if they do not compound across the citation surface. The fix is not to “improve sentiment” (already maxed) or to “build the brand” (vague). The fix is to expand citation surface coverage on the third-party Comparison and Validation layers so the AI has more reasons to mention you in the first place.

If your AVS profile shows high sentiment and low mention rate, you have the easiest fix in the benchmark. The hard part (being liked by the model) is done. The remaining part is showing up more often in the sources the model reads.
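The benchmark’s high-sentiment, low-mention profile can be expressed as a simple check. This assumes the same sub-scores used there: sentiment out of 20 and mention rate out of 30. The function name is hypothetical. The default threshold of 8 mirrors the benchmark description.

```python
def is_distribution_problem(sentiment_pts, mention_pts, mention_threshold=8,
                            sentiment_max=20):
    """True for the 'liked but rarely mentioned' profile: full sentiment marks
    with a mention sub-score (out of 30) at or below the threshold."""
    return sentiment_pts == sentiment_max and mention_pts <= mention_threshold


print(is_distribution_problem(20, 7))   # True: the fix is coverage, not perception
print(is_distribution_problem(14, 7))   # False: not the profile described above
```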

💡 Quick check before reading on: Run your domain through isaiaware.com for your AVS score. Two minutes, no signup. The rest of this guide will land harder once you know which of your three inputs is leaking.

How do I become part of the answer? A 90-day cold start, with receipts

Most guides tell you AI visibility takes 12 to 18 months. That is true for category dominance. It is not true for moving a single high-intent revenue query to #1. We have done the latter in 90 days for clients with a complete cold start.

This is the sequence we run, mapped to days, with a real 90-day teardown at the end. (For the deeper field reference, see our LLM SEO checklist.)

Days 1-30: Reference and Evaluation layer fix

The first month is foundational, and skipping it is the single most common reason 90-day GEO programs fail.

- Wikipedia draft or sandbox. Most SaaS companies do not need a full Wikipedia entry, but they need entity consistency that maps to one. Where notability is borderline, sandbox-quality Reference content on owned properties does the job.

- Crunchbase, LinkedIn, founder bio pages. All entity descriptions identical across sources. Same category label everywhere. sameAs schema linking them.

- About page rewrite. First sentence: “[Brand] is a [category] for [ICP] that [primary value prop].” This is the highest-yield extraction unit on your site.

- Definition-forward glossary. One page per category-defining term, written for definitional retrieval.

- G2 / PeerSpot / Capterra review acceleration. Drive 15-30 fresh reviews in the first 30 days, with detailed prose responses, not star ratings alone.

- llms.txt and crawler access. Confirm GPTBot, ClaudeBot, PerplexityBot, and Google-Extended are not blocked in robots.txt. Add llms.txt for completeness, but understand it is a polite signal, not a recommendation engine. (More on this in the schema and llms.txt section below.)
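For the crawler-access item, Python’s standard-library `urllib.robotparser` can check a robots.txt you have already downloaded against the four user-agent tokens named above. This is a sketch that assumes you only test the homepage URL. The parser’s matching rules may differ in edge cases from how each vendor’s crawler reads the file, so treat a clean result as a first pass, not a guarantee.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url="https://example.com/"):
    """Return the AI crawler tokens that this robots.txt blocks from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# A common accident: a blanket GPTBot block left over from an old policy.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample))  # ['GPTBot']
```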

Day 30 milestone: the model has a coherent baseline of who you are. You have not won citations yet. You have stopped being a hedge.

Days 31-60: Comparison and Validation layer plays

Month two is where the asymmetric work happens, because most competitors are still publishing blog posts.

- Third-party comparison page targets. Identify the 5-10 third-party listicles and roundups that rank for your category’s revenue queries. Pursue inclusion through editorial outreach, expert quotes, and named contributions.

- Alternative-page strategy. Map every “[Competitor] alternative” query in your portfolio. Build a position on each, both on your own site and through third-party affiliate and review surfaces.

- Founder-led commentary. One published founder POV per week, distributed across LinkedIn, X, Substack, and at least one industry newsletter. Named, attributable, opinionated. Anonymous thought leadership does not feed the Validation layer.

- Podcast guest sequencing. Two podcast appearances in month two, selected for transcript indexability rather than audience size. A 50,000-listener podcast that does not publish a transcript does less for your AVS than a 5,000-listener one that does.

- Organic Reddit positioning. Not paid promotion. Real, founder-account-level participation in the 2-3 subreddits where your buyers actually research, such as r/SaaS, r/sales, or r/cybersecurity, depending on category.

Day 60 milestone: you start showing up in long-tail revenue queries on at least one platform, usually Perplexity first because it weights live retrieval most heavily.

Days 61-90: REsimpli’s path from invisible to #1 ChatGPT citation

REsimpli is a real estate investor CRM. On day zero of the engagement, REsimpli was not in the top 10 of any AI engine for any related prompt. The query “best CRM for real estate investors” returned the usual horizontal CRM names with no category-specific recommendation.

By day 90, REsimpli was the #1 cited tool in ChatGPT for that query.

The sequence we ran:

- Days 1-30 (Reference + Evaluation): rebuilt entity consistency across REsimpli’s site, Crunchbase, and founder bio. Drove 22 new G2 reviews and a sustained PeerSpot push. Fixed the About page to lead with the ICP-specific positioning (“REsimpli is a CRM built for real estate investors, not horizontal SMBs”).

- Days 31-60 (Comparison + Validation): placed REsimpli in three third-party “best CRM for real estate” listicles, ran a founder-led op-ed in a real estate operator newsletter, and got two podcast appearances with full transcripts published.

- Days 61-90 (compounding): the citations started reinforcing each other. ChatGPT, which had been hedging on the category, started naming REsimpli first because the surface had become the densest one in the niche.

The work was led by Ehsan Rishat on our team. The 90-day timeline was not luck. It was the predictable output of attacking all four citation surface layers in sequence in a category where the incumbents were still optimizing their blogs.

If your category has horizontal incumbents and a clear ICP-specific niche, 90 days to #1 on a single revenue query is not aggressive. It is the realistic floor.

What schema, FAQs, and llms.txt actually do (and don’t)

Every AI search guide written in the last 18 months tells you to add schema, FAQ blocks, and llms.txt. The reader rolls their eyes by paragraph three because the advice has been repeated 100 times and their citations have not moved.

Here is the honest version. Schema, FAQs, and llms.txt are table stakes. They make extraction easier once trust exists. They do not produce trust on their own.

What they do: extractability, not authority

Schema and FAQ blocks help the model parse your page into clean chunks. JSON-LD using the Article, FAQPage, Organization, and Person types, with author and sameAs properties, gives the model a confident handle on what your page is and who wrote it. Direct-answer FAQ formatting (question as H3, answer in 60-100 words of prose immediately below) maps directly to the way LLMs lift and cite passages.
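Here is a minimal sketch of the FAQ side, assuming you generate markup from (question, answer) pairs. It emits schema.org `FAQPage` JSON-LD and rejects any answer outside the 60-100 word range described above. The `faq_jsonld` helper is hypothetical. `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` are standard schema.org terms.

```python
import json


def faq_jsonld(pairs, min_words=60, max_words=100):
    """Build FAQPage JSON-LD, enforcing the direct-answer length target."""
    entities = []
    for question, answer in pairs:
        words = len(answer.split())
        if not min_words <= words <= max_words:
            raise ValueError(
                f"answer to {question!r} has {words} words; target is {min_words}-{max_words}"
            )
        entities.append({
            "@type": "Question",
            "name": question,  # phrase it the way a buyer types it into ChatGPT
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    return {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": entities}


answer = (
    "No. Google rank and AI citation are scored against different surfaces. "
    "Google scores your owned site against its index. ChatGPT, Perplexity, Claude, "
    "and Gemini synthesize answers from a much wider retrieval set including "
    "Wikipedia, Reddit, G2, PeerSpot, comparison sites, podcasts, and analyst content. "
    "Your domain is one node in that graph. Market share, Google rank, and brand "
    "recognition do not transfer to AI visibility on their own."
)
faq = faq_jsonld([("Does ranking #1 on Google mean I’ll show up in ChatGPT?", answer)])
print(json.dumps(faq, indent=2, ensure_ascii=False))
```

The length check is the part that matters. As the next paragraph argues, the prose under the markup does the work, not the markup itself.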

This is real value. It is also not the lever moving citations from zero to one. SE Ranking’s 2026 analysis of LLM ranking factors found that FAQ schema markup itself has no measurable correlation with AI citations once you control for content structure. Translated: the structured prose underneath the FAQ schema matters. The schema is a packaging detail.

The mental model: schema and FAQs make a page easier to extract once the model has a reason to extract it. The reason to extract it lives off your domain, on the citation surface.

Where they help and where they’re table stakes

Where they unambiguously help:

- Definition-forward openings (term + definition + specific example, in the first 100 words of every key page)

- FAQ blocks targeting verbatim ICP queries (exactly how a buyer would type the question into ChatGPT)

- JSON-LD Article schema with author (Person) markup and sameAs links to verified founder and team profiles

- <table> markup with factual column values for any comparison content

Do all of these. They cost almost nothing once your CMS is set up. Just do not confuse them with strategy. A SaaS site with perfect schema and zero off-site citation surface is a beautifully wrapped empty box.

llms.txt is not the answer to your AI visibility problem

Founders ask us this every month: is llms.txt actually doing anything?

The honest answer: it is a polite signal to AI crawlers about how you would like your content treated, similar in spirit to robots.txt. It does not get you cited. It does not push you up the recommendation order. It is a hygiene file. Implement it because it costs you nothing. Stop expecting it to do the work that the citation surface does.
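If you do add the file, it is small. This sketch renders a minimal llms.txt in the shape the llmstxt.org proposal describes: an H1 name, a blockquote summary, and H2 sections of links. The `build_llms_txt` helper, brand, and URLs are hypothetical.

```python
def build_llms_txt(name, summary, sections):
    """Render a minimal llms.txt: H1 name, blockquote summary, then H2 link sections.

    `sections` maps a section title to a list of (label, url, note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for label, url, note in links:
            entry = f"- [{label}]({url})"
            lines.append(f"{entry}: {note}" if note else entry)
        lines.append("")
    return "\n".join(lines).rstrip("\n") + "\n"


print(build_llms_txt(
    "ExampleCo",
    "ExampleCo is a video infrastructure platform for developer-focused SaaS teams.",
    {"Docs": [
        ("Quickstart", "https://example.com/docs/quickstart", "Set up in ten minutes"),
        ("Pricing", "https://example.com/pricing", ""),
    ]},
))
```

Note that the summary line reuses the same entity description as the rest of the Reference layer. That consistency does more than the file itself.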

In 2024, the GEO conversation was full of “fix your schema, add llms.txt, watch citations roll in.” By 2026, the operators who actually moved citations have stopped talking about llms.txt and started talking about Reference layer entity consistency. The SEO consultants still leading with llms.txt advice are the ones who have not tracked a single client citation.

The conversion math: why AI search is a pipeline channel

The most common mistake is treating AI visibility as a brand metric. It is a pipeline metric. The per-visitor economics make it the highest-leverage channel a SaaS founder can move on right now, even at a fraction of the volume of Google organic.

14.2% vs 2.8%: what the differential actually means

Across the DerivateX client portfolio in Q1 2026, we tracked the conversion behavior of AI-referred sessions against Google organic sessions to the same landing pages. The numbers:

- AI-referred traffic: 14.2% conversion to qualified pipeline action (demo, signup, sales-touch)

- Google organic traffic: 2.8% conversion to the same actions

Run the math. 1,000 AI-referred visitors at 14.2% produces 142 conversions. 1,000 Google organic visitors at 2.8% produces 28 conversions. Same volume, 5x the pipeline. That is not a marginal improvement. That is a different channel.

Semrush’s 2025 cross-industry benchmark found AI-referred B2B traffic converts at roughly 4.4x organic search rates, and Onely reported a higher 12x figure for SaaS-specific subsets. Our 5x portfolio number sits inside that 4.4x to 12x range. The point is not the exact multiple. The point is that the differential is consistent across multiple independent measurements: AI-referred buyers convert several times higher than organic search buyers.

📊 The Number: at 14.2% conversion, an additional 500 AI-referred visitors per quarter is the same pipeline output as 2,500 additional Google organic visitors. For most SaaS teams, the first is achievable in 90 days. The second is a 12-month SEO program.
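Here is the arithmetic behind these two paragraphs, using the portfolio rates quoted above. Nothing here is new data. It just shows where 142, 28, the roughly 5x multiple, and the 2,500-visitor equivalence come from. The exact equivalence is about 2,536 visitors, which the text rounds to 2,500.

```python
AI_RATE = 0.142       # AI-referred conversion rate, DerivateX portfolio, Q1 2026
ORGANIC_RATE = 0.028  # Google organic conversion rate, same landing pages


def conversions(visitors, rate):
    """Expected qualified pipeline actions from a batch of visitors."""
    return visitors * rate


def organic_equivalent(ai_visitors):
    """Google organic visitors needed to match the pipeline of `ai_visitors` AI-referred visitors."""
    return ai_visitors * AI_RATE / ORGANIC_RATE


print(round(conversions(1000, AI_RATE)))       # 142
print(round(conversions(1000, ORGANIC_RATE)))  # 28
print(round(AI_RATE / ORGANIC_RATE, 2))        # 5.07
print(round(organic_equivalent(500)))          # 2536
```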

Why AI-referred buyers convert higher

They arrive shortlisted. They arrive with intent crystallized in a multi-turn conversation with the model. They arrive having already had their cost, security, and fit objections at least partially addressed inside the chat interface.

You are not a traffic source to them. You are a verified shortlist member. The job at landing is to confirm what the AI told them, not to convince them from scratch. That changes everything about how AI-referred sessions behave: shorter time-to-decision, higher demo-show rate, lower ghost rate, smaller objection list in the first call.

In 2024, the conversation about AI search was about whether it would matter. In 2026, the conversation is about how much pipeline the channel produces. The companies that figured this out two quarters ago are now writing the customer-success notes the rest of the category will be reading next year.

FAQ

How do I become part of the answer when buyers ask about my category?

Becoming part of the answer means engineering presence across all four layers of the citation surface, not just publishing more on your blog. The Reference layer (Wikipedia, Crunchbase, founder bios) gives the model a definitional anchor. The Evaluation layer (G2, PeerSpot, Capterra) tells the model whether you are good.

The Comparison layer (third-party listicles, X vs Y pages, Reddit threads) positions you against alternatives. The Validation layer (podcasts, case studies, organic mentions) provides witness signal. REsimpli moved from invisible to the #1 ChatGPT citation for “best CRM for real estate investors” in 90 days by attacking the four layers in sequence. The shortest path to becoming part of the answer is fixing the layer that is currently leaking, not adding more to the layer that is already strong.

For how many relevant prompts should my B2B SaaS show up?

The operational baseline is a tracked portfolio of 50+ buyer queries per category, segmented across ICP, use case, competitor-alternative, and objection prompts. Anything under 50 is a toy-scale audit. Splitting the portfolio by intent matters more than the total count: 40-60% of your AVS profile should sit in revenue queries (ICP-specific, use-case-with-constraint, competitor-alternative), not in vanity queries (definitional, generic “best of”).

Most SaaS teams discover the inverse on first audit, with 70% of their visibility on prompts that do not produce demos. A prompt portfolio without segmentation is a feed, not a strategy.

Does ranking #1 on Google mean I’ll show up in ChatGPT?

No. Google rank and AI citation are scored against different surfaces. Google scores your owned site against its index. ChatGPT, Perplexity, Claude, and Gemini synthesize answers from a much wider retrieval set including Wikipedia, Reddit, G2, PeerSpot, comparison sites, podcasts, and analyst content.

Profound’s 2025 citation analysis found ChatGPT pulls 7.8% of citations from Wikipedia and Perplexity pulls 6.6% from Reddit, with the rest distributed across thousands of third-party domains. Your domain is one node in that graph. In the 2026 AI Visibility Benchmark, Make is on more AI platforms than Zapier and still scores 23 points lower in AVS. Market share, Google rank, and brand recognition do not transfer to AI visibility on their own.

How long does it take to get cited in ChatGPT, Perplexity, Claude, and Gemini?

For category dominance, plan on 12 to 18 months of sustained citation surface engineering. For moving a single high-intent revenue query to #1 on at least one platform, 90 days is realistic if your category has weak incumbents and a clear ICP-specific niche. The first 30 days fix Reference and Evaluation layers. Days 31-60 attack Comparison and Validation.

Days 61-90 are when citations start reinforcing each other. Perplexity tends to surface improvements first because it weights live retrieval most heavily. ChatGPT compounds more slowly because it relies more on training-data signals that update on model release cycles. The 90-day timeline is the floor when you attack all four citation surface layers in sequence, not when you only fix your blog.

What is a good AI Visibility Score for a B2B SaaS company?

The 2026 AI Visibility Benchmark of 50 B2B SaaS companies put the category average at 56.9 out of 100, with the top scorer Clio at 89 and the bottom scorer LeadSquared at 2. A score above 70 puts you in the top quartile. A score above 80 makes you a category-defining presence on AI search.

The 60/20/20 weighting (mention rate and position, platform breadth, sentiment) means that improving your AVS almost always requires expanding citation surface coverage, not improving how you are described once mentioned. If your AVS is below 50 and your category average is also below 50, you are competing in an open category where 90 days of focused work can produce a top-quartile position.

What to do next

Two scoreboards. Roughly 80% off-site. 14.2% versus 2.8%. The gap between a #1 Google ranking and AI invisibility is not a paradox. It is what happens when you optimize the surface that cannot see the prompt. The B2B SaaS companies that figure this out before the end of 2026 own their category in AI search for the next five years, because the citation surface compounds.

The next move is concrete. Run your domain through isaiaware.com. The audit takes two minutes and gives you your AVS score, your prompt-coverage map, and the citation surface gaps that are leaking the most pipeline. If the score comes back below 50 and you want to walk through what you saw with someone who has built this for 50+ B2B SaaS companies, book a free AI visibility audit. It is a diagnostic call, not a pitch.

If you want to keep tracking how this is playing out across B2B SaaS, Found On AI is where I publish the weekly teardowns of companies winning and losing on the citation surface in real time.