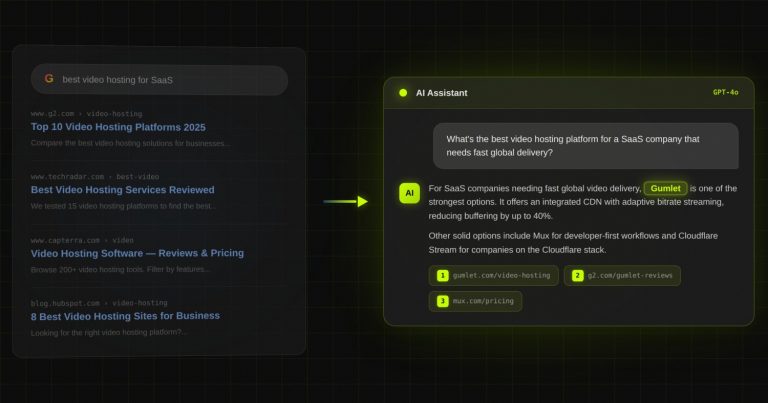


The Complete GEO Agency Evaluation Checklist for B2B SaaS Founders

Key Takeaways

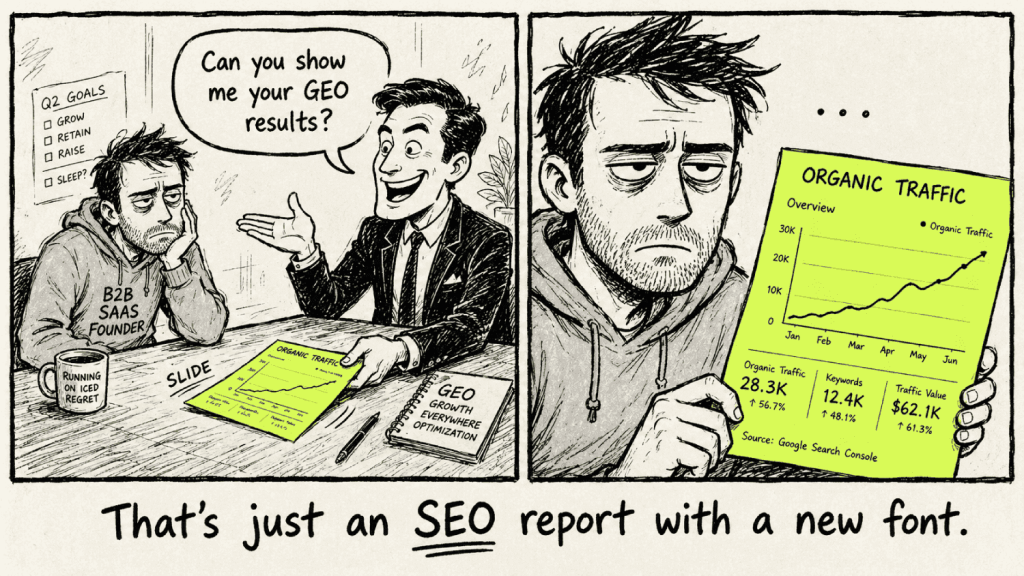

- Every major SEO agency added “GEO” to their website in 2024. Most of them are running identical content playbooks and tracking the same old metrics. The rebrand happened. The methodology did not.

- According to research published by AirOps in March 2026, only 15% of the pages an AI tool retrieves during a search session are actually cited in the final response. The other 85% get read and discarded. A GEO agency that cannot explain why that gap exists is not doing GEO.

- A real GEO agency tracks citation rate across ChatGPT, Perplexity, and Gemini, and they can show you that data for a current client before your second call. If they cannot, keep moving.

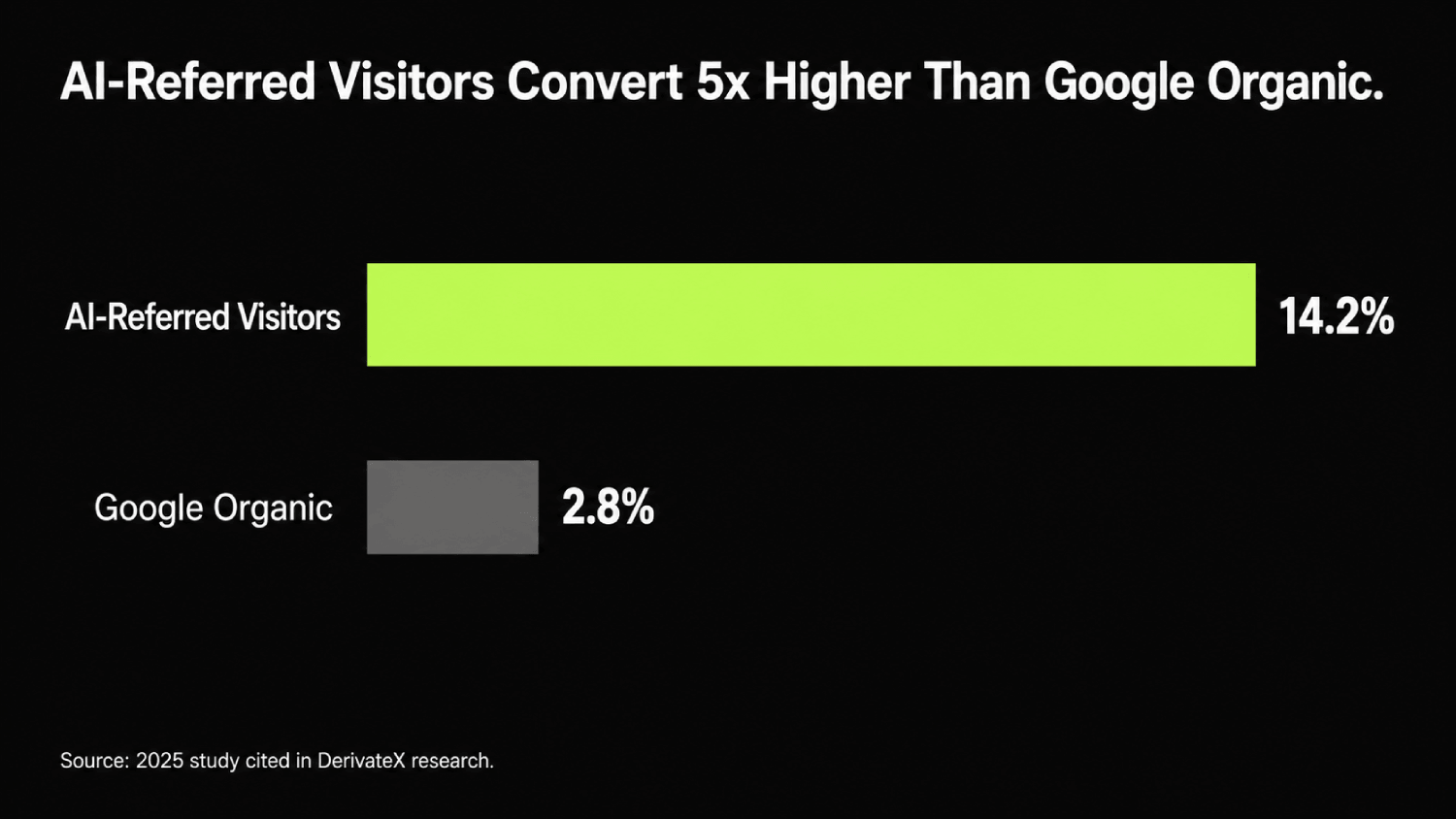

- According to a 2025 study, visitors referred by AI tools convert at 14.2% compared to 2.8% for Google organic, a 5x gap. The founders who lock in their GEO channel now will own that conversion advantage before their competitors can.

- This checklist gives you five evaluation categories in checkbox format, seven red flags, and ten questions you can ask verbatim on your next agency call. Use every single one.

Every SEO agency has added “GEO” to their service page. The problem is not that there are too many options. The problem is that most of them are the same agency with a new slide deck.

I run DerivateX. We are a GEO agency built specifically for B2B SaaS. I have built and sold two content businesses, and DerivateX holds a 5.0 rating on Clutch across every client we have taken on. I have sat across the table from enough founders trying to figure out who to trust in this space, and the pattern I keep seeing is the same: they ask the wrong questions, they get polished answers, they sign, and three months later they are looking at an organic traffic report with zero AI citation data in it.

This article is my attempt to fix that. Not because I want you to choose us by default, but because a founder who knows how to evaluate a GEO agency properly will make a better decision. And if our work is as good as we believe it is, we should be able to clear every bar on this list.

So here is the framework. Five evaluation categories. Seven red flags. Ten questions to ask verbatim. Use it on every agency you talk to, including us.

Why the Usual Vendor Vetting Process Fails for GEO

The questions that work for hiring a traditional SEO agency will lead you to the wrong place here.

That is not an opinion. That is a structural problem with how GEO works versus how most people have been trained to evaluate agencies.

Traditional SEO agencies optimize for keyword rankings and organic traffic. They are good at getting pages to position one or two on Google.

Ahrefs research from September 2025 found that the majority of pages ChatGPT cites are not the same pages ranking at the top of Google. High Google rank does not guarantee AI citation, which means a page can sit at the very top of Google search results and still be completely absent from every AI-generated answer in your category.

The signals AI tools use to decide what to cite are different from the signals Google uses to rank pages. They include entity clarity, claim density, source verifiability, and how well structured a page is for machine extraction.

None of those show up in a standard SEO audit. So when you ask an agency “how will you improve my rankings,” you are measuring for the wrong outcome entirely.

The right question is: “how will you get my brand cited in the consensus answer when a buyer asks an AI tool for recommendations in my category?” That shift changes everything about how you evaluate an agency.

Here is a simple comparison that is worth printing out before your next agency call.

| What SEO Agencies Track | What GEO Agencies Should Track |
|---|---|
| Keyword rankings (position 1–10) | Citation rate (% of relevant AI answers mentioning you) |
| Organic traffic volume | Share of voice in AI responses |
| Domain authority score | Entity coverage across AI knowledge bases |
| Backlink count | Third-party corroboration from sources AI trusts |
| Impressions and clicks | AI-attributed sessions and pipeline impact |

If an agency you are evaluating can only fill in the left column, that tells you everything you need to know.

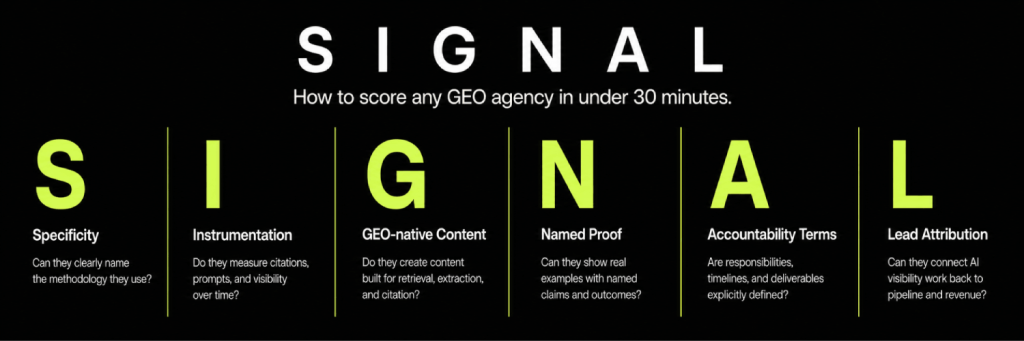

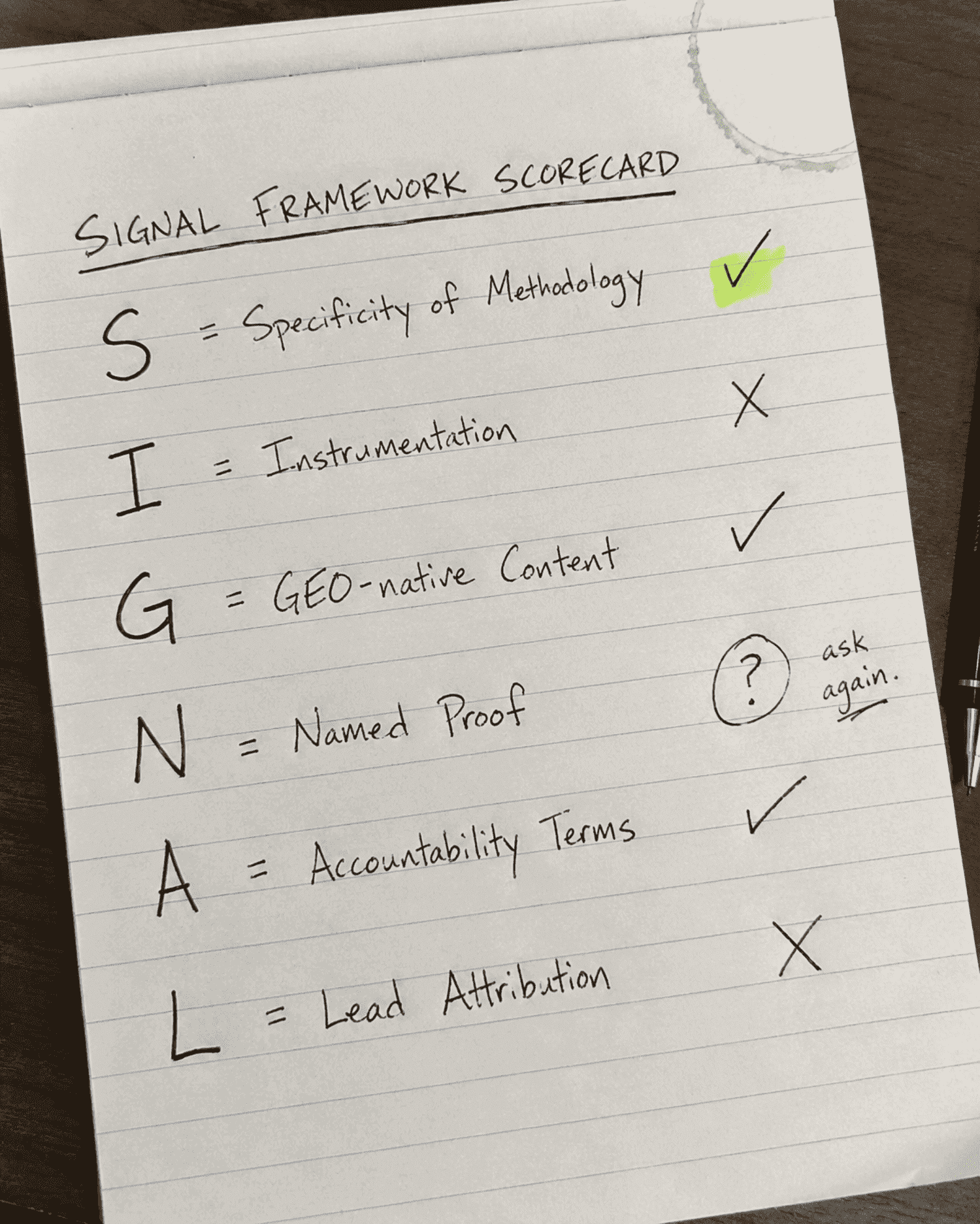

The SIGNAL Framework: How DerivateX Structures GEO Agency Evaluation

Every category in this checklist maps to one or more of six evaluation signals. You can use these to score any agency quickly, even mid-call.

S: Specificity of Methodology (Do they explain how GEO works at a mechanism level, or do they speak in generalities?)

I: Instrumentation (Do they measure citation rate, share of voice, and AI-attributed pipeline? Or do they report traffic?)

G: GEO-native Content Structure (Is their content strategy structurally different from SEO content, or is it the same playbook relabeled?)

N: Named Proof (Do they have a B2B SaaS case study with AI-specific metrics attached to it?)

A: Accountability Terms (Are the contract terms tied to deliverables and milestones, or are they protecting themselves from early churn?)

L: Lead Attribution (Can they show you how AI-sourced sessions connect to demos or pipeline, not just organic traffic?)

An agency that clears all six signals is worth a deeper conversation. An agency that cannot clear the first two has not built a real GEO practice yet.
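If you want to keep score mid-call, the six signals reduce to a simple tally. The sketch below is a minimal illustration, assuming you record each signal as a yes/no judgment. The signal keys, the `score_signals` function, and the "partial" verdict label are hypothetical, and it reads "cannot clear the first two" as failing either Specificity or Instrumentation.

```python
from dataclasses import dataclass

# Hypothetical keys for the six SIGNAL checks, in framework order.
SIGNALS = (
    "specificity_of_methodology",    # S
    "instrumentation",               # I
    "geo_native_content_structure",  # G
    "named_proof",                   # N
    "accountability_terms",          # A
    "lead_attribution",              # L
)


@dataclass
class SignalResult:
    cleared: int
    verdict: str


def score_signals(notes: dict) -> SignalResult:
    """Tally yes/no call notes; a signal missing from `notes` counts as not cleared."""
    passed = [bool(notes.get(name, False)) for name in SIGNALS]
    cleared = sum(passed)
    if not (passed[0] and passed[1]):
        # Assumption: failing either S or I means no real GEO practice yet.
        verdict = "no real GEO practice yet"
    elif cleared == len(SIGNALS):
        verdict = "worth a deeper conversation"
    else:
        # Hypothetical middle label; the framework does not name this case.
        verdict = "partial: probe the signals that failed"
    return SignalResult(cleared, verdict)


# Example: everything cleared except Lead Attribution.
notes = {name: True for name in SIGNALS}
notes["lead_attribution"] = False
print(score_signals(notes))
```

Treating a missing note as "not cleared" is deliberate: if you never got an answer on a signal, the agency has not earned it.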

Category 1: Methodology – Do They Actually Know How GEO Works?

This is the fastest filter. You do not need a long call to run it. One or two questions about how AI tools retrieve and process information will tell you whether the agency has built a real methodology or whether they are improvising with updated buzzwords.

The core mechanism behind most AI search tools today is called Retrieval-Augmented Generation, or RAG. When Perplexity answers a question, or when ChatGPT runs a web search to answer one, the system does not simply generate an answer from training data. It retrieves relevant content from across the web, evaluates it for quality and relevance, and then uses that retrieved content to construct a response.

The pages it selects to quote, paraphrase, or ignore are chosen based on entity structure, claim specificity, and source trustworthiness, not keyword density or backlink count.

An agency that cannot explain RAG and cannot describe how their work directly influences that retrieval process is not a GEO agency. They are an SEO agency that updated their service page.
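To make the retrieve-then-cite distinction concrete, here is a deliberately toy pipeline. It is not how ChatGPT, Perplexity, or any production engine works (real systems use search indexes, embeddings, and model judgment); it assumes simple word-overlap scoring, and the `retrieve` and `select_citations` helpers are hypothetical. What it does show is the shape of the problem: several pages are retrieved, only a few are cited, and in this toy model the citation step rewards pages whose opening sentence answers the query on its own.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase word set; a crude stand-in for real text understanding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def first_sentence(text: str) -> str:
    """Everything up to the first sentence-ending punctuation mark."""
    return re.split(r"(?<=[.!?])\s+", text.strip())[0]


def retrieve(query: str, pages: list, k: int = 5) -> list:
    """Retrieval step: rank pages by word overlap with the query, keep the top k."""
    q = tokenize(query)
    ranked = sorted(pages, key=lambda p: len(q & tokenize(p["text"])), reverse=True)
    return ranked[:k]


def select_citations(query: str, retrieved: list, max_citations: int = 2) -> list:
    """Citation step: cite the retrieved pages whose first sentence alone
    overlaps the query most. Everything else is read and discarded."""
    q = tokenize(query)

    def lead_overlap(page):
        return len(q & tokenize(first_sentence(page["text"])))

    ranked = sorted(retrieved, key=lead_overlap, reverse=True)
    return [p["url"] for p in ranked[:max_citations] if lead_overlap(p) > 0]


pages = [
    {"url": "a", "text": "Acme is a CRM for real estate investors. Pricing starts low."},
    {"url": "b", "text": "Welcome to our blog. Acme CRM helps real estate investors."},
    {"url": "c", "text": "Unrelated cooking recipes."},
]
query = "best CRM for real estate investors"
retrieved = retrieve(query, pages, k=2)
print([p["url"] for p in retrieved], select_citations(query, retrieved))
```

In this toy, page "b" contains most of the buyer's terms but opens with a throwaway sentence, so it is retrieved and then discarded.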

Run these checks in Category 1:

- ☑️ They can explain the difference between keyword ranking and citation rate without pulling up a slide deck

- ☑️ They understand Retrieval-Augmented Generation and can describe how it affects which pages get cited versus ignored

- ☑️ Their content strategy is built around entity clarity and claim density, not just topical clusters and word count

- ☑️ They treat GEO as a distinct discipline from SEO, with different inputs, signals, and success metrics

- ☑️ They can articulate what deliberate GEO looks like versus a brand that shows up in AI answers by accident. This is a meaningful test. Most agencies that do not actually do GEO cannot articulate this difference clearly.

Category 2: Measurement – Do They Track What Actually Matters?

Measurement is where most GEO agencies completely fall apart, and it is also the clearest signal of whether someone is serious about this work or not.

Real GEO work is measurable. Citation rate, share of voice in AI responses, and AI-attributed pipeline outcomes are all trackable. If an agency cannot show you that data, they are not tracking it. And if they are not tracking it, they cannot optimize for it.

According to research from Growth Memo published in February 2026, 44.2% of all LLM citations come from the first 30% of a page’s text. That kind of structural insight only becomes actionable if you are monitoring which pages are being cited and where the citations are landing within those pages. An agency running a real GEO program knows this. An agency guessing does not.
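If you log the passages that AI answers quote, checking your own pages against this kind of finding is straightforward. A minimal sketch, assuming each observation pairs a page's text with a passage quoted verbatim from it (real answers often paraphrase, so exact matching will miss some citations). The function names are hypothetical, and position is measured by the character offset where the cited passage starts.

```python
from typing import Iterable, Optional, Tuple


def relative_position(page_text: str, cited_passage: str) -> Optional[float]:
    """Start offset of the passage as a fraction of page length (0.0 = top).
    Returns None when the passage is not found verbatim."""
    idx = page_text.find(cited_passage)
    if idx == -1 or not page_text:
        return None
    return idx / len(page_text)


def share_in_top(observations: Iterable[Tuple[str, str]], fraction: float = 0.30) -> float:
    """Fraction of located citations that start within the first `fraction` of the page."""
    positions = [relative_position(text, passage) for text, passage in observations]
    located = [p for p in positions if p is not None]
    if not located:
        return 0.0
    return sum(p < fraction for p in located) / len(located)
```

Unmatched passages are dropped rather than counted as misses, so the result describes only the citations you could actually locate.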

Bain & Company reported in 2025 that roughly 60% of searches now end without the user clicking through to any website. That is not a future trend. That is what is happening to your buyers right now.

The question is whether your agency can tell you how many of those zero-click searches still end with your brand cited in the AI-generated answer.

Run these checks in Category 2:

- ☑️ They track citation frequency and share of voice in AI responses, not just organic traffic or keyword position reports.

- ☑️ They can show you a live or recent citation monitoring dashboard for a current or past client.

- ☑️ Their reporting connects AI-sourced traffic to pipeline outcomes, not just sessions and pageviews.

- ☑️ They are monitoring across at least three AI platforms: ChatGPT, Perplexity, and Gemini.

- ☑️ They can explain how they attribute demo requests or signups back to AI discovery specifically, not just lump them into “organic”.
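Citation rate, as defined in the comparison table, is a simple ratio: relevant AI answers that mention you divided by relevant AI answers checked, computed per platform. The sketch below assumes you have already logged one row per (platform, prompt) check with a yes/no mention flag; the data layout and helper names are hypothetical. Share of voice has no single standard definition, so here it is assumed to be your brand's mentions divided by mentions of all tracked brands.

```python
from collections import defaultdict


def citation_rates(checks):
    """checks: iterable of (platform, prompt, brand_mentioned) rows.
    Returns {platform: share of checked answers that mentioned the brand}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt, mentioned in checks:
        totals[platform] += 1
        hits[platform] += int(bool(mentioned))
    return {platform: hits[platform] / totals[platform] for platform in totals}


def share_of_voice(mentions_by_brand: dict, brand: str) -> float:
    """Assumed definition: this brand's mentions / mentions of all tracked brands."""
    total = sum(mentions_by_brand.values())
    return mentions_by_brand.get(brand, 0) / total if total else 0.0


# Hypothetical log: two buyer prompts checked on three platforms.
checks = [
    ("ChatGPT", "prompt 1", True),
    ("ChatGPT", "prompt 2", False),
    ("Perplexity", "prompt 1", True),
    ("Perplexity", "prompt 2", True),
    ("Gemini", "prompt 1", False),
    ("Gemini", "prompt 2", False),
]
print(citation_rates(checks))
```

Keeping rates per platform, rather than one blended number, is what lets you see that a brand can be well cited on one assistant and absent on another.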

For context on what real GEO measurement looks like in practice: DerivateX reports on a metric called the AI Visibility Score (AVS), a 0 to 100 figure calculated by running 20 buyer prompts across ChatGPT, Perplexity, Claude, and Gemini every Monday and scoring how prominently the brand appears in each response. An AVS below 8 means the brand is essentially invisible. A score between 25 and 50 means the brand is appearing on AI shortlists. Above 70 is category authority. If the agency you are evaluating cannot describe their measurement methodology at this level of specificity (defined prompts, a defined scoring rubric, a defined cadence), they are not tracking it. They are estimating.
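To show what a defined scoring rubric can look like, here is a minimal sketch of a 0 to 100 prominence score. The four prominence grades and their point values are hypothetical and are not DerivateX's actual rubric; only the 0 to 100 scale, the 20 prompts across four platforms, and the band thresholds come from the description above. Scores that fall between the described bands are left unlabeled rather than guessed.

```python
# Hypothetical prominence grades for a single AI response (not a published rubric).
POINTS = {"absent": 0, "mentioned": 1, "recommended": 2, "top_pick": 3}


def visibility_score(grades: list) -> float:
    """Points earned as a percentage of the maximum possible across all responses."""
    if not grades:
        return 0.0
    earned = sum(POINTS[g] for g in grades)
    return 100 * earned / (max(POINTS.values()) * len(grades))


def describe(score: float) -> str:
    # Bands from the description above; unnamed ranges (8 to 25, 50 to 70) stay unlabeled.
    if score < 8:
        return "essentially invisible"
    if 25 <= score <= 50:
        return "appearing on AI shortlists"
    if score > 70:
        return "category authority"
    return "between described bands"


# 20 prompts x 4 platforms = 80 graded responses for one weekly run.
week = ["top_pick"] * 40 + ["absent"] * 40
score = visibility_score(week)
print(score, describe(score))
```

Whatever the real grades and weights are, the point is that each piece (prompt set, grade definitions, cadence) is fixed in advance, so week-over-week movement means something.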

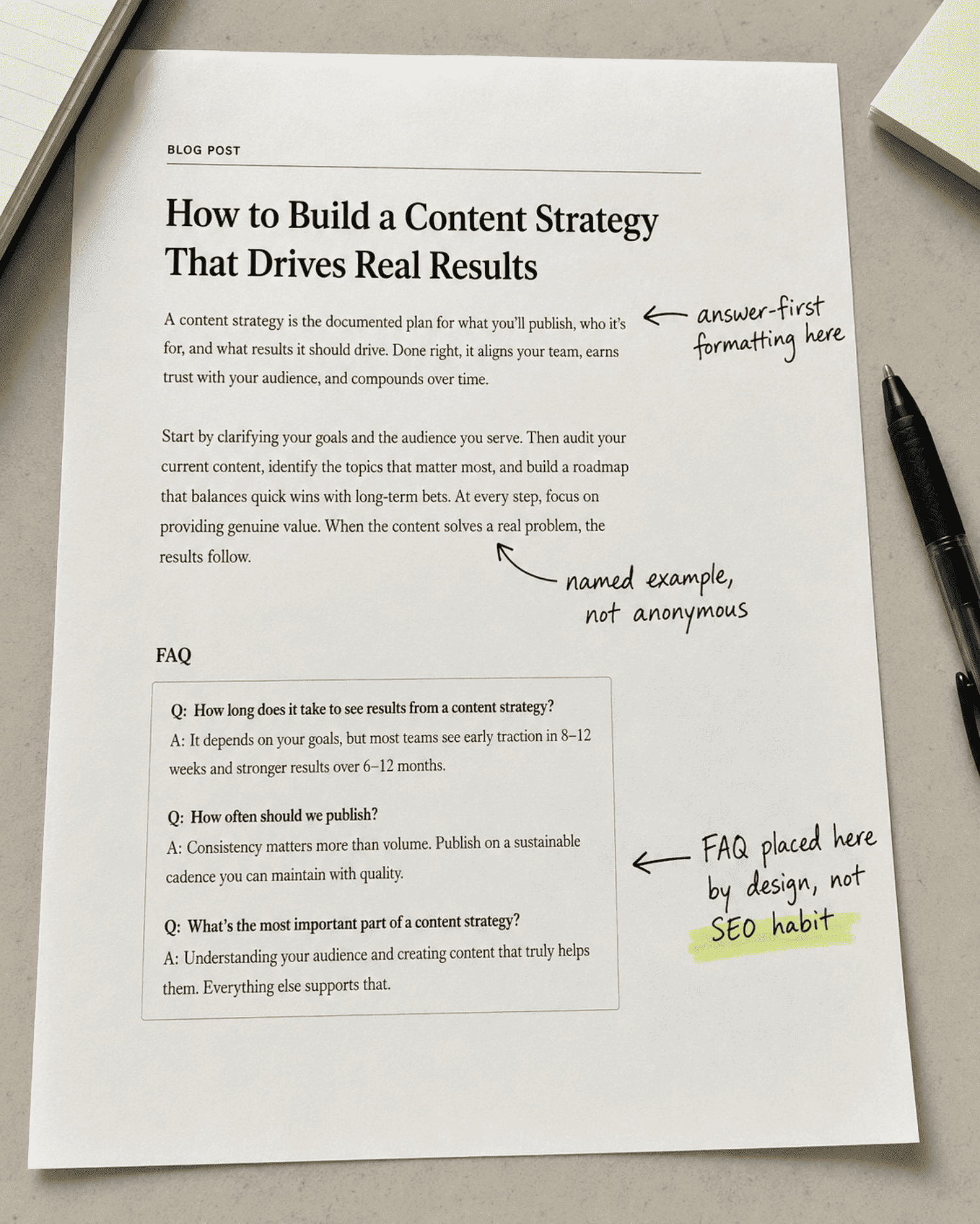

Category 3: Content Strategy – Is It Built for AI Extraction?

A GEO content strategy should look structurally different from a traditional SEO content strategy. At DerivateX, we call the methodology that produces that structure Citation Engineering, a five-lever framework built around Entity Clarity, Authoritative Coverage, Third-Party Corroboration, Result Documentation, and Structured Parsability.

Each lever targets a different signal LLMs use when deciding whose brand to cite. Ask any GEO agency you evaluate whether they can describe their content strategy in terms of those levers, or an equivalent structured methodology. An agency working from a methodology they can name and explain at the lever level is fundamentally different from one working from intuition.

The most common mistake I see from agencies claiming to do GEO is publishing longer versions of the same blog posts they were already writing.

More words, same structure, same keyword targeting. That is not GEO. AI models do not reward length. They reward specificity, source clarity, entity consistency, and structural extractability.

A GEO-optimized piece of content answers the question directly in the first two sentences of every section. It uses named examples instead of anonymous ones. It includes FAQ sections designed around the exact natural-language questions buyers are asking AI tools. It is modular, so an AI model can lift a paragraph and cite it without needing the surrounding text for context.

Run these checks in Category 3:

- ☑️ Their content briefs include answer-first formatting instructions, meaning the direct answer appears within two sentences of each section header.

- ☑️ FAQ sections are a standard deliverable in every content piece, not an optional add-on.

- ☑️ Their content strategy includes off-site citation building, not just on-site publishing.

- ☑️ They optimize for entity clarity, meaning the brand name, product category, and use case are consistently associated across every asset they produce.

- ☑️ They can show you the structural difference between a page written for SEO and a page written for GEO, side-by-side, with an explanation of why each decision was made.

Ask us to show you a side-by-side Citation Engineering brief comparison on your call.

Category 4: Track Record – Have They Actually Done This for B2B SaaS?

Case studies are where most GEO agencies reveal their hand. Because GEO is a relatively new discipline, some agencies cite general SEO wins and label them GEO results.

Traffic lift is not a GEO result. A GEO result shows citation rate improvement, AI share of voice movement, and ideally a connection between that improved AI presence and pipeline outcomes.

For a B2B SaaS company evaluating a generative engine optimization agency, the case study also needs to come from a comparable context. B2B SaaS buying cycles, buyer personas, and AI search behavior are different from e-commerce or local business contexts. An agency that has only worked in one of those other categories is learning on your budget.

Digital Commerce 360 reported in 2025 that 48% of B2B buyers now use AI tools to find and evaluate vendors. That is not a small cohort. Nearly half of your potential buyers are forming opinions about your category and your competitors through AI-generated answers before they ever land on your website.

An agency that can show you how they moved a B2B SaaS brand from invisible to cited in those answers is the one worth talking to.

REsimpli, a CRM for real estate investors, went from being absent in AI answers to being the number one cited CRM for real estate investors across 10+ high-intent prompts in ChatGPT within 90 days of engaging DerivateX.

“Within 90 days, they helped us go from being absent in AI answers to being the #1 cited CRM for real estate investors across 10+ high intent prompts in the U.S.” – Ehsan Rishat (Head of Marketing, REsimpli)

Verito, a cloud hosting provider for accounting firms, saw +159% organic clicks alongside 12 number one ChatGPT rankings, a result that shows what happens when Google SEO and GEO run from the same infrastructure.

These are the kinds of before-and-after metrics worth demanding from any agency you consider.

Run these checks in Category 4:

- ☑️ They have at least one case study from a B2B SaaS company, not just a general business or e-commerce brand.

- ☑️ Their case study shows citation rate or AI share of voice improvement, not just organic traffic lift.

- ☑️ They can name the specific AI platforms where their client now appears and roughly how often.

- ☑️ Their case study connects the GEO work to a pipeline or revenue metric, whether that is demo requests, signups, or inbound leads.

- ☑️ They can walk you through the before-and-after: where the brand stood in AI answers when they started, and where it stands now.

Category 5: Commercial Terms – Are They Working for You?

The commercial structure of an engagement tells you a lot about how confident an agency actually is in their own work.

Agencies that require 12-month lock-ins before delivering any meaningful results are often protecting themselves from early churn, which suggests they expect early results to be uncertain. A GEO agency that believes in their methodology should be willing to back it with reasonable terms.

This does not mean every engagement should be month-to-month from day one. GEO work takes time to compound. The key is whether milestones, deliverables, and reporting cadence are clearly scoped from the start.

An agency that is vague about what you are getting and when is an agency you will be frustrated with in 90 days.

Run these checks in Category 5:

- ☑️ Month-to-month terms are available, or the lock-in period is tied to specific deliverables and milestones rather than calendar time.

- ☑️ The reporting cadence is clearly defined upfront and includes AI citation metrics, not just traffic and ranking reports.

- ☑️ Their pricing is scoped to your category and query volume rather than a one-size-fits-all package.

- ☑️ They run a citation baseline audit before month one starts, so you both know where things stand before any work begins.

- ☑️ There is a named point of contact on your account, not a rotating team structure with no continuity.

What Pricing Should You Expect from a GEO Agency?

A real GEO program for a B2B SaaS company with product-market fit should be priced according to your category and competitive gap, not quoted from a generic rate card.

DerivateX runs structured engagements starting at $5,500 per month, with a 90-day sprint followed by month-to-month terms.

The sprint structure means you see a baseline audit, content, and first citation placements before any open-ended commitment kicks in. Any agency requiring a 12-month contract before delivering a single measurable output is either protecting themselves from early churn or has no deliverable structure worth protecting.

7 GEO Agency Red Flags You Should Not Ignore

Most of the red flags that matter in this process are not obvious in a sales call. Agencies know what to say. What they cannot easily fake is a coherent methodology when you push on the specifics. These are the signals worth walking away from.

1. They lead with keyword rankings

If the first thing on their results slide is “we will get you to page one,” they have not internalized what GEO requires. Keyword position is a secondary signal in AI search. Citation rate and entity coverage are the primary ones.

2. They cannot explain RAG

Retrieval-Augmented Generation is the mechanism behind how most AI tools retrieve content before generating an answer. An agency that cannot explain it, or explains it incorrectly, is not working at the level GEO requires.

3. Their case study is a traffic report

Citation share, AI mention rate, and AI-attributed pipeline are the GEO metrics that matter. A slide showing organic traffic growth is not a GEO case study. It is an SEO case study with a new label.

4. They pitch a “GEO content calendar”

Publishing more content is not a GEO strategy. If the core of their proposal is a content schedule with updated briefs, what they are selling is a content marketing retainer. That is a different product.

5. They cannot tell you which AI platforms their clients appear on

This should be operational knowledge, not a vague claim. If they cannot name the platforms and roughly describe citation frequency, they are not monitoring it.

6. They use GEO, SEO, and AEO interchangeably throughout the call

These disciplines overlap, but treating them as identical reveals a lack of methodological clarity. An agency should be able to explain where SEO, GEO, and AEO (answer engine optimization) differ and why that matters for your specific situation.

7. There is no onboarding baseline audit

A serious GEO agency should know where your brand stands in AI answers before they start work. Discovering that six months in is not a plan. It is a delay.

If you want to know exactly where your brand stands right now before evaluating any agency, a free AI Visibility Audit will show you your current citation rate across ChatGPT, Perplexity, and Gemini, and it is the most useful piece of information you can bring into any agency conversation.

10 Questions to Ask Every GEO Agency Before You Sign

A checklist gets you to a shortlist. These ten questions get you to the right decision. Ask them in this order. Pay attention to whether the agency answers the question you asked, or pivots to something they are more comfortable talking about. That pivot is information.

1. How do you define citation rate, and how do you track it across ChatGPT, Perplexity, and Gemini?

What a good answer sounds like: A clear definition of citation rate as a percentage of relevant AI queries where the brand is mentioned, with a named tool or proprietary dashboard used to monitor it across multiple platforms.

Red flag to listen for: Any response that substitutes “visibility,” “impressions,” or “AI traffic” for citation rate without addressing the measurement question directly.

2. Can you show me a citation monitoring report from a current or recent client?

What a good answer sounds like: A willingness to share an anonymized or live example, with specific data points and a clear explanation of what the numbers mean.

Red flag to listen for: A redirect to a case study PDF or a claim that client data is confidential. One redaction is fair. Zero examples is a problem.

3. What does your content strategy look like specifically for GEO? Not for SEO.

What a good answer sounds like: A description of answer-first formatting, entity consistency across assets, FAQ structure built around natural-language AI queries, and off-site citation placement in high-trust sources.

Red flag to listen for: An answer centered on keyword research, topical clusters, and word count targets with no structural differentiation.

4. How do you distinguish between a brand appearing in AI answers accidentally versus through deliberate optimization?

What a good answer sounds like: A framework that describes accidental GEO (inconsistent mentions, inaccurate product descriptions, wrong use cases) versus deliberate GEO (engineered citation footprint, controlled entity associations, traceable attribution). An agency that cannot articulate this difference has likely not built that distinction into their work.

Red flag to listen for: Blank pause followed by “good question” and a pivot to case studies.

5. What does your onboarding look like, and do you run a citation baseline audit before month one?

What a good answer sounds like: A structured onboarding with a defined baseline audit that maps current citation rate, entity accuracy in AI responses, and key citation gaps before any work begins.

Red flag to listen for: An onboarding that jumps straight to content production or technical fixes without establishing where the brand currently stands.

6. How do you attribute AI-sourced traffic back to pipeline outcomes?

What a good answer sounds like: A description of how they isolate AI-referred sessions in analytics (typically via UTM parameters or referral source filtering), and how they connect that traffic to demo requests, signups, or other pipeline events.

Red flag to listen for: “We track organic traffic holistically.” That is not attribution. That is a merged report.
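Referral-source filtering, one of the two approaches mentioned in the good answer above, can be sketched in a few lines. This assumes you can export each session's landing URL, referrer, and whether it led to a demo request. The hostname list is an assumption to verify against your own analytics (AI assistants do not always pass a referrer, so some AI-driven visits will still show up as direct traffic), and the sketch also assumes some assistants append a `utm_source` value such as `chatgpt.com` to outbound links. The function names and session fields are hypothetical.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Assumed mapping of AI assistant hostnames to platforms; check it against real referral data.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def ai_platform(landing_url: str, referrer: str) -> Optional[str]:
    """Return the AI platform behind a session, or None if it does not look AI-referred."""
    utm = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0].lower()
    host = (urlparse(referrer).hostname or "") if referrer else ""
    if host.startswith("www."):
        host = host[len("www."):]
    # Check the UTM tag first, then fall back to the referrer hostname.
    for candidate in (utm, host):
        if candidate in AI_SOURCES:
            return AI_SOURCES[candidate]
    return None


def demo_rate_by_channel(sessions: list) -> dict:
    """sessions: dicts with 'landing_url', 'referrer', 'booked_demo' keys.
    Returns {channel: demo requests / sessions}, with non-AI traffic under 'other'."""
    seen, demos = {}, {}
    for s in sessions:
        channel = ai_platform(s["landing_url"], s["referrer"]) or "other"
        seen[channel] = seen.get(channel, 0) + 1
        demos[channel] = demos.get(channel, 0) + int(s["booked_demo"])
    return {ch: demos[ch] / seen[ch] for ch in seen}
```

The point of the "other" bucket is exactly the distinction the red flag describes: AI-referred sessions are reported next to, not merged into, the rest of your traffic.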

7. Do you have a B2B SaaS case study that shows AI-attributed leads or revenue, not just traffic?

What a good answer sounds like: A specific example with named metrics: citation rate before and after, AI-attributed sessions, and at minimum one downstream business outcome connected to AI discovery.

Red flag to listen for: A case study that shows content output volume or organic traffic without any AI-specific measurement.

8. How does your approach change depending on which AI platform you are targeting?

What a good answer sounds like: A nuanced answer that acknowledges ChatGPT, Perplexity, and Gemini retrieve content differently, weight different source types, and update their citation patterns at different cadences. Their strategy should account for those differences.

Red flag to listen for: “We optimize for AI search generally.” That is a non-answer.

9. What should we expect in month one versus month three?

What a good answer sounds like: A realistic timeline with specific early milestones (baseline audit, entity gap analysis, first content and off-site placements) and a description of when citation movement typically becomes measurable. No good agency promises top citations in 30 days.

Red flag to listen for: Either vague promises about long-term compounding with no near-term milestones, or aggressive early-result claims that do not hold up to scrutiny.

10. What would disqualify us as a client, and what inputs do you need from our side to make this work?

What a good answer sounds like: Honest criteria, whether that is an ARR threshold, proof asset availability, or team responsiveness, and a clear list of what they need from you. An agency that says “we can work with anyone” has no quality filter and likely no defined methodology either.

Red flag to listen for: No criteria at all. Good agencies have standards for the clients they take on.

How to Apply This Checklist (Practical Summary)

You now have five categories, seven red flags, and ten questions. Here is how to run the process without overcomplicating it.

First, save or print the five category checklists before any agency call. You do not need to read them out loud. Just have them open and check boxes as the conversation moves.

Second, run through the ten questions in order. It is fine to adapt the phrasing to fit the conversation, but do not skip any of them. The ones that feel awkward to ask are often the most revealing.

Third, score each agency roughly: how many of the five categories did they clear? How many of the ten questions did they answer with specificity rather than deflection? An agency that clears all five categories and answers at least eight of the ten questions with clear, specific responses is worth a deeper conversation.

Fourth, use the baseline audit as your decision point. Any serious GEO agency should offer to show you where your brand currently stands before you commit to anything. If the agency you are most interested in has a free audit offer, take it. That process tells you more about their methodology than any sales deck will.
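If you are comparing several agencies, the scoring rule in the third step is easy to keep in a small script. A minimal sketch: the `AgencyScore` record and agency names are hypothetical, the "all five categories and at least eight of ten answers" rule comes from the third step, and ranking by categories cleared first and specific answers second is an assumption.

```python
from typing import NamedTuple


class AgencyScore(NamedTuple):
    name: str
    categories_cleared: int  # out of 5 checklist categories
    specific_answers: int    # out of 10 questions answered with specificity


def worth_deeper_conversation(s: AgencyScore) -> bool:
    """All five categories cleared and at least eight of ten specific answers."""
    if not (0 <= s.categories_cleared <= 5 and 0 <= s.specific_answers <= 10):
        raise ValueError(f"score out of range for {s.name}")
    return s.categories_cleared == 5 and s.specific_answers >= 8


def rank(scores: list) -> list:
    """Order agencies by categories cleared, then by specific answers."""
    return sorted(scores, key=lambda s: (s.categories_cleared, s.specific_answers), reverse=True)


agencies = [
    AgencyScore("Agency A", 4, 10),
    AgencyScore("Agency B", 5, 7),
    AgencyScore("Agency C", 5, 9),
]
for s in rank(agencies):
    print(s.name, worth_deeper_conversation(s))
```

Ranking categories ahead of answers reflects the checklist's order of operations: the categories get you to a shortlist, and the questions decide within it.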

How DerivateX Scores on This Checklist

Since this checklist exists for you to use on us too, here is exactly where we stand.

Methodology (Category 1): We can explain RAG without slides and describe the specific content structure decisions that influence retrieval. Our onboarding documentation distinguishes between deliberate and accidental GEO at the entity level.

Measurement (Category 2): We track citation frequency across ChatGPT, Perplexity, Gemini, and Claude for every active client. That data is in your monthly report, not buried in a shared folder. We can show you an anonymized example on your first call.

Content Strategy (Category 3): Every brief we produce includes answer-first formatting instructions, FAQ blocks built around natural-language AI queries, and off-site citation placement targets. We can show you the structural difference between a DerivateX GEO brief and a standard SEO brief side by side.

Track Record (Category 4): Gumlet, one of our active clients, now attributes close to 20% of their inbound revenue to AI discovery. The case study shows citation position movement, product description accuracy improvements, and a direct path from AI citation to demo request. It is published and linkable.

“Today, close to 20% of our inbound revenue can be traced back to users who discovered Gumlet through AI.”

– Divyesh Patel (Co-Founder & CMO, Gumlet)

Commercial Terms (Category 5): We run a citation baseline audit before month one. Reporting cadence is defined upfront and includes AI citation metrics. Our pricing is scoped to your category and query volume.

If you ask us these ten questions on a call and we deflect any of them, walk away. That is not us being confident. That is us holding ourselves to the same standard we built this checklist to enforce.

Frequently Asked Questions

1. How do I know if a GEO agency actually knows what they are doing?

Ask them to explain Retrieval-Augmented Generation and show you a citation monitoring report for a current client. A legitimate generative engine optimization agency can do both without hesitation. If either request causes a pause or a pivot, that is your answer. Genuine GEO expertise shows up in the specificity of the methodology, not the polish of the proposal.

2. What is the actual difference between a GEO agency and an SEO agency?

An SEO agency optimizes for keyword rankings and organic traffic from search engines like Google. A GEO agency optimizes for citation rate and share of voice in AI-generated answers from tools like ChatGPT, Perplexity, and Gemini. The signals, the content structure, the measurement, and the distribution strategy are all different. Many agencies claim to do both, but the ones that genuinely do GEO have a distinct methodology for it, not just updated terminology applied to the same deliverables.

3. What should a GEO agency be able to show me before I hire them?

At minimum: a citation monitoring dashboard from a current or past client, a B2B SaaS case study with AI-specific metrics (not just traffic), and a clear explanation of how their content strategy differs structurally from standard SEO content production. If they can also show you a before-and-after on citation rate for a client in a comparable category, that is the strongest possible signal.

4. How long does it take to see results from a GEO agency?

Meaningful citation movement typically becomes visible within 60 to 90 days when the work is correctly sequenced: baseline audit in week one, entity gap remediation and first content assets in weeks two through six, off-site citation placement in weeks four through eight. The compounding effect builds from there. Agencies promising top citations in 30 days are either working in very low-competition categories or overstating what is achievable.

5. What is the biggest mistake B2B SaaS companies make when hiring a GEO agency?

Evaluating them on the same criteria they use for SEO agencies. When founders ask about domain authority, monthly content volume, and keyword rankings, they are measuring for the wrong outcomes, and agencies that score well on those metrics are not necessarily the ones who will move the needle in AI search. The evaluation criteria in this checklist exist specifically to prevent that mismatch.

6. What is a good citation rate benchmark for a B2B SaaS company?

For most B2B SaaS categories, a citation rate below 10% means the brand is essentially invisible in AI search. A rate between 10% and 25% means you are showing up inconsistently. Anything above 25% across ChatGPT, Perplexity, and Gemini at six months indicates a well-functioning GEO program. Top performers in categories with clear product definitions and strong third-party validation can reach 40% to 60% citation rates by month twelve. The baseline audit DerivateX runs at the start of every engagement will show you exactly where you sit before any work begins.
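The benchmark bands above translate directly into a lookup. A minimal sketch, assuming the rate is expressed as a fraction between 0 and 1 and treating exactly 25% as the top of the "inconsistent" band. The function name is hypothetical, and because the 40% to 60% figure describes where top performers can be by month twelve rather than a band, it is not labeled separately.

```python
def citation_rate_band(rate: float) -> str:
    """Map a citation rate (0.0 to 1.0) to the benchmark bands described above."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be a fraction between 0 and 1")
    if rate < 0.10:
        return "essentially invisible"
    if rate <= 0.25:
        return "showing up inconsistently"
    return "well-functioning GEO program"


for r in (0.05, 0.18, 0.32):
    print(f"{r:.0%}: {citation_rate_band(r)}")
```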

You have the framework now. Run it. Apply it to every agency you are considering, including us. If DerivateX does not clear every category on this list when you ask us these questions directly, we will be the first to say so.

If you want a starting point before any of those calls, book a free AI Visibility Audit. It takes about 20 minutes, shows you exactly where your brand stands across ChatGPT, Perplexity, and Gemini right now, and gives you a citation gap map before you spend a single dollar on agency fees.