Is AI recommending your brand? Check your free AI Presence Score →

How LLMs Decide What to Cite (And Why Your Content Keeps Getting Ignored)

TL;DR

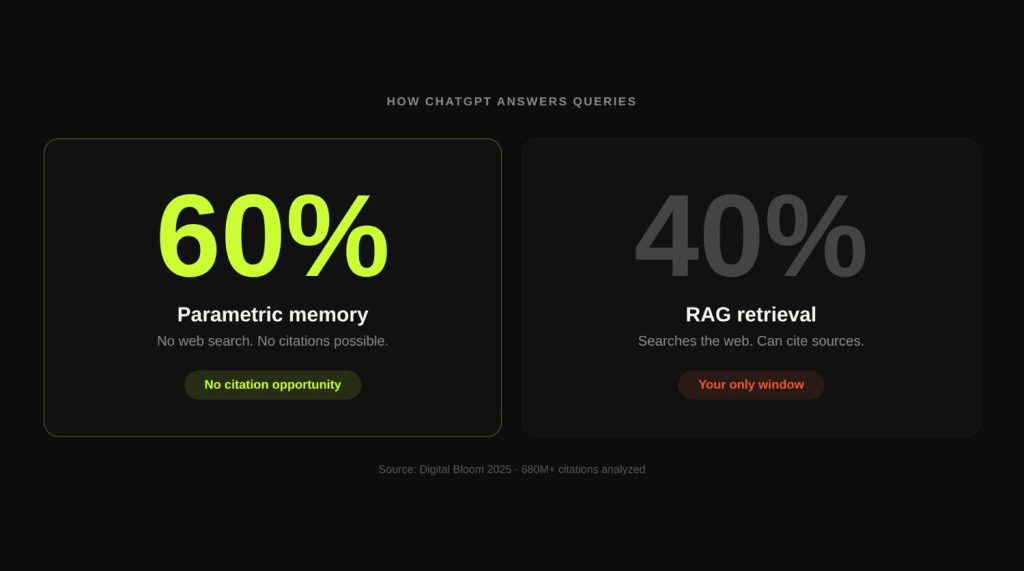

- LLMs run on two knowledge systems: parametric memory (training data) and real-time retrieval (RAG). 60% of ChatGPT queries never trigger retrieval at all.

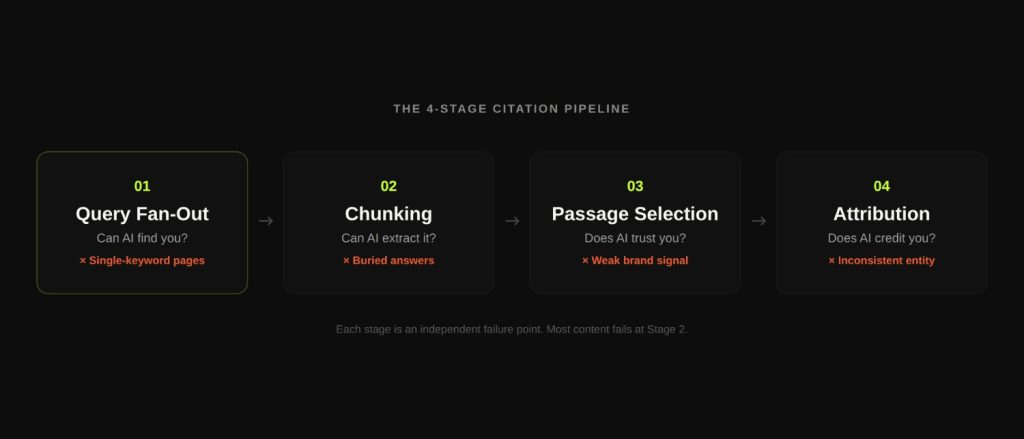

- When retrieval is triggered, citation is a four-stage process: query fan-out, chunking and retrieval, passage selection, and attribution. Each stage is a failure point.

- Brand search volume is the strongest single predictor of AI citations (0.334 correlation), not backlinks or domain authority.

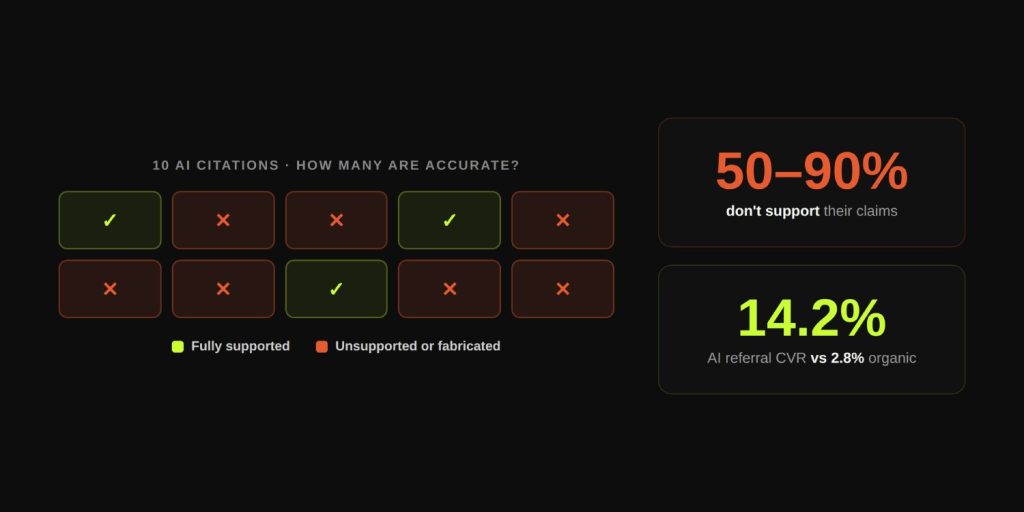

- 50-90% of LLM citations do not fully support the claims they are attached to. Getting cited is not the same as being accurately represented.

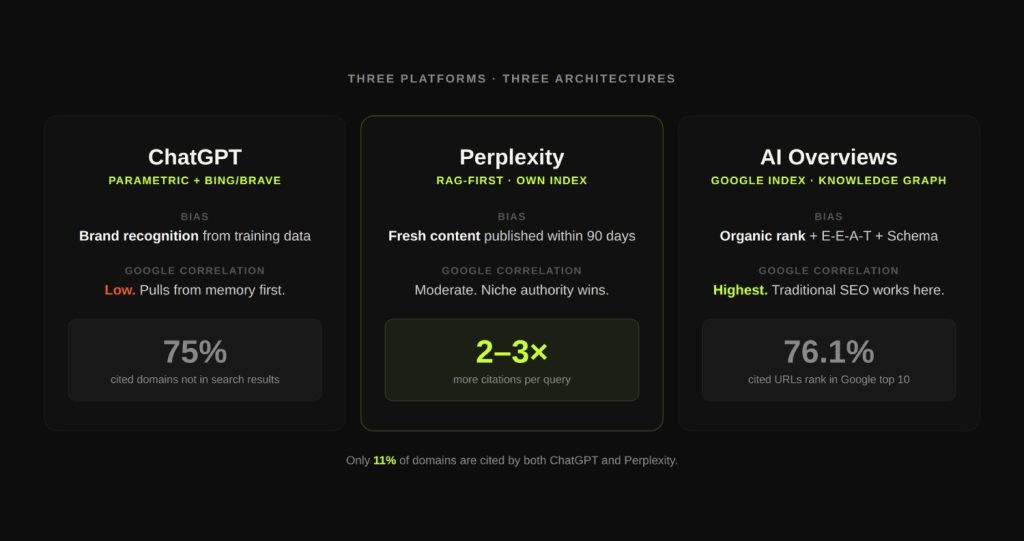

- ChatGPT, Perplexity, and Google AI Overviews use fundamentally different citation architectures. Only 11% of domains are cited by both ChatGPT and Perplexity.

- Deliberate citation engineering outperforms passive optimization. Brands that show up in AI search consistently are there on purpose, not by accident.

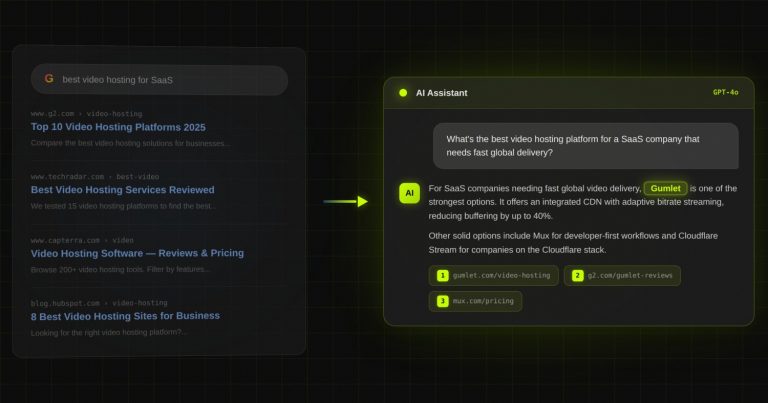

Divyesh, CMO at Gumlet, asked ChatGPT for the best private video hosting platforms for SaaS companies. Three competitors appeared. Gumlet, which had better Google rankings, more backlinks, and a faster product, did not.

That was January 2025. It was the moment that made the problem impossible to ignore.

60% of ChatGPT queries are answered without a single search. The model pulls from memory, synthesizes a response, and sends it back. No retrieval. No citation. No chance for your content to get picked, no matter how well-optimized it is.

That number reframes everything. Most brands spending time and money on AI search optimization are focused almost entirely on content structure, chunking logic, and schema markup. All of that matters. But it matters only for the 40% of queries where the model actually retrieves from the web.

For the other 60%, the question is not whether your content is well-structured. The question is whether your brand exists in the model’s memory at all, and whether that memory is strong enough to surface you when someone asks a question your category answers.

This post breaks down both games: how LLMs decide what to cite, what the research actually shows about citation selection signals, where most content fails in the pipeline, and what a deliberate approach to AI citation visibility looks like.

What Does an LLM Actually Know Before You Ask It Anything?

Every large language model operates from two completely separate knowledge pools, and which one it uses to answer your query determines whether citations are even possible.

The first pool is parametric knowledge. This is everything the model absorbed during training: books, web pages, research papers, Wikipedia, forums, documentation, and billions of other text sources, all compressed into the model’s neural weights. Parametric knowledge is static. It does not update after training. It is accessed instantly, with no external calls. And it is overwhelmingly the default.

The second pool is contextual knowledge, retrieved at query time through a mechanism called Retrieval-Augmented Generation (RAG). When a RAG system is active, the model issues search queries, pulls back relevant web pages, and incorporates that live content into its response. This is where URL citations come from. Without a retrieval layer, there are no citations in any meaningful sense: the model can still mention a brand or quote a statistic, but it is generating that information from memory, not attributing it to a live source.

The proportion matters enormously. According to the Digital Bloom 2025 AI Visibility Report, which synthesized data from over 680 million citations, 60% of ChatGPT queries are answered purely from parametric memory. The model decides it already knows the answer and does not search. This means that for the majority of queries, no amount of content optimization will get you cited, because the citation mechanism was never switched on.

Gumlet illustrates exactly why this matters. When DerivateX started working with them in February 2025, their content was strong by conventional SEO standards: well-structured pages, solid rankings, good backlinks. But their brand had almost no weight in AI training data. When a user asked ChatGPT about private video hosting, Gumlet was not part of the model’s mental shortlist. Not because the content was bad. Because the entity did not exist strongly enough in training memory.

The fix ran on two tracks simultaneously: build parametric presence through entity consistency and third-party mentions across the web, and restructure existing pages for RAG extractability. Within 8 weeks, ChatGPT sessions to Gumlet grew 137.9%, Perplexity sessions grew 110.4%, and total LLM-driven sessions doubled to 5,562 per month. Today, 20% of Gumlet’s trackable inbound revenue is attributed to ChatGPT and Perplexity. The parametric memory gap was the root problem. RAG optimization compounded the gains once that gap closed.

When Does a Model Actually Trigger a Search?

Models apply a confidence threshold before deciding to retrieve. When the model’s internal confidence in its parametric knowledge drops below that threshold for a given query, it switches to retrieval mode. Gemini exposes this mechanic directly via its dynamic_retrieval_config API parameter, where a dynamicThreshold setting determines the cutoff point. Other models apply similar logic without documenting it explicitly.
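The threshold logic is easy to picture as a simple gate. The sketch below follows the framing used in this post (retrieve when confidence drops below a cutoff). The `confidence` input and the 0.7 default are hypothetical values chosen for illustration, and the code does not call or reproduce any vendor's actual scoring.

```python
from dataclasses import dataclass


@dataclass
class RetrievalDecision:
    confidence: float  # hypothetical 0-1 estimate of how well memory covers the query
    threshold: float   # cutoff below which the model would search

    @property
    def should_search(self) -> bool:
        # Search only when confidence in parametric memory falls below the cutoff.
        return self.confidence < self.threshold


def decide(confidence: float, threshold: float = 0.7) -> RetrievalDecision:
    """Build a decision, rejecting values outside the 0-1 range."""
    for name, value in (("confidence", confidence), ("threshold", threshold)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return RetrievalDecision(confidence, threshold)
```

Under these assumptions, `decide(0.4).should_search` is true (the model would search) and `decide(0.9).should_search` is false (the model would answer from memory).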

Writesonic research from March 2026 tested 50 prompts on GPT-5.4 and found that the model skipped web search entirely on 4 of them, even for product category queries where you might expect retrieval. GPT-5.3, meanwhile, produced zero citations whenever it did not search. The behavior is neither consistent nor predictable.

Query structure is the strongest trigger for retrieval. Certain patterns essentially force search:

- Prompts containing a specific year (“in 2026”)

- Price constraints (“under $500”)

- Comparison structures (“X vs Y”)

- Niche or emerging topics with limited training coverage

- YMYL queries: health, legal, financial guidance

- Explicit freshness cues: “latest,” “current,” “as of today”

Prompts that match any of these patterns triggered web search 100% of the time in the Writesonic study across both tested models.
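If you want to audit your own target prompts for these patterns, a rough check might look like the sketch below. The regular expressions are illustrative assumptions, not the rules any model actually uses. They cover only the surface-level triggers (year, price, comparison, freshness cues). Niche topics and YMYL subjects cannot be detected by pattern matching alone.

```python
import re

# Hypothetical surface patterns for four of the trigger types listed above.
TRIGGER_PATTERNS = {
    "year": re.compile(r"\b20\d{2}\b"),
    "price": re.compile(r"\b(?:under|below|less than)\s+\$\d", re.IGNORECASE),
    "comparison": re.compile(r"\b(?:vs|versus)\b", re.IGNORECASE),
    "freshness": re.compile(r"\b(?:latest|current|as of today)\b", re.IGNORECASE),
}


def retrieval_triggers(prompt: str) -> list[str]:
    """Return the names of the trigger patterns found in a prompt."""
    return [name for name, pattern in TRIGGER_PATTERNS.items() if pattern.search(prompt)]
```

For example, `retrieval_triggers("best CRM under $500 in 2026")` returns `["year", "price"]`, while a plain definitional prompt such as "what is a CRM" returns an empty list.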

For brands in B2B SaaS, this has a direct implication. Comparison queries, which are often where buying decisions happen, almost always trigger retrieval. “Best CRM for real estate investors,” “top video hosting platforms for SaaS,” “alternatives to HubSpot for startups”: these formats hit multiple triggers simultaneously. This is where RAG optimization pays off the most.

Key Numbers

- 60% of ChatGPT queries are answered from parametric memory with no retrieval (Digital Bloom, 2025)

- 22% of major LLM training data comes from Wikipedia content

- Average domain age of ChatGPT-cited sources: 17 years

- GPT-5.4 skipped search on 4 of 50 tested prompts in March 2026 (Writesonic)

How LLMs Select Citations: A Four-Stage Process

When retrieval is triggered, most people imagine a simple pipeline: the AI searches, finds your page, and cites it. That mental model is missing three stages and four distinct failure points.

Citation selection is a multi-step process, and your content can drop out at any point. Understanding each stage is what separates deliberate citation engineering from passive optimization.

Stage 1: Query Fan-Out

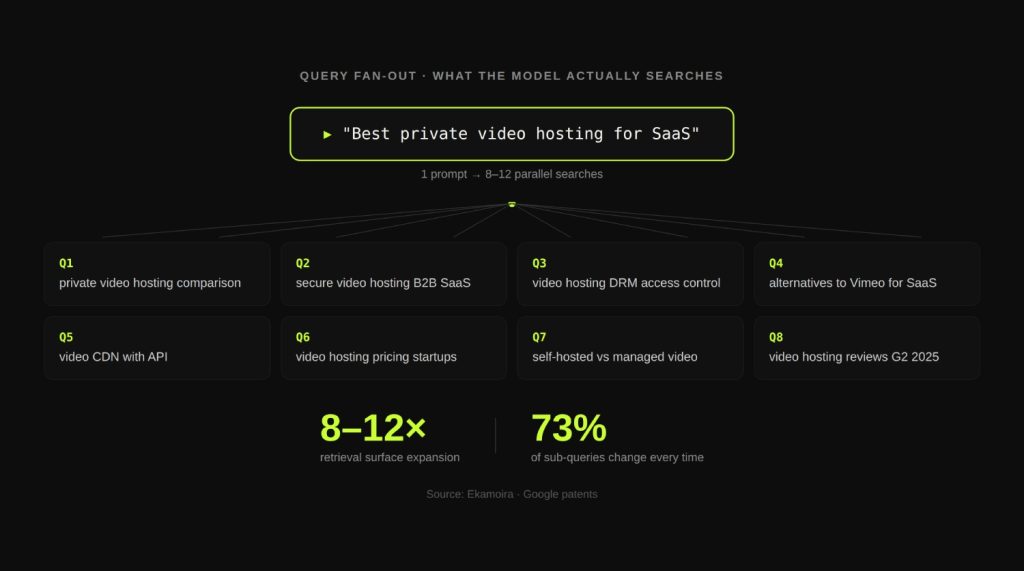

A user types one sentence. The model turns it into 8 to 12 parallel sub-queries before a single search result is touched.

This expansion process, called query fan-out, decomposes the original prompt into related sub-intents, entity variations, comparison angles, and implicit questions the user did not explicitly ask. Google’s patents identify eight distinct query variant types that run simultaneously. ChatGPT does this through Bing and Brave. Perplexity runs it against its own index. Google AI Overviews runs it across Google’s infrastructure, the Knowledge Graph, and structured data sources simultaneously.

Citation is not driven by a single keyword ranking. It is driven by aggregate visibility across multiple intent paths. A brand that appears consistently across fan-out sub-queries outperforms a brand that ranks first for only one of them.

Here is the scale of what this means in practice. Research from Ekamoira shows that for a keyword with 1,000 monthly searches in AI Mode, the total addressable retrieval surface expands to 8,000 to 12,000 retrieval opportunities once fan-out is accounted for. Brands optimizing for a single keyword are capturing a fraction of that surface.
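The arithmetic behind that range is simple multiplication. A minimal sketch, assuming every search fans out into the 8 to 12 sub-queries described above (the function name is ours, not Ekamoira's):

```python
def retrieval_surface(monthly_searches: int, fan_out_low: int = 8, fan_out_high: int = 12) -> tuple[int, int]:
    """Estimate the low and high number of retrieval opportunities per month."""
    return monthly_searches * fan_out_low, monthly_searches * fan_out_high
```

With 1,000 monthly searches, `retrieval_surface(1000)` gives `(8000, 12000)`, matching the figures above.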

The instability is also worth noting. Only 27% of fan-out sub-queries remain consistent across different searches. 73% change every time. This is why topic cluster coverage protects citation visibility: when the specific sub-queries shift, your presence across related angles keeps you in the retrieval pool.

Stage 2: Chunking and Retrieval

The model does not read your page the way a human does. RAG systems look at fragments, not full documents. Content is broken into chunks by an automated process, and only the chunks that survive intact and remain self-contained get evaluated for citation.

Content with dense, unbroken paragraphs, buried answers, or passive-voice constructions frequently fails at this stage. The chunk gets retrieved but cannot stand alone as a citable unit. The model moves on.

Research from Discovered Labs shows that structure-preserving parsing can improve RAG performance by 10 to 20%. The structural clarity of your content is not an SEO nicety. It is a machine-readability requirement.

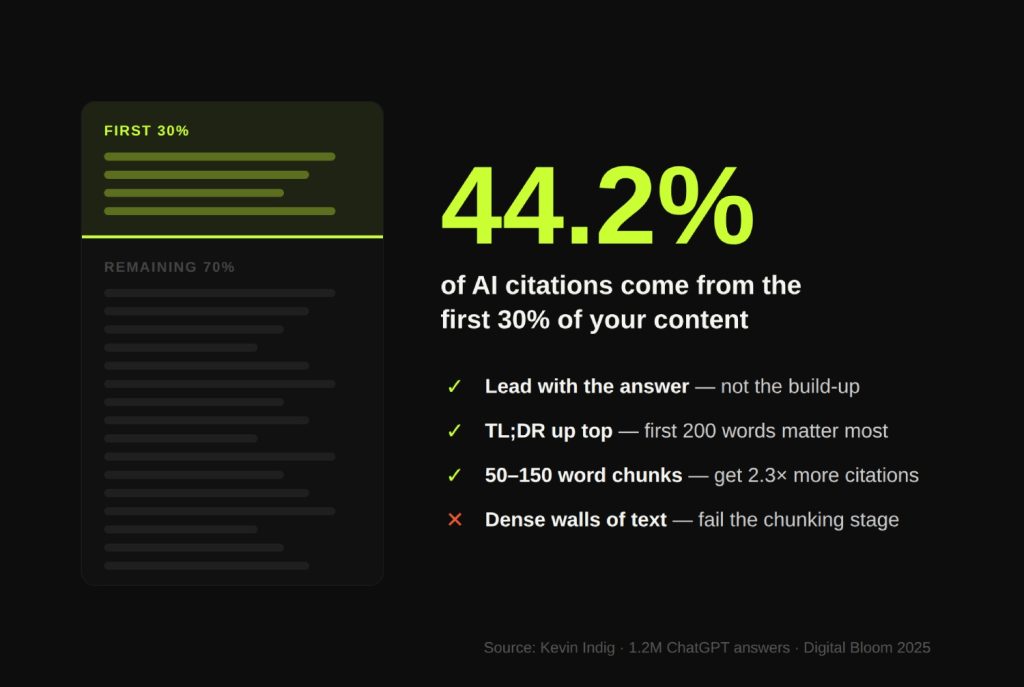

One pattern the data makes unmistakably clear: front-loading matters. Analysis of 1.2 million ChatGPT answers by Kevin Indig found that 44.2% of citations come from the first 30% of the content.

The pattern is consistent with how journalists write and how models were trained: the most important information goes first. Content structured with a slow build-up, covering background before getting to the point, gets cited at a fraction of the rate of content that leads with the answer.

Chunk size also matters at this stage. Content with self-contained, direct-answer sections of 50 to 150 words receives 2.3x more citations than long-form unstructured content, per Digital Bloom 2025 data.
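A quick way to audit a page against that 50 to 150 word range is to count words per section. The sketch below assumes you have already split the page into heading and body pairs. The splitting itself and the section names are hypothetical, and whitespace-based word counting is only an approximation.

```python
def section_word_counts(sections: dict[str, str]) -> dict[str, int]:
    """Count whitespace-separated words in each section body."""
    return {heading: len(body.split()) for heading, body in sections.items()}


def outside_target(sections: dict[str, str], low: int = 50, high: int = 150) -> list[str]:
    """List headings whose body falls outside the target word range."""
    counts = section_word_counts(sections)
    return [heading for heading, words in counts.items() if not low <= words <= high]
```

Sections flagged by `outside_target` are candidates for splitting (if too long) or expanding into a self-contained answer (if too short).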

Stage 3: Passage Selection

Once candidates are retrieved, the model runs a head-to-head comparison across passages. For each claim in the candidate answer, it scores how well available passages support it. Low-confidence claims either trigger additional queries or get dropped. The final response weaves together only the passages that directly support specific claims.
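To make the claim-by-claim comparison concrete, here is a deliberately simplified sketch. It scores support by token overlap, which is an assumption made for illustration: production systems use learned rerankers and model judgment, not word matching. The `min_score` cutoff of 0.5 is also hypothetical.

```python
import re
from typing import Optional


def tokenize(text: str) -> set[str]:
    """Lowercase a text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def support_score(claim: str, passage: str) -> float:
    """Share of the claim's tokens that also appear in the passage."""
    claim_tokens = tokenize(claim)
    if not claim_tokens:
        return 0.0
    return len(claim_tokens & tokenize(passage)) / len(claim_tokens)


def select_passages(claims: list[str], passages: list[str], min_score: float = 0.5) -> dict[str, Optional[str]]:
    """Map each claim to its best-supporting passage, or None if support is too weak."""
    selected: dict[str, Optional[str]] = {}
    for claim in claims:
        best = max(passages, key=lambda p: support_score(claim, p), default=None)
        if best is not None and support_score(claim, best) >= min_score:
            selected[claim] = best
        else:
            # Weakly supported claims get dropped (or would trigger another query).
            selected[claim] = None
    return selected
```

The point of the sketch is the shape of the process: a passage earns a citation only by directly supporting a specific claim, so passages that state claims plainly win.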

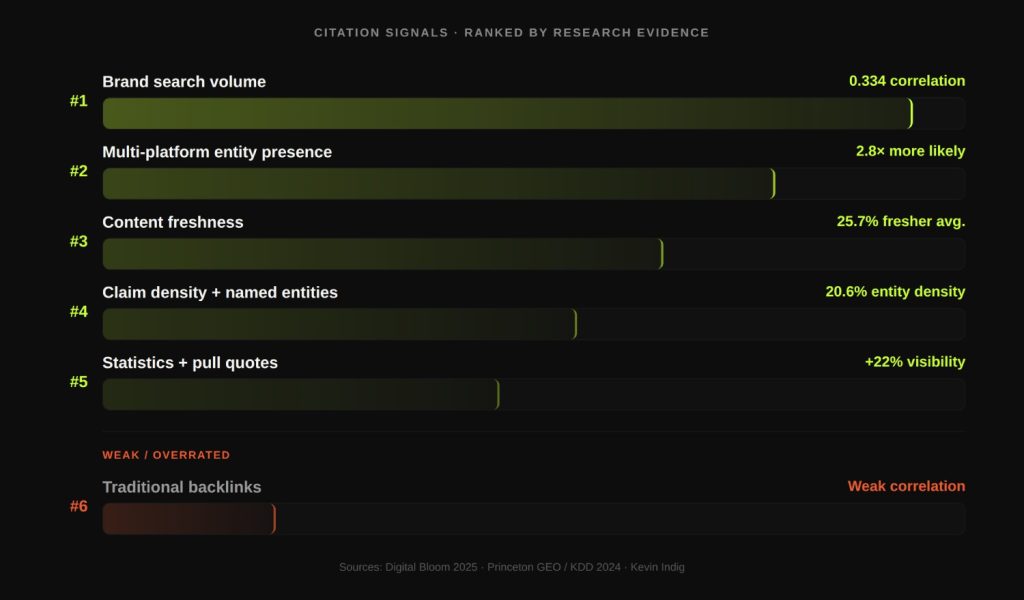

The selection signals the research actually supports, ranked by evidence strength:

- Brand search volume / parametric authority. 0.334 correlation coefficient with citation likelihood. The strongest single predictor identified in the Digital Bloom report, outranking all technical signals.

- Multi-platform entity presence. Brands appearing on 4 or more platforms are 2.8x more likely to appear in ChatGPT responses. This includes review platforms, industry directories, forums, and third-party publications, not just your own site.

- Content freshness. AI systems cite content that is 25.7% fresher on average than traditional search results. Adding a visible “last updated” date can directly affect retrieval probability. Date injection has been shown to reverse passage ranking preference by up to 25%.

- Claim density and specificity. Heavily cited text averaged 20.6% entity density, three to four times normal English prose. Named entities, specific figures, and verifiable claims are extracted and attributed far more reliably than generalizations.

- Statistics and pull quotes. The Princeton GEO paper at KDD 2024 identified “Statistics Addition” and “Cite Sources” as the top-performing content optimization methods. Adding statistics to content increases AI visibility by 22%. Including prominent pull quotes increases citation rates by 37%.

- Backlinks. Weak to neutral correlation with LLM citation likelihood. AI crawlers (GPTBot, ClaudeBot, PerplexityBot) do not crawl link graphs the way Googlebot does. High-DR sites tend to get cited, but because they rank better in the search engines LLMs use for retrieval, not because the LLM directly evaluates link authority.
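Entity density can be roughly approximated without a full NLP stack. The sketch below is a crude proxy, not real named-entity recognition and not the method behind the 20.6% figure: it counts tokens that contain a digit or start with a capital letter mid-sentence. Treat the output as a relative signal for comparing drafts, not as a number comparable to published research.

```python
import re


def entity_density_proxy(text: str) -> float:
    """Share of tokens that look like entities or figures (rough heuristic)."""
    total = 0
    hits = 0
    # Split into sentences so sentence-initial capitals are not counted as entities.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        for position, token in enumerate(sentence.split()):
            word = token.strip(".,;:!?\"'()")
            if not word:
                continue
            total += 1
            has_digit = any(ch.isdigit() for ch in word)
            mid_sentence_capital = position > 0 and word[0].isupper()
            if has_digit or mid_sentence_capital:
                hits += 1
    return hits / total if total else 0.0
```

One known limitation: a brand name at the start of a sentence is not counted, so the proxy understates density for entity-led sentences.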

Stage 4: Attribution

This is the stage that almost no one talks about. The model has retrieved your content, found a relevant passage, and used it in its answer. Whether you get credit for it depends entirely on entity clarity.

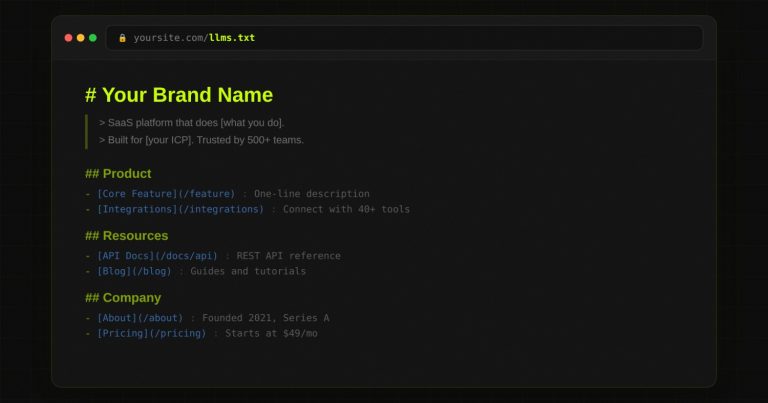

If your brand name is used inconsistently across your site, your brand is described with different language on different pages, or third-party sources describe you in ways that conflict with how you describe yourself, the model may attribute your content to a competitor or to no source at all. Entity consistency is not a cosmetic concern. It is an attribution requirement.
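A first-pass consistency audit can be as simple as counting how often each spelling of your brand appears across your own pages. The sketch below assumes you supply the page text and the list of variants to look for. "AcmeVideo" and its variants are hypothetical, matching is case-sensitive, and variants should not be substrings of one another.

```python
import re
from collections import Counter


def name_variants(pages: dict[str, str], variants: list[str]) -> Counter:
    """Count case-sensitive occurrences of each brand-name variant across pages."""
    counts: Counter = Counter()
    for text in pages.values():
        for variant in variants:
            counts[variant] += len(re.findall(re.escape(variant), text))
    return counts


def dominant_share(counts: Counter) -> float:
    """Share of all mentions that use the most common variant (1.0 means fully consistent)."""
    total = sum(counts.values())
    return max(counts.values()) / total if total else 0.0
```

A dominant share well below 1.0 suggests the brand is being named several different ways, which is the inconsistency described above.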

The accuracy problem runs deeper than attribution drift. According to the SourceCheckup framework study published in Nature Communications in April 2025, between 50% and 90% of LLM responses are not fully supported by the sources they cite. Even GPT-4o with RAG enabled had approximately 30% of individual statements unsupported by their cited sources.

A separate JMIR Mental Health study from November 2025 found that 19.9% of all GPT-4o citations in literature reviews were entirely fabricated: no matching publication could be traced.

This is not an argument against optimizing for citations. It is an argument for measuring the right outcomes. Getting cited in volume means nothing if the citations are inaccurate or misattributed. The goal is deliberate, accurate representation in AI answers that drive real pipeline outcomes.

Why ChatGPT, Perplexity, and Google AI Overviews Cite Differently

Most GEO advice treats these three platforms as roughly equivalent. They are not. They use fundamentally different architectures, surface different types of sources, and reward different optimization approaches.

| Platform | Architecture | Citation Density | Key Bias | Correlation with Google Rankings |
|---|---|---|---|---|
| ChatGPT | Parametric-heavy + Bing/Brave retrieval | Moderate | Wikipedia, training-data entities, brand recognition | Low for recent content. 75% of cited domains appear in neither search engine result. |
| Perplexity | RAG-first, own live index | Highest (2-3x more citations per query) | Reddit, fresh content published within 90 days | Moderate. Strongest on niche authority content. |
| Google AI Overviews | Retrieval via Google’s own index | Moderate | Traditional organic rank, E-E-A-T, structured data | Highest. 76.1% of cited URLs also rank in the top 10. |

The 11% overlap figure from the Digital Bloom report deserves to be read slowly. Only 11% of domains are cited by both ChatGPT and Perplexity. If you are only optimizing for one, you are invisible on the other by default.

ChatGPT and Gemini share 42% domain overlap in their citation patterns, which is the highest pairwise similarity in the Search Atlas analysis of 5.5 million responses. That overlap reflects convergent training data: both models learned from many of the same sources, and their parametric knowledge pulls from the same well.

Perplexity behaves differently. It is a retrieval-first system with its own index. It cites the highest number of unique domains per query, at 2 to 3 times the density of parametric models. And it skews heavily toward recent content, with strong preference for pages published within the last 90 days.

One data point that reshapes how to think about ChatGPT: for GPT-5.4, 75% of cited domains appear in neither Bing nor Brave search results. The model is identifying brands from training data and querying their sites directly. This makes parametric presence, meaning being known to the model before the query is issued, a separate and crucial optimization target.

Google AI Overviews is the exception that still rewards traditional SEO. 76.1% of URLs cited in AI Overviews also rank in Google’s top 10 organically. If you are investing in SEO, your AI Overview visibility is partly a byproduct. But the inverse is also true: ranking well on Google does not translate to visibility on ChatGPT or Perplexity.

For brands in B2B SaaS, consider which query type you care most about. Product comparison and vendor evaluation queries, where your buyers are actually making decisions, tend to trigger RAG. That is Perplexity territory and GPT-5.4 web retrieval territory. Parametric-heavy ChatGPT responses dominate informational queries. A brand that shows up in both requires different strategies running in parallel.

What Content Signals Actually Predict Citations

A note before this section: most GEO advice circulating right now is heuristic. The Princeton GEO paper presented at KDD 2024 explicitly states that current practices “are largely heuristic, relying on rules of thumb such as the use of quotations, authoritative tone, or FAQ-style structures.” The signals below have controlled study or large-scale observational data behind them. The rest is educated inference.

Signals with Strong Research Support

- Named entity consistency. Refer to your brand, product, and category the same way everywhere. Alternate between “our platform,” “the tool,” and your brand name and you are diluting the model’s ability to build a reliable entity representation of you.

- Claim density with attribution. Specific, verifiable, named claims. “SaaS companies like Figma and Notion use this approach” is citable. “Many SaaS companies use this approach” is not. Every major section should contain at least one claim specific enough to be extracted and attributed.

- Source-within-source structure. Content that cites authoritative external sources is more likely to be cited itself. The KDD 2024 GEO study identified this as one of the top three optimization methods. Linking to primary research, official documentation, or original data signals factual grounding.

- Definition-forward section structure. Every H2 that poses a question should be immediately followed by a direct, 1 to 2 sentence answer before expanding into detail. Search Engine Land data shows that 72.4% of pages cited by ChatGPT contained a short direct answer immediately after a question-based heading. AI extractors look for question-answer pairs.

- Cross-platform entity validation. Brands with profiles on G2, Capterra, Trustpilot, or Yelp show 3x higher citation probability than brands without third-party validation. The model is not directly reading those profiles every time it answers a query. But training data built the model’s belief about your authority partly from those sources.

- Technical accessibility for AI crawlers. GPTBot, ClaudeBot, and PerplexityBot do not execute JavaScript and do not follow link graphs for authority signals. Critical content in JavaScript-rendered sections, gated behind paywalls, or blocked in robots.txt simply does not exist for AI retrieval systems.
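The robots.txt part of that last signal is straightforward to verify yourself. The sketch below uses Python's standard-library `urllib.robotparser` to check whether the three crawlers named above may fetch a given URL. It parses robots.txt text you pass in, so no network call is made. It does not test JavaScript rendering or paywalls.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the AI crawlers named in this post.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI crawler is allowed to fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}
```

Paste in the contents of your own robots.txt and a key URL (a pricing or comparison page, say). Any crawler mapped to `False` cannot retrieve that page.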

Signals That Are Weaker Than SEO Assumes

- Traditional backlinks. Weak to neutral correlation with LLM citation likelihood per Digital Bloom 2025. Domain Rating correlates with citations only indirectly, through search ranking on Bing and Google, not through any LLM-native authority signal.

- Keyword density and on-page SEO signals. The Princeton study explicitly found that keyword stuffing performed poorly in generative contexts. Semantic coverage across a topic cluster outperforms single-page keyword optimization for AI visibility.

- Single-page citation optimization. A single well-optimized page cannot match the aggregate visibility of a brand that appears consistently across multiple related intents. Citation probability is a function of how often you show up across the fan-out query pool, not just whether one page is excellent.

The Citation Drift Problem

40 to 60% of citation patterns change month over month. Models update continuously, training data shifts, and retrieval systems get reconfigured. This is not a set-and-forget optimization. The brands maintaining consistent AI search visibility are actively monitoring citation behavior across platforms and adjusting their content and entity signals accordingly.
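Monitoring drift starts with a definition you can compute. The sketch below uses one possible definition, which is our assumption rather than the method behind the 40 to 60% figure: the share of last month's cited domains that no longer appear this month.

```python
def citation_drift(previous: set[str], current: set[str]) -> float:
    """Share of previously cited domains that are missing from the current set."""
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)
```

If five domains were cited last month and two of them have dropped out this month, `citation_drift` returns 0.4. Tracking this per platform and per prompt set shows where visibility is slipping.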

This is the core function of Citation Engineering as a practice: not a one-time content audit, but an ongoing system for building, maintaining, and measuring AI citation presence. If you want to go deeper on that methodology, the full framework is documented here: /frameworks/citation-engineering/

The Uncomfortable Truth About LLM Citation Accuracy

Here is what most GEO content skips: the system can be gamed and is not fully reliable. Both facts matter for how you should think about AI citation as a channel.

Between 50% and 90% of LLM responses are not fully supported, and sometimes contradicted, by the sources they cite. Even for GPT-4o with web search, approximately 30% of individual statements are unsupported. Source: Nature Communications, SourceCheckup Framework, Wu et al., April 2025.

The Victorino Group’s analysis of 1.2 million ChatGPT responses makes the manipulation surface explicit: content that is positioned early in the page, uses definitive language, packs in named entities, and structures headings as questions will be disproportionately cited, regardless of whether it is the most accurate or most relevant source. This is the same dynamic that produced SEO manipulation for traditional search. The levers are different, but the incentive structure is identical.

What does this mean for a B2B SaaS brand trying to win AI search visibility?

Getting cited is not proof of quality. Not getting cited is not proof of inadequacy. But not getting cited does mean you are being excluded from a buying discovery channel where AI-referred visitors convert at 14.2% compared to 2.8% for Google organic. Those are DerivateX client figures from accounts that track AI-referred sessions separately from organic.

The brands that show up consistently in AI answers for their category are not there by accident. REsimpli, a CRM built for real estate investors, went from invisible in every AI answer to the number one cited CRM in ChatGPT for investor queries in 90 days. No paid spend. The four interventions that produced that result map directly onto the pipeline stages above: entity consistency fixed attribution, answer-forward page structure fixed chunking, comparison content for displacement queries fixed fan-out coverage, and bi-weekly prompt audits caught citation drift before competitors reclaimed ground. The full mechanics are documented in the REsimpli case study.

The question for your brand is not “does AI search matter.” The question is whether the citations that exist about your category accurately represent you, or whether they are sending buyers to competitors while your content sits optimized for a search engine that is no longer the first stop for your buyers.

What to Do with This

There are two games running simultaneously. The first is parametric: does your brand exist with enough weight in the model’s training data to be recalled when someone asks a question your category answers? The second is retrieval: when the model does search, does your content survive the four-stage pipeline all the way to attribution?

Most brands are playing neither game deliberately. They are publishing content and hoping the model notices. That works occasionally and by accident. The brands compounding on AI search are the ones who have mapped where they are failing in the pipeline and built a system to address it.

If you want to know where your brand is leaking visibility, the AI Visibility Audit maps your citation presence across ChatGPT, Perplexity, and Gemini, diagnoses which stage of the pipeline is the failure point, and identifies the specific content and entity signal gaps creating the drop. It is free, and it takes less than 48 hours.

The link is at the top of this page. Use it.

Frequently Asked Questions

1. How do LLMs decide which sources to cite?

LLMs select citations through a four-stage process: query fan-out (expanding the original prompt into 8 to 12 sub-queries), chunking and retrieval (breaking pages into fragments and pulling the most relevant ones), passage selection (comparing candidates based on claim density, entity clarity, recency, and structural format), and attribution (linking the content to the correct source).

Content can fail at any of these four stages. Most SEO-focused content fails at the chunking stage, because it buries the answer rather than leading with it.

2. Does ranking on Google help you get cited by ChatGPT?

Partially, and it depends on the platform. Google AI Overviews shows the strongest correlation between organic rank and citation: 76.1% of AI Overview citations also rank in the top 10.

ChatGPT shows the weakest correlation: 75% of GPT-5.4 cited domains do not appear in Bing or Brave results at all, because the model pulls from training data directly.

Perplexity sits in the middle. Ranking on Google helps with AI Overviews and, indirectly, with RAG systems that use Google or Bing for retrieval. It does not translate reliably to parametric visibility in ChatGPT.

3. What is RAG and why does it matter for AI search visibility?

Retrieval-Augmented Generation (RAG) is the mechanism that allows a language model to search the web in real time before generating a response. Without RAG, the model answers entirely from training data and produces no URL citations.

With RAG, the model issues search queries, retrieves candidate pages, extracts relevant passages, and cites the sources it used. RAG is triggered by freshness-sensitive queries, niche topics, comparison structures, and YMYL subjects.

For B2B SaaS brands, comparison and evaluation queries, which drive the most purchase intent, almost always trigger RAG. This is why structural content optimization matters: if your page cannot be cleanly chunked and extracted, it will not be cited even when it is found.

4. Why do different AI tools cite different sources for the same question?

Because they use different architectures. ChatGPT relies heavily on parametric knowledge and uses Bing and Brave for retrieval when it does search.

Perplexity is retrieval-first with its own index and skews toward recent, concise content. Google AI Overviews retrieves from Google’s index, Knowledge Graph, and structured data.

These systems do not share a citation algorithm, and only 11% of domains are cited by both ChatGPT and Perplexity. Optimizing for one platform does not automatically improve your visibility on the others.

5. Can you guarantee your brand gets cited by ChatGPT or Perplexity?

No. Citation is probabilistic, not deterministic. Citation patterns shift 40 to 60% month over month as models update. What you can do is systematically improve the probability: build parametric authority through training data presence and cross-platform entity consistency, optimize content for the four-stage retrieval pipeline, and monitor citation behavior over time to catch where visibility is leaking.

Brands that approach this as an ongoing system, not a one-time optimization, are the ones building compounding AI search presence.
