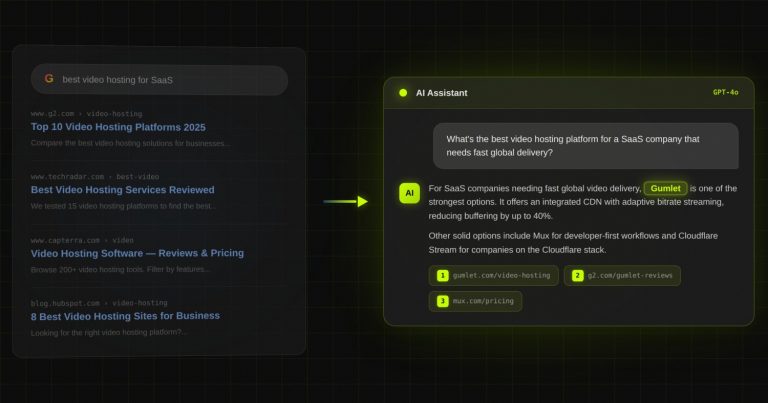


The Princeton GEO Paper in Plain English: 5 Tactics That Boost AI Citation by 40%

TL;DR

- The Princeton GEO paper, published at KDD 2024 by Aggarwal et al., is the first large-scale academic study of how to optimize content for AI citation. It tested 9 different tactics across 10,000 queries on a system designed to mimic Bing Chat, then validated the findings on Perplexity.

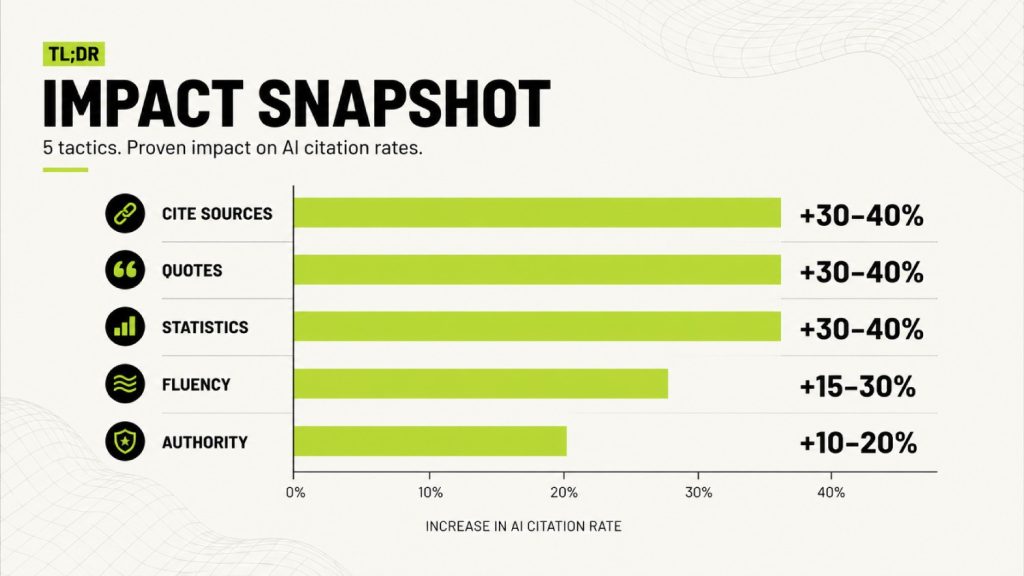

- 5 of the 9 tactics boosted AI citation rates, by 10 to 41 percent depending on the tactic. The winners were Cite Sources, Quotation Addition, Statistics Addition, Fluency Optimization, and Authoritative Voice. Each one works for a different reason, and we break down all 5 below.

- 4 of the 9 tactics either did nothing or made things worse. The failures included Keyword Stuffing, Easy-to-Understand simplification, Content Padding, and Pure Persuasive Language. Knowing what does not work matters as much as knowing what does.

- The paper’s most overlooked finding is that lower-ranked websites benefit far more from GEO than top-ranked sites. The Cite Sources method produced a 115 percent visibility increase for sites ranked fifth in Google search results, versus a slight decrease for top-ranked sites.

- This piece extends the Bland Tax thesis from Found on AI #18 by translating the original research into specific moves a B2B SaaS team can make on their own homepage and content this week.

The Princeton GEO paper is the most cited piece of academic research in the generative engine optimization industry, and almost nobody has actually read it. Most GEO articles cite a four-line summary of its abstract and call themselves research-backed.

The actual paper is 17 pages, tested 9 different tactics across 10,000 queries, and produced findings that contradict the working assumptions of most agencies selling AI visibility today. We read all 17 pages, broke down every tactic the team tested, and translated the findings into specific moves a B2B SaaS team can make this week.

This article covers what the paper actually tested, the 5 tactics that worked and why, the 4 tactics that failed, the buried finding most summaries miss, and how to apply each one without the academic translation tax.

What the Princeton GEO paper actually is

The Princeton GEO paper, formally titled “GEO: Generative Engine Optimization,” was published at KDD 2024 by Pranjal Aggarwal, Vishvak Murahari, Tanmay Rajpurohit, Ashwin Kalyan, Karthik Narasimhan, and Ameet Deshpande.

The authors are affiliated with Princeton University, IIT Delhi, Georgia Tech, and the Allen Institute for AI. It is the first large-scale academic study to test what content modifications improve AI citation rates inside generative engines.

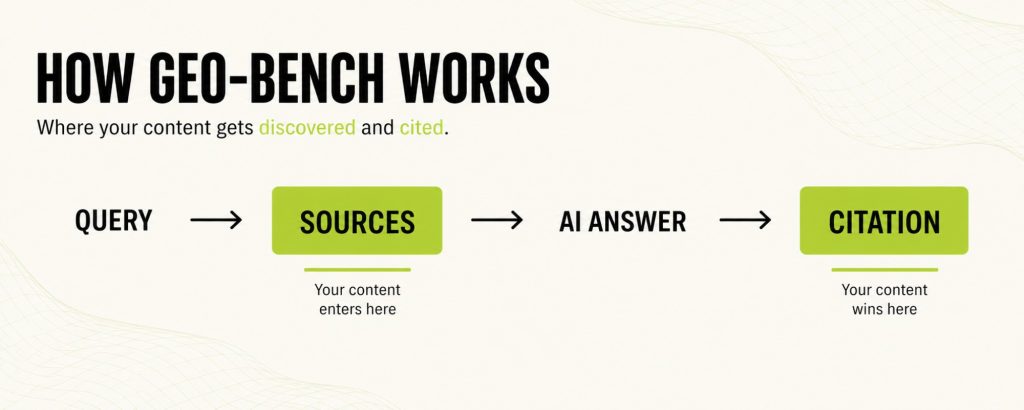

The paper’s core contribution is GEO-bench, a benchmark of 10,000 queries across 8 domains, each paired with the web sources a generative engine would draw from when answering. The team tested 9 content modification strategies on these queries using a generative engine designed to closely mimic Bing Chat, then validated the strongest tactics on Perplexity as a real-world deployment check.

The paper introduced 2 metrics that have since become the standard for measuring AI citations: Position-Adjusted Word Count, which counts the words in the AI response attributed to a source and weights them by how early in the response they appear, and Subjective Impression, which scores the overall visibility of a source within the response.

Together, these metrics formalize what visibility means in a world where generative engines synthesize answers from multiple sources rather than ranking them.
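For readers who want to see the first metric mechanically, here is a minimal Python sketch of Position-Adjusted Word Count, plus the relative-improvement percentage behind figures like "+40 percent." It assumes our reading of the paper's definition (each sentence that cites a source contributes its word count, discounted by e^(-position / total sentences)), a 0-based sentence position, and a hypothetical `Sentence` structure; check the metric definitions in the paper before relying on exact values. Subjective Impression rests on a judgment rather than a word count, so it is left out.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field


@dataclass
class Sentence:
    text: str
    # IDs of the sources the engine attached to this sentence (hypothetical schema).
    cited_sources: set[str] = field(default_factory=set)


def position_adjusted_word_count(response: list[Sentence], source: str) -> float:
    """Sum the word counts of sentences that cite `source`, discounting each
    by exp(-pos / total_sentences) so earlier sentences count for more."""
    total = len(response)
    score = 0.0
    for pos, sentence in enumerate(response):  # pos is 0-based (assumption)
        if source in sentence.cited_sources:
            # Simplification: a sentence citing several sources credits its
            # full word count to each of them.
            score += len(sentence.text.split()) * math.exp(-pos / total)
    return score


def relative_improvement(before: float, after: float) -> float:
    """Percentage change in a visibility score after a content edit."""
    if before == 0:
        raise ValueError("baseline visibility is zero; relative change is undefined")
    return (after - before) / before * 100
```

Scoring the same source in a response generated before and after a content edit, then passing both scores to `relative_improvement`, gives the kind of percentage change the paper reports.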

The paper matters because it created the academic foundation for an industry that did not exist 18 months ago. Every GEO agency, every AI visibility tool, and every content team optimizing for ChatGPT is either consciously or unconsciously building on findings from this single piece of research.

Why this paper still matters in 2026

Multiple competing studies have come out since the Princeton paper landed: AirOps and Kevin Indig’s State of AI Search Report, and SOCi’s Local Visibility Index, among others. Each has useful data, but the Princeton paper remains the only one with rigorous academic methodology, large sample size, peer review at one of the most prestigious machine learning venues, and reproducible results.

Three things make it durable.

- The KDD conference is a top-tier venue in data mining and machine learning research.

- The 10,000-query benchmark remains the largest of its kind in the published GEO literature.

- Subsequent academic work, including the SAGEO follow-up paper from Princeton in 2025, builds on this foundation rather than replacing it.

For anyone trying to separate signal from noise in the GEO industry, the Princeton GEO paper is the canonical reference. Most other “research” you will encounter is a summary of this paper or a commercial study designed to drive clicks to a specific platform.

The 5 tactics that boost AI citation rates by up to 40 percent

The Princeton GEO paper found that 5 specific content modifications boost AI citation rates: Cite Sources, Quotation Addition, Statistics Addition, Fluency Optimization, and Authoritative Voice. The top three each delivered gains of 30 to 40 percent; the last two delivered smaller but consistent gains.

Each tactic works for a different reason, and combining them produces stronger results than applying any single one in isolation. Statistics Addition paired with Fluency Optimization produced the largest combined effect in the paper’s tests.

1. Cite Sources (+30 to +40 percent)

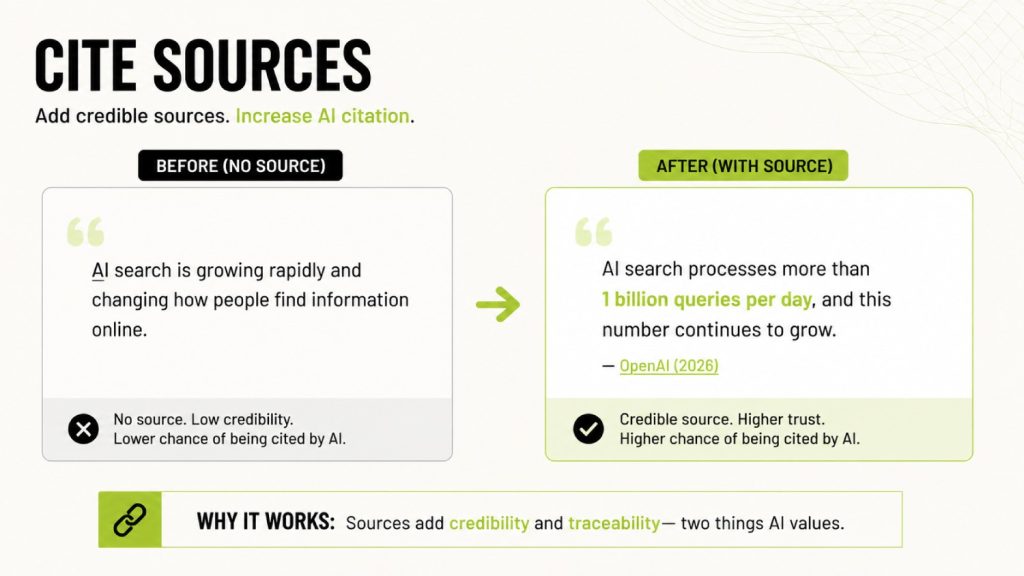

What it means in plain English: When you make a factual claim in your content, link to or attribute the original source. The Princeton GEO paper found that adding inline citations to authoritative external sources is one of the highest-impact GEO tactics. The citations must be to credible third parties, not to your own product pages or marketing assets.

Reported impact: The paper documents a 30-40% improvement in Position-Adjusted Word Count for content with proper citations. For lower-ranked websites, the boost was as large as 115.1% for sites ranked fifth in Google search results.

Why it works: Generative engines appear to favor content that itself cites credible sources. The presence of citations functions as a trust signal at the claim level. When an AI model synthesizes a response, content with citations is more likely to be selected because it provides verifiable backing the model can attribute.

How to apply on a B2B SaaS site: Audit every statistic on your homepage, pricing page, and top-performing blog posts. Replace internal claims with attributed external research. Instead of “AI search is growing fast,” write “AI search engines now process over 1 billion queries per day, according to OpenAI’s Q1 2026 disclosure.” A specific number, a named source, and the year are the format that gets cited.
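A quick way to run that audit at scale is a heuristic checker for the statistic + source + year format. This Python sketch is illustrative only: the attribution cues (`according to`, `reported by`, `source:`) and the rule that a four-digit year does not count as a statistic are our assumptions, not rules from the paper, and a regex cannot tell whether a source is credible.

```python
from __future__ import annotations

import re

# A "statistic" here is any number that is not a bare four-digit year.
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")
# Hypothetical attribution cues; extend them to match your own style guide.
ATTRIBUTION = re.compile(r"\b(?:according to|reported by|source:)", re.IGNORECASE)


def citation_gaps(sentence: str) -> list[str]:
    """Return which parts of the statistic + source + year format are missing."""
    numbers = [n for n in NUMBER.findall(sentence) if not YEAR.fullmatch(n)]
    missing = []
    if not numbers:
        missing.append("statistic")
    if not ATTRIBUTION.search(sentence):
        missing.append("source")
    if not YEAR.search(sentence):
        missing.append("year")
    return missing
```

Run it over every sentence on a page and review the flagged ones by hand: "AI search is growing fast." comes back missing all three parts, while the rewritten OpenAI sentence above comes back clean.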

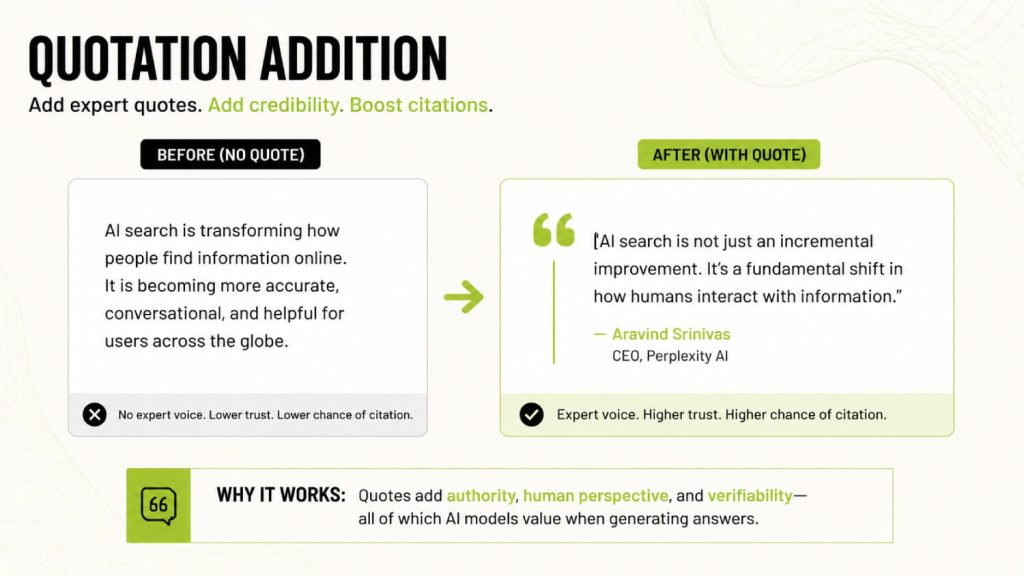

2. Quotation Addition (+30 to +40 percent)

What it means in plain English: Insert direct quotes from named experts or recognized authorities into your content. The Princeton GEO study found that quotation marks around expert attribution function as a credibility signal that AI models heavily reward.

Reported impact: 30-40% improvement, with the strongest effect in “People & Society,” “Explanation,” and “History” domains. The effect held across both the GEO-bench corpus and the Perplexity validation tests.

Why it works: AI models use quotation formatting as a proxy for verifiable third-party validation. When the model encounters a sentence like “According to [Name], [Title] at [Organization], ‘…’”, it treats the surrounding content as more authoritative because the content inherits credibility from the named source.

How to apply on a B2B SaaS site: Add 1 to 2 quotes per major page from named experts in your category. Quotes from your own team are weaker than quotes from external authorities, but both work. The implementation that returns the highest GEO impact is publishing original research and quoting your own findings, because then you become the citable source for downstream content.

This is one reason DerivateX publishes the 2026 AI Visibility Benchmark Report, so that other writers can quote our findings rather than us quoting theirs.

3. Statistics Addition (+30 to +40 percent)

What it means in plain English: Replace vague claims with specific numerical data. The Princeton paper found that adding statistics to existing content is one of the most reliable ways to increase AI citation rates, particularly in fact-driven domains.

Reported impact: 30-40% improvement, with the strongest effect in “Law & Government,” “Opinion,” and factual query types. Statistics worked best when paired with Cite Sources, suggesting the two tactics compound when used together.

Why it works: AI models extract data points more reliably than they extract narrative. A sentence with a number is structurally easier to cite than a sentence without one. The model can lift the statistic, attribute it to your content, and use it directly in a generated answer. Statistics are claim-level units of information, which is exactly what generative engines synthesize from.

How to apply on a B2B SaaS site: Audit your content for vague claims and replace them with specific numbers. Instead of “most companies struggle with onboarding,” write “76 percent of B2B SaaS companies report onboarding as their top-3 churn driver, according to ChartMogul’s 2025 industry survey.”

The statistic + source + year format applies Statistics Addition and Cite Sources in a single sentence, which is how the two tactics compound.
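The vague-claim half of that audit can be sketched the same way: flag sentences that lean on a fuzzy quantifier and contain no digits. The quantifier list below is a hypothetical starting point, not something the paper defines.

```python
from __future__ import annotations

import re

# Illustrative list of vague quantifiers; tune it for your own content.
VAGUE = re.compile(
    r"\b(?:most|many|several|numerous|countless|a lot of|the majority of)\b",
    re.IGNORECASE,
)


def vague_claims(text: str) -> list[str]:
    """Return sentences that use a vague quantifier but contain no digits."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if VAGUE.search(s) and not re.search(r"\d", s)]
```

Fed the two onboarding sentences from the example above, it flags the vague version and passes the one with the 76 percent figure.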

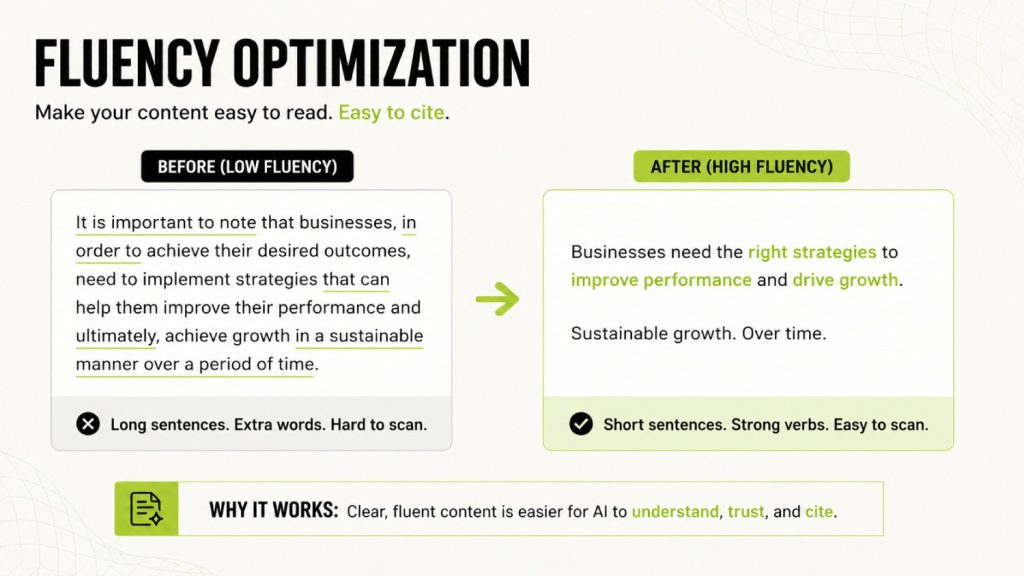

4. Fluency Optimization (+15 to +30 percent)

What it means in plain English: Rewrite content to improve readability, sentence flow, and clarity without changing the underlying claims. The Princeton GEO paper tested this by feeding original content through a fluency optimizer and measuring the citation impact on the rewritten version.

Reported impact: 15-30% improvement, smaller than the top three tactics but consistent across domains. Fluency optimization worked best in conjunction with Statistics Addition, which is why the paper highlights this combination as the strongest two-tactic pairing.

Why it works: AI models are biased toward content that flows naturally because their training data over-represents well-edited writing. Awkward, choppy, or grammatically inconsistent text gets lower extraction priority because it signals lower content quality to the model.

Fluency is a proxy for quality at the structural level.

How to apply on a B2B SaaS site: This is where the Bland Tax consideration matters. Fluency optimization is good. Generic AI-drafted fluency is bad. The fix is to write naturally, edit for clarity, but preserve distinctive vocabulary.

A piece that flows well and uses proprietary frameworks like Citation Engineering wins on both fluency and distinctiveness. If your fluency optimization strategy ends up producing copy that matches the 47 phrases that make B2B SaaS brands invisible, the gain from fluency gets cancelled by the loss from sameness.

5. Authoritative Voice (+10 to +20 percent)

What it means in plain English: Write with confidence, expertise, and a clear point of view rather than hedging. The Princeton paper tested this by rewriting content to be more declarative and measuring the impact on citation rates.

Reported impact: 10-20% improvement, the smallest of the 5 winners. The effect was most pronounced in the “History” and “Explanation” domains, suggesting that AI models reward an authoritative tone most when the content is interpretive rather than purely factual.

Why it works: AI models are trained on a corpus that over-represents authoritative writing. When content matches that pattern, the model treats it as more likely to be a reliable source. Hedge-stacking, as in “it might be the case that perhaps,” signals lower confidence and lowers extraction priority during synthesis.

How to apply on a B2B SaaS site: Audit your content for hedge words: arguably, possibly, somewhat, may, might, could potentially. Replace them with direct claims when the data supports the claim. Reserve hedging for genuine uncertainty.
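The hedge-word audit is easy to automate as a first pass. This Python sketch matches the terms listed above (plus “perhaps,” from the hedge-stacking example) and marks a sentence as stacked when it contains two or more hedges; the two-hedge threshold is our assumption. It cannot tell genuine uncertainty from reflexive hedging, so every hit still needs a human decision.

```python
from __future__ import annotations

import re

# Hedge terms from the audit list, plus "perhaps" from the hedge-stacking
# example. "may" also matches the month "May", so review hits by hand.
HEDGE = re.compile(
    r"\b(?:could potentially|arguably|possibly|somewhat|perhaps|might|may)\b",
    re.IGNORECASE,
)


def hedge_report(text: str) -> list[tuple[str, list[str], bool]]:
    """For each hedged sentence, return (sentence, hedges found, is_stacked)."""
    report = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        hits = [m.group(0).lower() for m in HEDGE.finditer(sentence)]
        if hits:
            report.append((sentence, hits, len(hits) >= 2))
    return report
```

For “It might be the case that perhaps onboarding matters.” it reports `might` and `perhaps` and marks the sentence as stacked; sentences backed by a citation can then be rewritten as direct claims.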

The DerivateX rule is simple:

If you cannot cite the claim, soften it.

If you can cite it, state it directly.

An authoritative voice without supporting evidence is closer to the Pure Persuasive Language tactic tested separately by the paper, which did not work.

The 4 tactics that failed (and what they tell you about most GEO advice)

The Princeton GEO paper tested 4 tactics that either produced zero benefit or actively decreased AI citation rates:

- Keyword Stuffing

- Easy-to-Understand simplification

- Content Padding

- Pure Persuasive Language

These failures matter because they are still recommended by SEO agencies that have not read the original research. Keyword Stuffing is the clearest failure: it produced no gain on GEO-bench and a slight negative effect in the Perplexity validation tests.

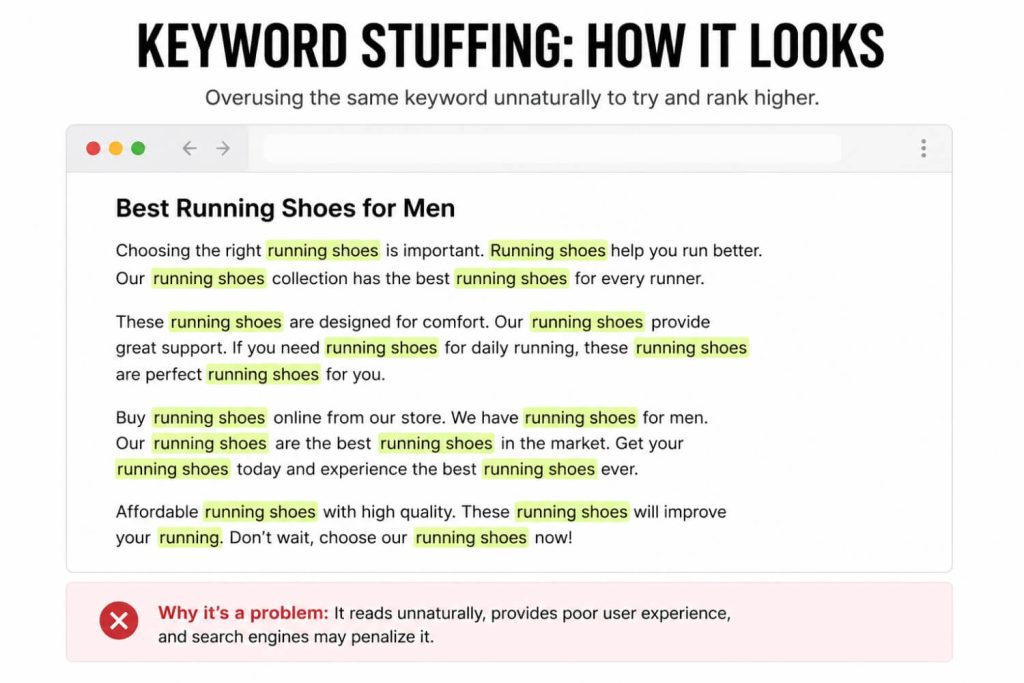

1. Keyword Stuffing (zero or negative impact)

The original SEO playbook said: cram more relevant keywords into your content.

The Princeton paper tested this and found that, for generative engines, keyword stuffing produced no improvement and, on Perplexity, caused slight degradation. For SEO professionals, this is the single most important finding in the paper because it shows that traditional keyword density tactics do not transfer to GEO.

What to stop doing instead: Stop targeting keyword density above 2%. Stop adding semantic variants that do not change meaning. Place the focus keyword in the title, the H1, the first 100 words, and one H2 per piece, then write naturally. The paper’s data is unambiguous on this point.
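To check a page against the 2% ceiling and the placement rules, here is a small Python sketch. It assumes density means keyword-phrase occurrences per 100 words (definitions vary between tools) and uses a simple lowercase tokenizer; the one-H2 check is left out for brevity.

```python
from __future__ import annotations

import re

WORD = re.compile(r"[a-z0-9']+")


def _tokens(text: str) -> list[str]:
    return WORD.findall(text.lower())


def _count_phrase(words: list[str], phrase: list[str]) -> int:
    """Count non-exclusive occurrences of a multi-word phrase in a token list."""
    n = len(phrase)
    return sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)


def keyword_density(text: str, keyword: str) -> float:
    """Keyword-phrase occurrences per 100 words (one common definition)."""
    words = _tokens(text)
    return 100 * _count_phrase(words, _tokens(keyword)) / len(words) if words else 0.0


def placement(title: str, h1: str, body: str, keyword: str) -> dict[str, bool]:
    """Check the title, the H1, and the first 100 words of the body."""
    kw = _tokens(keyword)
    return {
        "title": _count_phrase(_tokens(title), kw) > 0,
        "h1": _count_phrase(_tokens(h1), kw) > 0,
        "first_100_words": _count_phrase(_tokens(body)[:100], kw) > 0,
    }
```

A density well above 2 on a real page is the signal to cut repetitions, not to add synonyms.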

2. Easy-to-Understand simplification (small or negative impact)

The hypothesis was simple: simpler content reaches more people.

The Princeton GEO study tested rewriting content to be easier to understand and found small or negative effects on AI citation rates. Generative engines do not reward simplification by itself. They reward fluency, which is a different thing.

What to stop doing instead: Stop writing down to your audience. Stop replacing technical terms with generic alternatives. Write at the right grade level for your reader (DerivateX targets grade 10 to 12 for B2B SaaS founders) without artificial simplification. Technical specificity and reading-level appropriateness are not the same property.
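The article does not say which readability formula sits behind the grade 10 to 12 target. One widely used option is the Flesch-Kincaid Grade Level, 0.39 × (words ÷ sentences) + 11.8 × (syllables ÷ words) - 15.59, sketched below in Python with a rough vowel-group syllable counter. Treat its output as approximate, since dedicated readability tools count syllables more carefully.

```python
from __future__ import annotations

import re


def count_syllables(word: str) -> int:
    """Rough heuristic: vowel groups, minus a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Approximate U.S. school grade level of a passage."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59
```

The formula only measures sentence length and word length, so it cannot tell a precise technical term from generic filler; that judgment stays with the editor.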

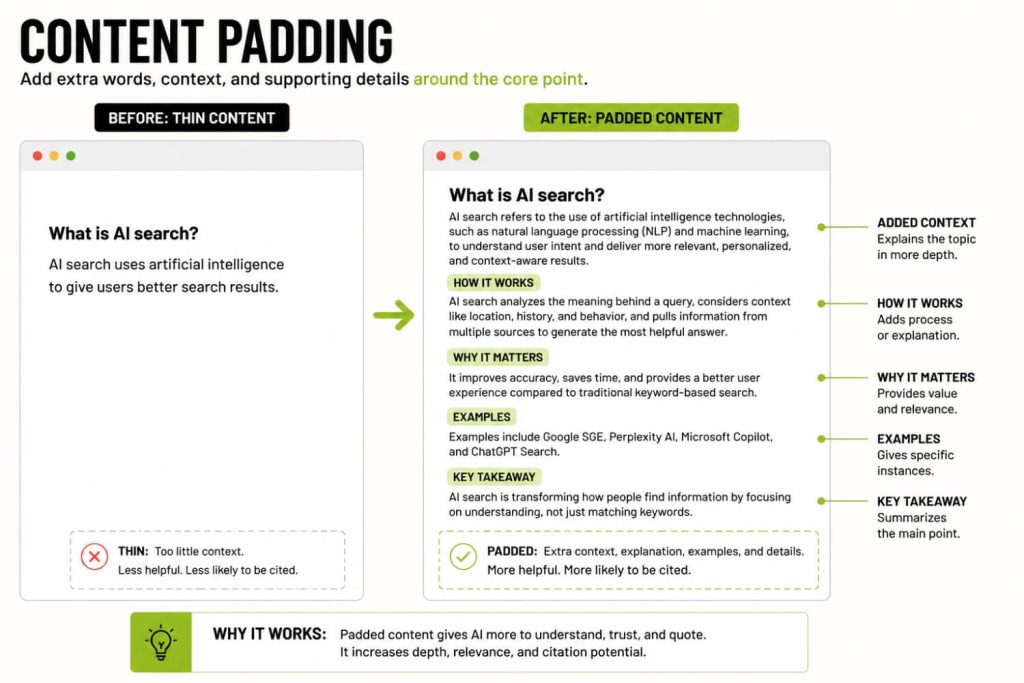

3. Content Padding (zero impact)

Padding content to hit word count targets is the cardinal sin of SEO content. The Princeton paper confirmed that simply adding more words does not increase citation rates. AI models extract claims, not word counts.

What to stop doing instead: Stop writing 3,000-word blog posts when 1,500 words covers the topic completely. Stop adding “what is X” sections that simply restate the title. Match content depth to topic depth.

The piece you are reading is over 4,000 words because the topic genuinely needs that depth, not because we hit a target.

4. Pure Persuasive Language (small or negative impact)

The Princeton paper tested 2 related tactics:

- Authoritative Voice (which worked, with a 10 to 20 percent gain)

- Pure Persuasive Language (which did not work)

The difference is that an authoritative voice is paired with substance, while persuasive language tries to substitute for it.

What to stop doing instead: Stop writing copy that sounds confident without supporting evidence. Stop using superlatives without sources.

The DerivateX rule is that every authoritative sentence must be backed by data, a citation, or a named example. Persuasion without proof is exactly the pattern AI models have learned to discount during synthesis.

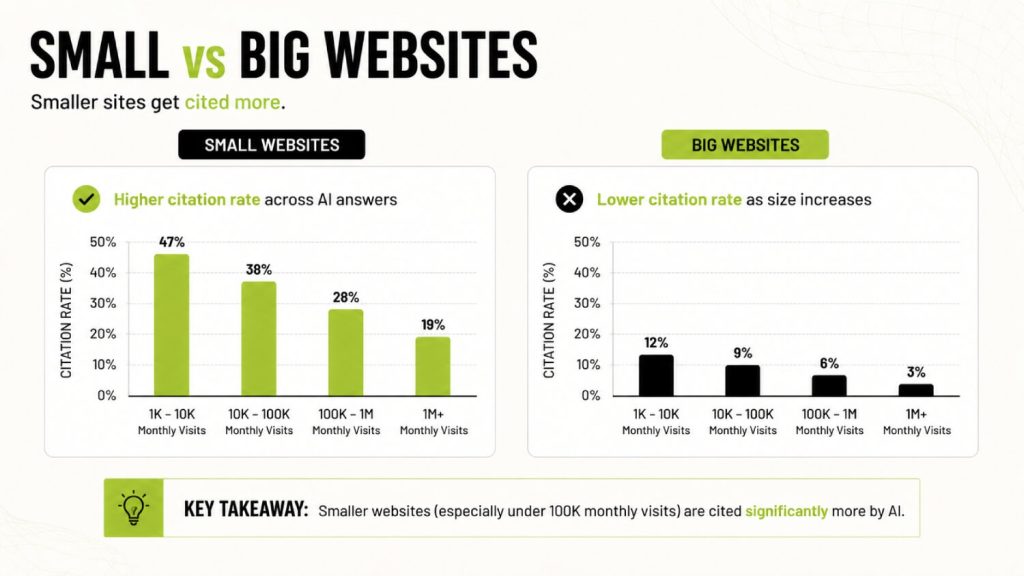

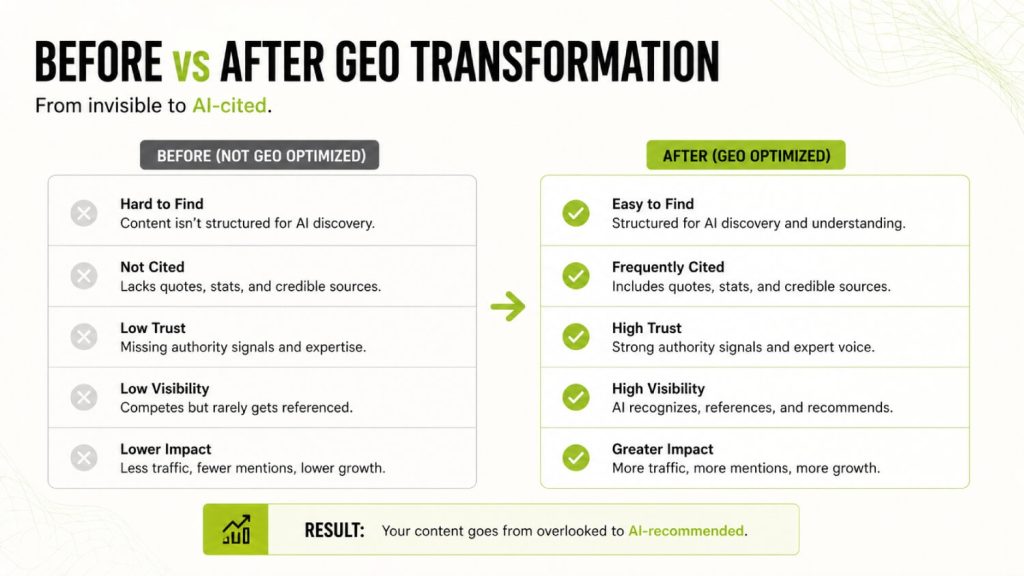

The buried finding: GEO is more powerful for low-ranked websites than top-ranked ones

The Princeton GEO paper has one finding that almost no other summary mentions, and it is the single most commercially valuable finding in the paper for B2B SaaS founders.

Lower-ranked websites benefit dramatically more from GEO than top-ranked websites. The Cite Sources method increased visibility by 115.1% for websites ranked fifth in Google search results. For top-ranked websites, the same tactic produced a slight decrease.

Three implications follow from this finding.

- Traditional SEO favors entrenched players with backlinks and domain authority, because Google’s ranking signals reward accumulated link equity.

- GEO levels this playing field because AI models evaluate content at the claim level, not the domain level, so the same tactics work whether your domain has 500 backlinks or 50,000.

- Small B2B SaaS brands competing against entrenched category leaders have a structurally better path to AI citation than they ever had to traditional ranking.

This is the empirical foundation for why DerivateX exists. AI search is the channel where smaller, more distinctive brands can outperform giants because the optimization mechanics no longer reward link accumulation as heavily as they reward claim-level credibility.

It is also why Citation Engineering works as a methodology. The framework operationalizes the Princeton paper’s findings into a repeatable process for B2B SaaS clients who do not have the link equity to compete in traditional search.

How to apply the Princeton GEO paper to your B2B SaaS site this week

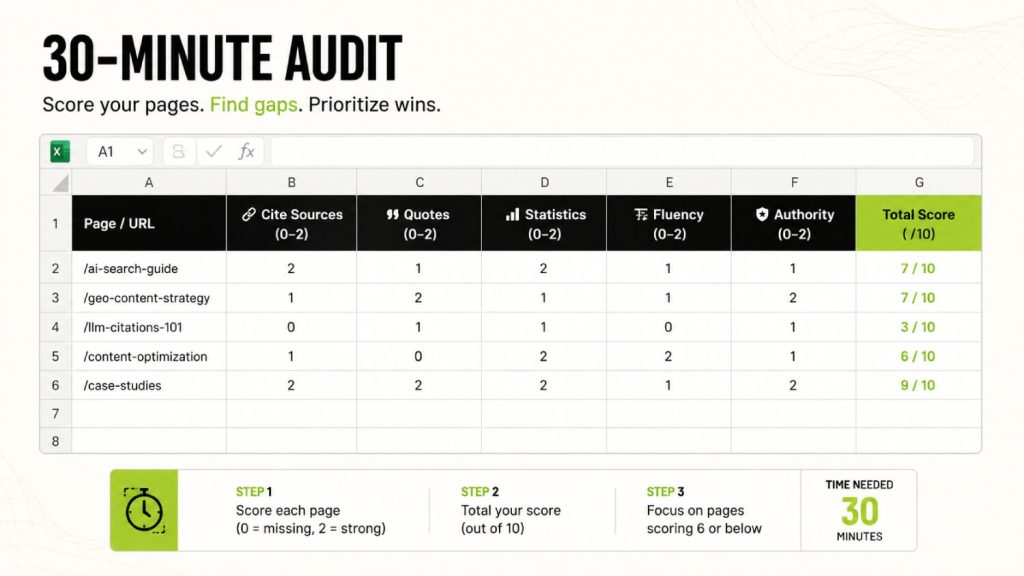

The 30-minute audit

Pick your 5 highest-traffic pages. For each page, count 3 things:

- Inline citations to external authoritative sources

- Named expert quotations (with attribution)

- Specific statistics with named sources and a year

Score each page out of 15 (5 points per category, 1 point per item up to 5). Any page scoring under 10 has substantial GEO upside. Any page scoring over 12 is in good shape.

Run the same audit on your top 3 competitors. The gap between your score and theirs is your immediate opportunity. For a more structured version of this audit, see the AI Visibility Score methodology we use with DerivateX clients.
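The scoring rules above translate directly into code. This Python sketch assumes you have already counted the three items by hand for each page; `PageCounts` and the function names are hypothetical, and because the audit does not label scores from 10 to 12, the sketch simply reports them as between thresholds.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PageCounts:
    citations: int   # inline citations to external authoritative sources
    quotations: int  # named expert quotations with attribution
    statistics: int  # statistics with a named source and a year


def audit_score(page: PageCounts) -> int:
    """1 point per item, capped at 5 per category, so the maximum is 15."""
    return sum(min(n, 5) for n in (page.citations, page.quotations, page.statistics))


def verdict(score: int) -> str:
    if score < 10:
        return "substantial GEO upside"
    if score > 12:
        return "in good shape"
    return "between thresholds"  # the audit does not label scores of 10 to 12


def gap(yours: PageCounts, competitor: PageCounts) -> int:
    """Positive when the competitor page outscores yours."""
    return audit_score(competitor) - audit_score(yours)
```

For example, a page with 7 citations, 1 quotation, and 3 sourced statistics scores 5 + 1 + 3 = 9 (citations are capped at 5), so it lands in the substantial-upside band.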

The 90-day rebuild plan

Three phases, built around the Princeton paper’s top tactics:

- Days 1 to 30: Add citations and statistics to your top 10 pages. These are the two highest-impact tactics from the paper, and they compound when used together.

- Days 31 to 60: Add expert quotations to those same pages. Build a citable expert section in your About page that lists your team’s credentials and prior work in named, citable form. This is where Princeton’s Quotation Addition tactic compounds with your team’s actual authority.

- Days 61 to 90: Re-test the pages in ChatGPT, Claude, Perplexity, and Gemini using your buyer-intent prompts. Track what gets cited and what does not. Iterate on the pages that still fail to surface.
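Tracking the re-test needs nothing more than a log of which prompts cited which pages. This Python sketch assumes you record each check by hand as a (page, engine, prompt, cited) row; it calls no engine API, and treating a zero citation rate as "still fails to surface" is our assumption.

```python
from __future__ import annotations

from collections import defaultdict


def citation_rates(results: list[tuple[str, str, str, bool]]) -> dict[str, float]:
    """Share of checks in which each page was cited, across engines and prompts."""
    tally = defaultdict(lambda: [0, 0])  # page -> [times cited, times checked]
    for page, _engine, _prompt, cited in results:
        tally[page][0] += int(cited)
        tally[page][1] += 1
    return {page: hits / checks for page, (hits, checks) in tally.items()}


def pages_to_iterate(rates: dict[str, float], threshold: float = 0.0) -> list[str]:
    """Pages whose citation rate is at or below the threshold."""
    return sorted(page for page, rate in rates.items() if rate <= threshold)
```

Raising `threshold` above zero turns the same log into a shortlist of pages that surface only occasionally.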

For a more detailed implementation guide, see our LLM SEO checklist and the broader LLM SEO guide covering the full optimization stack.

When to bring in outside help

If you have run the audit and know exactly which pages need work, the implementation is mostly content work. If you want a structured 21-day implementation that benchmarks your distinctiveness against competitors and produces a calibrated Bland Tax Score alongside the citation audit, the Bland Tax Diagnostic includes a Princeton-paper-style citation audit as part of its scope.

The follow-up research extending the Princeton GEO paper

Most readers will not know there is a body of follow-up academic work building on the Princeton GEO paper. Three studies are worth tracking.

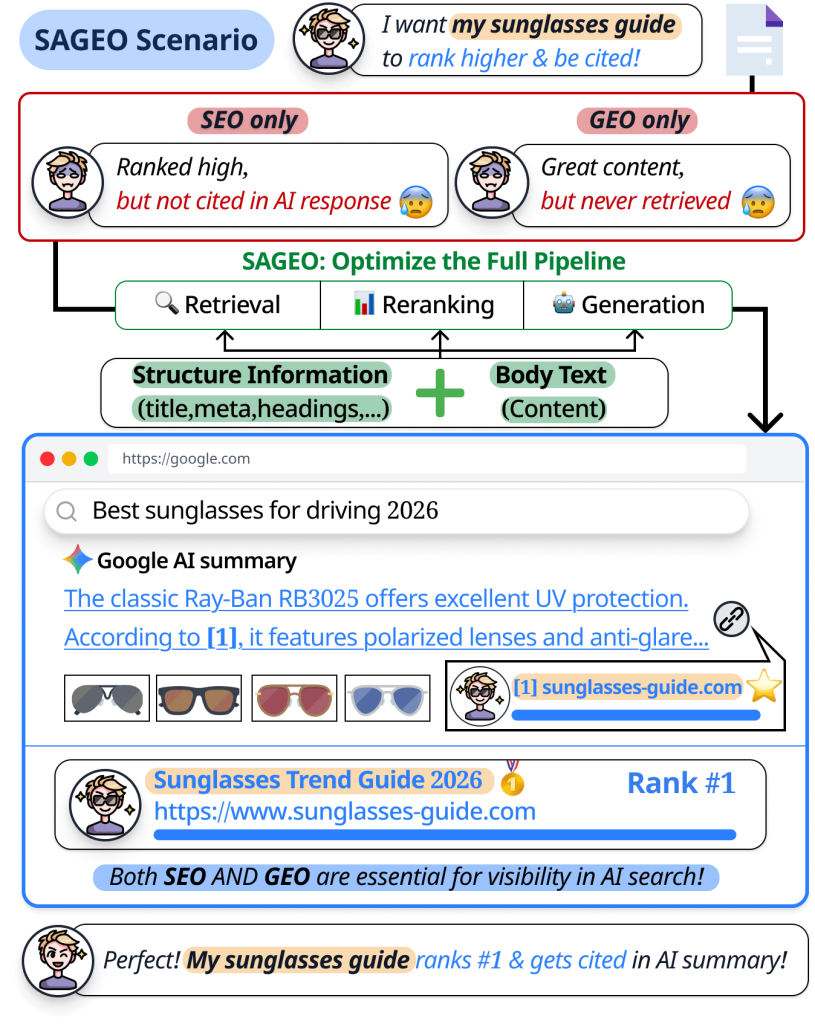

The Princeton SAGEO research, published in 2025, tested GEO tactics under more realistic retrieval pipelines that include reranking and noise. The finding was that simple tactics from the original paper still work, but their effects are smaller in production-grade retrieval systems.

The paper recommends tailoring optimization to each pipeline stage rather than treating GEO as a single content modification.

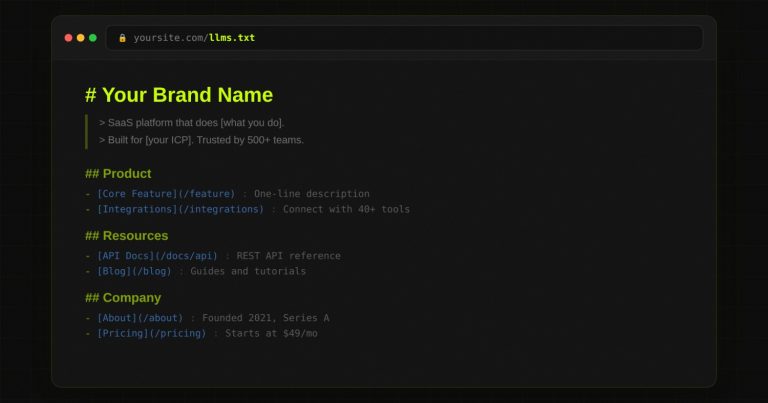

A second line of follow-up research examined how structural information in web documents (headings, schema, and semantic structure) interacts with GEO tactics. It found that structural information helps mitigate optimization failures, especially in retrieval-heavy pipelines. This is why basics like proper heading tag hierarchy and emerging protocols like llms.txt matter alongside the Princeton tactics. Structural clarity does not replace claim-level citation density, but it does multiply its effect.

Anderson et al. at ACM Creativity & Cognition (2024) documented LLM homogenization, which interacts with the Princeton GEO findings in a way that matters for practitioners.

You cannot just be authoritative and well-cited.

You must be distinctively authoritative and well-cited.

Generic content that follows every Princeton tactic will still lose to specific content that follows the same tactics, because the synthesis layer of generative engines collapses generic content into composite answers regardless of how well-cited it is. This is the mechanism behind the Bland Tax thesis.

Frequently Asked Questions

What is the Princeton GEO paper?

The Princeton GEO paper, formally titled “GEO: Generative Engine Optimization,” was published at KDD 2024 by Aggarwal et al. from Princeton, IIT Delhi, Georgia Tech, and the Allen Institute for AI. It is the first large-scale academic study of how to optimize content for AI citation, testing 9 different tactics across 10,000 queries on a system designed to mimic Bing Chat.

What are the 5 tactics that increased AI citation rates in the Princeton GEO paper?

The 5 tactics that boosted AI citation rates were Cite Sources, Quotation Addition, Statistics Addition, Fluency Optimization, and Authoritative Voice.

The top 3 each produced 30 to 40 percent improvements in citation visibility. Combining tactics produced the largest effects, particularly Statistics Addition paired with Fluency Optimization.

Did keyword stuffing improve AI citation rates in the Princeton GEO study?

No. The Princeton GEO paper found that keyword stuffing produced no benefit on GEO-bench and a slight degradation on Perplexity. This is the most important finding in the paper for traditional SEO professionals because it shows that keyword density tactics do not transfer to generative engines. Generative engines reward credibility signals such as citations, quotations, and statistics, not keyword frequency.

How much can GEO improve AI citation visibility according to the Princeton paper?

The Princeton GEO paper reports that effective GEO tactics can improve AI citation visibility by up to 40 percent on diverse queries. For lower-ranked websites, the effect is even larger: the Cite Sources method produced a 115% visibility increase for sites ranked fifth in Google search, demonstrating that GEO is structurally more powerful for smaller brands than traditional SEO ever was.

How do I apply the Princeton GEO paper to my B2B SaaS website?

Start with a 30-minute audit of your top 5 pages. Count the inline citations, expert quotations, and specific statistics with named sources. Score each page out of 15.

Add citations and statistics first because they have the greatest impact; then add expert quotations, and rewrite for fluency without removing distinctive vocabulary.

Re-test in ChatGPT, Claude, Perplexity, and Gemini during the final 30 days of the 90-day plan, then iterate on the pages that still fail to surface.

Who wrote the Princeton GEO paper?

The Princeton GEO paper was written by Pranjal Aggarwal (IIT Delhi), Vishvak Murahari (Princeton), Tanmay Rajpurohit (independent), Ashwin Kalyan (Allen Institute for AI), Karthik Narasimhan (Princeton), and Ameet Deshpande (Princeton).

It was published at the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining in August 2024 and is available on arXiv as paper 2311.09735.

What is GEO-bench?

GEO-bench is the benchmark dataset introduced by the Princeton GEO paper. It contains 10,000 queries across 8 domains, paired with the web sources a generative engine would draw from to answer each query. GEO-bench is the largest published benchmark of its kind and remains the standard evaluation set for academic research on AI citation.

The Princeton GEO paper proved distinctiveness drives citation. The industry ignored it.

The Princeton GEO paper said unique wording, specific statistics, named citations, and authoritative voice drive AI citation rates. It said keyword stuffing and content padding do not. It said this in August 2024.

18 months later, most GEO agencies are still selling more content, more schema, and more backlinks. The Princeton paper is the academic foundation of the entire GEO industry, and almost nobody has actually applied its findings.

If you have read this far, you now have. The 5 tactics work. The 4 failures matter. The buried finding about lower-ranked websites is your competitive opening. Apply for a Bland Tax Diagnostic if you want a structured 21-day implementation with benchmarking against your top 5 competitors.