
First-Party vs. Third-Party Citations in LLMs: What’s the Difference and Why It Matters

TL;DR

- LLM citations come in three types, not two: first-party citations from your owned domain, third-party citations from sites that mention you, and AI-internal citations where the model surfaces your brand from training memory without showing a link.

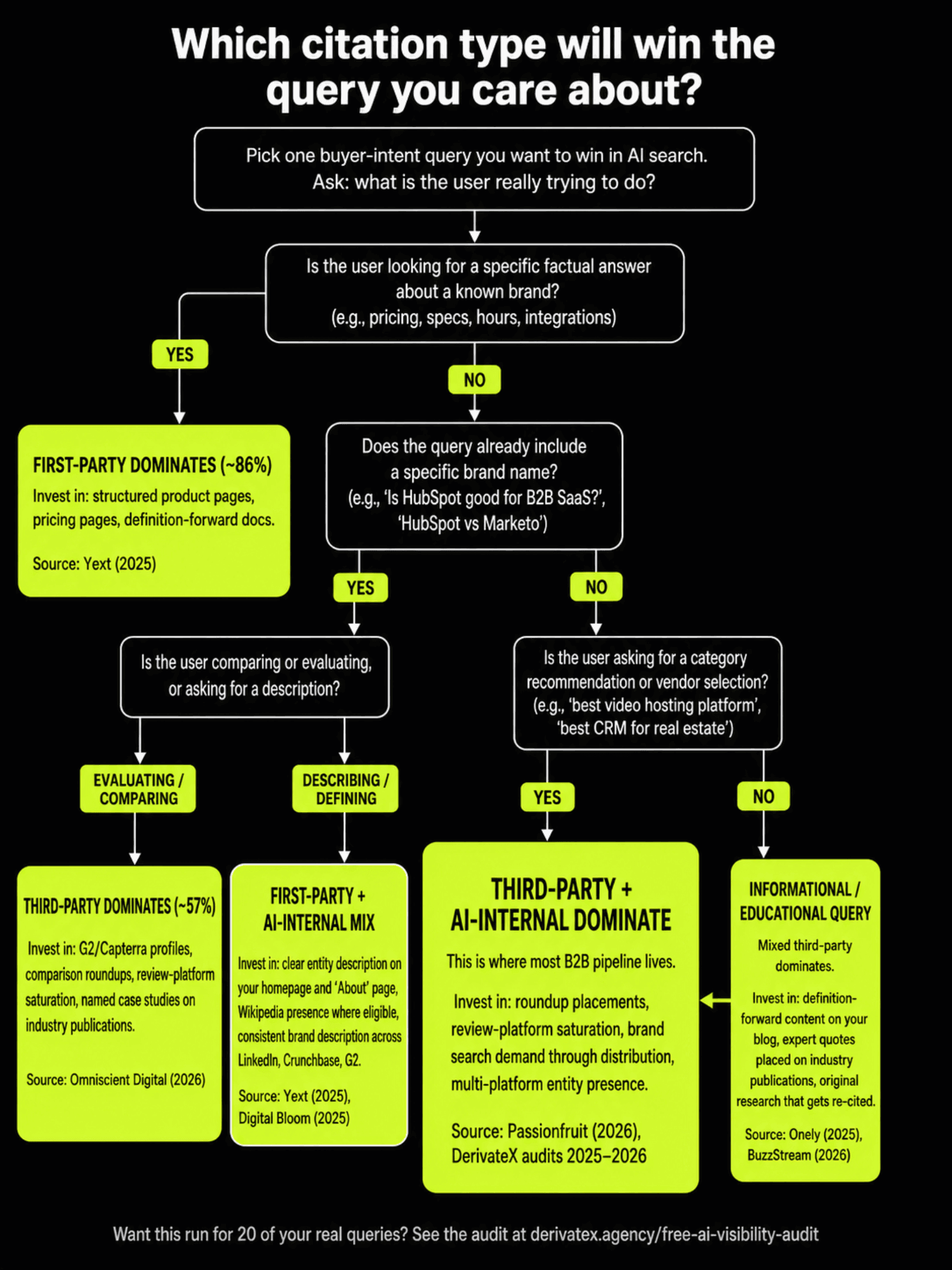

- Which type wins depends on the query. Yext’s analysis of 6.8 million AI citations found brand-controlled sources captured 86% of citations for objective queries like pricing and specifications, while Omniscient Digital’s analysis of 23,387 citations found third-party sources captured 57% of citations for branded evaluative queries.

- The two findings do not contradict each other. They describe different parts of the same surface, and most B2B pipeline-driving queries fall on the side where third-party citations dominate.

- Roughly half of all third-party citations are engineerable rather than earnable. Review profiles, comparison roundup placements, structured directories, and expert source databases are placeable assets with predictable cost per placement.

- AI-internal citations sit at the top of the leverage stack. They are the second-order outcome of consistent first-party and third-party investment over six to eighteen months, decided by entity strength rather than any single page.

You run your brand name through ChatGPT and watch five citations come back. Two are pages from your own site. Two are review platforms you have a free profile on. One is a Reddit thread from 2023 that you have never seen before.

You want to know which of these you can replicate, which you can engineer, and which you can only hope for the next time someone asks the model a similar question. Most existing writing on first-party vs third-party citations in LLMs treats this as a binary choice and picks a side.

One camp points to data showing brand-owned domains capture the majority of citations. Another camp points to data showing third-party sources capture the majority.

Both camps are reading correct numbers, but the strategic conclusion each one draws is wrong because both are answering the wrong question. This piece breaks down the three citation types LLMs actually use, maps which type wins for which query type, and separates the third-party citations you can engineer from the ones you can only earn.

By the end you will be able to look at your current content and PR allocation and say with specifics whether it is matched to the queries that drive your pipeline or pointed at the wrong layer of the citation surface.

The Three Citation Types LLMs Actually Use

LLM citations operate across three distinct surfaces, and conflating them is the single most common reason GEO budgets get misallocated. The first two are the ones every existing post talks about. The third is the one that decides recommendations when neither of the first two surfaces gets retrieved.

First-Party Citations Are Sources from Domains You Own

A first-party citation is any reference to a page on a domain you control. Your blog, your product pages, your documentation, your pricing page, and your help center all qualify. When ChatGPT or Perplexity pulls a passage from your own site and attributes it back with a clickable link, that is a first-party citation.

These citations dominate when the query has an objective, single-source answer. If a buyer asks an LLM what your pricing is, your pricing page is the canonical source. The model treats your owned content as the authoritative input and rarely overrides it with third-party material.

The Gumlet engagement we ran in 2025 illustrates this clearly. Pricing-related and feature-specific queries pulled directly from Gumlet’s own structured pages. The model had no reason to look elsewhere because the answer existed cleanly on the canonical source.

Third-Party Citations Are Sources from Domains You Don’t Own

A third-party citation is any reference to your brand or product on a domain you do not control. Editorial coverage, review platforms, community discussions, analyst write-ups, and competitor comparison pages all fall into this category.

Third-party citations split into four functional sub-types that behave differently in retrieval:

- Editorial coverage: Forbes, TechRadar, Tom’s Guide, and category-defining publications.

- Review platforms: G2, Capterra, Trustpilot, and TrustRadius.

- Community discussions: Reddit, Quora, Slack archives, and niche forums.

- Comparison content: third-party listicles, alternative pages, and head-to-head reviews.

Each sub-type carries a different trust weight in the model. A Reddit thread reads as raw user voice, a G2 profile reads as structured validation, and a Forbes mention reads as editorial endorsement.
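If you log citations by hand, the four sub-types above can be applied mechanically by domain. The sketch below is a minimal illustration, assuming a hypothetical domain list built only from the examples named in this section; `citation_subtype` and `SUBTYPE_DOMAINS` are illustrative names, not part of any tool, and anything the list does not recognize is left for manual review.

```python
from urllib.parse import urlparse

# Hypothetical domain lists drawn from the examples named above; a real audit
# would need a much longer, category-specific mapping.
SUBTYPE_DOMAINS = {
    "editorial": {"forbes.com", "techradar.com", "tomsguide.com"},
    "review_platform": {"g2.com", "capterra.com", "trustpilot.com", "trustradius.com"},
    "community": {"reddit.com", "quora.com"},
}


def citation_subtype(url: str, owned_domains: set[str]) -> str:
    """Classify a cited URL as first-party or one of the third-party sub-types."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]

    def matches(domains: set[str]) -> bool:
        # Subdomains (docs.example.com, old.reddit.com) count as the parent domain.
        return any(host == d or host.endswith("." + d) for d in domains)

    if matches(owned_domains):
        return "first_party"
    for subtype, domains in SUBTYPE_DOMAINS.items():
        if matches(domains):
            return subtype
    # Unrecognized domains are usually listicles, alternative pages, or
    # head-to-head reviews, but should be checked by hand.
    return "comparison_or_unclassified"
```

Matching on domain rather than full URL keeps the list short; the trade-off is that a listicle hosted on an editorial domain is counted as editorial.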

The Verito engagement we ran in 2025 moved Verito from page-four invisibility to AI’s default recommendation for legal IT by saturating all four sub-types simultaneously, not just one.

AI-Internal Citations Are When the Model Surfaces Your Brand Without a URL

An AI-internal citation happens when an LLM recommends or describes your brand from its training memory and shows no clickable source. The model has learned about you well enough during pre-training or fine-tuning that it does not need to search the web to talk about you.

This is not a failure mode. It is the highest-leverage form of presence. Research from a 2025 attribution study found that around 30% of LLM answers across major models include zero clickable citations, with Gemini providing no citations on roughly 92% of queries and GPT-4o skipping web search on roughly 24% of queries even when it was technically available.

For all of those answers, the only thing that decides whether your brand is mentioned is whether the model already knows you. Most existing GEO writing ignores this category because it cannot be tracked through a referrer URL. That makes it inconvenient, not unimportant.

For a meaningful share of buyer-intent queries, AI-internal is the only citation type that exists.

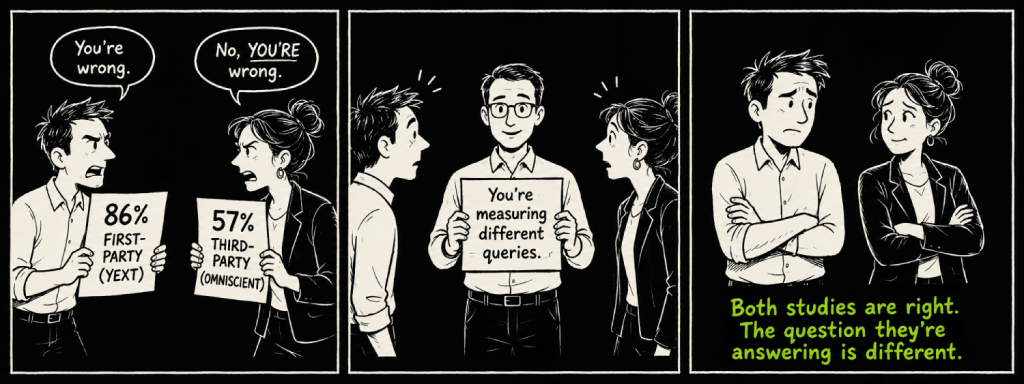

Why Two Major Studies Look Like They Contradict Each Other

The cleanest way to understand the citation surface is to sit the two most-cited studies in the space side by side and explain why both of them are correct. Yext analyzed 6.8 million AI citations and found brand-controlled sources captured 86% of them. Omniscient Digital analyzed 23,387 citations and found third-party sources captured 57% of branded query citations.

The numbers do not conflict. The studies measured different parts of the same surface.

Yext’s 86% Is True for Objective Queries

The Yext sample weighted heavily toward queries with single-source factual answers. Pricing, hours of operation, specifications, addresses, integrations, supported file formats, and similar fact-retrieval queries dominate the dataset.

For these queries the model has no reason to triangulate across sources because the brand’s own page is the canonical answer. A pricing page is more reliable on its own pricing than any third party could be. A product specification page is the source of truth for that product.

The 86% figure is what happens when most queries in the sample have a single authoritative source.

Omniscient’s 57% Is True for Branded Evaluative Queries

The Omniscient sample looked at branded prompts where users were running evaluation, comparison, or recommendation queries. “Is X a good choice for Y?” “What do customers say about X?” “Is X better than Z?” These are decision queries, not fact queries.

For these prompts the model treats the brand’s own content as biased input by default. It pulls reviews, listicles, forums, case studies, and comparison content because those sources are perceived as independent. The 57% figure is what happens when the sample is weighted toward queries where independence matters.

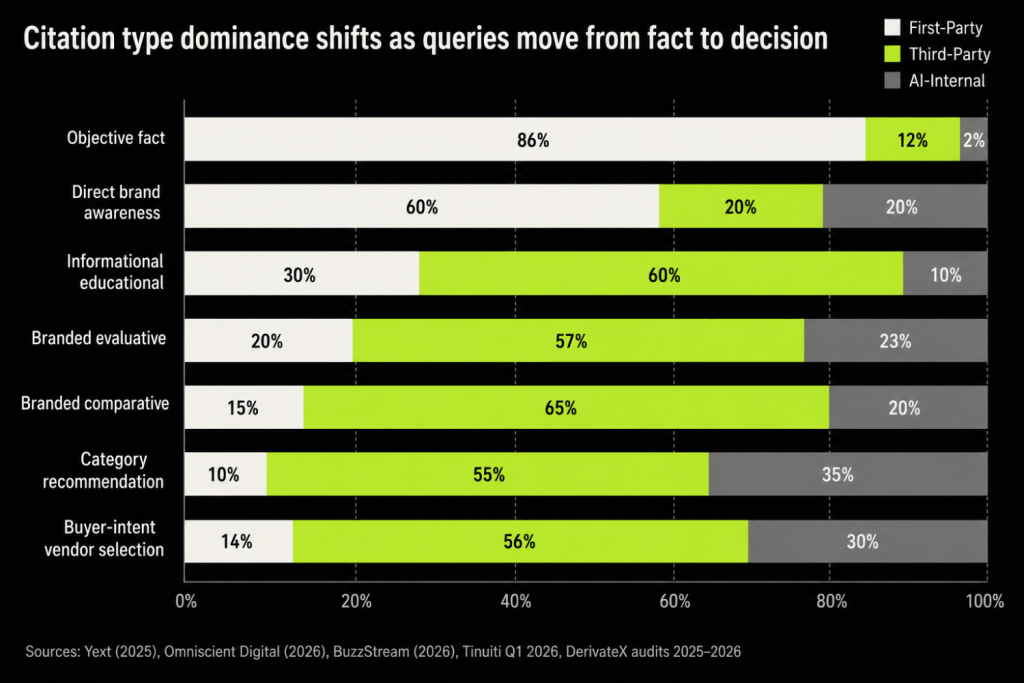

The Resolution Is That Citation Type Dominance Is a Function of Query Type

Both studies are accurate. The strategic mistake is treating either of their headline numbers as a universal law about LLM citation behavior. The actual operating principle is that the dominant citation type for any query is decided by what kind of query it is.

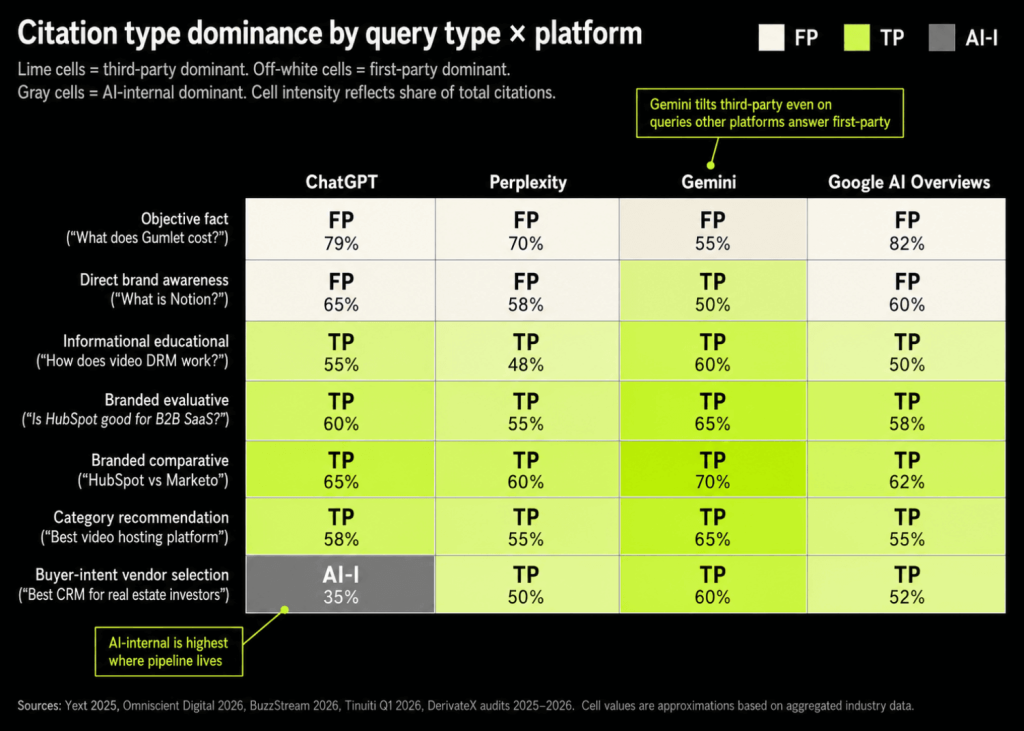

A 2026 BuzzStream analysis of 4 million citations across 4,000 prompts adds a third data point that fits the same pattern. For branded prompts, ChatGPT pulled 68.9% of citations from owned content, the highest first-party share of any major surface. Platforms tilt differently, but within any single platform, query type still shifts the citation mix more than the choice of platform does.

Which Citation Type Wins for Which Query Type

Once you accept that query type drives citation type, the next step is mapping the relationship explicitly. The table below pulls together the dominant citation type for each major B2B query category, drawn from the Yext, Omniscient, BuzzStream, and Tinuiti datasets along with what we have seen across audits.

| Query Type | Example | Dominant Citation Type | Source Evidence |
|---|---|---|---|
| Objective fact | “What does Gumlet cost?” | First-party | Yext (86% brand-controlled) |
| Direct brand awareness | “What is Notion?” | First-party + AI-internal | Yext, Digital Bloom 2025 |
| Informational educational | “How does video DRM work?” | Mixed third-party | Onely, BuzzStream |
| Branded evaluative | “Is HubSpot good for B2B SaaS?” | Third-party (57%) | Omniscient Digital |
| Branded comparative | “HubSpot vs Marketo” | Third-party | Omniscient, Quattr |
| Category recommendation | “Best video hosting platform” | Third-party + AI-internal | Passionfruit 2026, Quattr |
| Buyer-intent vendor selection | “Best CRM for real estate investors” | Third-party + AI-internal | Audits we have run across 2025 to 2026 |

This mapping holds whether the query is run in ChatGPT, Perplexity, Gemini, or AI Overviews, with platform tilt changing the magnitudes but not the order.
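To sort your own query list into these categories before an audit, a rough keyword heuristic gets you most of the way. The sketch below is an assumption-laden illustration, not how any of the cited studies classified queries: the keyword rules, `classify_query`, and `DOMINANT_TYPE` are hypothetical, and buyer-intent vendor selection is folded into category recommendation because both share the same dominant citation type in the table.

```python
import re

# Dominant citation type per query category, transcribed from the table above.
DOMINANT_TYPE = {
    "objective_fact": "first-party",
    "brand_awareness": "first-party + AI-internal",
    "informational": "mixed third-party",
    "branded_evaluative": "third-party",
    "branded_comparative": "third-party",
    "category_recommendation": "third-party + AI-internal",
}


def classify_query(query: str, brands: set[str]) -> str:
    """Assign a query to a category with illustrative keyword rules."""
    q = query.lower()
    branded = any(b.lower() in q for b in brands)
    if re.search(r"\bvs\.?\b|\bversus\b", q):
        return "branded_comparative"
    if q.startswith("best ") or " best " in q:
        # Covers both category recommendation and buyer-intent vendor selection.
        return "category_recommendation"
    if branded and re.search(r"\b(cost|price|pricing|integrat\w*|spec\w*)\b", q):
        return "objective_fact"
    if branded and re.search(r"\b(good|worth|better|reviews?|say)\b", q):
        return "branded_evaluative"
    if branded and q.startswith("what is"):
        return "brand_awareness"
    return "informational"
```

Usage: `DOMINANT_TYPE[classify_query("HubSpot vs Marketo", {"HubSpot", "Marketo"})]` returns `"third-party"`. Expect to hand-correct edge cases; the rules only need to be good enough to group queries for the audit.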

For Most B2B Pipeline Queries, Third-Party Citations Win

The queries closest to a purchase decision are the queries third-party citations dominate. B2B buyers do not run “what is Salesforce” when they are evaluating a CRM. They run “best CRM for [use case]” and “Salesforce vs HubSpot for [scenario]” and “is Salesforce worth it for [company size].” Those are exactly the query shapes Omniscient measured, where third-party content captured 57% and thought leadership on owned domains captured only 5.4%.

Quattr published a working illustration of this in early 2026. When their team ran “Quattr” through Google AI Mode, eight of the ten cited sources were third-party, including review platforms, editorial coverage, comparison pages, and community threads. Their own domain was one input among many, not the primary one.

This is also what we see across audits at DerivateX. For the buyer-intent queries that produce demos, third-party citations consistently outweigh first-party citations by a 4-to-1 ratio or higher. The ratio inverts only when the query already includes the brand name and asks a fact about it.

First-Party Citations Still Matter, but Mostly for Defending Your Brand Name

First-party content is necessary for visibility but insufficient for recommendation. Your owned pages are what win branded fact queries, what supply the structured chunks LLMs need during retrieval, and what defend your position when a competitor’s comparison page tries to define you. None of that is optional.

What first-party content cannot do alone is win unbranded category queries. The model does not know to look at your site for those queries because it does not yet know your brand belongs in the consideration set. That problem is solved in the third-party and AI-internal layers, not on your blog.

This is the cleanest break with how most teams thought about content strategy in 2022 and 2023. The pre-LLM playbook said “rank your owned pages and the rest takes care of itself.” The 2026 playbook says owned pages are the floor, not the ceiling, and most of the recommendation surface lives off your domain.

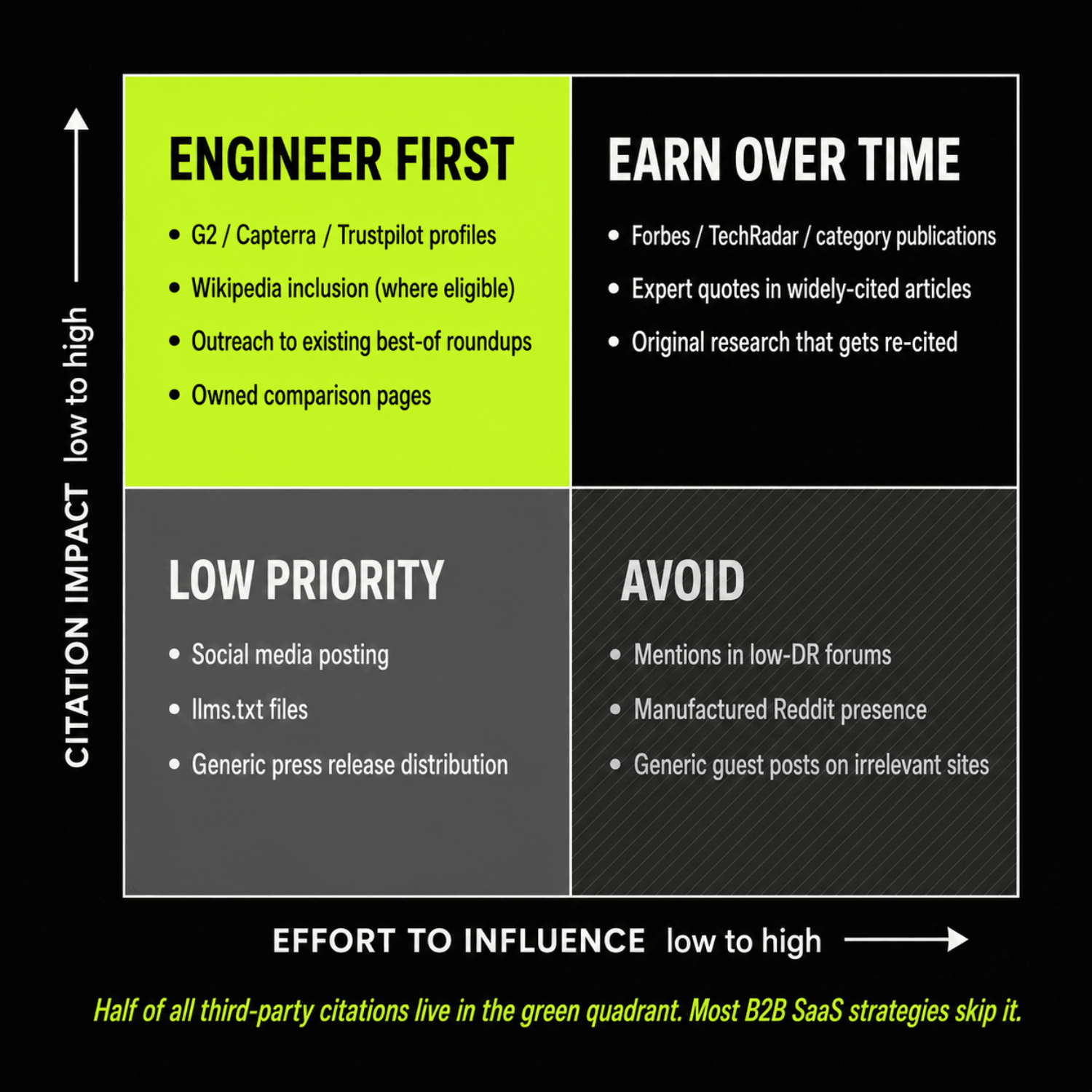

The Citation Control Spectrum: What You Can Engineer vs. What You Can Only Earn

The second strategic mistake after misreading the studies is treating the third-party layer as one bucket of “earned media” you have to wait for. About half of all third-party citations are engineerable. The other half are earnable in the sense that you can prepare for them but not directly place them.

Sorting between the two is what separates a deliberate GEO operation from a hopeful one. The 2×2 below maps the major citation tactics by effort to influence and citation impact.

| | Low effort to influence | High effort to influence |
|---|---|---|
| High citation impact | G2, Capterra, Trustpilot profiles. Wikipedia inclusion where eligible. Outreach to existing best-of roundups. Owned comparison pages. | Forbes, TechRadar, and category-defining publication placements. Expert quotes in widely-cited articles. Original research that gets re-cited. |
| Low citation impact | Social media posting. llms.txt files. Generic press release distribution. | Mentions in low-DR forums. Manufactured Reddit presence. Generic guest posting on irrelevant sites. |

The bottom-left quadrant answers a question that comes up often enough to address directly. llms.txt sits in the low-effort, low-impact cell because, as of mid-2026, no major LLM crawler has confirmed relying on the file for retrieval decisions.

The Engineerable Half of Third-Party Citations

The high-impact, lower-effort quadrant is where most B2B SaaS brands are leaving the largest amount of LLM visibility on the table. Review profiles on G2, Capterra, Trustpilot, and category-specific directories are claimable assets, not lottery tickets. Research summarized by Passionfruit in early 2026 found that brands with profiles on G2, Capterra, Trustpilot, or Yelp showed roughly three times higher citation probability than brands without them.
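The “roughly three times higher citation probability” figure is a ratio of two proportions. If you want to check the same effect on your own audit data, a minimal sketch follows. The function name is hypothetical, the counts in the usage line are made up for illustration, and a raw ratio like this does not control for confounders such as brands with review profiles also being larger or better known.

```python
def citation_lift(cited_with: int, total_with: int,
                  cited_without: int, total_without: int) -> float:
    """Citation probability with a profile divided by citation probability without.

    Arguments are counts from your own audit: brands cited at least once
    in each group, and total brands checked in each group.
    """
    if total_with <= 0 or total_without <= 0:
        raise ValueError("both groups need at least one brand")
    if cited_without == 0:
        raise ValueError("lift is undefined when no brand without a profile was cited")
    p_with = cited_with / total_with
    p_without = cited_without / total_without
    return p_with / p_without


# Hypothetical counts: 18 of 30 brands with profiles cited, 6 of 30 without.
print(round(citation_lift(18, 30, 6, 30), 2))  # 3.0
```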

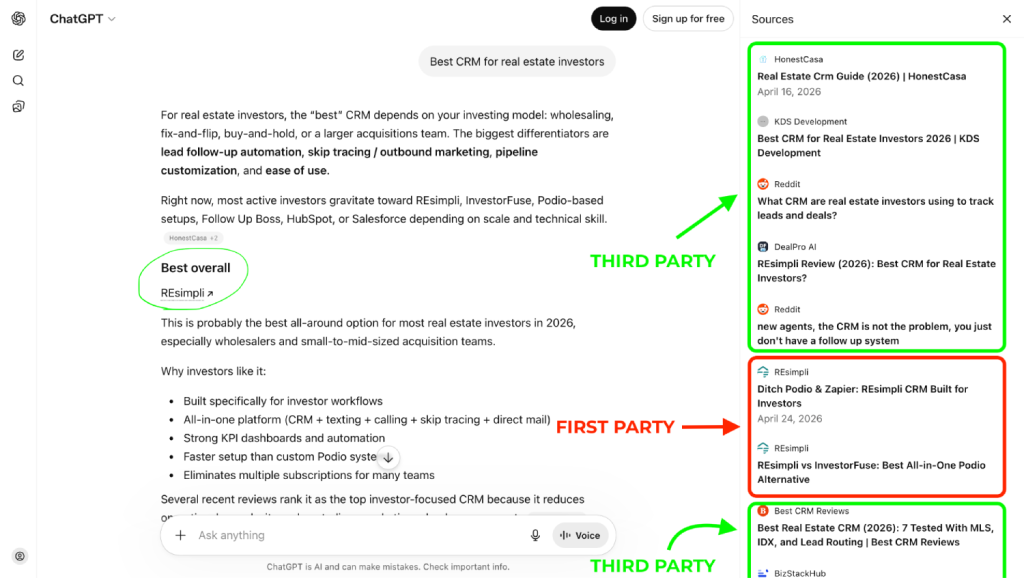

A G2 profile, a Capterra listing, and inclusion in three category roundup pages will move your AI citation surface more than ten new blog posts. The REsimpli engagement we ran is a clean illustration. Becoming the default ChatGPT citation for “real estate CRM for investors” required three coordinated motions: canonical comparison pages on REsimpli’s own domain, structured profiles across the major review platforms in their category, and placements on real estate investing publications already getting cited in the buyer-intent queries.

Outreach to existing roundup pages is the most underused move in the set. Every category has 5 to 15 “best X for Y” listicles already ranking and already getting cited by LLMs during retrieval. Most are happy to add a vendor with a clean pitch and a credible product because adding entries makes their content more comprehensive.

The placement cost is hours of outreach per inclusion, not dollars. This is the Citation Engineering thesis applied to the third-party layer specifically.

The Earnable Half and Why You Still Need It

The high-impact, high-effort quadrant covers the citations you cannot directly buy or place. Editorial coverage on Forbes or TechRadar, expert quote pickups in widely-cited articles, original research that other people cite, and organic Reddit virality all sit here. You can run motions that increase the probability of these happening, but you cannot guarantee any specific placement.

These earned citations carry higher trust weight in the model than engineered surfaces, because LLMs have observed which surfaces are placeable and which are editorial. A Forbes feature is treated as a more independent signal than a G2 profile, which in turn is treated as more independent than a vendor’s own blog post. The hierarchy matters when the model is deciding which sources to weigh most heavily for an evaluative query.

The Kroto engagement, which took impressions from 3,500 to 326,000 without any paid link building, leaned almost entirely on the engineerable half. That worked because Kroto’s category had enough placeable third-party surface to build authority before the earned layer matured. Most B2B SaaS categories are similar.

AI-Internal Citations Are the Compounding Layer

AI-internal citations sit downstream of consistent presence on both first-party and third-party surfaces. They are the slowest layer to build and the highest-leverage one to own, because they decide what the model says about your category when no link is shown at all.

How Brands End Up in the Model’s Memory

Based on the published correlations, three signals dominate whether a brand enters model memory. Brand search volume showed the strongest single correlation with citation likelihood in the 2025 Digital Bloom report, at 0.334, higher than any technical signal measured. Multi-platform entity presence was the next strongest factor: brands appearing on four or more platforms showed roughly 2.8x higher citation probability in ChatGPT responses.

Entity consistency across the major knowledge graphs and reference sites came in close behind. The Gumlet engagement is the cleanest internal example we can point to. In early 2025, Gumlet was strong on traditional SEO signals but had almost no weight in LLM training data for the private video hosting category.

By late 2025, after coordinated work on first-party clarity and saturation across review platforms, comparison roundups, and adjacent SaaS publications, Gumlet had become a default ChatGPT recommendation in its category. Roughly 20% of new inbound revenue was attributable to AI search discovery.

“We were getting demos where the prospect said ‘I found you on ChatGPT’ and we had no playbook for reproducing that. The work DerivateX ran turned that from accident into pipeline.”

— Divyesh Patel, Gumlet

AI-internal citations are not a tactic. They are the second-order outcome of consistent first-party and third-party investment over six to eighteen months.

Why This Type Cannot Be Engineered Directly

You can engineer the inputs that produce AI-internal citations. You cannot engineer the model’s training data directly. The distinction matters because most “AI SEO” pitches collapse the two and promise outcomes that the engineering layer cannot actually produce on its own.

The deliberate inputs are first-party clarity, third-party saturation across the four sub-types, entity consistency across knowledge graphs, and brand search demand built through distribution. The accidental component is timing, which is a function of when the next major model update incorporates the signals you have built.

As of mid-2026, training-cycle latency for the major foundation models has compressed to roughly six to nine months between data and deployment, which means the work compounds faster than it did even a year ago.

Two operating frameworks make this layer measurable. The AI Visibility Score tracks the cumulative outcome across all three citation types. The Citation Surface Map decomposes the score into the specific surfaces driving or capping it.

Co-Citation Overlap Between LLMs and Traditional Search Is Real, but Smaller Than Most Strategies Assume

Can I measure co-citation overlap between LLMs and traditional search? The answer is yes, and the overlap percentage is one of the most useful diagnostics you can run on your own visibility. The number is rarely as high as practitioners assume.

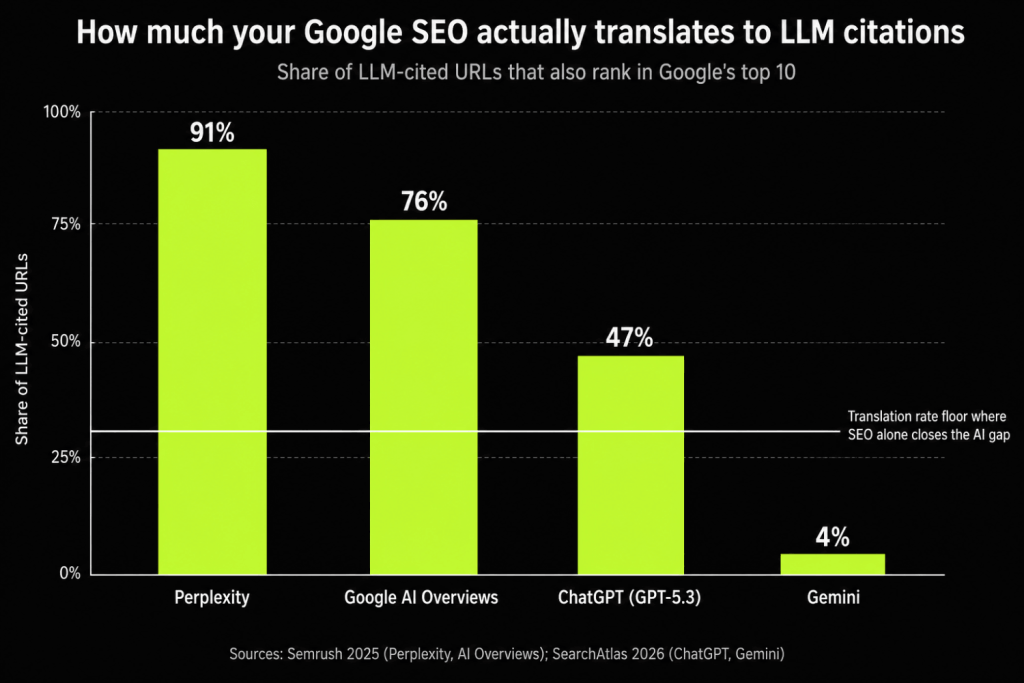

Perplexity Has the Highest Overlap with Google

A 2025 Semrush study comparing Google’s top 10 results against LLM citation sets found Perplexity citing Google’s top 10 sources roughly 91% of the time. Perplexity’s architecture runs an active web search on every query and weights traditional ranking signals heavily during retrieval. If you rank well on Google, you are highly likely to be cited by Perplexity for the same query.

ChatGPT and Gemini Diverge Sharply

The same Semrush analysis and a follow-up SearchAtlas study showed ChatGPT and Gemini citation sets sharing far less ground with Google’s top results. Gemini shared roughly 4% of cited domains with Google’s top 10 in one cut of the data. ChatGPT relied heavily on Bing-flavored retrieval combined with parametric memory, producing partial overlap at the domain level and lower overlap at the URL level.

The implication is that a strong Google ranking is necessary for Perplexity visibility, contributory for ChatGPT, and largely uncorrelated with Gemini. To what extent do traditional SEO improvements translate into stronger LLM citations? The answer is platform-specific, and the unweighted average across the major surfaces sits well below where most teams assume.

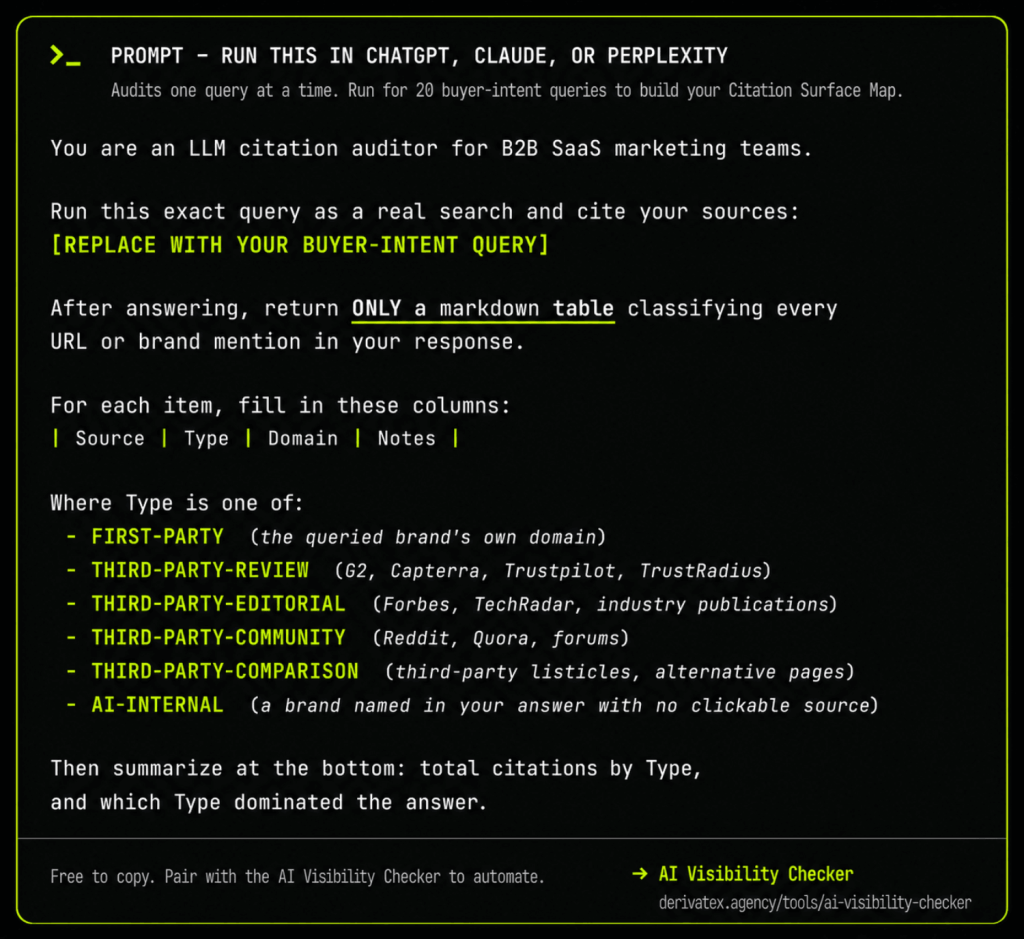

How to Measure Co-Citation Overlap for Your Own Brand

Pull your top 20 buyer-intent queries. Run each of them in ChatGPT, Perplexity, Gemini, and Google AI Overviews with web search enabled. Log every cited URL.

Cross-reference each URL against your Google ranking position for the same query. The overlap percentage you get is your SEO-to-LLM translation rate. The AI Visibility Checker automates the citation pull across the four major surfaces if you want to skip the manual logging.
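Once the citations are logged, the calculation is a simple set comparison. The sketch below assumes you have stored each platform’s cited URLs per query and Google’s top 10 URLs per query; the data layout, the URL normalization rule, and the use of the top 10 as the cutoff are assumptions for illustration, and `translation_rates` is a hypothetical helper, not part of the AI Visibility Checker.

```python
from urllib.parse import urlparse


def normalize(url: str) -> str:
    """Reduce a URL to host + path so http/https, www, and trailing slashes match."""
    parts = urlparse(url)
    host = (parts.hostname or "").lower().removeprefix("www.")
    return host + parts.path.rstrip("/")


def translation_rates(llm_citations: dict, google_top10: dict) -> dict:
    """Share of LLM-cited URLs that also rank in Google's top 10, per platform.

    llm_citations: {platform: {query: [cited_url, ...]}}
    google_top10:  {query: [ranked_url, ...]} in rank order
    """
    rates = {}
    for platform, by_query in llm_citations.items():
        cited = overlap = 0
        for query, urls in by_query.items():
            top10 = {normalize(u) for u in google_top10.get(query, [])[:10]}
            for url in urls:
                cited += 1
                if normalize(url) in top10:
                    overlap += 1
        # Platforms with no logged citations get 0.0 rather than a division error.
        rates[platform] = overlap / cited if cited else 0.0
    return rates
```

This measures URL-level overlap. Comparing on hostnames instead of host + path gives the looser domain-level figure, which is typically higher for ChatGPT.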

Most B2B brands measure their SEO-to-LLM translation rate at 15% to 25%, which means at least three out of four LLM citation opportunities are being decided outside the search ranking layer. If your translation rate is below 30%, traditional SEO alone will not close your AI visibility gap, regardless of how much you spend on content or links.

How to Allocate Effort Across the Three Citation Types

The three-type model and the engineerable-vs-earnable map become useful only when they translate into a budget allocation question. The audit motion below is the one we run with new clients and the one most B2B SaaS brands can run on themselves before any spend decision.

Audit Where You Currently Stand on All Three Types

Take 20 buyer-intent queries from your category. Run each one across ChatGPT, Perplexity, Gemini, and Google AI Overviews. For every citation that appears, classify it as first-party, third-party, or AI-internal, and note whether you or a competitor is named.

Compute your share of voice across the three layers. The result is your current Citation Surface Map. It reveals the actual distribution of where you are winning, where competitors are winning, and where neither of you is yet present.
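The classification and share-of-voice step can be scripted once answers are logged. The sketch below rests on a simplifying attribution rule that is our assumption for illustration: a brand named in an answer counts as first-party if any cited URL sits on its own domain, third-party if the answer cites only other domains, and AI-internal if the answer cites nothing. `Answer`, `citation_surface_map`, and the `competitorx.example` domain are hypothetical names.

```python
from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Answer:
    query: str
    platform: str
    brands_named: list[str]  # brands mentioned in the answer text
    cited_urls: list[str] = field(default_factory=list)


def host_of(url: str) -> str:
    return (urlparse(url).hostname or "").lower().removeprefix("www.")


def citation_surface_map(answers: list[Answer], brand_domains: dict) -> dict:
    """Per-layer share of voice: {layer: {brand: share of that layer's mentions}}."""
    counts = {layer: Counter() for layer in ("first_party", "third_party", "ai_internal")}
    for ans in answers:
        hosts = {host_of(u) for u in ans.cited_urls}
        for brand in ans.brands_named:
            domain = brand_domains.get(brand, "")
            if not hosts:
                layer = "ai_internal"  # named with no clickable source at all
            elif domain and any(h == domain or h.endswith("." + domain) for h in hosts):
                layer = "first_party"
            else:
                layer = "third_party"
            counts[layer][brand] += 1
    return {
        layer: {brand: n / sum(c.values()) for brand, n in c.items()}
        for layer, c in counts.items()
    }
```

A brand named alongside a mix of owned and third-party links is counted once, as first-party; splitting credit across layers is a reasonable alternative if your answers routinely cite both.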

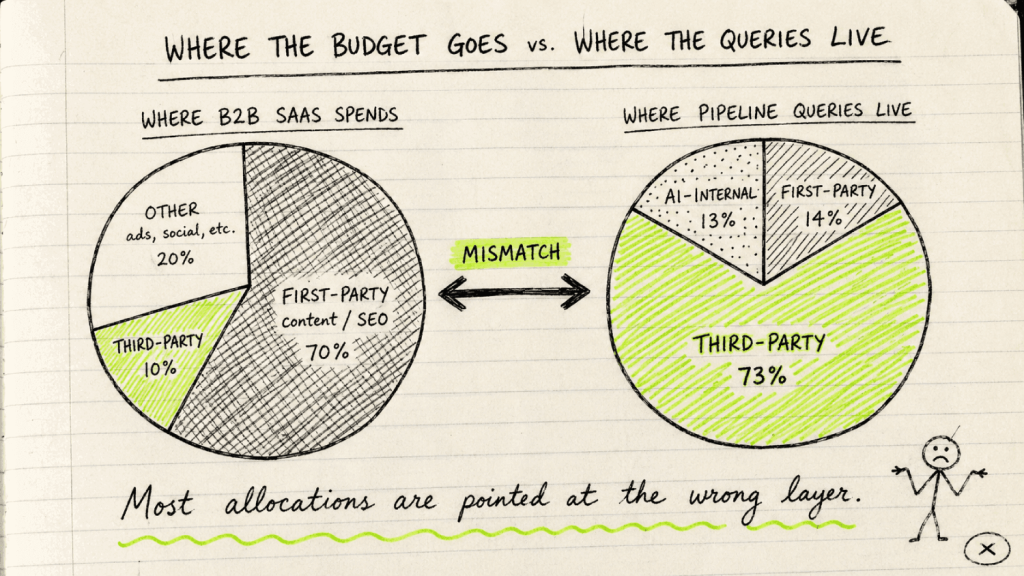

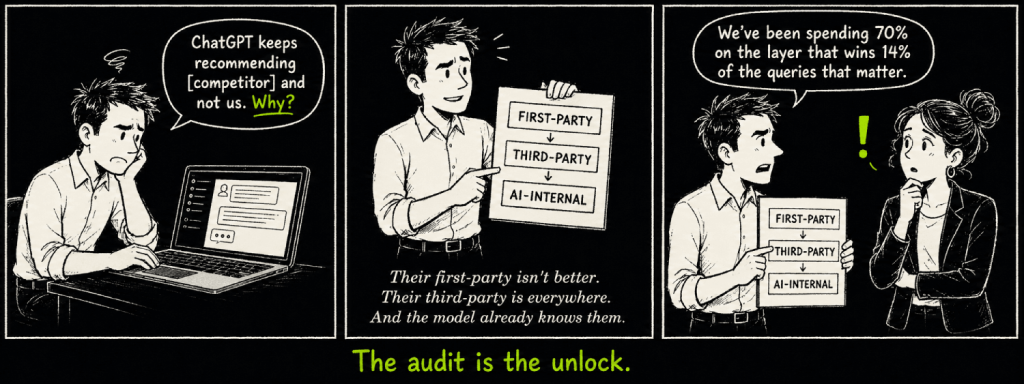

For Most B2B SaaS Brands, the Misallocation Looks the Same

The most common B2B SaaS allocation puts roughly 70% of effort into the citation type that wins 14% of pipeline-driving queries. Across audits we have run in 2025 and 2026, the typical pattern is heavy first-party investment through blog content and SEO, almost no deliberate work on engineered third-party placements, and zero coordinated motion on entity consistency or brand search demand.

A more matched allocation looks closer to 45% first-party, 55% third-party, with entity reinforcement compounding inside both. The exact split depends on category maturity, current AI-internal presence, and how branded versus unbranded the buyer journey is.

The shift from a 70-10-0 allocation (first-party, third-party, entity) to a 45-55 split with active entity work is what most teams need to make in the next 12 months to stay in the consideration set as more buyers move discovery into LLMs.

Sequence Matters: First-Party First, Then Third-Party, Then Entity Saturation

You cannot earn third-party placements without owned content credible enough to cite. You cannot build entity strength without third-party validation feeding the model’s training data. The order is fixed even if the proportion is not.

The sequencing rule we apply at DerivateX is direct. Get first-party clean enough to be quotable in three months. Saturate engineered third-party in months three through nine.

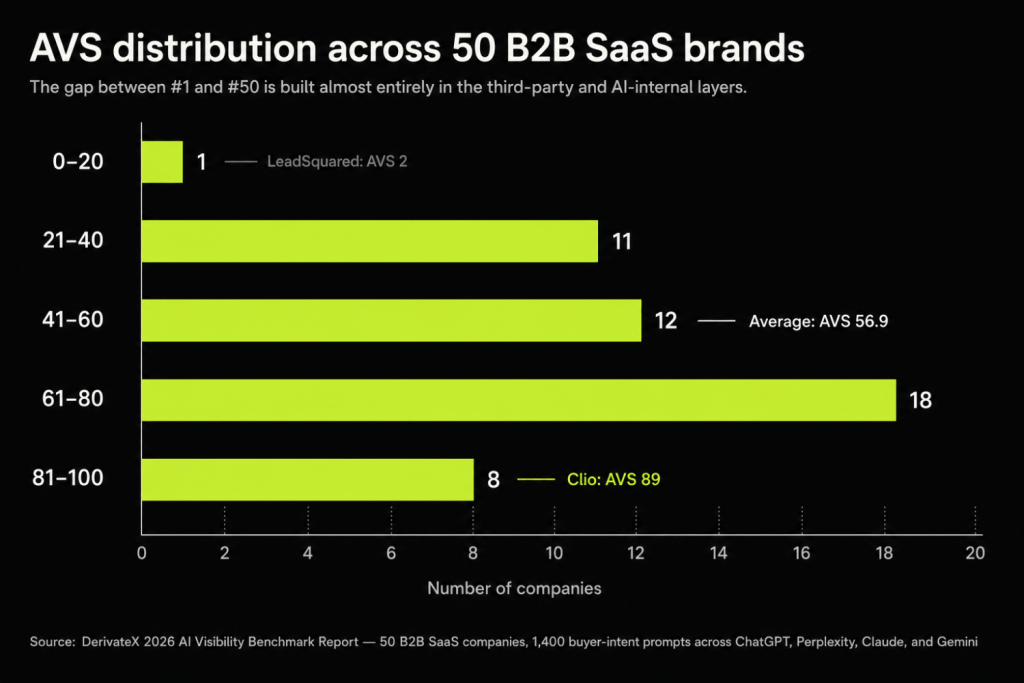

Compound entity reinforcement in parallel from month six onward. The brands we see winning AI search are the ones that respect this sequence rather than trying to compress it. The full benchmarks from our 2026 AI Visibility Report show how this sequence maps to scoring outcomes across 50 B2B SaaS companies.

FAQ

1. What’s the difference between first-party and third-party citations in LLMs?

First-party citations come from domains you own, like your blog, product pages, documentation, or pricing page. Third-party citations come from domains you do not own, including review platforms like G2 and Capterra, editorial publications like Forbes and TechRadar, comparison roundups, and community discussions on Reddit or Quora. A third type, AI-internal citations, occurs when the model recommends your brand from its training memory without showing any clickable source.

The strategic difference is which type wins which query: first-party dominates objective fact queries, third-party dominates branded evaluative queries, and AI-internal decides recommendations when neither of the first two surfaces is retrieved.

Citation strategy in 2026 is a function of query type, not domain ownership.

2. What kind of content earns third-party citations in LLMs?

Omniscient Digital’s analysis of 23,387 branded query citations shows citations clustering in four content formats.

- Reviews, listicles, forums, and case studies captured roughly 57% of branded query citations.

- Directory and review platform pages captured around 17%.

- Brand product pages on third-party sites captured around 12%.

- Thought leadership and editorial commentary captured around 5.4%.

The pattern tells you where to invest. For LLM visibility on evaluative queries, comparison roundups, structured review platform profiles, and named case studies on third-party publications produce the highest citation density per dollar of GEO spend.

3. Can I measure co-citation overlap between LLMs and traditional search?

Yes, and the calculation is direct. Take 20 buyer-intent queries, run each in ChatGPT, Perplexity, Gemini, and Google AI Overviews, log every cited URL, and compare those URLs against your Google ranking positions for the same queries.

The overlap percentage by platform tells you where SEO is doing useful work for AI visibility and where it is not. Perplexity tracks Google’s top 10 closely, around 91% per a 2025 Semrush study. ChatGPT and Gemini track Google far more loosely, with Gemini sharing roughly 4% of cited domains with Google’s top 10 in one published analysis.

Your SEO-to-LLM translation rate is the diagnostic that tells you whether to keep investing in rankings or pivot to other layers.

4. To what extent do traditional SEO improvements translate into stronger LLM citations?

Traditional SEO improvements translate strongly into Perplexity citations, partially into ChatGPT and AI Overviews citations, and weakly into Gemini citations. Across audits we have run in 2026, the unweighted SEO-to-LLM translation rate for B2B SaaS sits between 15% and 25%. SEO is necessary infrastructure for AI visibility because rankings feed the retrieval layer that LLMs use, but it is not sufficient on its own.

The signals AI search adds beyond search rankings include brand search demand, multi-platform entity presence, and structural parseability of content. Optimizing only for traditional rankings captures roughly half of AI visibility and leaves the rest to chance.

5. Why does my competitor show up in ChatGPT and not us?

Three causes account for almost every case of this pattern. The first is entity strength: your competitor has stronger AI-internal presence because their brand has been mentioned more consistently across the surfaces LLMs train on, and the model recommends them by default.

The second is third-party saturation: they appear on more review platforms, in more comparison roundups, and in more category-defining listicles than you do, so retrieval pulls them in for evaluative queries.

The third is content extractability: their owned pages may be more structured, more definition-forward, and easier for retrieval pipelines to parse. The fastest diagnostic is to ask ChatGPT directly what it knows about each of you and compare the answers side by side.

6. Can I get cited by ChatGPT without backlinks?

Yes. Backlinks show weak to neutral correlation with LLM citation likelihood across the published research. AI crawlers like GPTBot and ClaudeBot do not crawl link graphs the way Googlebot does.

The signals that produce ChatGPT citations are entity clarity across knowledge graphs and the major review platforms, structured content that retrieval systems can parse cleanly, presence on the third-party surfaces that LLMs use during retrieval, and brand search volume strong enough to register as a known entity in training data.

Several brands we have worked with have grown AI citation share substantially without paid link building, including Kroto, which moved from 3,500 to 326,000 monthly impressions over four months without buying a single link.

Conclusion

The mental model most GEO writing operates on, that LLM citations are either yours or someone else’s and you should focus on making yours better, is incomplete enough to produce predictably wrong allocation decisions. Citations come in three types, dominance is decided by query type, and roughly half of all third-party citations are engineerable rather than earnable. Your strategy should map to the queries that actually drive your pipeline, not to the citation type that is easiest to influence.

The fastest way to know whether your current allocation is right is to look at your actual citation distribution across 20 buyer-intent queries. If first-party is winning more than third-party for evaluative and comparison queries, you are looking at an unusual category. If third-party is winning and you have made no deliberate investment in placeable third-party surfaces, you are looking at a gap any competitor with the same audit can close in 90 days.

That diagnostic is what we run as the Free AI Visibility Audit. It produces your Citation Surface Map across the three types, names the engineerable third-party placements you are missing, and quantifies the SEO-to-LLM translation rate for your top buyer-intent queries. If the model is recommending your competitors and not you for the queries closest to revenue, this is the diagnostic that tells you why.