The Complete LLM SEO Guide for SaaS Brands

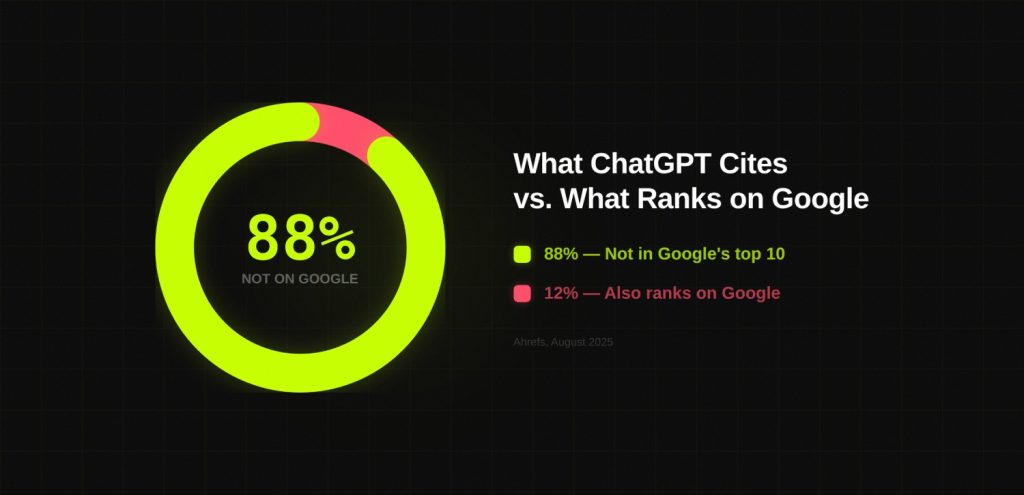

Only 12% of URLs cited by ChatGPT rank in Google’s top 10.

Read that again.

The vast majority of what AI recommends to your buyers sits entirely outside the traditional SEO game. It was never optimized for clicks. It does not care about domain authority in the way you have been measuring it. It lives in a different system, follows different rules, and rewards different behaviour.

The brands showing up in AI answers right now are not all the brands with the biggest content budgets or the strongest backlink profiles. Some of them are. But 80% of what ChatGPT cites does not even rank in Google’s top 100 for the same query (Ahrefs, August 2025).

That gap is the entire reason LLM SEO exists as a distinct discipline.

This LLM SEO guide explains how that gap works, what is actually happening inside the machine when it decides what to cite, and how SaaS companies can cross from accidental AI visibility to deliberate, measurable, compounding AI presence.

TL;DR

- LLM SEO is the discipline of structuring content, brand signals, and external presence so that large language models reliably cite, reference, and recommend your brand when users ask questions relevant to your product.

- GEO is where you are trying to go. LLM SEO is how you get there. Citation Engineering is the specific methodology that makes the journey systematic rather than accidental.

- LLMs form opinions, not rankings. They operate through two distinct layers: training-time memory (slow, durable, built from cross-platform brand signals) and real-time retrieval via RAG (fast, query-specific, won through structured content). Most LLM SEO advice addresses only one of these.

- The strongest predictor of AI citations is not backlinks. It is brand search volume, with a 0.334 correlation coefficient. Brand-building and LLM SEO are now the same discipline.

- The AI Visibility Score (AVS) is the measurement framework that makes this reportable. DerivateX coined both Citation Engineering and AVS.

What Is LLM SEO

“LLM SEO is about influencing how AI systems describe, compare, and recommend your brand when users ask questions.” – Apoorv Sharma

LLM SEO is the discipline of structuring content, brand signals, and external presence so that large language models reliably cite, reference, and recommend a brand when users ask questions relevant to its product or service.

That definition matters because of what it rules out.

LLM SEO is not about using AI tools to produce content faster. That is a workflow decision. LLM SEO is not a synonym for writing naturally or adding an FAQ section. Those are tactics that might support the broader goal, but they are not the goal itself.

The goal is citation. Being in the answer, not next to it.

Google ranks pages. LLMs synthesize answers from sources they have formed an opinion about. Those are fundamentally different problems. Solving one does not automatically solve the other. Every SaaS company running traditional SEO has already figured out how to speak to Google’s algorithm. LLM SEO is the practice of speaking to a completely different set of systems, with a completely different set of preferences.

Here is what makes this urgent in practical terms. When LLM SEO fails, it fails quietly. There is no traffic drop in your Google Search Console (GSC) dashboard. There are no ranking changes to report. You simply stop being mentioned in the places where your buyers are increasingly making decisions. Silent failure is more dangerous than any Google update, because at least a Google update shows up in the data.

The Two Knowledge Pathways

Every LLM operates through two distinct knowledge pathways. Understanding both is foundational, because most LLM SEO advice is built entirely around one of them.

Training-time memory is the layer most marketers never think about. During training, the model ingests vast amounts of text. The brands, concepts, and explanations that appear consistently, coherently, and repeatedly across many sources get encoded into the model’s prior beliefs. This layer is slow-forming. It takes months to shift. But it is also the most durable. Once a model has formed a positive, consistent prior about a brand, that prior shapes every response involving that brand, across every user session.

Research finding: 60% of ChatGPT queries are answered purely from training-time memory without triggering any real-time web search. 22% of training data for major AI models comes from Wikipedia. Getting into training-time (parametric) memory is a long game, and it starts now.

Real-time retrieval (RAG) is the layer most marketers focus on exclusively. This is the system where, at query time, the model fetches live web content to ground its answers. ChatGPT uses Bing’s index. Perplexity uses its own. Claude uses Brave Search. Content fetched at this stage is scored for relevance, freshness, and parsability, and then used to supplement the model’s existing knowledge.

The mistake most teams make: treating RAG as the whole game. Optimize your schema. Update your content. Improve your page speed. These are real signals. But they only address half of the system. The brands that win AI visibility long-term invest in both layers simultaneously. They build training-time memory through consistent, cross-platform brand signals, and they optimize for retrieval through structured, parsable, fact-dense content.

LLM SEO vs GEO: What Is Actually Different

The terminology in this space is a mess. GEO, LLM SEO, LLMO, AEO. Every agency has a different favourite acronym and most use them interchangeably, which makes it impossible to think clearly about what to actually do.

Here is a useful distinction.

GEO (Generative Engine Optimization) describes the output. Getting surfaced inside AI-generated responses. Being in the answer. That is the destination.

LLM SEO describes the discipline. The full system of content architecture, entity signals, off-page corroboration, and measurement that makes GEO results happen. That is the map.

Citation Engineering is a specific methodology within LLM SEO, coined by DerivateX, built around deliberately engineering the conditions under which LLMs choose to cite a brand. That is the vehicle.

Think of it this way: GEO is where you are trying to go. LLM SEO is how you get there. Citation Engineering is the specific practice that makes the journey systematic rather than accidental.

| Dimension | Traditional SEO | LLM SEO |
|---|---|---|
| Primary goal | SERP ranking | Reliable AI citation |
| What you optimize | Pages and backlinks | Brand signals and content architecture |
| Visibility unit | Click | Mention |
| Failure mode | Traffic drops | You stop being remembered |
| Measurement | Rankings, GSC traffic | AI Visibility Score (AVS) |
| Authority signal | Domain authority, backlinks | Brand search volume, entity corroboration, cross-platform presence |
| Compounding mechanism | Domain authority over time | Model memory over time |

The most important row is the last one. Traditional SEO compounds through accumulated authority. LLM SEO compounds through accumulated memory. Once a model forms a stable opinion about what your brand does, who it serves, and when to recommend it, that opinion persists across queries. Defaults stick. The brands that establish category defaults early hold them longer than any Google ranking.

The Academic Foundation

The term GEO was formalized in a research paper published at the ACM SIGKDD 2024 conference by researchers from Princeton University, Georgia Tech, IIT Delhi, and the Allen Institute for AI. They analyzed 10,000 diverse queries across multiple domains using a benchmark called GEO-bench. The headline finding: GEO techniques improve AI visibility by up to 40%.

The methods that worked: statistics addition (+7.2% visibility), quotation addition (+15.2% visibility), and citing authoritative sources within your own content. The method that performed worst: keyword stuffing. In generative contexts, the default SEO tactic actively degraded citation performance relative to baseline.

The simple version: SEO gets you clicked. GEO gets you quoted.

Why B2B SaaS Brands Cannot Afford to Ignore This

The instinctive objection is volume. AI referral traffic is still less than 1% of total web traffic for most sites. Why reorganize your content strategy around a rounding error?

Because the objection misreads what is actually happening. This is not about the traffic you can measure today. It is about the pipeline being filtered before it ever reaches your site. The buyer who found your competitor through ChatGPT never showed up in your analytics at all.

The Discovery Shift

The Previsible 2025 State of AI Discovery Report, which analyzed 1.96 million LLM-driven sessions over 12 months, found that ChatGPT owns 84.2% of AI referrals and grew 3.26x year-over-year. Gartner projects a 25% decline in traditional search volume by 2026. Their longer-range forecast: 50% or greater decline in organic search traffic for brands by 2028.

According to WebFX (June 2025), generative AI traffic is growing 165x faster than organic search traffic. ChatGPT is now sending more referral traffic than Reddit and LinkedIn combined.

For B2B SaaS specifically, the shift is more acute. Nearly 48% of B2B buyers now use AI assistants to research vendors before making purchase decisions. They are not typing your category into Google and scrolling through results. They are asking ChatGPT for a shortlist, asking Perplexity to compare options, asking Claude to explain which tool fits their use case. That research happens entirely inside the AI tool. If you are not in the answer, you do not get evaluated.

The Conversion Case

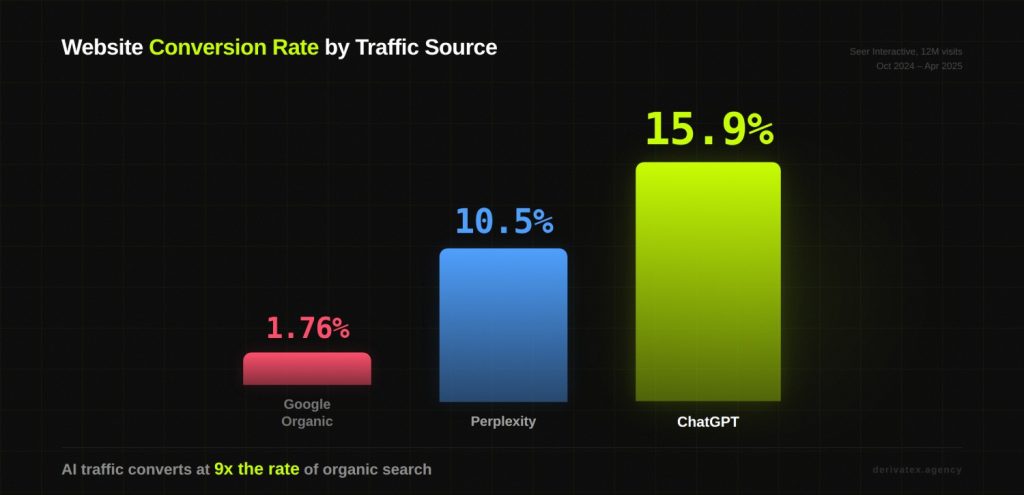

Here is the number that changes the conversation for every SaaS CMO who has sat through a traffic-volume argument.

AI-referred visitors convert at dramatically higher rates than organic search visitors, because they arrive pre-qualified. In a Seer Interactive case study analyzing 12 million website visits from October 2024 through April 2025, Google organic converted at 1.76%, ChatGPT traffic at 15.9%, and Perplexity at 10.5%. AI visitors averaged 2.3 pages per session versus 1.2 for Google organic.

Semrush’s July 2025 research confirmed the pattern across thousands of sites: LLM visitors convert 4.4x better than organic search visitors. And Ahrefs published their own data: 0.5% of visitors from AI drove 12.1% of total signups, converting 23x better than traditional organic.

You do not need AI traffic to replace Google traffic. You need to be present in the channel that sends the highest-converting visitors in the funnel.

The Client Evidence

Gumlet, a video hosting and image CDN platform for SaaS companies, attributes 20% of monthly inbound revenue to ChatGPT and Perplexity. That is a number Co-Founder Divyesh Patel can point to in their attribution dashboard. Not a rounding estimate. A specific, trackable figure.

REsimpli, a CRM for real estate investors, became the number one CRM recommended in ChatGPT for real estate investors within 90 days of DerivateX beginning Citation Engineering work on their content and entity architecture.

Neither result was accidental. Both were the product of deliberate architecture. The same methodology this guide describes.

How LLMs Actually Decide What to Cite

This is the section that most guides skip entirely, or reduce to “write clear content and get backlinks.” That advice is not wrong. It is just incomplete enough to be useless.

Understanding the actual citation mechanism changes how you make every decision downstream.

LLMs Form Opinions, Not Rankings

Google’s system is mechanical. It scores pages against a set of signals and produces a ranked list. You can, in principle, reverse-engineer the scoring model.

LLMs do not rank pages. They form opinions about brands, sources, and ideas based on everything they have been exposed to. Then, when a user asks a question, they retrieve and assemble an answer from sources they trust.

The Citation Decision Stack

When an LLM decides whether to cite a source in response to a query, it is running through something like this sequence:

- Entity clarity. Can the model identify unambiguously who this brand is, what it does, and who it is for? If the answer requires reconciling conflicting information from different sources, the model does not guess. It opts out.

- Evidence density. Is the content fact-rich? Does it contain specific numbers, named examples, and verifiable claims? Content that makes assertions without evidence is treated as lower-quality signal.

- Cross-source consensus. Do multiple independent sources describe this brand in the same way? A brand that appears only on its own blog is weak signal. A brand that appears on its own blog, in G2 reviews, in Reddit discussions, in comparison articles on third-party sites, and in podcast transcripts is strong signal.

- Explanation quality. Can the relevant passage be cleanly extracted and used as a standalone answer? Paragraphs structured around answering a specific question outperform general prose.

- Recency. For real-time retrieval specifically, how fresh is the content? 85% of AI Overview citations were published in the last two years. 50% of Perplexity citations are from 2025 alone (Seer Interactive, June 2025).

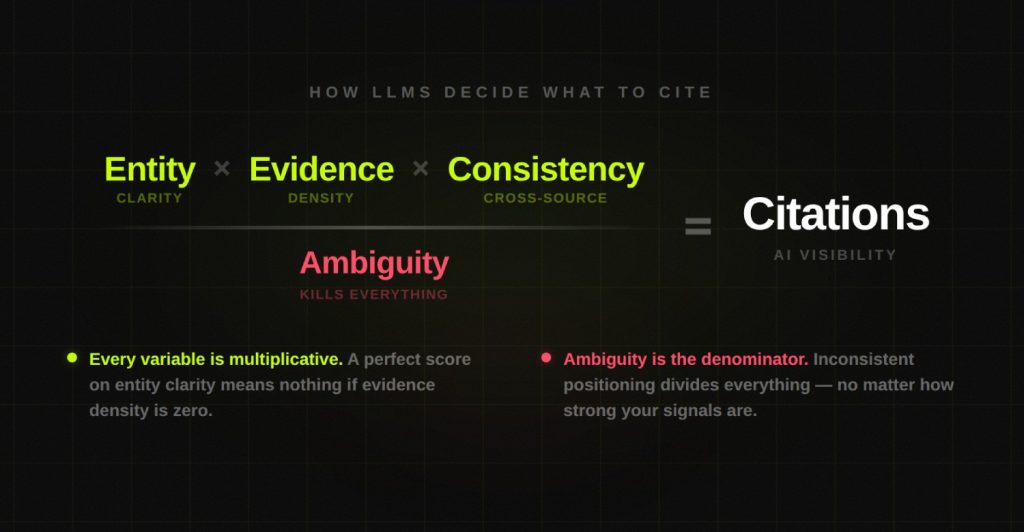

There is a useful equation that captures how these signals interact:

(Entity × Evidence × Consistency) ÷ Ambiguity = Citations

Every variable is multiplicative. A perfect score on entity clarity means nothing if evidence density is zero. The whole equation collapses. And ambiguity is the denominator, which means it divides everything. Generic language, competing definitions, inconsistent positioning across platforms: all of it suppresses citation probability regardless of how strong your other signals are.
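To make the multiplicative behaviour concrete, here is a minimal sketch of the equation in Python. The scales are assumptions made for illustration and are not part of the original heuristic: the three numerator signals are scored from 0 to 1, and ambiguity is a divisor of at least 1, where 1 means no ambiguity.

```python
def citation_score(entity: float, evidence: float, consistency: float,
                   ambiguity: float) -> float:
    """Heuristic from the text: (Entity x Evidence x Consistency) / Ambiguity.

    Assumed scales (hypothetical, chosen for illustration only):
    entity, evidence, consistency are in [0, 1]; ambiguity is >= 1.
    """
    if ambiguity < 1:
        raise ValueError("ambiguity is assumed to be >= 1")
    return (entity * evidence * consistency) / ambiguity


# Strong signals, no ambiguity.
strong = citation_score(entity=0.9, evidence=0.8, consistency=0.9, ambiguity=1.0)

# Perfect entity clarity but zero evidence: the product collapses to zero.
no_evidence = citation_score(entity=1.0, evidence=0.0, consistency=0.9, ambiguity=1.0)

# Same strong signals, but three competing descriptions of the brand.
ambiguous = citation_score(entity=0.9, evidence=0.8, consistency=0.9, ambiguity=3.0)

print(strong, no_evidence, ambiguous)
```

The absolute numbers mean nothing on their own. The point is the shape: a single zero in the numerator wipes out the result, and ambiguity scales everything down no matter how strong the other signals are.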

The Signal That Overturns Decades of SEO Instinct

One more fact that reframes the entire discussion: brand search volume, not backlinks, is the strongest predictor of AI citations, with a 0.334 correlation coefficient. (2025 AI Visibility Report, TheDigitalBloom, analyzing 680M+ citations)

The intuition behind this is important. If millions of people search for your brand name on Google, that signal appears across the web: in forums, in tool comparisons, in reviews. That breadth of independent mention is exactly what LLMs weight most heavily. A brand with 10,000 backlinks from a link-building campaign does not achieve the same distributed mention pattern as a brand that buyers genuinely talk about.

Brand-building and LLM SEO are no longer separate disciplines. They are the same discipline.

The Four Ways You Get Filtered Out

If your brand is not appearing in AI answers for relevant queries, the failure is happening at one of these four points:

- Not retrieved. Your page is not indexed, is blocked from AI crawlers, or lacks topical relevance to the query. Check your robots.txt for rules that block GPTBot, ClaudeBot, and PerplexityBot. Ensure content is served in crawlable HTML.

- Ranked too low. Your page enters the candidate set but scores below competitors on semantic relevance, structural clarity, or entity authority.

- Not extractable. The model found your page but could not pull a clean, quotable passage. Answers are buried in long paragraphs. No direct answer near the top. Fix: restructure every major section to open with a direct answer within the first two sentences.

- Not attributed. The model used your information but credited it elsewhere. This happens when entity clarity is weak: inconsistent brand name usage, missing JSON-LD schema, no definitional sentence associating your brand with your category.
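The "not retrieved" check is the easiest of the four to automate. The sketch below uses Python's standard-library `urllib.robotparser` to report which of the three crawlers named above are blocked for a given URL. The function name, the sample robots.txt, and the example.com URL are hypothetical. It parses robots.txt text you pass in rather than fetching it, so it runs without network access.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the text above.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_crawlers(SAMPLE_ROBOTS_TXT, "https://example.com/pricing")
print(blocked)
```

To audit a live site, fetch its robots.txt yourself and pass the text in. An empty list only tells you the crawlers are permitted. It does not tell you the page is indexed or rendered as crawlable HTML.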

How Each Platform Behaves Differently

| Platform | Citation behavior | Key signal | SaaS priority |
|---|---|---|---|
| ChatGPT | Primarily training-time memory for known brands; real-time RAG for commercial queries | Brand search volume, training data presence | Highest (84.2% of AI referrals) |
| Perplexity | Heavily RAG-based; inline citations; closest to traditional SERP behavior | Content structure, freshness, topical authority | High (research-focused buyers) |
| Claude | Constitutional AI framework; favours helpful, accurate, harmless sources | Source credibility, factual accuracy, named attribution | Medium-high |
| Google AI Overviews | Strongly correlated with Google rankings; 76.1% of cited URLs rank in top 10 | Traditional SEO signals plus structured content | High (embedded in every Google search) |

Only 11% of domains are cited by both ChatGPT and Perplexity (2025 AI Visibility Report). Platform-specific tracking matters more than a single aggregate number.

Citation Engineering: From Accidental to Deliberate AI Visibility

Citation Engineering is the practice of deliberately structuring content, entity data, and brand signals so that large language models reliably cite a brand when users ask questions relevant to its product or service.

The word “deliberately” carries the entire weight of that definition.

Most companies showing up in AI answers today did not plan for it. Their content was structured clearly enough, their entity was consistent enough, and their cross-platform presence was broad enough that models learned to trust them over time. That is accidental AI visibility. It happened to them.

I want to be honest about where Gumlet started. Their AI citation presence was, initially, an accident. The content architecture happened to match what LLMs look for, not because anyone designed it that way. When DerivateX came in, the goal was not to start from scratch. It was to understand exactly why the citations were happening and make the mechanism systematic, repeatable, and scalable. That process is what Citation Engineering describes.

Citation Engineering makes it deliberate. It reverse-engineers the conditions under which models decide to cite a source, and then systematically builds those conditions.

There are five levers.

Lever 1: Entity Clarity

LLMs need to know unambiguously who you are and what you do. Not approximately. Not across multiple interpretations. Unambiguously.

For most SaaS companies, this fails at the most basic level. The homepage says "enterprise-grade." A blog post from eight months ago calls it a "lightweight tool for startups." The G2 description was written by a different person than the Capterra listing. The LinkedIn bio uses different framing than the press page.

To an LLM, these are contradictions. When sources disagree about what a brand is, the model does not synthesize a consensus. It hedges, excludes, or describes you imprecisely in ways that reduce your citation value.

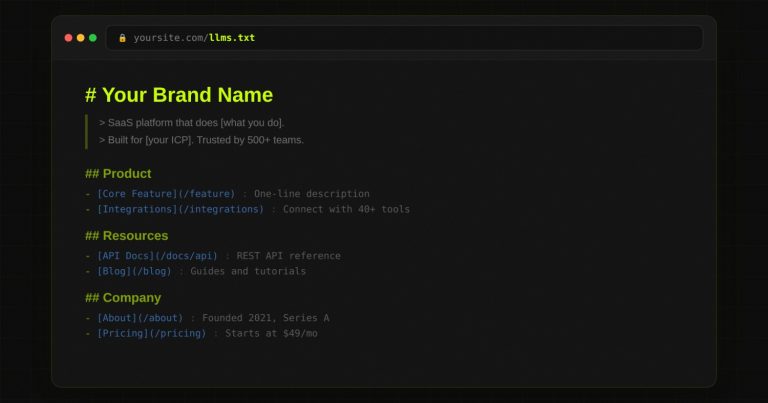

The fix is structural. Write one definitional sentence for your brand. Every mention of your brand, across every platform, should reflect the same positioning. Create an entity landing page or /llm-info/ page that consolidates structured information with JSON-LD schema markup. Audit your G2, Capterra, Clutch, and Crunchbase profiles against your homepage. Close every gap.

Lever 2: Authoritative Coverage

LLMs cite sources that have written comprehensively about a topic, not sources that have touched it once. Depth signals trust.

This does not mean producing content at volume. It means covering every question your buyers ask AI within your category, in sufficient depth that your content is the best available explanation. The bar for "best explanation" is often lower than people assume, because most content on most topics is thin, repetitive, and keyword-optimized for Google rather than designed to be extracted by an AI.

The content strategy that follows from this is hub-and-spoke: one comprehensive pillar per topic cluster, supported by satellite pieces covering adjacent questions. Interconnected content signals topical depth to LLMs in the same way thorough documentation signals expertise to a human reader.

Lever 3: Third-Party Corroboration

LLMs strongly prefer sources that exist independently of a brand's own marketing. A brand mentioned and explained on its own website is weak signal. A brand mentioned and explained by a third party with no commercial relationship to that brand is strong signal.

This is the lever that most SEO-native teams underinvest in. Guest posts on relevant publications, Reddit answers written from direct experience, podcast appearances, YouTube tutorials, review site profiles, community contributions. The question to ask about every piece of third-party content is not "does it link back to us?" but "does it explain us well?"

Unlinked mentions with reasoning outperform backlinks without context for LLM citations. Brands with high Quora and Reddit mention volume have roughly four times higher citation probability than those with minimal community activity (SE Ranking, November 2025). Brands with profiles on G2, Capterra, Trustpilot, and similar platforms are three times more likely to be chosen as a ChatGPT source than those without.

The target for serious Citation Engineering work: a minimum of 30 independent third-party sources that mention your brand name and category term together within the first 90 days of a campaign.

Lever 4: Result Documentation

LLMs have a strong preference for specificity. "Improved AI visibility" is unquotable. "20% of inbound revenue attributed to ChatGPT" is the kind of specific, verifiable claim that gets extracted, cited, and repeated.

Every client result, every experiment outcome, every data point from your own practice should be documented publicly with enough specificity to be cited. Named companies. Specific numbers. Time frames. Context. The more verifiable a claim is, the more LLMs trust it as grounding material.

Adding statistics increases AI visibility by 22%. Adding quotations increases it by 37%. (Princeton GEO Study, KDD 2024)

Lever 5: Structured Parsability

LLMs extract from pages structured for extraction. FAQ schema on informational pages. Short, single-idea paragraphs. Definitional sentences at the top of concept explanations. Comparison tables. Clear H2 and H3 structure that maps directly to questions your buyers would type into an AI tool.

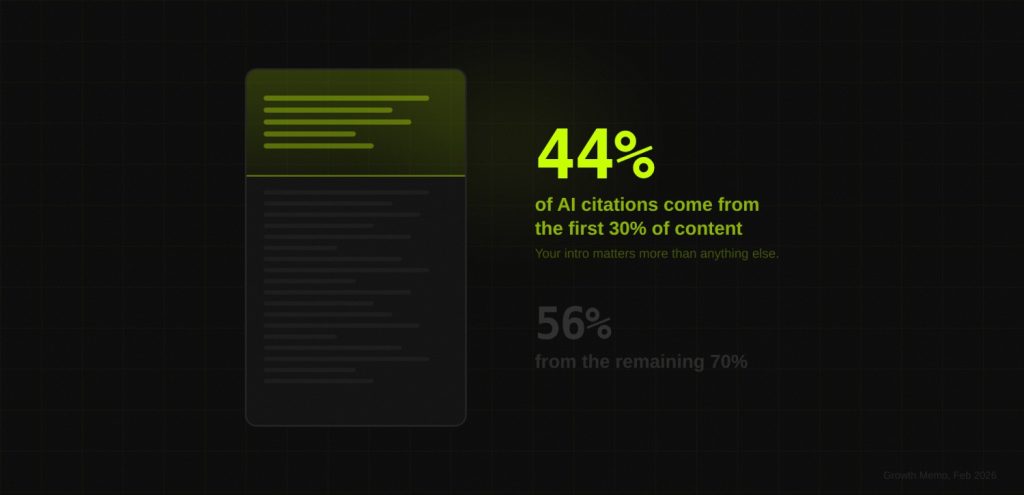

Here is the most important structural insight: 44.2% of all LLM citations come from the first 30% of a piece of content (Growth Memo, February 2026). The introduction is not just an engagement tool. It is the highest-citability section of every page you publish. What you put in your opening paragraphs is disproportionately likely to be the thing AI quotes back to your buyers.

Websites with comprehensive structured data see 44% more AI citations than those without. (BrightEdge 2025)
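As one illustration of FAQ schema, the sketch below builds schema.org `FAQPage` markup from question-and-answer pairs. The helper name `faq_jsonld` is hypothetical. The sample answer reuses this guide's own definition of LLM SEO. Treat it as a starting point under those assumptions, not a complete structured-data implementation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What is LLM SEO?",
        "LLM SEO is the discipline of structuring content, brand signals, and "
        "external presence so that large language models reliably cite, "
        "reference, and recommend a brand.",
    ),
])

# Embed the printed JSON in a <script type="application/ld+json"> tag.
print(json.dumps(faq, indent=2))
```

Keep each answer short enough to stand alone as a quotable passage, and make sure the same text is visible on the page.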

The Five Levers at a Glance

| Lever | What it addresses | What it requires |
|---|---|---|
| Entity clarity | LLMs need unambiguous knowledge of who you are | Consistent brand name, JSON-LD schema, /llm-info/ page, definitional sentence in every piece of content |
| Authoritative coverage | LLMs cite sources that have written comprehensively on a topic | Hub-and-spoke content architecture covering every buyer question in your category |
| Third-party corroboration | LLMs weight brands mentioned independently across many sources | Guest posts, podcasts, G2/Capterra reviews, Reddit answers, comparison page placements |
| Result documentation | LLMs strongly prefer specific, verifiable claims | Published case studies with named companies, specific numbers, and time frames |
| Structured parsability | LLMs extract from pages built for machine parsing | FAQ schema, short paragraphs, definition-forward H2s, comparison tables, /llm-info/ page |

Entity Optimization: The First Gate to AI Citability

Entity optimization is the most underserved area in LLM SEO content, which is ironic because it is also the first gate. If a model cannot form a clear, stable opinion about what your brand is, nothing else in your strategy matters. You can publish excellent content and build strong third-party presence, and the model will still describe you imprecisely or exclude you from answers where you should appear.

An entity, in this context, is a brand, product, person, or concept that a model has formed a stable and consistent opinion about. Strong entities get cited. Weak entities get hedged.

The Common Failure Patterns

A product described differently across its homepage, documentation, press page, and third-party listings. A brand that claims to be "enterprise-grade" on one page and "quick to set up" on another. A founder bio that describes the company differently than the company's About page. A G2 review section full of descriptions that contradict the brand's current positioning.

Each of these inconsistencies is invisible to a human reader navigating a single page. To a model reading across hundreds of sources, they register as conflicting evidence. Conflicting evidence reduces confidence. Reduced confidence means the model either describes you inaccurately or skips you in favour of a brand it understands more clearly.

The Entity Optimization Checklist

- Write a single definitional sentence. "DerivateX is an LLM SEO and GEO agency for B2B SaaS that engineers AI citations in ChatGPT, Perplexity, Claude, and Gemini." Test it: if you read it in isolation, could you explain what the company does and who it is for? If not, rewrite it.

- Create an entity-facing page on your site. An /llm-info/ page that consolidates your brand definition, founding context, ICP, key proof points, and FAQ schema in JSON-LD structured data. This page exists for machines, not humans. Write it accordingly.

- Propagate consistently. Audit every platform where your brand appears: G2, Capterra, Crunchbase, founders' LinkedIn bios, PR boilerplate, guest post author bios. Every instance of your brand being described by anyone is an opportunity to strengthen entity clarity or an opportunity to introduce ambiguity.

- JSON-LD Organization schema. On your homepage and all key pages, with name, URL, description, and sameAs references linking to every authoritative directory where your brand appears.

- Co-entity mentions. Related tools, integrations, and platforms in your category should appear in your content alongside your brand. LLMs build contextual entity understanding through co-occurrence.
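The JSON-LD item in the checklist can be sketched as follows. The script builds schema.org `Organization` markup with the four properties named above (name, URL, description, sameAs) and wraps it in the script tag that goes in your page's HTML. The brand "ExampleCo", its description, and every URL are hypothetical placeholders, so swap in your own definitional sentence and real profile links.

```python
import json


def organization_jsonld(name: str, url: str, description: str,
                        same_as: list[str]) -> dict:
    """Build a schema.org Organization object for embedding as JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,  # reuse your single definitional sentence
        "sameAs": list(same_as),     # authoritative profiles for the same brand
    }


# All values below are placeholders, not real listings.
schema = organization_jsonld(
    name="ExampleCo",
    url="https://www.example.com",
    description="ExampleCo is a video hosting platform for B2B SaaS companies.",
    same_as=[
        "https://www.linkedin.com/company/exampleco",
        "https://www.crunchbase.com/organization/exampleco",
    ],
)

script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(schema, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Using the same description string here and on your directory profiles is a simple way to enforce the consistency the checklist asks for.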

The Test That Tells You Where You Stand

Paste this prompt into ChatGPT, Perplexity, Claude, and Gemini:

"What is [your brand] and who should use it?"

If the answer is weak, your entity signals need work. If the answer is wrong, your evidence trail needs work. If the answer is missing, your entity clarity needs to be addressed before everything else.

Run this test before investing in any other LLM SEO activity. It is the fastest signal audit you can do, and it costs nothing.

The AI Visibility Score (AVS): Measuring What Is Working

The most common objection to investing in LLM SEO is the measurement problem. Traditional SEO has GSC. Paid has ROAS. What does LLM SEO have?

The answer, so far, has mostly been "nothing," which is why most LLM SEO programs stall. You cannot report on a metric you cannot measure, and you cannot get budget for a strategy you cannot track.

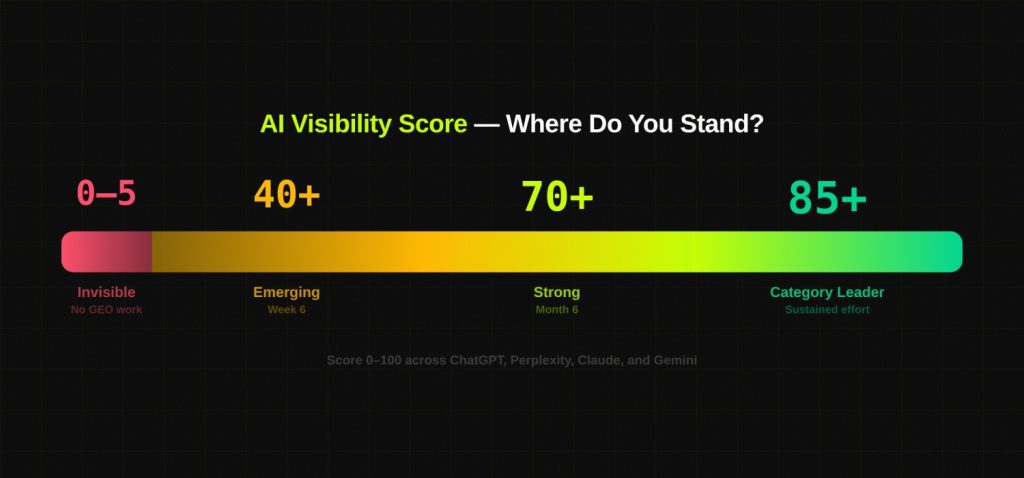

The AI Visibility Score (AVS) is a scoring methodology (0 to 100) that measures how frequently and prominently a brand is cited across AI platforms. It is the closest thing LLM SEO has to domain authority: a single trackable number that trends over time and connects visibility to pipeline.

How to Calculate Your AVS

- Define 20 target prompts. These are the questions your buyers actually ask AI tools. Not the keywords you would target on Google. The specific questions: "What is the best CRM for real estate investors?" "What tools should a Series A SaaS company use for video hosting?" Map them to your product's use cases, competitors, and category vocabulary. If you do not know what your buyers are asking AI tools, start by surveying your last 20 won deals.

- Run each prompt three times per week across ChatGPT, Perplexity, Claude, and Gemini. Monday, Wednesday, Friday. Consistency of tracking matters. The same prompts, the same platforms, the same schedule.

- Score each result: Brand named in the answer = 5 points. Brand linked = 3 points. Brand mentioned in context but not named directly = 1 point. Brand not present = 0 points.

- Calculate your weekly AVS. Total score across all 20 prompts and all 4 platforms. Maximum possible = 400 per week (20 prompts × 4 platforms × 5 points). Normalize to a 0 to 100 scale by dividing the total by 4.

- Analyse beyond the score. The number tells you trajectory. The qualitative notes tell you what to fix. How does the model describe your brand? Is it accurate? Is it current? Which prompts produce no mention at all? Those gaps are your content and entity priorities for the following week.
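The scoring steps above can be sketched in a few lines of Python. The steps leave two details open, so this sketch fills them with labeled assumptions: each run gets only the highest tier that applies (so a pair never scores above 5), and the three weekly runs are averaged per prompt-platform pair, which keeps the weekly maximum at 400 for 20 prompts on 4 platforms. The outcome labels and function name are hypothetical.

```python
from statistics import mean

# Point values from the scoring step; the label names are illustrative.
POINTS = {"named": 5, "linked": 3, "context": 1, "absent": 0}
MAX_POINTS_PER_PAIR = 5


def weekly_avs(results: dict[tuple[str, str], list[str]]) -> float:
    """Compute a 0-100 AVS from one week of tracking.

    `results` maps (prompt, platform) to one outcome label per run.
    Assumption: the runs for each pair are averaged, not summed.
    """
    if not results:
        raise ValueError("no results to score")
    pair_scores = [
        mean(POINTS[outcome] for outcome in outcomes)
        for outcomes in results.values()
    ]
    maximum = MAX_POINTS_PER_PAIR * len(pair_scores)  # 400 for 20 x 4 pairs
    return round(100 * sum(pair_scores) / maximum, 1)


# A cut-down example: 2 prompts x 2 platforms, 3 runs each.
sample_week = {
    ("best CRM for real estate investors", "ChatGPT"): ["named", "named", "linked"],
    ("best CRM for real estate investors", "Perplexity"): ["linked", "absent", "linked"],
    ("video hosting for Series A SaaS", "ChatGPT"): ["absent", "absent", "absent"],
    ("video hosting for Series A SaaS", "Perplexity"): ["context", "named", "absent"],
}

print(weekly_avs(sample_week))
```

Whatever convention you choose for combining runs, keep it fixed from week to week. The trend is only meaningful if the arithmetic behind it never changes.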

AVS Benchmarks

| Milestone | AVS range | What it means |
|---|---|---|
| Starting baseline (no GEO work) | 0 to 5 | Brand appears in AI answers rarely or not at all. The norm for companies with strong Google SEO but no entity optimization or third-party corroboration. |
| Week 6 with active Citation Engineering | 40+ | Brand is appearing consistently for core category queries. Entity clarity established, content restructured, initial corroboration underway. |
| Month 6 with active Citation Engineering | 70+ | Brand is being recommended as a primary option across most platforms. Difficult for competitors to displace without significant investment. |
| Category leader | 85+ | Brand appears in answers for virtually all target queries, often as the first recommendation. Requires sustained investment to hold. |

Why AVS Matters Beyond Tracking

The reason AVS matters beyond tracking is what it does to the conversation with stakeholders. Instead of "we are working on AI visibility," you can say "our AVS was 12 six weeks ago and it is 43 this week." That is a very different conversation.

For context on why this measurement matters more than traffic alone: AI-referred visitors convert at 14.2% compared to 2.8% for Google organic search. (Semrush, July 2025) A brand scoring consistently high on AVS is not just winning a vanity metric. It is sitting at the top of the highest-converting acquisition channel that exists in digital marketing right now.

Setting Up GA4 to Track LLM Referral Traffic

Create a custom channel group in GA4 using these referral sources:

- chatgpt.com and chat.openai.com (ChatGPT)

- perplexity.ai

- claude.ai

- gemini.google.com

- copilot.microsoft.com
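A channel group of this kind is typically defined with a single regular expression over the session source. The Python sketch below shows one such pattern and lets you test it against referrer URLs before pasting it into GA4. The pattern and function name are illustrative. `chatgpt.com` is included as an addition to the list, on the assumption that ChatGPT referrals now arrive from that domain as well as `chat.openai.com`.

```python
import re
from urllib.parse import urlparse

# Matches the listed hosts and their subdomains (for example www.perplexity.ai),
# but not lookalikes such as notclaude.ai.
LLM_SOURCE_PATTERN = re.compile(
    r"(^|\.)(chat\.openai\.com|chatgpt\.com|perplexity\.ai|claude\.ai"
    r"|gemini\.google\.com|copilot\.microsoft\.com)$"
)


def is_llm_referral(referrer_url: str) -> bool:
    """True if the referrer's hostname is one of the AI platforms.

    Expects a full URL including the scheme; anything else returns False.
    """
    host = urlparse(referrer_url).hostname or ""
    return bool(LLM_SOURCE_PATTERN.search(host))


for url in (
    "https://chat.openai.com/",
    "https://www.perplexity.ai/search?q=video+hosting",
    "https://www.google.com/",
):
    print(url, is_llm_referral(url))
```

Check the pattern against the actual source values in your own GA4 reports before relying on it, since the way sources are recorded can differ from raw referrer hostnames.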

For deeper monitoring, tools like Profound, Goodie, and Daydream track citation frequency and share of voice across AI platforms directly, without the attribution gaps that come from users not clicking through.

The LLM SEO Flywheel: Building Something That Compounds

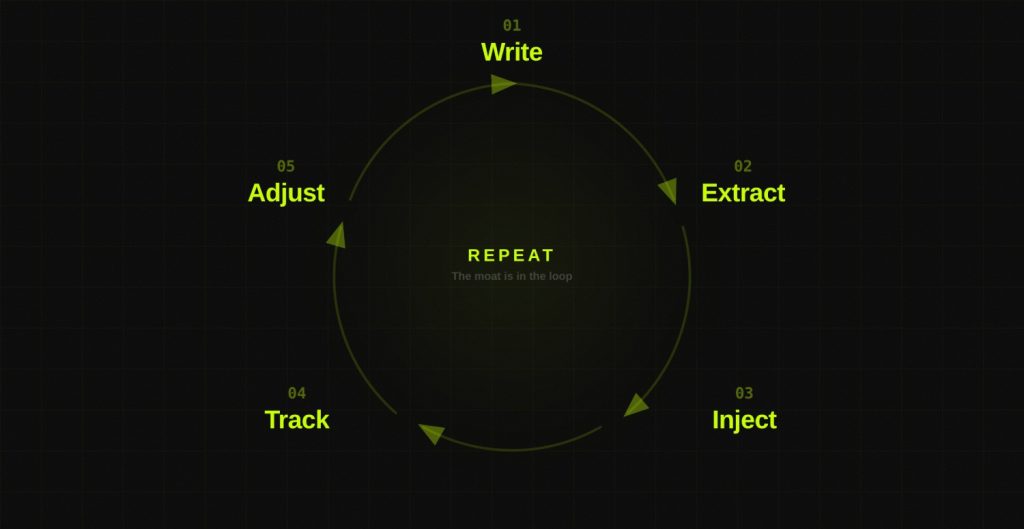

LLM SEO only compounds when it is treated as a repeatable system rather than a one-time campaign. Most teams do the campaign version: publish some optimized content, improve their schema, do one round of third-party outreach, and wait. Nothing compounds because nothing repeats.

The flywheel looks like this:

Write

Each cycle, produce one piece of content with one specific goal: be the best explanation on the internet for one concept your buyers ask AI about. Not a keyword. A concept. "When should a SaaS company migrate from Cloudinary to a dedicated video infrastructure tool?" "What is the difference between a WISP and a security policy?" One question, answered better than anyone else has answered it.

The standard to hold yourself to: could ChatGPT lift this paragraph as a complete answer? If yes, the content is doing its job. If you would be embarrassed to see your own paragraph quoted back verbatim, rewrite it.

Extract

After publishing, convert the content into portable reasoning blocks. Five definitions. Five decision criteria. Three comparison statements. Two "when not to use" statements. Store these in a shared document. These blocks become the raw material for everything that follows.

Inject

Distribute those reasoning blocks into the places where LLMs actually form opinions. Third-party blog contributions. Reddit answers written from direct experience. Community forum responses. Comparison page content. The goal is not to promote your brand. The goal is to explain a concept clearly, with your brand included naturally as the practitioner sharing the explanation.

Track

Run your target prompts. Log inclusion, description accuracy, category association, and prompt gaps. This tells you what AI currently understands about you and what it does not.
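
One way to keep this log consistent from week to week is a small record per prompt run. The sketch below is an assumption about how you might structure it; `PromptRun` and `prompt_gaps` are hypothetical names, and the fields simply mirror the four things listed above.

```python
from dataclasses import dataclass


@dataclass
class PromptRun:
    """One target prompt run on one platform (field names are illustrative)."""
    prompt: str
    platform: str               # e.g. "ChatGPT", "Perplexity"
    brand_included: bool        # inclusion
    description_accurate: bool  # description accuracy; only meaningful if included
    category: str = ""          # category the model associated the brand with


def prompt_gaps(runs: list[PromptRun]) -> list[str]:
    """Return prompts where no platform mentioned the brand, in first-seen order."""
    included = {r.prompt for r in runs if r.brand_included}
    gaps: list[str] = []
    for r in runs:
        if r.prompt not in included and r.prompt not in gaps:
            gaps.append(r.prompt)
    return gaps


if __name__ == "__main__":
    log = [
        PromptRun("best CRM for real estate investors", "ChatGPT", True, True, "CRM"),
        PromptRun("best CRM for real estate investors", "Claude", False, False),
        PromptRun("how to track seller leads", "ChatGPT", False, False),
    ]
    print(prompt_gaps(log))
```

The list this returns is the set of prompts where the brand never appears on any platform, which is the natural input to the Adjust step.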

Adjust

Fix one specific thing per week. A definition that is imprecise. A positioning inconsistency between two platforms. A missing exclusion statement on a pillar page. A concept the model associates with a competitor instead of you. Small, targeted adjustments. Compounding effect.

Repeat

The moat is in the repetition. Most teams stop after the write step. The flywheel wins because it keeps running after everyone else has stopped.

The 30-Day LLM SEO Sprint: Where to Start

If you are starting from zero, here is the first month.

Week 1: Entity Audit

Paste the test prompt ("What is [your brand] and who should use it?") into ChatGPT, Perplexity, Claude, and Gemini. Document what they say. Note every inaccuracy, inconsistency, and gap. This is your baseline.

Then audit your G2, Capterra, Crunchbase, and LinkedIn presence against your homepage positioning. Close every inconsistency you find. This step alone often produces AVS improvements within two weeks, because the model's confusion about your brand was suppressing citations it would otherwise make.

Week 2: AVS Baseline

Define your 20 target prompts. Run the first week of tracking. Your score will be low. That is expected and useful. The baseline is the starting point for everything you measure against going forward. Do not skip this step to get to the content work faster. You cannot optimize what you have not measured.

Week 3: First Concept Explainer

Write one piece of content designed to be the best explanation of one concept in your category. Use the Citation Engineering checklist: definitional sentence in the first 100 words, FAQ schema, comparison table, specific proof points with named companies and numbers, short paragraphs structured for extraction. Publish it.
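
For the FAQ schema item on that checklist, the markup can be generated rather than hand-written. This is a minimal sketch using the schema.org FAQPage, Question, and Answer types; the helper name `faq_jsonld` and the sample question are illustrative, not part of any library.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_jsonld([
        ("What is LLM SEO?",
         "LLM SEO is the discipline of optimizing content and brand signals "
         "so that large language models reliably cite a brand."),
    ]))
```

The output is meant to be placed inside a `<script type="application/ld+json">` tag on the page that visibly contains the same questions and answers.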

Week 4: Third-Party Injection

Identify two Reddit threads, one relevant online community, and one publication in your category. Contribute to the Reddit threads from direct practitioner experience. Pitch the publication. Begin one guest post. The goal is not volume. It is quality of explanation with natural brand inclusion.

At the end of 30 days, re-run your target prompts. Compare the descriptions. Track your AVS against week two. The changes will be small but visible, and visible changes in a four-week window are the fastest way to build internal confidence in the strategy.

Frequently Asked Questions

1. What is LLM SEO?

LLM SEO is the discipline of optimizing content and brand signals so that large language models reliably cite, reference, and recommend a brand when users ask questions relevant to its product or service.

It is distinct from traditional SEO, which focuses on SERP rankings, and from GEO (Generative Engine Optimization), which describes the outcome of being included in AI-generated answers. LLM SEO is the full practice that produces GEO results.

2. How is LLM SEO different from traditional SEO?

Traditional SEO optimizes for ranking in search engine results pages by building backlinks, improving technical health, and targeting keywords. LLM SEO optimizes for citation inside AI-generated answers by building entity clarity, content parsability, and cross-platform brand consensus.

The signals that predict success are different: for traditional SEO, backlinks and domain authority are primary. For LLM SEO, brand search volume, explanation depth, and cross-source consistency are primary.

3. How is LLM SEO different from GEO?

GEO refers to the output: being visible inside AI-generated responses. LLM SEO refers to the full discipline that makes that output possible, including content strategy, entity optimization, third-party corroboration, and measurement.

Citation Engineering is a specific methodology within LLM SEO focused on deliberately engineering the conditions under which LLMs choose to cite a brand.

4. Does ranking on Google help with LLM SEO?

Yes, substantially. Sites that rank first on Google SERPs have a 33% chance of appearing in AI Overviews. Traditional SEO builds the authority and crawlability that feed real-time retrieval systems.

However, according to Ahrefs' August 2025 research, only 12% of ChatGPT-cited URLs rank in Google's top 10. The most effective approach is to run both in parallel: traditional SEO captures existing search demand, while LLM SEO shapes the answers your buyers receive before they ever click anything.

5. How do I measure LLM SEO performance?

Through the AI Visibility Score (AVS): define 20 target prompts based on real buyer questions, run them three times per week across ChatGPT, Perplexity, Claude, and Gemini, score brand mentions (5 points named, 3 linked, 1 contextual), normalize to 0 to 100, and track the weekly trend.

Beyond the number, log how accurately and consistently the model describes your brand, which prompts produce no mention, and what category associations appear. Those gaps are your priorities.
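
The arithmetic can be sketched in a few lines. The point values come from the scoring above; the normalization is an assumption, since the exact formula is not spelled out here: this sketch divides points earned by the maximum possible (5 per response) and scales to 100. It also assumes each response is scored once, in a single category. The names `POINTS` and `avs` are illustrative.

```python
# Point values from the scoring described above; "none" means no mention.
POINTS = {"named": 5, "linked": 3, "contextual": 1, "none": 0}
MAX_POINTS = 5  # best possible score for a single response


def avs(mentions: list[str]) -> float:
    """Normalize a week of scored responses to a 0-100 AI Visibility Score.

    Assumed normalization: points earned / maximum possible points * 100.
    """
    if not mentions:
        return 0.0
    earned = sum(POINTS[m] for m in mentions)
    return round(100 * earned / (MAX_POINTS * len(mentions)), 1)


if __name__ == "__main__":
    # Four scored responses: 3 + 1 + 0 + 0 = 4 of a possible 20 points.
    print(avs(["linked", "contextual", "none", "none"]))
```

At the cadence described above (20 prompts, three runs per week, four platforms), a full week is 240 scored responses. Calling the function once per platform as well as once overall gives platform-specific scores alongside the aggregate.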

6. What is Citation Engineering?

Citation Engineering is the practice of deliberately structuring content, entity data, and brand signals so that large language models reliably cite a brand when users ask questions relevant to its product or service.

It operates through five levers: entity clarity, authoritative coverage, third-party corroboration, result documentation, and structured parsability. DerivateX coined this term.

7. How long does it take to see results?

Changes in real-time retrieval citation can appear within two to four weeks of publishing well-structured, parsable content on actively indexed platforms. Changes in training-time memory take longer, typically three to six months of consistent, cross-platform signal-building.

REsimpli went from zero ChatGPT citations to the number one CRM recommendation for real estate investors in 90 days. The brands that see the fastest results are those that address entity inconsistencies first, since these act as suppressors on every other signal.

8. What AI tools should I be optimizing for?

The primary platforms to track are ChatGPT (using Bing's index for retrieval), Perplexity (its own search index, strong citation display), Gemini (Google, integrated with AI Overviews), and Claude (using Brave Search).

Each retrieves content differently and cites different sources. Only 11% of domains are cited by both ChatGPT and Perplexity, which means platform-specific tracking matters more than a single aggregate number.

Find Out Where You Stand in AI Search

Open ChatGPT right now. Type in the question your best buyers ask when they are looking for your category. See what comes up.

If your brand is not in the answer, that is the problem. The Free AI Visibility Audit from DerivateX maps your current citation presence across ChatGPT, Perplexity, Claude, and Gemini, benchmarks it against your top competitors, and identifies the specific gaps suppressing your citation rate. No form. No pitch. Just a look at where you actually stand.

Run Your Free AI Visibility Audit →
