
Google Chrome Skills Just Made Your Website a Data Source. Here’s What B2B SaaS Needs to Do.

TL;DR

- Google launched Chrome Skills on April 15, 2026, giving Chrome desktop users who are signed into a Google account (with the browser language set to English (US) at launch) one-click AI workflows powered by Gemini that run directly on any webpage they visit.

- A buyer can now save a procurement prompt once (“score this vendor on pricing transparency, integration depth, and customer proof”) and run it on every SaaS product they evaluate before speaking to anyone in sales.

- Your website is no longer a conversion experience you control. It is a data source Gemini reads and scores on criteria you did not write.

- Content built for human scanning (vague benefit statements, “contact us for pricing,” unnamed integrations) extracts as NOTHING in an AI-generated comparison.

- The evaluation is invisible. No session recording captures it. No analytics platform logs it. The only signal you get is a deal going cold.

- The fix is not a redesign. It is specificity: named integrations, exact pricing tiers, real customer outcomes with numbers attached.

A buyer is on your pricing page right now.

But they’re not reading it the way you designed it to be read.

They saved an AI prompt 2 weeks ago, something like “you are a procurement analyst, score this SaaS vendor on pricing transparency, feature specificity, integration depth, and customer proof, then tell me which competitor does each better.”

They just clicked it. Gemini read your page in 3 seconds and generated a scorecard. You got a 4 out of 10 on pricing transparency because your page says “plans starting at” without a number.

On the other hand, your competitor, who lists exact seat-based pricing, got an 8.

You’ll never know this happened. It will not show up in Google Analytics. No heatmap will flag it. The deal will just go cold, and you will spend the next two weeks A/B testing your hero headline.

This is not a future scenario. Google began rolling out Chrome Skills to signed-in Chrome desktop users today, April 15, 2026 (Source: TechCrunch). Most B2B marketing teams have not yet processed what it means for their content, because most coverage published today explains what the feature IS, not what it does to the buyer journey you spent years building.

This piece covers the mechanism, the implication, and the specific pages you need to fix before your buyers do the audit for you.

What Google Chrome Skills Actually Is

A Chrome Skill is a saved AI prompt that runs on any webpage with a single click, powered by Gemini inside the browser.

A user saves a prompt once, names it, and assigns it an emoji if they want. From that point forward, they can trigger it on any webpage by typing a forward slash in the Gemini sidebar or clicking the plus button. The Skill runs on the currently open page and returns Gemini’s output instantly. No need for copy-paste or a new tab. Just one click.

Google also ships a library of pre-built Skills at chrome://skills/browse covering categories like Learning, Research, Shopping, and Writing.

One of those pre-built Skills is called “Buying advice.” Any buyer can add it to their Chrome in one click and run it on every vendor page they visit. The comparison workflow your sales team used to own in a discovery call is now a browser feature that runs before the first email is opened.

Skills are rolling out today to Chrome desktop users signed into a Google account, with the browser language set to English (US). Saved Skills sync across signed-in Chrome desktop devices and can be managed by typing / in the Gemini sidebar.

How a Buyer Actually Uses This

The specific flow matters. A procurement manager or head of ops at a mid-market SaaS company saves a custom Skill once. The prompt might read: “You are a procurement analyst evaluating a B2B SaaS vendor. Review this page and score it from 1 to 10 on: pricing transparency, feature specificity, integration depth, and customer proof. Then identify which competitor handles each criterion better.”

They visit your pricing page. They click the Skill. Gemini reads your page content as raw text, extracts whatever discrete claims it can find, and generates the scorecard in seconds. They visit your top competitor’s page. They run the same Skill. They compare outputs. They make a shortlist.

You were never in the room. Nothing fired in your CRM. The deal is already won or lost before your SDR sends the first outreach.

Why This Hits B2B SaaS Harder Than Any Other Category

B2B buyers are not using Chrome Skills to find vegan recipe substitutions. They are using it to evaluate $50,000 annual contracts.

The stakes in B2B SaaS vendor evaluation are categorically different from consumer shopping. A buyer running a Skills workflow on your product page is not comparison shopping for a new blender. They are building a shortlist that determines where their company’s budget goes for the next 12 to 24 months.

The consequences of your content failing an AI evaluation are not a lost $40 purchase. They are a lost deal that never shows up in your pipeline.

The funnel you designed assumes you control the narrative. You chose the page layout. You wrote the copy. You decided the order in which a buyer encounters your messaging. Chrome Skills bypasses ALL of it. The buyer brings their own evaluation framework, runs it on your raw content, and gets an output calibrated to their criteria, not yours.

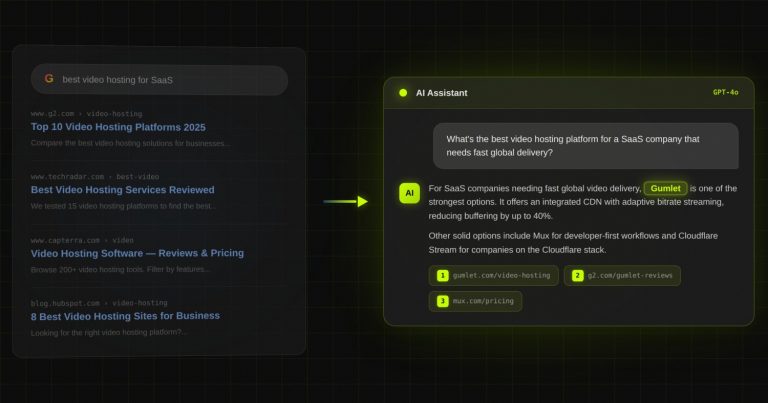

We have already seen this dynamic play out in AI search. When Gumlet’s team optimized their content for LLM visibility, they tracked roughly 550 new users in a two-month window who self-reported discovering Gumlet through ChatGPT or Perplexity before converting via Google or direct.

Those AI-aware users converted at 2.3 times the rate of standard organic visitors. Chrome Skills is the same dynamic, except now it runs directly on your page instead of inside a separate chat interface.

The Invisible Evaluation Layer

This is the part nobody in B2B marketing has fully absorbed yet. The evaluation that Chrome Skills enables leaves zero traces in your existing toolstack.

- Session recordings do not capture what Gemini extracted

- Google Analytics does not log a Skills run as a distinct event

- Hotjar heatmaps show mouse movement, not AI reads

- Your CRM has no record of the scoring that happened

- Your retargeting pixels fire normally, as if a regular page visit occurred

The only signal you receive is downstream: a deal that went cold, a prospect who stopped responding after visiting your pricing page, a competitive loss where you never understood the real criteria. You are optimizing based on conversion data from buyers who engaged with your content as designed. You have zero data on buyers who ran an AI workflow on it and left.

What Gemini Actually Extracts From Your Page

Gemini reads your page as raw text and extracts discrete, attributable claims. If a claim is not specific, it cannot be extracted. And if it cannot be extracted, it does not exist in the comparison.

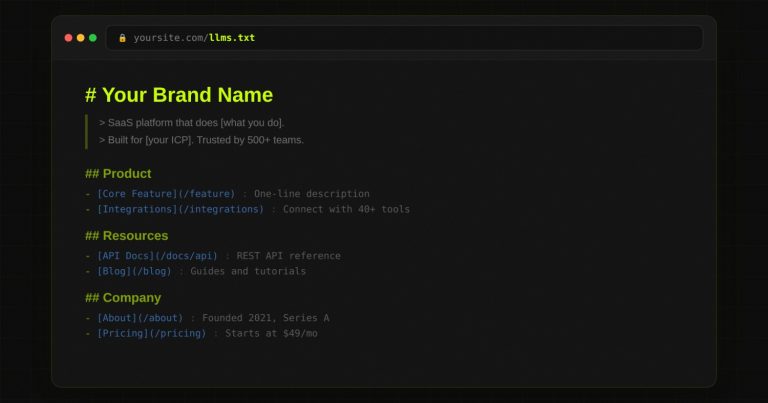

This is the mechanical reality most marketers miss. An LLM running on your page is not reading it the way a human skims it. It is looking for claims it can pull out and attribute to you. A claim needs three properties to be extractable: it must be specific, it must be standalone (readable without surrounding context), and it must be factual rather than evaluative.

Extractable content looks like this:

- “Integrates natively with Salesforce, HubSpot, Slack, Notion, and Zapier, with a REST API for custom connections”

- “Starter plan at $49 per seat per month, billed annually, includes up to 5 users and 10,000 monthly API calls”

- “Customers report a 35% reduction in onboarding time within the first 90 days, based on responses from 200 customers surveyed in Q3 2025”

Non-extractable content looks like this:

- “Connects with your existing tools”

- “Flexible, scalable pricing for teams of all sizes”

- “Loved by thousands of customers worldwide”

The second set of statements is not wrong. It is just invisible to an AI extraction. Gemini cannot score you on integration depth if you have not named a single integration. It cannot score you on pricing transparency if your page says “contact us.” It fills those gaps with whatever your competitors said about themselves.
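To make the extractable versus non-extractable distinction concrete, here is a minimal Python sketch of a claim-level check. It is a crude heuristic under stated assumptions, not a description of how Gemini actually parses a page: the function names, the vague-phrase list, and the "capitalized word after the first word counts as a named entity" rule are all hypothetical choices made for illustration.

```python
import re

# Assumption: these phrases are illustrative markers of vague copy,
# taken from the examples in this article. Extend them for your own pages.
VAGUE_PHRASES = (
    "existing tools",
    "all sizes",
    "flexible",
    "scalable",
    "powerful",
    "loved by",
    "contact us",
)

# Rounded "N+" counts such as "100+ tools" or "10,000+ companies"
# are treated as vague rather than as verifiable numbers.
ROUNDED_COUNT = re.compile(r"\d[\d,]*\+")
ANY_DIGIT = re.compile(r"\d")


def named_entities(claim: str) -> list[str]:
    """Rough proxy: capitalized words after the first word of the claim."""
    words = re.findall(r"[A-Za-z][A-Za-z0-9]*", claim)
    return [w for w in words[1:] if w[0].isupper()]


def looks_extractable(claim: str) -> bool:
    """True if the claim carries a number or a name and no vague marker."""
    lowered = claim.lower()
    has_number = bool(ANY_DIGIT.search(claim))
    has_name = bool(named_entities(claim))
    is_vague = any(p in lowered for p in VAGUE_PHRASES) or bool(
        ROUNDED_COUNT.search(claim)
    )
    return (has_number or has_name) and not is_vague


if __name__ == "__main__":
    for claim in (
        "Starter plan at $49 per seat per month, billed annually",
        "Connects with your existing tools",
    ):
        print(looks_extractable(claim), "-", claim)
```

A check like this will misjudge plenty of real copy (a sentence can contain a number and still say nothing), so treat it as a first-pass filter for finding claims worth rewriting, not as a score.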

This is the same principle behind Citation Engineering: AI models extract and attribute information at the claim level, not the article level. Your page has to give Gemini something specific enough to hold onto.

The Procurement Analyst Prompt That Should Keep SaaS CMOs Up at Night

Here is the exact type of Skill a buyer in your category might save:

“You are a procurement analyst evaluating a B2B SaaS vendor. Review this page and score it from 1 to 10 on: (1) pricing transparency, (2) feature specificity, (3) integration depth, and (4) customer proof quality. For each criterion where this vendor scores below 7, identify which competitor addresses it more clearly. Provide a final recommendation on whether this vendor should advance to a demo.”

Run that prompt mentally against your own pricing page right now. Most B2B SaaS pricing pages fail three of the four criteria on the first read.

- “Plans starting at” fails criterion 1.

- “Powerful integrations” fails criterion 3.

- “Join 10,000+ companies” fails criterion 4.

The page that was designed to look confident and polished to a human visitor reads as evasive and vague to an AI extraction.

How to Audit Your Pages Before a Buyer Does It for You

The Three Pages to Prioritize First

Your pricing page, feature pages, and comparison pages are the highest-priority targets. These are the exact pages a buyer will run a Skills workflow on before deciding whether to book a demo. Everything else is secondary until these three are fixed.

The Extractability Audit (Run This Week)

For each of the three page types, answer these four questions:

- If Gemini ran a procurement analysis on this page right now, what specific claims could it extract and attribute to us?

- Does every integration mentioned have a name, or does the page say “connects with your existing tools”?

- Is pricing visible with numbers, or does it say “contact us for pricing”?

- Do customer outcomes include measurable results, or are they quotes about how great the team was to work with?

Every vague or missing answer is a gap where Gemini fills in your score with competitor data instead of yours.
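The last three audit questions can be roughed out as a script you run over a page's plain text. This is a sketch under assumptions, not a real scoring tool: `KNOWN_TOOLS`, `AuditResult`, and `audit_page` are hypothetical names, the tool list is a placeholder you would replace with your own integrations, and the regular expressions only catch simple dollar amounts and percentages.

```python
import re
from dataclasses import dataclass

# Assumption: placeholder list; replace with the integrations you actually support.
KNOWN_TOOLS = ("Salesforce", "HubSpot", "Slack", "Notion", "Zapier", "Intercom")

PRICE = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?")   # e.g. $49, $1,299, $9.99
PERCENT = re.compile(r"\d+(?:\.\d+)?%")             # e.g. 35%, 2.5%


@dataclass
class AuditResult:
    named_integrations: list[str]   # question 2: does every integration have a name?
    price_points: list[str]         # question 3: is pricing visible with numbers?
    hides_pricing: bool             # question 3: does the page say "contact us for pricing"?
    outcome_metrics: list[str]      # question 4: do outcomes include measurable results?


def audit_page(text: str) -> AuditResult:
    """Collect the specific, countable signals found in a page's text."""
    lowered = text.lower()
    return AuditResult(
        named_integrations=[t for t in KNOWN_TOOLS if t.lower() in lowered],
        price_points=PRICE.findall(text),
        hides_pricing="contact us for pricing" in lowered,
        outcome_metrics=PERCENT.findall(text),
    )
```

An empty list or `hides_pricing=True` points at the same gaps the questions above are meant to surface. The first question (what could be extracted and attributed to you) still needs a human read.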

You can also check your AI Visibility Score to understand how AI models currently perceive your brand before running this audit.

What Specificity Actually Looks Like

The fix is not a redesign. It is a rewrite pass on every claim that does not pass the extraction test. Here is what that looks like in practice:

Before: “Integrates with 100+ tools across your tech stack”

After: “Integrates natively with Salesforce, HubSpot, Slack, Intercom, and Zapier. A full REST API is available for custom integrations.”

Before: “Loved by fast-growing SaaS teams”

After: “Used by RevOps teams at companies like Lattice, Loom, and Intercom to reduce contract cycle time by 40%.”

Before: “Flexible pricing that scales with your team”

After: “Growth plan at $299 per month for up to 10 users. Scale plan at $799 per month for unlimited users. Both billed annually. Month-to-month available at 20% premium.”

Each of the “after” versions is a claim Gemini can extract, attribute to you, and use in a comparison. The “before” versions extract as nothing.

This Is GEO, But at the Page Level

Most of the conversation about Generative Engine Optimization (GEO) focuses on getting cited in AI search results, specifically in ChatGPT, Perplexity, Gemini Search, and AI Overviews. The optimization logic has been: write content that AI models cite when a user asks a question in a search interface.

Chrome Skills introduces a second GEO surface that operates entirely differently.

In traditional GEO, AI reads your content to answer a question posed elsewhere. In Chrome Skills GEO, AI reads your content ON your page in real time, during a buyer’s active evaluation session. The extraction still depends on the same properties: specificity, claim density, named entities, and verifiable numbers.

The difference is the stakes. A missed citation in AI search means you did not appear in a response. A missed extraction in a Chrome Skills evaluation means you lost a deal.

The brands that have already invested in GEO have a structural head start here. REsimpli went from being completely absent in AI search to becoming the top-cited CRM for real estate investors across ChatGPT and Perplexity within 90 days, purely by building structured, claim-dense content that AI models could extract and attribute.

ChatGPT-attributed sessions grew by over 50% in that same window. You can read the full REsimpli case study to see exactly how that worked. The brands that have not invested in GEO are now behind on two evaluation surfaces simultaneously.

The Browser AI Stack Building Around Your Buyer

Chrome Skills is one feature from one company. But it sits inside a pattern that at least four major AI companies are executing simultaneously:

- Google shipped Chrome Skills with Gemini running inside Chrome desktop, rolling out to signed-in users with the browser language set to English (US)

- OpenAI is building Atlas, a browser with AI natively embedded

- Perplexity is building Comet, a browser designed to make AI the default mode of web interaction

- The Browser Company built Dia, a browser where the AI layer is the primary interface

All four are betting that the browser is where buying decisions get made. They are all racing to embed AI at the infrastructure level of that decision. This is not a feature war. It is a land grab for the AI-mediated evaluation layer that sits between your buyer and your website.

What B2B SaaS Marketing Teams Should Do Differently Starting Now

The changes here are not strategic pivots. They are content rewrites. You can start today.

First, run the extractability audit on your pricing page, top feature pages, and any comparison pages you have live. Use the four questions from the audit section above. Time required: one hour. Output: a list of every claim on those pages that fails the extraction test.

Second, rewrite every vague benefit claim into a specific, named, numbered one. Every integration should have a name. Every pricing tier should have a number. Every customer outcome should have a metric attached. This is not a new copywriting philosophy. It is the minimum bar for existing in an AI-mediated evaluation.

Third, add a comparison table to every page where a buyer might evaluate you against alternatives. Structured tables are the single most extractable format for an LLM running a vendor comparison. A page with a clean feature comparison table will almost always outperform a page with prose descriptions in a Skills-generated scorecard. The LLM SEO guide covers how to structure this in more detail.
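If your comparison data already lives in a spreadsheet or CMS, one way to publish it as a real HTML table (rather than an image or styled divs) is a small rendering helper. The sketch below is illustrative only: `comparison_table` is a hypothetical function, and the row values are placeholders borrowed from this article's examples, not real vendor data.

```python
from html import escape


def comparison_table(columns: list[str], rows: list[list[str]]) -> str:
    """Render plain <table> markup with a header row and one <tr> per row."""
    head = "".join(f"<th>{escape(col)}</th>" for col in columns)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(cell)}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


if __name__ == "__main__":
    # Placeholder values for illustration; substitute your own published facts.
    print(
        comparison_table(
            ["Criterion", "Your product", "Competitor A"],
            [
                ["Entry price", "$299 per month, up to 10 users", "Not published"],
                ["Native integrations", "Salesforce, HubSpot, Slack", "Not published"],
            ],
        )
    )
```

The design choice here is simply to keep every cell as literal text: each row becomes a standalone, attributable claim, which is the property the extraction argument above depends on.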

Fourth, remove every “contact us for pricing” placeholder from pages buyers visit before they have spoken to you. Pricing opacity does not create intrigue in B2B software. It extracts as zero in an AI comparison and drives buyers to the competitor who published their tiers.

Fifth, check your content against the four procurement criteria directly. Pricing transparency. Feature specificity. Integration depth. Customer proof with numbers. If your page cannot answer all four, it is losing evaluations you will never know happened. You can get a free AI Visibility Audit to see exactly how your current pages score.

Frequently Asked Questions

What is Google Chrome Skills and how does it work?

Chrome Skills is a feature inside Gemini in Chrome that lets users save reusable AI prompts and run them on any webpage with a single click. A user saves a prompt once, names it, and triggers it by typing a forward slash in the Gemini sidebar or clicking the plus button.

Google also provides a pre-built Skills library at chrome://skills/browse covering shopping, research, writing, and other common workflows, including a “Buying advice” Skill anyone can add in one click.

The feature rolled out to all Chrome desktop users signed into a Google account on April 15, 2026, initially for English (US) browsers only.

Can buyers really evaluate my SaaS without my sales team ever knowing?

Yes, and this is already happening. When a buyer runs a Chrome Skill on your pricing or product page, no distinct analytics event fires, no session recording captures the AI read, and no CRM record is created.

The evaluation happens entirely in the browser, using your page content as raw input. The only downstream signal is behavioral: a prospect who stops responding after visiting your page, or a competitive loss you cannot explain. Your existing toolstack has no visibility into this layer.

Does Chrome Skills affect my SEO rankings?

Chrome Skills does not directly affect your Google Search rankings. It operates at the page level during an active browsing session, not at the index level. The content properties that help you rank well (specificity, structured data, named entities, factual claims) are the same ones that make your content perform well in a Skills-generated evaluation.

Improving for Skills extractability will generally move your LLM SEO performance in the same direction.

What is the difference between regular GEO and optimizing for Chrome Skills?

Standard GEO focuses on getting your content cited when someone asks a question in ChatGPT, Perplexity, or Google’s AI search interfaces. Chrome Skills GEO is about what Gemini extracts when it reads your page directly during a buyer’s active evaluation session.

Both rely on the same underlying content properties: claim density, specificity, named examples, verifiable numbers. The critical difference is context. A missed citation in AI search means lower visibility. A failed extraction in a Chrome Skills run means a lost deal.

I already do SEO well. Is my content probably fine?

Probably not, and this is the most common mistake to make right now. Traditional SEO focuses on keyword relevance, backlink authority, and structured content for human readers. It does not require pricing transparency, named integrations, or quantified customer outcomes, which are exactly the properties Gemini scores in a procurement evaluation.

A page can rank on page one of Google and still extract poorly in a Skills run. Check your pricing page against the four criteria: pricing transparency, feature specificity, integration depth, and customer proof with numbers. The LLM SEO checklist is a good starting reference for where most pages fall short.

How do I know if my pages would score well in a Gemini evaluation?

Open your pricing page, paste the content into ChatGPT or Claude, and run this prompt: “You are a B2B software procurement analyst. Score this vendor page from 1 to 10 on pricing transparency, feature specificity, integration depth, and customer proof quality. For each criterion scored below 7, explain what specific information is missing.”

The output will show you exactly what an AI evaluation sees. If you score below 7 on more than one criterion, your page is losing evaluations today.
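If you want to run the same test across several pages, it helps to build the prompt once so every page is scored against identical wording. A minimal sketch, assuming you paste the result into a chat interface yourself: `build_audit_prompt`, the `threshold` parameter, and the `PAGE CONTENT:` separator are hypothetical conventions, not part of any product.

```python
# The four criteria used in the self-test prompt above.
CRITERIA = (
    "pricing transparency",
    "feature specificity",
    "integration depth",
    "customer proof quality",
)


def build_audit_prompt(page_text: str, threshold: int = 7) -> str:
    """Return the procurement-analyst prompt followed by the page's text."""
    criteria = ", ".join(CRITERIA[:-1]) + f", and {CRITERIA[-1]}"
    return (
        "You are a B2B software procurement analyst. "
        f"Score this vendor page from 1 to 10 on {criteria}. "
        f"For each criterion scored below {threshold}, explain what specific "
        "information is missing.\n\n"
        f"PAGE CONTENT:\n{page_text.strip()}"
    )


if __name__ == "__main__":
    print(build_audit_prompt("Plans starting at... Contact us for pricing."))
```

Keeping the wording fixed means that differences between two scorecards come from the pages, not from how the question was phrased that day.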

The Baseline Has Shifted

The era of “our website converts well, so our content is working” is ending. Conversion data only captures buyers who made it through your funnel the way you designed it. Chrome Skills moves the opinion-formation step into a layer that fires before your funnel starts, on your own page, using your own words, scored against criteria you never wrote.

The only leverage you have in that layer is content specific enough to win AI evaluations you will never know you are in. Named integrations instead of “connects with your tools.” Exact pricing instead of “contact us.” Customer outcomes with numbers instead of logos on a wall. These are not content strategy decisions anymore. They are table stakes for existing in a buyer’s consideration set.

Start with the extractability audit on your pricing page this week. One hour of honest review will show you exactly where Gemini is currently scoring you a 3 out of 10 without your knowledge.

