
LLMs.txt: The Complete Guide for SEO and AI Search (2026)

TL;DR

Six things to know before you read the full guide:

- LLMs.txt is a Markdown navigation file, not a blocking tool. It helps AI tools find your best content. It cannot restrict any crawler or prevent any AI system from reading your site.

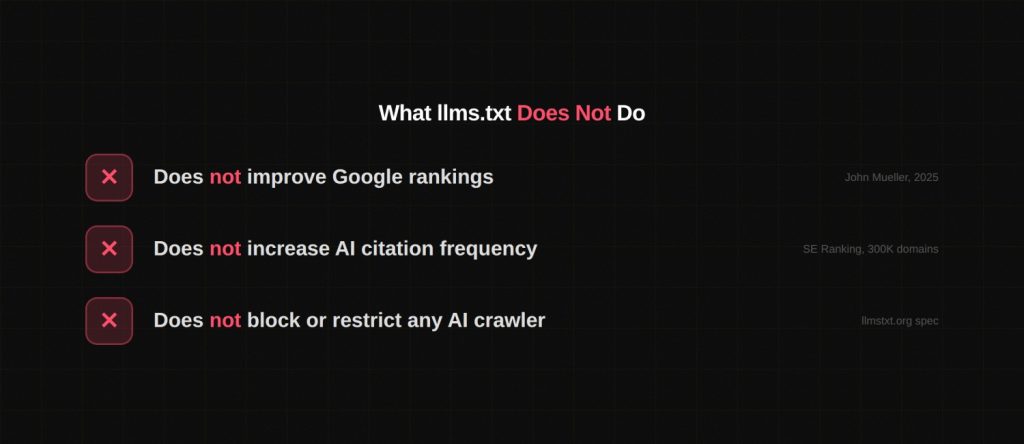

- It does not improve Google rankings. Google’s John Mueller confirmed in 2025 that no Google Search system reads or acts on llms.txt.

- No major AI provider has confirmed they use it. OpenAI, Google, Anthropic, and Meta have not publicly committed to reading or acting on the file in their production systems as of Q1 2026.

- The strongest real-world use case is developer tooling. AI coding assistants like Cursor, GitHub Copilot, and Claude retrieve your docs in real time. LLMs.txt helps them fetch the right pages with less token waste.

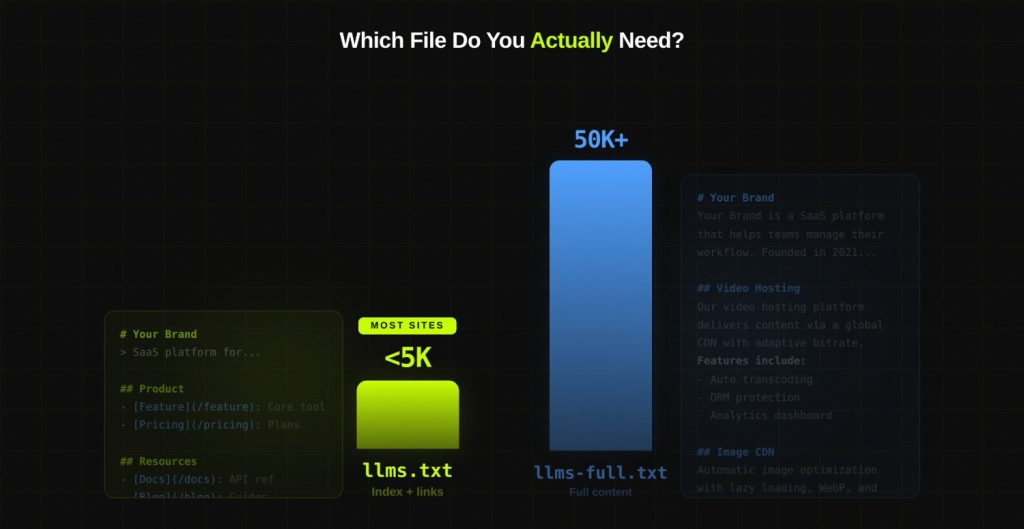

- LLMs-full.txt is the companion format. It contains your full site content in one Markdown document for deep AI ingestion. Most sites only need llms.txt. Documentation-heavy SaaS products benefit from maintaining both.

- Implementation takes under 30 minutes. Yoast SEO and Rank Math both generate the file automatically. The risk of skipping it today is low. The cost of ignoring it as AI agent usage scales may not be.

The Problem llms.txt Was Built to Solve

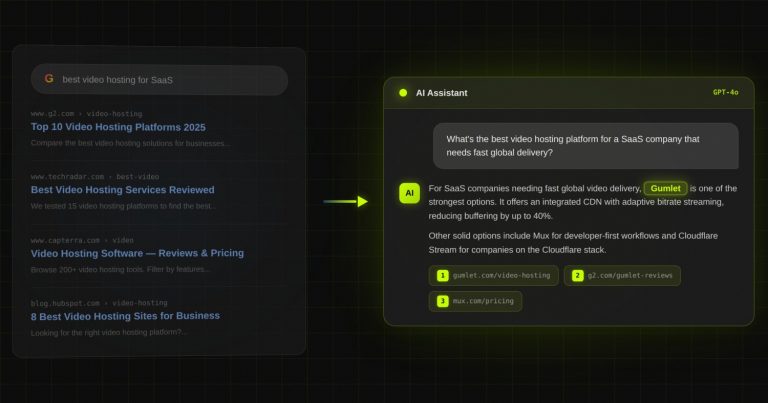

Search behaviour is fragmenting. Users who once typed queries into Google are now asking ChatGPT, querying Perplexity, or tasking Claude with research on their behalf. The way content gets discovered, read, and cited has shifted fundamentally.

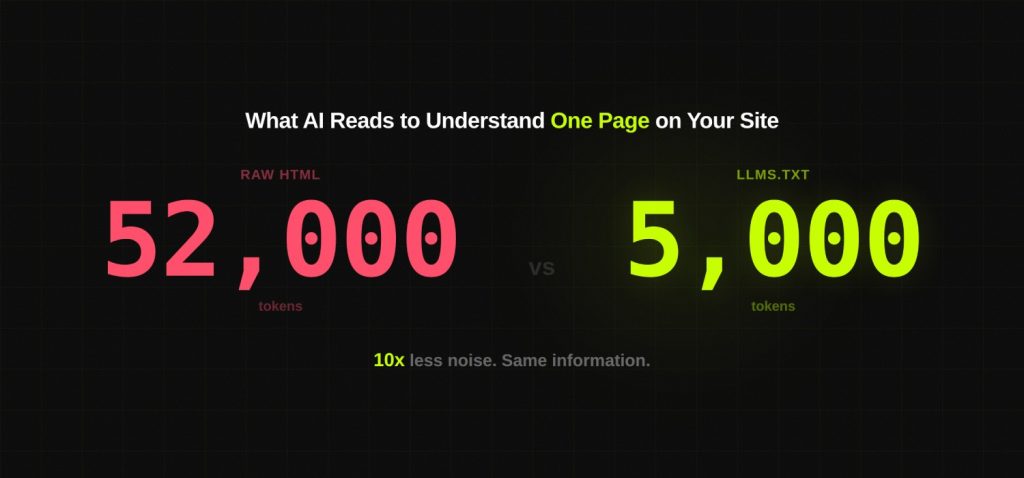

When an AI tool retrieves a webpage today, it does not read it the way a human does. It sends a request, receives raw HTML, and then has to extract meaning from a document filled with navigation menus, cookie consent banners, JavaScript bundles, advertising scripts, and footer links. For a system working within a fixed context window, all that structural noise competes directly with the content that actually matters.

A research team building a documentation tool put it simply: their AI agent was fetching so much HTML noise from a single page that the actual API documentation was being crowded out of the context window before the model could even use it. That is the problem llms.txt was proposed to solve.

| Figure | What it measures |

|---|---|

| 10x | Token reduction, Markdown vs HTML parsing |

| 400M | Weekly ChatGPT active users (2026) |

| 10% | Adoption rate across the 300k domains studied |

| 780M | Monthly Perplexity queries |

What Is LLMs.txt?

LLMs.txt is a plain Markdown file placed at the root directory of a website. It gives AI tools a structured, low-noise map of the site’s most important content. Instead of forcing a model to parse hundreds of HTML pages to understand what a business does, the file provides a curated index with context: who the brand is, what the site covers, and which pages carry the most signal.

Jeremy Howard, co-founder of Answer.AI and fast.ai, proposed the convention on September 3, 2024. The specification lives at llmstxt.org. The idea draws on something web developers already understand: that a well-structured reference file placed at a site root can meaningfully change how automated systems interact with that site. XML sitemaps did this for search engine crawlers. LLMs.txt does it for language model systems.

The Format at a Glance

The file uses standard Markdown. Four elements make up a typical implementation, of which the specification strictly requires only the H1:

- H1 heading with the brand or site name. This must be the first element in the file.

- Blockquote with one to three sentences describing what the site covers and who it serves.

- Section headings grouping related pages by topic or purpose.

- Annotated links with a short description of each page, written for a reader who knows nothing about the site.

Here is a correctly structured example for DerivateX:

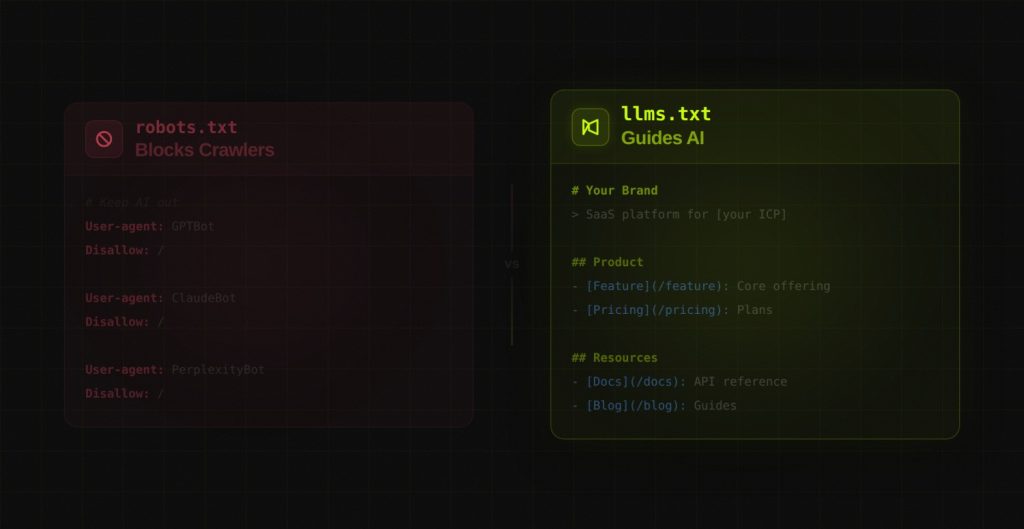
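For readers who cannot see the image, the four elements can be sketched in text form. The snippet below is a minimal illustration, not the DerivateX file itself: the brand name "Example Co", the URLs, and the descriptions are all placeholders, and `render_llms_txt` is a hypothetical helper written for this guide.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    description: str


def render_llms_txt(name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render the four elements: H1, blockquote, section headings, annotated links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            lines.append(f"- [{link.title}]({link.url}): {link.description}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content only: none of these pages exist.
EXAMPLE = render_llms_txt(
    name="Example Co",
    summary="Example Co is a placeholder B2B SaaS brand used for illustration.",
    sections={
        "Services": [
            Link("Product overview", "https://example.com/product",
                 "What the product does and who it is for."),
        ],
        "Guides": [
            Link("Getting started", "https://example.com/docs/start",
                 "Setup steps for new users."),
        ],
    },
)
```

Printing `EXAMPLE` gives a file that opens with `# Example Co`, follows with the blockquote summary, and then lists each section as an H2 heading with one annotated link per line.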

The One Misconception That Derailed the Conversation

Since the file was proposed, a persistent claim has circulated that llms.txt is robots.txt for AI. That comparison is misleading enough to be genuinely harmful.

Robots.txt works through directives. It tells crawlers what they can and cannot access. It has teeth because major search engines honour it as part of their crawl protocol, even though compliance is voluntary rather than technically enforced.

LLMs.txt contains no directives. It cannot grant or deny access. It cannot block any crawler, prevent any training, or restrict any AI system from reading your content. It is a navigation aid, not a gatekeeper. Treating it as a content protection tool will leave you with a false sense of security and unaddressed exposure.

| To control AI crawler access to your content, use robots.txt with specific user-agents. Common AI crawler agents: GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot, Google-Extended. Note: robots.txt compliance is voluntary for training crawlers. Legal mechanisms such as DMCA notices may be required for enforceable restrictions. |
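To see how a per-agent rule behaves before deploying it, Python's standard-library `urllib.robotparser` can evaluate a robots.txt file locally. This is a rough sketch under stated assumptions: the rules in `ROBOTS_TXT` are a hypothetical policy (block GPTBot everywhere, keep `/private/` off limits to everyone else), and the parser only reports what a compliant crawler would do.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy, for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if a compliant crawler with this user-agent may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

With this policy, `allowed("GPTBot", "https://example.com/pricing")` is false while the same call for `ClaudeBot` is true, because only the wildcard group applies to it.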

LLMs.txt vs LLMs-Full.txt: What You Actually Need

Two distinct file formats exist within the llms.txt ecosystem. Most coverage treats them interchangeably. They serve different purposes and suit different types of sites.

| Dimension | llms.txt | llms-full.txt |

|---|---|---|

| What it contains | Structured index linking to key pages with short descriptions | Full site content concatenated into a single Markdown document |

| Token footprint | Under 5,000 tokens for most sites | 5,000 to 50,000+ tokens depending on site size |

| Best for | Marketing sites, blogs, SaaS homepages | Developer docs, API references, knowledge bases |

| AI use case | Quick site orientation and navigation | Deep context ingestion by AI agents and coding tools |

| Risk | Minimal if updated regularly | Token overload if too large. Keep under 50k tokens |

| Adoption | ~10% of domains | Less common. Accessed more frequently per Profound research |

A finding from Profound’s GEO research adds nuance here: crawlers from Microsoft, OpenAI, and others are fetching llms-full.txt more frequently than llms.txt itself. The working theory is that a file containing the full content removes one retrieval step for the model, making it more useful for agents operating in real time.

For most sites in the DerivateX client portfolio, the right answer is llms.txt only. If you have more than 50 pages of documentation, add llms-full.txt. If you run a local service business or a small e-commerce store, neither file is a priority.
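A quick way to check whether a draft llms-full.txt stays under the 50k-token ceiling from the table is a character-count estimate. The sketch below assumes roughly four characters per token, which is a common rule of thumb for English text and not the output of any real tokenizer. Actual counts vary by model.

```python
CHARS_PER_TOKEN = 4      # assumption: rough average for English prose
TOKEN_CEILING = 50_000   # the upper bound suggested in the comparison table


def estimate_tokens(text: str) -> int:
    """Estimate token count from character count, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def within_budget(text: str, ceiling: int = TOKEN_CEILING) -> bool:
    """Return True if the estimated token count does not exceed the ceiling."""
    return estimate_tokens(text) <= ceiling
```

If the estimate lands anywhere near the ceiling, it is worth measuring with the tokenizer of the model you care about before trusting the result.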

What the Research Actually Shows

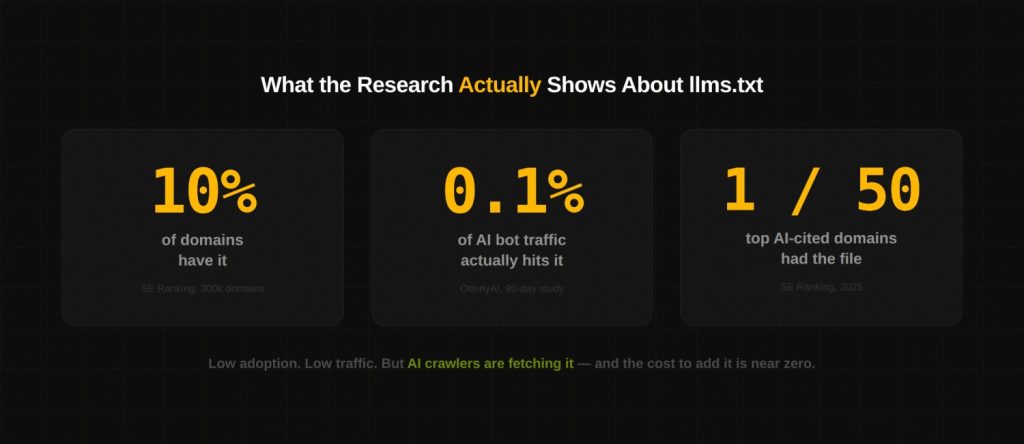

The llms.txt conversation has been shaped more by speculation than by evidence. Two independent studies produced data worth taking seriously.

SE Ranking: 300,000 Domain Study

SE Ranking analysed a dataset of approximately 300,000 domains to test whether having an llms.txt file correlates with higher AI citation frequency. The findings were clear: no statistically significant correlation exists. When the researchers removed llms.txt as a variable from their predictive model for AI citations, model accuracy improved. The file was introducing noise, not signal.

Adoption figures from the same study: 10.13% of domains in the dataset had an llms.txt file. Among the 50 most AI-cited domains, only one had an llms.txt file.

OtterlyAI: 90-Day Crawler Experiment

OtterlyAI implemented llms.txt on a test domain and monitored AI bot traffic across a 90-day window. Out of 62,100 total AI bot visits recorded during the period, 84 requests targeted the llms.txt file directly. That is about 0.14% of all AI crawler traffic, or roughly one request in 740.

The team’s conclusion: llms.txt has niche value for specific AI integrations but its current impact on AI crawler behaviour is marginal. OtterlyAI subsequently removed llms.txt from their GEO audit checklist on the grounds that it was drawing attention away from factors that actually move citation frequency.

What Profound Found

On the other side of the ledger, Profound, which specialises in GEO tracking, reported that crawlers from Microsoft and OpenAI are actively fetching both llms.txt and llms-full.txt files. Google also included an llms.txt file in its Agent2Agent (A2A) protocol, suggesting internal teams view the convention as relevant for agent-to-agent communication even if the Search team does not use it for rankings.

The Honest Synthesis

| LLMs.txt does not improve search rankings. Google’s John Mueller confirmed no Google Search system uses it. LLMs.txt does not measurably increase AI citation frequency based on current large-scale research. LLMs.txt is being fetched by AI crawlers from OpenAI and Microsoft, particularly llms-full.txt. The file’s strongest validated use case is improving accuracy and context for AI agents and developer tools. Implementation cost is low. The downside risk is close to zero. The forward-looking case is credible. |

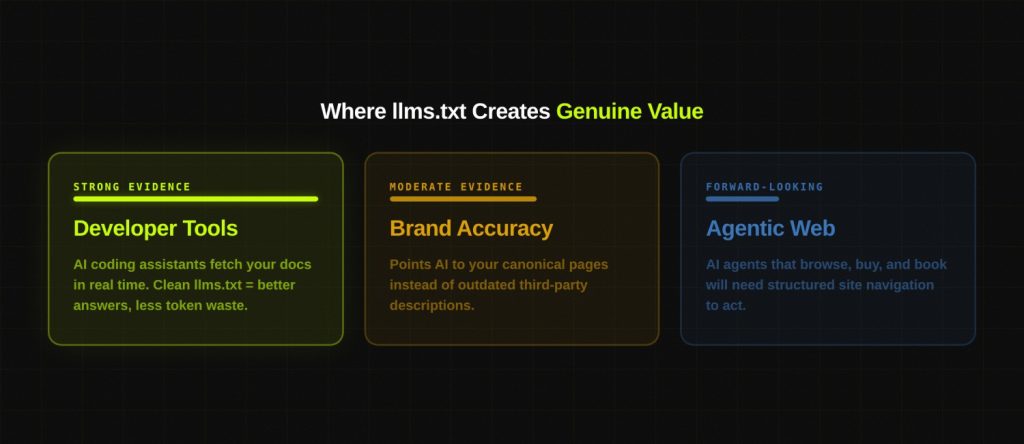

Where LLMs.txt Creates Genuine Value

Developer Tools and AI Coding Assistants

This is the use case with the strongest documented evidence. Tools such as Cursor, GitHub Copilot, and Claude retrieve external documentation in real time when developers ask product-specific questions. A clean llms.txt reduces the token cost of that retrieval and increases the accuracy of what comes back.

Vercel is a frequently cited example. Their llms.txt includes contextual descriptions that help agents decide which API endpoints to fetch. Developers using AI coding tools to work with the Vercel platform get more accurate answers because the model orients itself correctly before fetching individual documentation pages.

Brand Accuracy in AI-Generated Responses

When a model attempts to summarise your brand or product without a clear navigation reference, it relies on whatever it found while crawling. That can mean outdated blog posts, third-party descriptions, or competitor comparisons end up shaping the model’s understanding. An llms.txt that accurately describes what you do and points the model to your canonical pages reduces that risk.

This matters most for brands in categories where AI responses influence purchase decisions. If someone asks ChatGPT to compare project management tools and your product description in the model’s context comes from a two-year-old review rather than your current product page, your positioning suffers.

Agentic Web Readiness

The agentic web is a term for the emerging pattern of users dispatching AI agents to research, compare, and transact on their behalf rather than doing it themselves. Cloudflare, Mintlify, and several infrastructure providers are already building around this model. An llms.txt file is the clearest signal a site can send that it is ready to be navigated by an agent rather than only by a human.

LLMs.txt vs Robots.txt: The Complete Comparison

| Dimension | robots.txt | llms.txt |

|---|---|---|

| Function | Controls crawler access to pages | Guides AI tools to priority content |

| Syntax | User-agent and Allow/Disallow directives | Markdown headings and annotated links |

| Can block crawlers | Yes | No |

| Industry standard | IETF standard (RFC 9309) | Community proposal, no formal body |

| Platform compliance | Honoured voluntarily by Google, Bing, and other major crawlers | Not confirmed as supported by any major platform |

| SEO ranking impact | Indirect (controls what gets crawled, not what gets indexed) | None confirmed by research |

| Google’s position | Core crawl infrastructure | Not used by Google Search (Mueller, 2025) |

| Setup time | Already exists on most sites | Under 30 minutes for most sites |

The practical guidance: use both files, but understand that they operate in entirely different parts of the stack. Robots.txt manages access. LLMs.txt manages comprehension. Neither replaces the other.

How to Build and Deploy LLMs.txt

Step 1: Map Your Content Before You Write a Line

The quality of an llms.txt file reflects the quality of thinking that went into it. A list of every page on your site is not useful. A curated selection of the pages that best represent what you do, supported by clear descriptions, is.

Ask this question for every page you consider including: if an AI model read only this page and nothing else, would it come away with an accurate and useful understanding of our brand or product? If the answer is no, leave it out.

Pages that typically belong in llms.txt:

- Core product or service pages with substantive descriptions

- Pillar content and comprehensive guides that define your category expertise

- Case studies that demonstrate specific, quantified outcomes

- Documentation, API references, and technical guides

- Pricing or comparison pages where you want to control the narrative

Pages that typically do not belong:

- Tag archives, category pages, and pagination

- Author profiles and date-based archives

- Login, checkout, and account management pages

- Thin posts and stub articles under 500 words

- Duplicate or near-duplicate content
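The exclusion rules above can be applied mechanically as a first pass before the editorial review. The following is a minimal sketch, assuming WordPress-style URL patterns and a word count you already have for each page. `Page`, `EXCLUDED_PATH_PARTS`, and `belongs_in_llms_txt` are hypothetical names, and duplicate detection is left out.

```python
from dataclasses import dataclass

# Assumption: URL fragments typical of archive, pagination, and account pages.
EXCLUDED_PATH_PARTS = (
    "/tag/", "/category/", "/page/", "/author/",
    "/login", "/checkout", "/account",
)
MIN_WORDS = 500  # the thin-content threshold from the list above


@dataclass
class Page:
    url: str
    word_count: int


def belongs_in_llms_txt(page: Page) -> bool:
    """First-pass filter: drop thin pages and archive or account URLs."""
    if page.word_count < MIN_WORDS:
        return False
    return not any(part in page.url for part in EXCLUDED_PATH_PARTS)
```

A filter like this only narrows the candidate list. The question in the previous paragraph, whether a page alone gives an accurate picture of the brand, still needs a human answer.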

Step 2: Write the File

The specification requires Markdown, and the H1 heading must come first. The blockquote should read like the one-to-three-sentence pitch a product manager would give for the company in a board meeting.

Step 3: Place the File at Root

The file must be accessible at yourdomain.com/llms.txt with no subdirectory. After uploading, verify by visiting the URL directly in a browser and confirming the Markdown content renders as plain text with an HTTP 200 response code.
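The browser check can also be scripted. Below is a rough sketch using only the Python standard library: `looks_like_llms_txt` inspects a response that has already been fetched, and `check_live` performs the request. Both function names are hypothetical, and the content-type rule (flagging anything served as HTML) is an assumption about what "renders as plain text" should mean.

```python
from urllib.request import urlopen


def looks_like_llms_txt(status: int, content_type: str, body: str) -> list[str]:
    """Return a list of problems; an empty list means the response looks right."""
    problems = []
    if status != 200:
        problems.append(f"expected HTTP 200, got {status}")
    if "html" in content_type.lower():
        problems.append(f"served as HTML ({content_type}), not plain text or Markdown")
    first_line = next((line for line in body.splitlines() if line.strip()), "")
    if not first_line.startswith("# "):
        problems.append("first non-empty line is not an H1 heading")
    return problems


def check_live(domain: str) -> list[str]:
    """Fetch https://<domain>/llms.txt and validate it (makes a network call)."""
    # urlopen raises HTTPError for 4xx and 5xx responses.
    with urlopen(f"https://{domain}/llms.txt", timeout=10) as response:
        body = response.read().decode("utf-8", errors="replace")
        content_type = response.headers.get("Content-Type", "")
        return looks_like_llms_txt(response.status, content_type, body)
```

Calling `check_live("yourdomain.com")` after each deploy returns an empty list when the file is reachable, served as text, and starts with an H1.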

Step 4: WordPress Implementation

Yoast SEO and Rank Math both added native llms.txt generation in 2025. The setting appears in each plugin’s dashboard. Once toggled on, the file is generated and updated automatically, with no FTP access or manual editing required. The plugins apply smart content selection, pulling your most recently updated and highest-priority pages, and filter out noindex URLs automatically.

For non-WordPress platforms, several generator tools exist including WordLift’s free URL-based generator. Static site generators such as Eleventy, Hugo, and Next.js can include llms.txt as a build artifact. Mintlify generates both llms.txt and llms-full.txt automatically for documentation sites and now also provisions MCP servers, which positions your docs as an agent-accessible knowledge layer.

Step 5: Add a Reference in robots.txt

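Some implementers list the llms.txt URL in robots.txt alongside the XML sitemap so that crawlers reading robots.txt also encounter the file path. Treat this as a discovery hint rather than a standard: the Sitemap directive is defined for XML sitemaps, llms.txt is not one, and no crawler has documented support for reading it this way. The pattern looks like this: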

    User-agent: *
    Allow: /

    # AI crawlers
    User-agent: GPTBot
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Google-Extended
    Allow: /

    Sitemap: https://yourdomain.com/sitemap.xml
    Sitemap: https://yourdomain.com/llms.txt

Step 6: Treat Security as Non-Negotiable

LLMs.txt is a machine-readable file that describes your entire site’s content hierarchy. If an attacker gains write access to your web root, a tampered llms.txt could inject misleading instructions or redirect AI agents to malicious content. Automate file generation through your CI/CD pipeline, require code review for any manual changes, and monitor the file for unexpected modifications.
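Monitoring for unexpected modifications can be as simple as comparing the deployed file against a known-good fingerprint. The sketch below assumes you store the SHA-256 hash of the last reviewed version somewhere your pipeline can read it. `fingerprint` and `has_changed` are hypothetical helper names.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 hex digest of the file content."""
    return hashlib.sha256(content).hexdigest()


def has_changed(content: bytes, expected_fingerprint: str) -> bool:
    """Return True if the content no longer matches the reviewed version."""
    return fingerprint(content) != expected_fingerprint
```

Run on a schedule against the live file, a check like this flags any edit that did not come through the reviewed pipeline, including a single injected line.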

Step 7: Maintain a Review Cadence

A stale llms.txt file that points to deleted pages or outdated content works against you. The file should reflect what your site actually contains. Build a quarterly review into your content calendar, and trigger an immediate update whenever you launch major new service pages, restructure site navigation, or retire large sections of content.
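Part of the quarterly review can be automated by extracting every link from the file and checking that it still resolves. This is a minimal sketch: the regular expression assumes links use the standard Markdown `[title](url)` form, and `get_status` is a function you supply (for example, one that sends a HEAD request and returns the status code). Anything other than 200 is reported, so redirects count as stale here.

```python
import re
from typing import Callable

# Assumption: links are written as [title](http(s)://...) with no spaces in the URL.
LINK_PATTERN = re.compile(r"\[([^\]]+)\]\((https?://[^)\s]+)\)")


def extract_links(llms_txt: str) -> list[tuple[str, str]]:
    """Return (title, url) pairs for every Markdown link in the file."""
    return LINK_PATTERN.findall(llms_txt)


def find_stale_links(llms_txt: str, get_status: Callable[[str], int]) -> list[str]:
    """Return URLs whose status, as reported by get_status, is not 200."""
    return [url for _, url in extract_links(llms_txt) if get_status(url) != 200]
```

Passing the status lookup in as an argument keeps the check easy to run against a staging site or a recorded crawl instead of live production.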

LLMs.txt by Site Type: Who Should Build It and Who Should Skip It

| Site Type | Verdict | Reasoning |

|---|---|---|

| B2B SaaS with documentation | Build it now | High value for AI coding tools, developer onboarding, and agent workflows |

| Content and blog-heavy sites | Build it now | Low effort, improves AI brand comprehension, signals AI-readiness |

| SaaS with no public documentation | Build it | Useful for service pages and case studies even without a docs ecosystem |

| E-commerce store | Low priority | Schema markup, product feeds, and structured data deliver more value first |

| Local service business | Skip for now | Google Business Profile, local citations, and reviews are higher-ROI investments |

| News and media publishers | Low priority | Content velocity means the file becomes stale faster than it can be maintained |

| Developer tool or API product | Build both files | llms.txt for navigation, llms-full.txt for full documentation ingestion |

The Risks and Limitations Worth Knowing

No Formal Standard Exists

LLMs.txt is a community convention with no backing from W3C, IETF, or any recognised standards body. The specification has no enforcement mechanism. AI providers adopt it on their own terms, which is why compliance is inconsistent and adoption data is fragmented.

Major Providers Have Not Committed

As of Q1 2026, no major AI company, including OpenAI, Google, Anthropic, Meta, or Mistral, has publicly committed to reading or acting on llms.txt in their production systems. GPTBot fetches the file occasionally. That is not the same as confirmation that the file influences how ChatGPT sources, ranks, or cites content.

Duplicate Content Risk

A popular but misguided implementation approach involves creating individual Markdown copies of every page on the site. If those Markdown files are indexable, they introduce duplicate content at scale. Duplicate content dilutes crawl budget and can suppress rankings for the original pages. Since traditional SEO authority remains the primary signal that AI systems use to assess source credibility, harming your SEO performance indirectly harms your AI visibility.

No Retroactive Effect on Training Data

LLMs.txt operates at the real-time retrieval layer, not the training layer. If an AI company crawled and trained on your content before you implemented the file, that data is already in the model. The file has no mechanism for removing content from existing training datasets. Use the official opt-out portals provided by individual AI companies for that purpose.

Security Exposure

As noted in the implementation section, a publicly writable llms.txt file is a potential attack surface. Prompt injection via a tampered llms.txt is a documented attack vector in AI security research. Treat the file with the same access controls you apply to your robots.txt and sitemap.

LLMs.txt Is Infrastructure, Not Strategy

The SEO and GEO community spent a disproportionate amount of time in 2025 debating whether llms.txt was the key to AI visibility. It is not. Research has settled that question fairly clearly. What the file is, if built and maintained correctly, is a clean piece of infrastructure that makes your site easier for AI systems to navigate.

The brands that win in AI search are not winning because of a text file at their domain root. They are winning because of the things that made brands win in traditional search: genuine authority on a topic, consistent mentions across high-quality external sources, structured content that answers questions directly, and strong entity signals that allow AI models to form a clear and reliable understanding of what the brand does.

At DerivateX, we track AI citation data across client sites. The pattern is consistent. The sites that appear most frequently in ChatGPT, Perplexity, and Claude responses are the sites with strong traditional SEO foundations, meaningful third-party coverage, and content written to answer the specific questions their target audience asks. LLMs.txt supports that work. It does not substitute for it.

The five levers that actually drive AI citation frequency:

1. Entity authority: consistent, structured brand mentions across sources AI models trust

2. Third-party coverage: citations on domains that AI systems assess as high-credibility

3. Answer-first content: direct responses to questions before elaborating on context

4. Technical accessibility: clean crawlability, no JavaScript walls, server-side rendering

5. LLMs.txt: a 30-minute investment that organises everything above for AI consumption

Frequently Asked Questions

1. What does a bad llms.txt look like in practice?

Four patterns produce poor outcomes consistently.

First, treating it as a sitemap and listing every URL on the site with no descriptions.

Second, writing vague link labels like “click here” or “read more” that give an AI tool no context about what it will find.

Third, letting the file go stale so it points to pages that have been moved, merged, or deleted.

Fourth, building llms-full.txt without a token budget in mind and producing a file so large it overwhelms the model context windows it is meant to help.
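The first two patterns are easy to catch automatically. The sketch below is a hypothetical linter, not part of any official tooling: it flags list items whose link label is one of the vague phrases named above and items that have no description after the link. It does not check for stale URLs or file size.

```python
import re

VAGUE_LABELS = {"click here", "read more"}
# Assumption: each entry is a list item of the form "- [label](url): description".
LINK_LINE = re.compile(r"^-\s*\[([^\]]+)\]\(([^)\s]+)\)(?::\s*(.*))?$")


def lint_llms_txt(text: str) -> list[str]:
    """Return warnings for vague link labels and links without descriptions."""
    warnings = []
    for number, line in enumerate(text.splitlines(), start=1):
        match = LINK_LINE.match(line.strip())
        if not match:
            continue
        label, url, description = match.groups()
        if label.strip().lower() in VAGUE_LABELS:
            warnings.append(f"line {number}: vague link label {label!r}")
        if not (description or "").strip():
            warnings.append(f"line {number}: no description for {url}")
    return warnings
```

A file that is just a list of bare URLs produces one "no description" warning per entry, which is usually enough to show it is being used as a sitemap.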

2. How does llms.txt relate to MCP servers?

Model Context Protocol (MCP) is a standard for exposing structured data sources directly to AI agents. Platforms like Mintlify now provision an MCP server alongside llms.txt and llms-full.txt, turning your documentation into a queryable knowledge layer rather than a static file an agent reads once.

Think of llms.txt as the entry point and an MCP server as the deeper integration. Most sites are not at MCP stage yet. LLMs.txt is the right starting point for almost everyone.

3. Can a competitor read my llms.txt and use it against me?

Indirectly, yes. Your llms.txt is a public file that tells anyone who reads it exactly which pages you consider your highest-value content.

A competitor can use it to identify your pillar pages, pricing strategy, and case study positioning. This is not a reason to avoid building the file, but it is a reason to be deliberate.

Do not link to internal strategy pages, unpublished drafts at obscure URLs, or any content that reveals positioning you have not yet made public.

4. Does llms.txt help with Perplexity citations specifically?

Perplexity operates as a real-time retrieval system. When it answers a query, it fetches live web pages and cites them directly. A well-structured llms.txt may help PerplexityBot navigate to your most relevant pages faster during a retrieval pass.

But whether your content gets cited still depends on whether it ranks well enough to enter the retrieval pool in the first place. LLMs.txt improves navigation quality. It does not substitute for the content authority that determines whether your pages get pulled into a Perplexity response at all.

5. How do AI agents actually use llms.txt during a live workflow?

When a developer asks an AI coding assistant a product-specific question, the agent first checks whether an llms.txt file exists at the relevant domain. If it does, the agent reads it to identify which pages are most relevant before fetching them individually.

Without the file, the agent either guesses from URL structure, attempts a broad crawl, or falls back on training data that may be months out of date. A well-written llms.txt reduces retrieval errors and improves the accuracy of answers about your product without requiring the user to do anything differently.
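The selection step can be pictured with a toy example. The code below is an illustrative sketch only: no AI vendor has published how its agents read llms.txt, so the keyword-overlap scoring here is an assumption made for demonstration, and `parse_sections` and `pick_pages` are hypothetical names.

```python
import re

LINK = re.compile(r"\[([^\]]+)\]\((\S+?)\)(?::\s*(.*))?")


def parse_sections(llms_txt: str) -> dict[str, list[tuple[str, str, str]]]:
    """Group (title, url, description) entries under their H2 section heading."""
    sections: dict[str, list[tuple[str, str, str]]] = {}
    current = None
    for line in llms_txt.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK.search(line)
            if match:
                title, url, description = match.groups()
                sections[current].append((title, url, description or ""))
    return sections


def pick_pages(llms_txt: str, question: str, limit: int = 3) -> list[str]:
    """Rank links by how many question words appear in their title or description."""
    words = set(re.findall(r"[a-z0-9]+", question.lower()))
    scored = []
    for links in parse_sections(llms_txt).values():
        for title, url, description in links:
            entry_words = set(re.findall(r"[a-z0-9]+", f"{title} {description}".lower()))
            score = len(words & entry_words)
            if score:
                scored.append((score, url))
    scored.sort(key=lambda item: -item[0])  # stable sort keeps file order on ties
    return [url for _, url in scored[:limit]]
```

Even in this simplified form, the descriptions do most of the work: a link with no annotation can only match on its title, which is why the format asks for a short description on every entry.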

6. Is there a way to verify that AI tools are actually reading our llms.txt?

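Yes. Check your server access logs for requests to /llms.txt and /llms-full.txt and filter by known AI crawler user-agents, including GPTBot, ClaudeBot, and PerplexityBot. Google-Extended will not appear in logs: it is a robots.txt control token, not a separate user-agent string, and Google crawls with its existing user agents.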

You can also embed a unique honeypot link inside the file that only an automated reader would follow, then monitor for any traffic to that URL. Cloudflare Analytics breaks down bot traffic by user-agent and makes this straightforward to set up without touching raw server logs.
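If you do have raw logs, the filtering takes only a few lines. The sketch below assumes the "combined" access log format that Nginx and Apache commonly use, so adjust the pattern if your server logs differently. Google-Extended is left out of the agent list because it is a robots.txt token rather than a user-agent string that shows up in requests.

```python
import re
from collections import Counter

AI_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot")
TARGET_PATHS = ("/llms.txt", "/llms-full.txt")
# Assumption: combined log format, e.g.
# IP - - [time] "GET /path HTTP/1.1" 200 1234 "referer" "user-agent"
LOG_LINE = re.compile(r'"(?:GET|HEAD) (\S+) [^"]*" \d{3} \S+ "[^"]*" "([^"]*)"')


def count_ai_fetches(log_lines) -> Counter:
    """Count requests to llms.txt files, keyed by (agent, path)."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        path, user_agent = match.groups()
        path = path.split("?", 1)[0]  # ignore query strings
        if path not in TARGET_PATHS:
            continue
        for agent in AI_AGENTS:
            if agent.lower() in user_agent.lower():
                counts[(agent, path)] += 1
    return counts
```

Passing an open log file to `count_ai_fetches` returns a counter you can compare month over month. User-agent strings can be spoofed, so treat the counts as indicative rather than exact.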
